The U.S. government has long seen both challenges and opportunities in using AI to further its mission. Federal agencies have attempted to use facial recognition technology to identify suspects and taxpayers, raising serious concerns about bias and privacy. Some agencies have tried to use AI to identify veterans at high risk for suicide, where an incorrect prediction could harm veterans' health and well-being. Meanwhile, federal agencies are already using AI in promising ways. From simplifying weather forecasting to predicting failures in aviation navigation equipment to automating paperwork processing, AI, used well, holds the promise of improving many of the federal services Americans rely on every day.

That's why we're thrilled to see the White House enact strong policy today that empowers federal agencies to responsibly harness the power of AI for the public good. This policy carefully identifies riskier uses of AI and sets strong guardrails to ensure those applications are responsible. At the same time, this policy creates leadership and incentives for agencies to harness AI's full potential.

This policy is based on the simple observation that not all applications of AI are equally risky or equally beneficial. For example, using AI to digitize paper documents is much less risky than using AI to make asylum decisions. The former does not require scrutiny beyond existing rules, but the latter poses risks to human rights and must be held to a much higher standard.

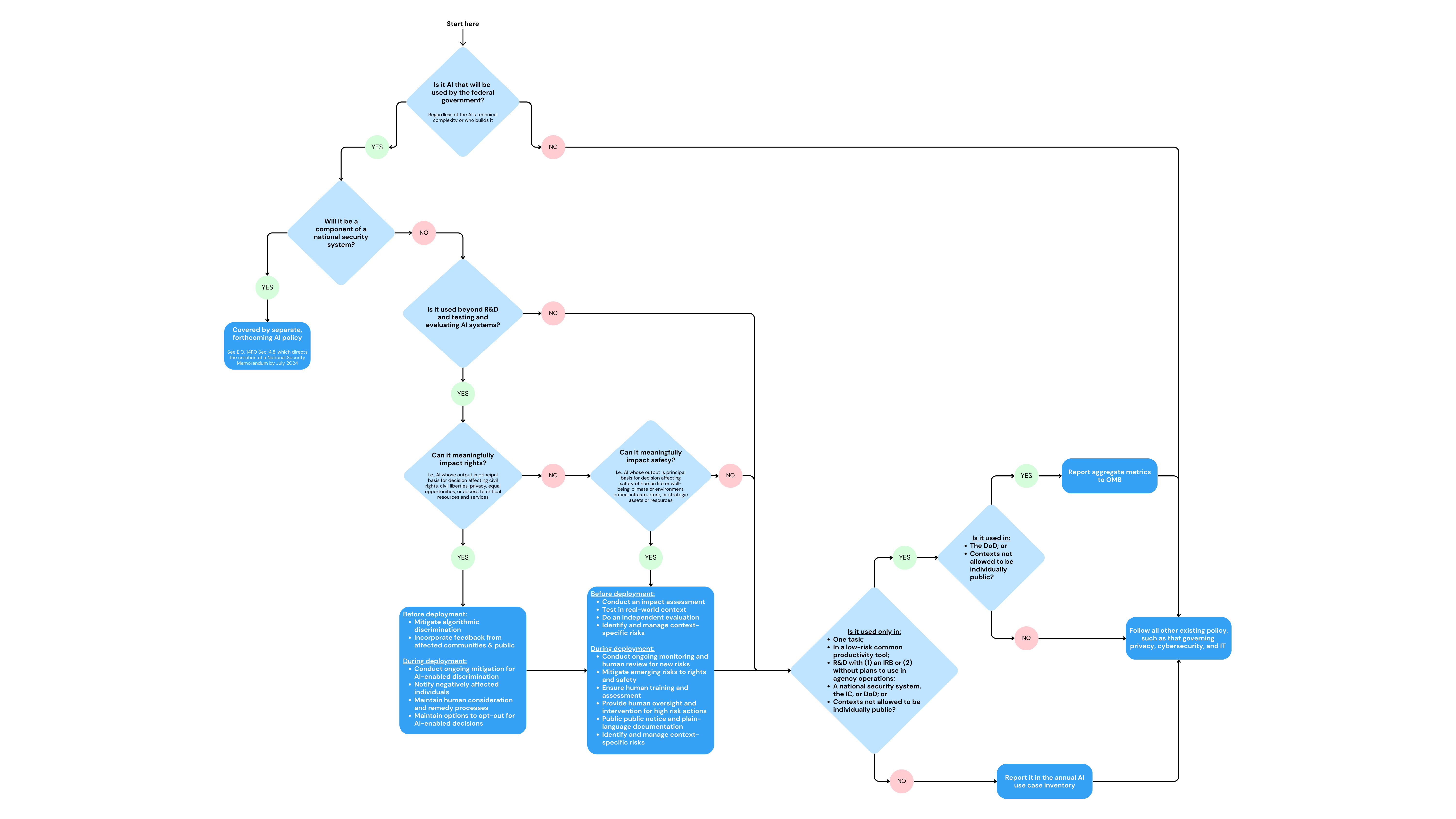

Diagram explaining how this policy mitigates AI risks.

Therefore, this policy will prioritize resources for AI accountability by adopting a risk-based approach. This approach will largely ignore AI applications that are low risk or well governed by other policies, and will focus on AI applications that could have a significant impact on people's safety or rights. For example, the use of AI in the power grid or self-driving cars will require impact assessments, real-world testing, independent evaluation, ongoing oversight, and appropriate public notice and human override. And the use of AI for filtering resumes or approving loans will need to incorporate the safeguards described above, mitigate bias, incorporate public input, conduct ongoing oversight, and provide reasonable opt-outs. These protections are based on common sense. AI that is essential for areas such as critical infrastructure, public safety, and government benefits will need to include testing, oversight, and human override. The details of these protections are consistent with years of rigorous research and incorporate public comment so that interventions are more likely to be effective and actionable.

The policy applies a similar approach to AI innovation. It asks agencies to develop AI strategies that focus on prioritizing key AI use cases, lowering barriers to AI adoption, setting AI maturity goals, and building the capabilities needed to leverage AI in the long term. This, combined with the actions in the AI Executive Order to focus AI talent in high-priority locations across the Federal Government, will position agencies to deploy AI where it can be most effective.

These rules also have built-in oversight and transparency. Agencies must appoint a senior Chief AI Officer to oversee both the accountability and innovation obligations in the policy. Agencies must also publicly disclose plans to comply with these rules and discontinue any use of AI that does not comply. In general, federal agencies must report AI applications in an annual AI Use Case Inventory and provide additional information about how they are managing risks from AI that impacts safety and rights. The Office of Management and Budget (OMB) will monitor compliance, and OMB must be kept fully aware of any exemptions agencies seek from the AI risk mitigation practices outlined in the policy.

These efforts are expected to have a significant impact. Federal law enforcement agencies (including immigration and border security) will need to implement strong risk mitigation measures in many of their uses of facial recognition and predictive analytics. Millions of people work for the U.S. government, and these federal employees will have the protections outlined in this policy if their employer seeks to monitor and control their actions and behavior through AI. And if federal agencies seek to use AI to identify fraud in programs like food stamps and financial assistance, these agencies will need to be sure the AI actually works and does not discriminate.

These rules apply whether federal agencies build their AI themselves or buy it from a vendor. Because the U.S. government is the largest purchaser of goods and services in the world, this requirement will significantly shape the market by pushing agencies to purchase only AI services that comply with the policy. The policy further directs agencies to share AI code, models, and data, promoting an open source approach that is essential for the entire AI ecosystem. Additionally, it encourages agencies to foster market competition and interoperability among AI vendors and avoid self-favoritism and vendor lock-in when procuring AI services. All of this helps promote good government by ensuring taxpayer money is spent on safe and effective AI solutions, not dangerous, over-hyped snake oil from contractors.

Federal agencies will work to comply with this policy in the coming months, and we will also develop follow-up guidance to support the implementation of this policy, improve AI procurement, and govern the use of AI in national security applications. The hard work is not over yet. There are still open issues to address as part of our future work, such as figuring out how to more explicitly incorporate open source requirements as part of the AI procurement process and reducing agencies' reliance on specific AI vendors.

With so much government activity on AI, it's worth taking a step back. Today is an important day for AI policy. The U.S. government is leading the way in establishing its own rules on AI, and Mozilla is here to help ensure their successful implementation.