Aurich Lawson

Fellow members of the legendary Computer Bug Trial, honored guests, may I have your attention? I respectfully submit a new candidate for your esteemed judgment. You may or may not consider it new. You may even deign to call it a “bug.” But I'm sure you will find it interesting.

Consider Nethack. It is one of the best roguelike games of all time, and I mean that in the strictest sense of the term: content is procedurally generated, death is permanent, and only skill and knowledge are retained between games. I understand that the only thing two roguelike fans can agree on is how wrong a third's definition of a roguelike is, but let's move on.

Nethack is great for machine learning…

It's a difficult game, full of important choices and random challenges, but it's also a “single agent” game that can be generated and played super fast on modern computers. That makes Nethack great for anyone working in machine learning, or indeed imitation learning, as detailed in Jens Tuyls' paper on how compute scaling affects single-agent game learning. Using Tuyls' model of expert Nethack behavior, Bartłomiej Cupiał and Maciej Wołczyk used reinforcement learning to train a neural network so it could improve itself while playing.

By mid-May of this year, their model was consistently scoring over 5,000 points by its own metrics. But in one run, the model suddenly got about 40% worse, scoring only around 3,000 points. Machine learning generally tends to move in one direction over time on these types of problems. This didn't make sense.

Cupiał and Wołczyk tried various things, including reverting the code, restoring the entire software stack from Singularity backups, and rolling back the CUDA libraries. The result? 3,000 points. Even when they rebuilt everything from scratch, they still got 3,000 points.

Nethack, played by ordinary people.

… except on certain nights

As detailed in Cupiał's thread on X (formerly Twitter), he and Wołczyk spent several hours in chaotic trial and error. “I'm beginning to feel like I'm going mad. I can't even watch a TV show without thinking about bugs all the time,” Cupiał wrote. In despair, he asked Tuyls, the model's author, whether he knew what the problem might be. When he woke up in Krakow, he had his answer:

“Oh yeah, it's probably a full moon today.”

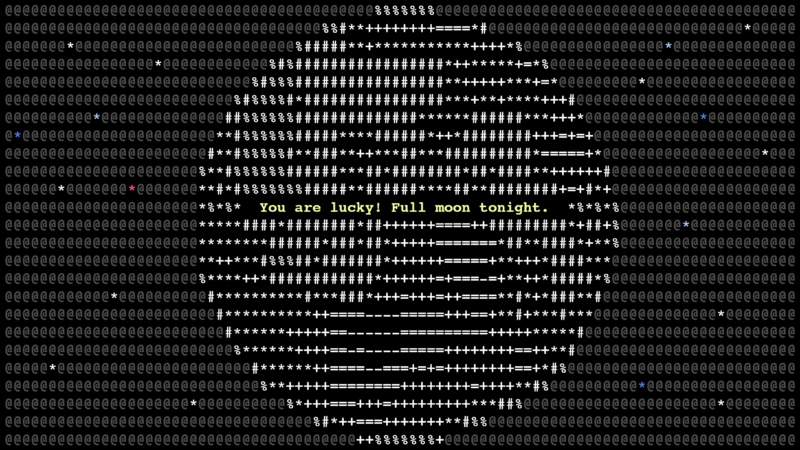

In Nethack, a game where the development team has thought of everything, a full moon detected from the system clock produces the message “You are lucky! Full moon tonight.” A full moon gives the player a few advantages: one point is added to Luck, and werecreatures mostly stay in their animal form.
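To make the clock dependence concrete, here is a minimal Python sketch of how a game can derive a moon phase from nothing but the calendar date. It is modeled on the golden-number and epact approximation used by the `phase_of_the_moon` routine in Nethack's source as best I recall it, so treat the constants as an approximation rather than the game's authoritative code. The helper `is_full_moon` is a hypothetical name added for illustration.

```python
from datetime import date

NEW_MOON = 0   # phase index for a new moon in this 0-7 scheme
FULL_MOON = 4  # phase index for a full moon


def phase_of_the_moon(day: date) -> int:
    """Approximate the moon phase as an integer 0-7 (0 = new, 4 = full)."""
    # C's tm_yday counts from 0; Python's counts from 1.
    day_of_year = day.timetuple().tm_yday - 1
    # Golden number: position of the year in the 19-year Metonic cycle.
    golden = ((day.year - 1900) % 19) + 1
    # Epact: rough age of the moon at the start of the year.
    epact = (11 * golden + 18) % 30
    if (epact == 25 and golden > 11) or epact == 24:
        epact += 1
    return (((((day_of_year + epact) * 6) + 11) % 177) // 22) & 7


def is_full_moon(day: date) -> bool:
    """Hypothetical helper: True when the approximation reports a full moon."""
    return phase_of_the_moon(day) == FULL_MOON
```

Because the only input is the date, every run on the same night sees the same phase, however often the code and libraries around it are rebuilt.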

Overall, this makes for an easier game, so why would the learning agent score lower? Its training data simply contains nothing about the full moon variable, so a series of branching decisions could lead to lower results, or just confusion. It was indeed a full moon in Krakow when scores of around 3,000 started coming in. What a terrible night to have a learning model.

Of course, score is not a true indicator of success in Nethack, as Cupiał himself pointed out. Ask a model to get the highest score, and it will kill a ton of low-level monsters because it never gets bored. “Finding the items you need for [ascension] or [just] completing the quests is too taxing for a pure RL agent,” Cupiał wrote. Another neural network, AutoAscend, is better at progressing through the game, “but it can only solve the Sokoban puzzle and reach the edge of the mines,” he noted.

Is it a bug?

I submit that, although Nethack responded to the full moon as intended, this quirky and very hard-to-fathom stop on a machine learning journey is certainly a bug, and one that deserves a place in the Hall of Fame. It's not a Harvard moth, nor is it a 500-mile email, but what is?

Because the team used Singularity to back up and restore the stack, they inadvertently carried forward the machine's time, and the resulting bug, with each attempted fix. The machine's resulting behavior was so strange, and seemed so dependent on unseen forces, that it drove the programmers to distraction. And so this story has a beginning, a climactic middle, and an ending that teaches you something, however confounding.

I think the Nethack Lunar Learning Bug is worth celebrating. Thank you for your time.