Researchers are tackling the persistent challenge of using neural networks to accurately model resonance phenomena in electromagnetic simulations. Sunghyun Nam from the Korea Advanced Institute of Science and Technology (KAIST), together with Chan Y Park and Min Seok Jang from the KAIST KC Machine Learning Lab and colleagues, introduce a data-efficient training framework called dissipative relaxation transfer learning (DIRTL). The work matters because it addresses the instability and performance degradation caused by high-amplitude resonances, which often appear as outlier samples during training. DIRTL first pre-trains the model with a small amount of fictitious material loss to smooth the response landscape and then fine-tunes it on lossless data. This enables stable adaptation and significantly improves prediction accuracy, with error reductions of up to 2x in some cases, establishing a physically grounded and versatile approach for building machine learning-based electromagnetic solvers.

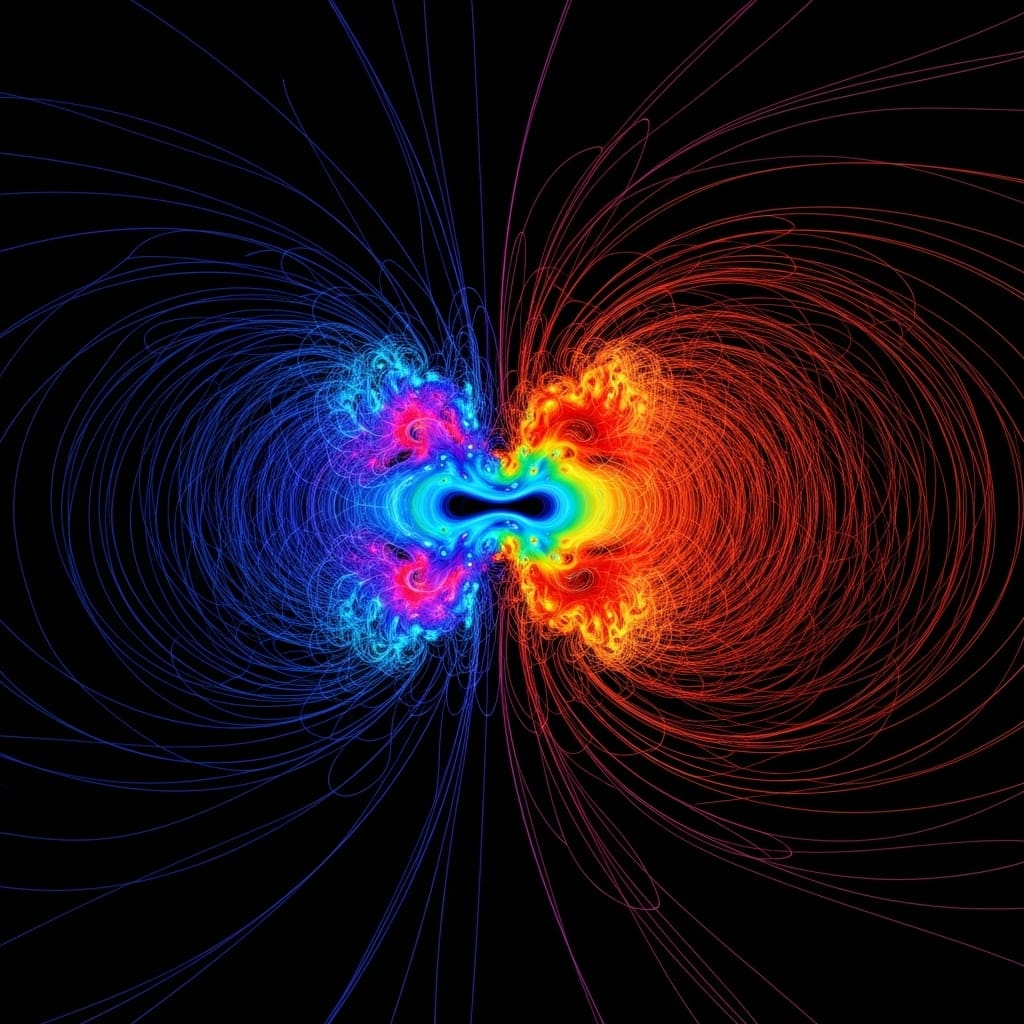

Resonance phenomena remain a central challenge for neural-network surrogate solvers. High-amplitude resonances generate strongly localized field patterns that appear as outlier samples and deviate significantly from the general distribution of non-resonant cases, leading to unstable training and poor prediction performance. To address this, the authors introduce dissipative relaxation transfer learning (DIRTL), a data-efficient training framework that combines transfer learning with the loss-regularization principle used in the optimization of high-Q photonic devices. DIRTL first pre-trains the model on data generated with small fictitious material losses, which broaden the sharp resonant modes and suppress the extreme field amplitudes. This smoothing of the response landscape promotes stable learning and better generalization.
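Why a small loss tames resonances can be seen in a single-mode toy model from temporal coupled-mode theory. This is our illustration, not the authors' code. A mode with radiative decay rate `gamma_rad` driven at resonance has a peak amplitude proportional to 1/(gamma_rad + gamma_abs), so an added absorptive rate `gamma_abs` lowers the peak and broadens the line, while responses far from resonance barely change. The function name and parameter values are illustrative assumptions.

```python
import math

def mode_amplitude(omega, omega0, gamma_rad, gamma_abs):
    """|a(omega)| for one resonant mode in temporal coupled-mode theory (toy model).

    gamma_rad: radiative decay rate, which sets the intrinsic Q of the mode.
    gamma_abs: extra absorptive decay rate from a fictitious material loss.
    The drive is normalized to sqrt(2 * gamma_rad), i.e. a single coupling port.
    """
    gamma_total = gamma_rad + gamma_abs
    # Response denominator: gamma_total + i * (omega - omega0).
    denominator = complex(gamma_total, omega - omega0)
    return math.sqrt(2.0 * gamma_rad) / abs(denominator)

if __name__ == "__main__":
    omega0, gamma_rad = 1.0, 1e-3  # sharp mode, intrinsic Q = omega0 / (2 * gamma_rad) = 500
    for gamma_abs in (0.0, 0.002, 0.01):
        q_loaded = omega0 / (2.0 * (gamma_rad + gamma_abs))
        peak = mode_amplitude(omega0, omega0, gamma_rad, gamma_abs)
        off_resonance = mode_amplitude(omega0 + 0.05, omega0, gamma_rad, gamma_abs)
        print(f"gamma_abs={gamma_abs:<6} Q={q_loaded:6.1f} "
              f"peak={peak:6.2f} off-resonance={off_resonance:5.3f}")
```

With these numbers, raising the loss to 0.01 cuts the peak amplitude by a factor of 11 while changing the off-resonance amplitude by only a few percent, which is the kind of smoothing DIRTL relies on during pre-training.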

DIRTL training significantly improves resonance prediction accuracy

As shown in Figure 1(a), the diffraction grating consists of a 1 × 64 array in free space and is illuminated at normal incidence by a transverse magnetic (TM) plane wave. Each element is either free space or a dielectric material with refractive index n = 2. The training and testing datasets cover wavelengths from 650 nm to 750 nm in 10 nm steps, and all electromagnetic field data are generated with a rigorous coupled-wave analysis (RCWA) solver. The simulations use 81 Fourier orders, which the authors show is sufficient for numerical convergence (see Section 1 in the Supporting Information).
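The dataset layout can be sketched as follows, assuming that each element of the 1 × 64 grating is drawn uniformly at random and that every device is paired with all 11 wavelengths. The article does not specify the sampling scheme, the helper names are hypothetical, and the RCWA field computation itself is not shown.

```python
import random

N_CELLS = 64                                # 1 x 64 array of grating elements
N_DIELECTRIC = 2.0                          # refractive index of the dielectric elements
WAVELENGTHS_NM = list(range(650, 751, 10))  # 650-750 nm in 10 nm steps -> 11 values

def random_binary_grating(rng):
    """Hypothetical sampler: each element is free space (0) or dielectric (1)."""
    return [rng.randint(0, 1) for _ in range(N_CELLS)]

def permittivity_profile(pattern, n_dielectric=N_DIELECTRIC):
    """Map a binary pattern to relative permittivity: 1 for free space, n**2 for dielectric."""
    return [n_dielectric ** 2 if bit else 1.0 for bit in pattern]

def make_input_pairs(num_devices, seed=0):
    """Hypothetical helper: pair each sampled device with every wavelength.

    In the real pipeline an RCWA solver would compute the field for each pair.
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(num_devices):
        eps = permittivity_profile(random_binary_grating(rng))
        for wavelength in WAVELENGTHS_NM:
            pairs.append((eps, wavelength))
    return pairs

if __name__ == "__main__":
    pairs = make_input_pairs(num_devices=2)
    print(len(pairs), "device-wavelength pairs")
```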

This problem setting reproduces the resonant outlier cases discussed above. As shown in Figure 1(b), most of the generated test samples are non-resonant and show no significant local field enhancement within the grating, although strongly localized fields with large amplitudes occasionally appear. Figure 1(c) quantifies this distribution, which has a long tail corresponding to strong resonances with very large maximum field amplitudes. These resonant samples are the central challenge: their strongly localized magnetic field patterns make them outliers that degrade prediction performance.
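One simple way to quantify such a long tail is to compute each sample's peak field amplitude and flag samples far above the median. The `factor` threshold and the helper names below are illustrative assumptions, not the criterion used in the paper.

```python
import statistics

def max_amplitude(field):
    """Peak |H| of one sample; `field` is a list of complex field values."""
    return max(abs(v) for v in field)

def tail_report(fields, factor=5.0):
    """Flag samples whose peak amplitude exceeds `factor` times the median peak.

    The median is robust to the resonant outliers themselves, so a few very
    large samples do not shift the threshold much.
    """
    peaks = [max_amplitude(f) for f in fields]
    median_peak = statistics.median(peaks)
    threshold = factor * median_peak
    outliers = [i for i, p in enumerate(peaks) if p > threshold]
    return {
        "median_peak": median_peak,
        "max_peak": max(peaks),
        "outlier_fraction": len(outliers) / len(peaks),
        "outlier_indices": outliers,
    }

if __name__ == "__main__":
    # Toy data: nine mild samples and one strongly resonant one.
    samples = [[1.0 + 0j, 0.5j] for _ in range(9)] + [[30.0 + 0j, 2j]]
    report = tail_report(samples)
    print(report["outlier_fraction"], report["outlier_indices"])
```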

The researchers address this problem by first pre-training the model on data generated with small fictitious material losses, which broadens the resonant modes and suppresses the extreme field amplitudes. This smoothing of the response landscape lets the model learn global modal features before it is fine-tuned on the target lossless dataset, which contains the true high-amplitude resonances. Numerical experiments show that applying DIRTL to both Fourier Neural Operator (FNO) and UNet architectures significantly improves prediction accuracy, with the FNO variant achieving up to a 2x error reduction.
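The two-stage curriculum can be sketched independently of any deep-learning framework. In this minimal sketch, a two-parameter linear model trained by full-batch gradient descent stands in for an FNO or UNet, and a slightly damped target stands in for the lossy fields; all names, learning rates, and epoch counts are assumptions. It shows only the control flow (stage 2 starts from the stage 1 weights), not the accuracy gains reported for real networks.

```python
def gradient_descent(params, dataset, grad_fn, lr, epochs):
    """Full-batch gradient descent; returns a new parameter list."""
    params = list(params)
    for _ in range(epochs):
        grads = grad_fn(params, dataset)
        params = [p - lr * g for p, g in zip(params, grads)]
    return params

def dirtl_train(init_params, lossy_dataset, lossless_dataset, grad_fn,
                lr=0.5, pretrain_epochs=200, finetune_epochs=200):
    """Hypothetical two-stage schedule: pre-train on lossy data, then fine-tune on lossless data."""
    # Stage 1: learn from the smoothed (fictitious-loss) targets.
    params = gradient_descent(init_params, lossy_dataset, grad_fn, lr, pretrain_epochs)
    # Stage 2: warm-start from the stage 1 weights and adapt to the true lossless targets.
    return gradient_descent(params, lossless_dataset, grad_fn, lr, finetune_epochs)

def mse_grad(params, dataset):
    """Gradient of the mean squared error for the toy model y = w * x + b."""
    w, b = params
    n = len(dataset)
    grad_w = sum(2.0 * (w * x + b - y) * x for x, y in dataset) / n
    grad_b = sum(2.0 * (w * x + b - y) for x, y in dataset) / n
    return [grad_w, grad_b]

if __name__ == "__main__":
    xs = [i / 10 for i in range(11)]
    lossless = [(x, 3.0 * x + 1.0) for x in xs]  # stand-in for the true targets
    lossy = [(x, 2.7 * x + 1.0) for x in xs]     # damped stand-in for lossy targets
    w, b = dirtl_train([0.0, 0.0], lossy, lossless, mse_grad)
    print(f"w={w:.4f}, b={b:.4f}")
```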

The researchers evaluated prediction errors on a one-dimensional, multi-wavelength binary grating illuminated by TM plane waves and observed consistent improvements with DIRTL over standard training. The simulations used 81 Fourier orders to ensure numerical convergence, and the training and testing datasets covered wavelengths from 650 nm to 750 nm in 10 nm steps. DIRTL also proved robust across different training conditions and supported strong multitask performance, highlighting its versatility. The study focused on a 1 × 64 array in free space whose elements are either free space or a dielectric with a refractive index of 2.
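The article does not spell out the error metric. A common choice for field surrogates is the relative L2 error, and an "N-fold error reduction" is then the ratio of baseline error to DIRTL error. The sketch below assumes that definition; the function names are hypothetical.

```python
import math

def relative_l2_error(pred, target):
    """||pred - target||_2 / ||target||_2 over one (possibly complex) field sample."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    num = math.sqrt(sum(abs(p - t) ** 2 for p, t in zip(pred, target)))
    den = math.sqrt(sum(abs(t) ** 2 for t in target))
    return num / den

def mean_relative_error(preds, targets):
    """Average per-sample relative L2 error over a test set."""
    return sum(relative_l2_error(p, t) for p, t in zip(preds, targets)) / len(targets)

def error_reduction(baseline_error, dirtl_error):
    """Factor by which DIRTL lowers the error; 2.0 corresponds to a '2x reduction'."""
    return baseline_error / dirtl_error
```

Because each sample is normalized by its own field norm, a resonant sample with a huge amplitude does not automatically dominate the average, although its sharp localized features can still be hard to predict.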

The scientists noted that most of the generated test samples were non-resonant and lacked significant local field enhancement, whereas resonant cases showed markedly different field patterns. The framework improves sample efficiency by a factor of approximately 9, suggesting that it is an efficient way to train electromagnetic surrogate solvers. The results indicate that DIRTL implicitly embeds physical knowledge in the learning process, which may make it applicable beyond electromagnetic simulation. The approach provides a physically grounded, architecture-independent curriculum for making machine learning-based electromagnetic surrogate solvers more reliable. The two-step learning process, inspired by photonic optimization strategies, guides the network through a difficult optimization landscape, allowing smooth convergence and better generalization. Taken together, these results indicate that DIRTL is a valuable tool for accurately capturing the resonant behavior that matters in photonic device design.
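The article reports a roughly ninefold sample efficiency without defining it. One plausible definition, assumed here, is the ratio between the number of training samples the baseline needs to match DIRTL's error and the number DIRTL actually used, with the baseline learning curve interpolated in log-log space. The function names and the toy power-law curve in the usage lines are hypothetical.

```python
import math

def samples_to_reach(target_error, sizes, errors):
    """Log-log interpolate a learning curve to find the dataset size reaching target_error.

    `sizes` must be increasing and `errors` decreasing.
    Returns None if target_error lies outside the measured curve.
    """
    points = list(zip(sizes, errors))
    for (n0, e0), (n1, e1) in zip(points, points[1:]):
        if e1 <= target_error <= e0:
            if e0 == e1:
                return float(n0)
            t = (math.log(target_error) - math.log(e0)) / (math.log(e1) - math.log(e0))
            return math.exp(math.log(n0) + t * (math.log(n1) - math.log(n0)))
    return None

def sample_efficiency(n_dirtl, dirtl_error, baseline_sizes, baseline_errors):
    """Baseline samples needed to match DIRTL's error, divided by DIRTL's sample count."""
    n_baseline = samples_to_reach(dirtl_error, baseline_sizes, baseline_errors)
    return None if n_baseline is None else n_baseline / n_dirtl

if __name__ == "__main__":
    sizes = [100, 1000, 10000]
    baseline_errors = [n ** -0.5 for n in sizes]  # toy power-law learning curve
    # Suppose DIRTL reaches error 0.02 with 250 training samples.
    print(sample_efficiency(250, 0.02, sizes, baseline_errors))
```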

DIRTL significantly improves the accuracy of resonant electromagnetic simulations

Scientists have developed a new training framework called dissipative relaxation transfer learning (DIRTL) to improve the accuracy of neural-network surrogate solvers for electromagnetic simulation. The central challenge is that these solvers struggle to model high-amplitude resonances, which often appear as outlier data points. DIRTL uses a two-step process: it first pre-trains the model on data that include a small amount of fictitious material loss, which broadens the resonant modes and suppresses extreme magnetic field amplitudes. The pre-trained model is then fine-tuned on the lossless dataset, allowing stable adaptation and improved prediction accuracy.
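One way to introduce the fictitious loss is through a small extinction coefficient kappa in the complex refractive index n + i·kappa, which gives the relative permittivity (n + i·kappa)^2. The sketch below assumes the exp(-iωt) time convention, in which loss means a positive imaginary part, and applies the loss only to dielectric elements; both choices and the helper names are assumptions rather than details from the paper. Setting `kappa = 0` recovers the lossless profile used for fine-tuning.

```python
import math

def lossy_permittivity(n, kappa):
    """Relative permittivity (n + i*kappa)**2 of a weakly absorbing material.

    Assumes the exp(-i*omega*t) convention, where absorption gives Im(eps) > 0;
    solvers using exp(+i*omega*t) expect the complex conjugate.
    """
    return complex(n, kappa) ** 2

def add_fictitious_loss(eps_profile, kappa, eps_background=1.0):
    """Hypothetical helper: add loss to every dielectric element, keep free space lossless."""
    lossy = []
    for eps in eps_profile:
        if eps == eps_background:
            lossy.append(complex(eps))
        else:
            lossy.append(lossy_permittivity(math.sqrt(eps), kappa))
    return lossy

if __name__ == "__main__":
    profile = [1.0, 4.0, 4.0, 1.0]  # free space and n = 2 dielectric elements
    print(add_fictitious_loss(profile, kappa=0.01))
```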

The results show significant improvements for both the Fourier Neural Operator (FNO) and UNet architectures, with up to a 2x error reduction for the FNO variant. The framework is also robust across different training conditions and supports effective multitask performance, demonstrating its versatility. The authors acknowledge that the method's effectiveness depends on the specific curriculum and that the fictitious loss used for pre-training may need to be chosen carefully. DIRTL establishes a physically grounded, architecture-independent approach for increasing the reliability of machine learning-based electromagnetic surrogate solvers. Its data efficiency is particularly noteworthy: it achieves comparable accuracy with significantly less training data. Future work could apply DIRTL to other physical systems where physics-informed learning is important, extending its impact beyond electromagnetic simulation.

👉 More information

🗞 Data-efficient electromagnetic surrogate solver using dissipative relaxation transfer learning

🧠 arXiv: https://arxiv.org/abs/2601.18235