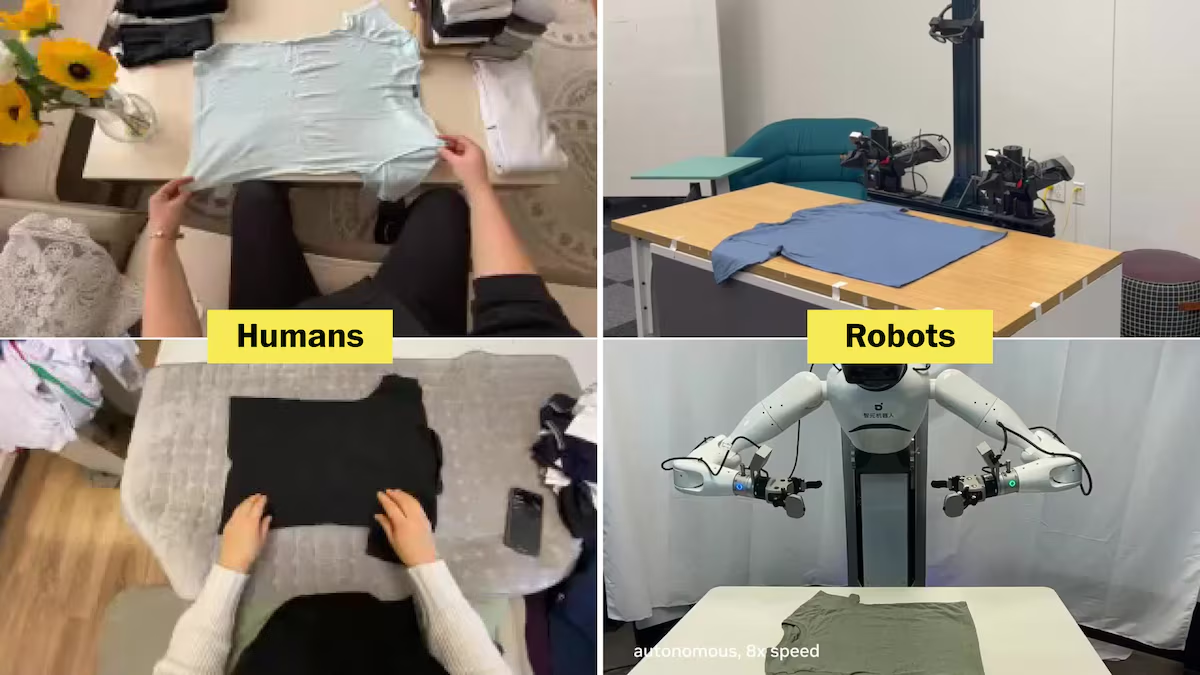

Silicon Valley’s next big leap may be built on videos of people folding laundry.

Startups and entrepreneurs, including Tesla CEO Elon Musk, are trying to build robots smart enough to help with chores around the house. But adapting artificial intelligence to new tasks requires sample data, much as chatbots needed online text and photos before they could generate high-quality documents and images.

Food delivery service DoorDash has joined a cottage industry of companies and researchers collecting data for the robot revolution in the form of videos of people doing tasks like folding clothes and washing dishes. Gig workers can now earn $25 an hour by recording themselves doing chores for DoorDash.

We’ll explain how this video is used and why it’s so valuable.

How to train a robot to fold clothes

Capturing household data is a bet on what AI insiders call “scaling laws.” Researchers have found that AI models for working with text and images get better as they are trained on more data, and they hope the same will be true for robotics.

Ken Goldberg, a roboticist and distinguished engineering chair at the University of California, Berkeley, said “there is evidence that large amounts of data can help robots perform more complex tasks.”

But unlike with chatbots, there is no easy place to get a ton of relevant data for robots. “There’s no internet where you can get robot data,” Goldberg said.

Chatbots learn how to produce coherent sentences by analyzing readily available human-written text from the web, books and many other sources.

Training robot control software is even more complex. A robot that takes care of housework must decipher data from its sensors, predict movements that accomplish a goal, such as folding a shirt, and send commands to its limbs and grippers to perform those movements. There is no off-the-shelf data repository that shows how to do that. Even videos of people doing housework may not have all the necessary elements.
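To make the three steps concrete, here is a minimal sketch in Python of a sense, predict, act loop. Everything in it is an assumption made for illustration: `ToyGripper`, `predict_velocity` and `run_control_loop` are hypothetical names, the gripper moves along a single line, and a hand-written rule stands in for the learned model a real robot would use.

```python
from dataclasses import dataclass


@dataclass
class ToyGripper:
    """Hypothetical one-dimensional gripper; position is in centimeters."""

    position: float = 0.0

    def read_sensor(self) -> float:
        # Sense: a real robot would decode camera frames and joint sensors here.
        return self.position

    def send_command(self, velocity: float, dt: float) -> None:
        # Act: apply the commanded velocity for one time step.
        self.position += velocity * dt


def predict_velocity(observed: float, target: float, gain: float = 2.0) -> float:
    # Predict: a simple proportional rule stands in for a trained AI model.
    return gain * (target - observed)


def run_control_loop(gripper: ToyGripper, target: float,
                     steps: int = 50, dt: float = 0.1) -> float:
    """Repeat sense, predict, act and return the final position."""
    for _ in range(steps):
        observed = gripper.read_sensor()
        velocity = predict_velocity(observed, target)
        gripper.send_command(velocity, dt)
    return gripper.position


final_position = run_control_loop(ToyGripper(), target=10.0)
print(f"final position: {final_position:.3f} cm")
```

In this toy version the gripper ends up very close to the 10-centimeter target. The hard part the article describes is the middle step: for a task like folding a shirt, nobody can write `predict_velocity` by hand, so it has to be learned from data.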

[Chart: AI training dataset size]

One way to collect training materials is to record data while a human is manually operating the robot. “Robot teleoperation data is considered to be probably the highest quality data because it includes the robot’s movement commands,” said Simar Kareer, a robotics researcher at Georgia Tech and a pioneer in training robots with human video.

But “it’s just the most expensive thing to collect,” Kareer said. That’s because you have to pay people to operate expensive robots, and “humans complete these tasks much, much slower than they could with their own hands.”

Kareer is working to show that large collections of inexpensive human video data can provide AI with a baseline understanding of how to perform tasks, and that smaller pools of expensive teleoperation data can be used to refine and teach software how to perform specific robot movements.
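The two-stage recipe can be illustrated with a toy sketch in Python. This is not Kareer’s method or code: the one-number “model,” the function `fit_slope` and all of the data are made up, assuming only that human motion is close to, but not the same as, the motion a robot needs.

```python
def fit_slope(weight, samples, steps, learning_rate=0.5):
    """Gradient descent on mean squared error for the model y = weight * x."""
    for _ in range(steps):
        gradient = sum(2 * (weight * x - y) * x for x, y in samples) / len(samples)
        weight -= learning_rate * gradient
    return weight


# Made-up data: 1,000 cheap "human video" samples follow y = 2.0 * x,
# while 10 costly "teleoperation" samples follow the robot's y = 2.5 * x.
human_video = [(i / 1000, 2.0 * i / 1000) for i in range(1, 1001)]
teleoperation = [(i / 10, 2.5 * i / 10) for i in range(1, 11)]

# Stage 1: learn a rough baseline from the large, inexpensive pool.
pretrained = fit_slope(0.0, human_video, steps=50)

# Stage 2: refine that baseline with a few steps on the small, expensive pool.
fine_tuned = fit_slope(pretrained, teleoperation, steps=5)

# Comparison: the same few steps on the expensive pool, with no baseline.
from_scratch = fit_slope(0.0, teleoperation, steps=5)

print(f"error after fine-tuning: {abs(fine_tuned - 2.5):.3f}")
print(f"error from scratch:      {abs(from_scratch - 2.5):.3f}")
```

With the same small budget of teleoperation training, the model that started from the human-video baseline lands closer to the robot’s true value than the one that started from nothing. Whether that holds for real robots at scale is the open question the researchers are testing.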

Other researchers and companies are trying different strategies to reduce the cost of collecting the training data needed for the robot revolution.

One is to give people a handheld version of the robot gripper so they can demonstrate tasks more quickly, in a form that translates readily into robot control software. Another is to build robots that are as human-like as possible, on the idea that if machines had the same number of fingers and joints as humans, it would be easier for AI software to transfer skills from videos of humans to robots. A third is to let the robot experiment and learn in a simulated environment, like a video game, before transferring the control software to the real robot.

Ultimately, the best data to help robots get better at folding clothes will come after they are deployed and begin performing real tasks in the world. But it’s unclear how quickly that will be possible.

How long will it be before robots can do laundry? “Probably in two, three, five, 10, 20 years,” Goldberg said. “Or more.”