clawbotRecently renamed Moltbot, it is defined by its creator as “an AI that actually does something.” With expanded agent capabilities and built-in autonomy, it has amassed over 85,000 Github stars in about a week and has already been forked over 11,500 times.

It can browse the web, summarize PDFs, schedule calendar entries, shop, read and write files, and send emails for you. It already has integrations with several widely used messaging and email applications, from WhatsApp to Telegram. You can take screenshots, analyze and control your desktop applications. But the important thing is that there is persistent memory. It can remember interactions from weeks or even months ago. Therefore, it acts as a personal AI assistant that is always available.

Moltbot feels like a glimpse of the sci-fi AI characters we grew up watching in the movies. For individual users, it may feel transformative. To function as designed, it requires access to root files, authentication credentials, both passwords and API secrets, browser history and cookies, and all files and folders on your system. You can trigger an action by sending a message on WhatsApp or any other messaging app, and it will continue to work on your laptop until the task is completed.

But what’s cool doesn’t necessarily mean safe. For autonomous agents, security and safety cannot be an afterthought.

Security in use

It is important to understand the attack surface in terms of how Moltbot will be used. Let’s look at some use cases for autonomous assistants and assess the risks involved.

Scenario 1: Research a topic and create summarized social media content

Moltbot can search the web and pull the search results into your device (or IDE wherever you are working with Moltbot).

risk: Some of these web results used for research may have indirect prompt injection attacks hidden in the HTML payload. Depending on the goal of the attack, Moltbot can execute malicious commands, read secrets, and publish information in the form of social media content embedded with sensitive data. All of this is done without any human checking.

Scenario 2: Read Telegram messages and send action items

Moltbot can access your Telegram account because it has your password and can read everything that exists.

risk: Malicious links from unknown senders are treated with the same level of security as messages from family members. The attack payload could be hidden within a “good morning” message forwarded on WhatsApp or Signal. Even more secure messaging channels are vulnerable to this level of autonomy because the agent needs access to decrypted messages to properly perform its job. But that’s not the end. Moltbot has persistent memory, so any malicious instructions hidden in a forwarded message will be available in its context even a week later. This exposes the system to dangerous delayed multi-turn attack chains, which most system guardrails lack the ability to detect and block.

Scenario 3: Use the hosted Moltbot skill yourself

The general sentiment around increasing autonomy and democratizing the use of open source AI has led several developers to host Moltbot skills. They do this with a forward-thinking mindset of sharing their solutions so that the next user doesn’t have to spend time figuring things out. Increase access and speed up development.

risk: Various skills are hosted around the world. These skills are built into the assistant without any context filtering or human checking. Malicious instructions hidden in the description or code are added to the assistant’s memory. These commands can be executed to steal sensitive information, send private conversations, and even steal business-critical data.

Moltbot does not maintain enforced trust boundaries between untrusted input (web content, messages, third-party skills) and invocations of highly privileged inferences or tools. As a result, content from external sources can directly impact planning and execution without policy mediation. Additionally, the overloaded functionality built into Moltbot’s architecture further increases Moltbot’s attack surface. While it is necessary for institutions to be helpful assistants, it expands the so-called “deadly trifecta of autonomous agents” and makes it a dangerous experiment.

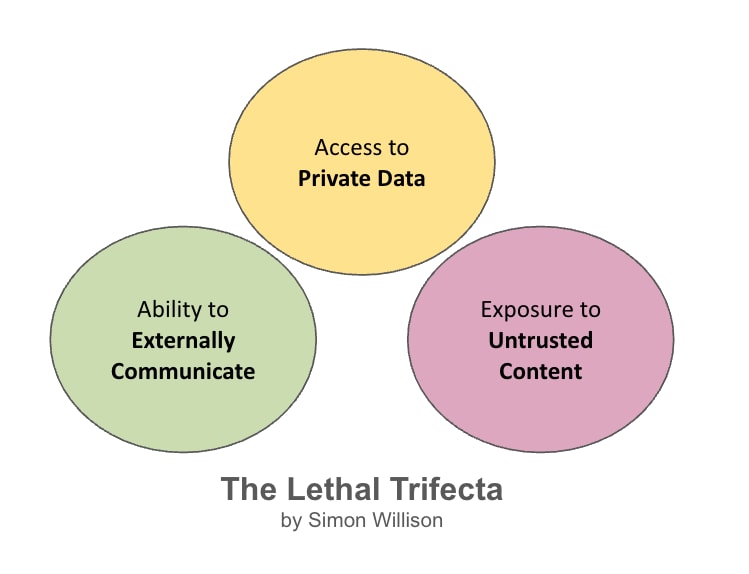

Simon Willison coined this term. The deadly trifecta of AI agents He argues that AI agents are vulnerable by design because of the intersection of three functions:

- Access to personal data (credentials, personal information, business data)

- Exposure to untrusted content (web, messages, 3rd party integrations)

- Ability to communicate with outside parties (send messages, make API calls, execute commands)

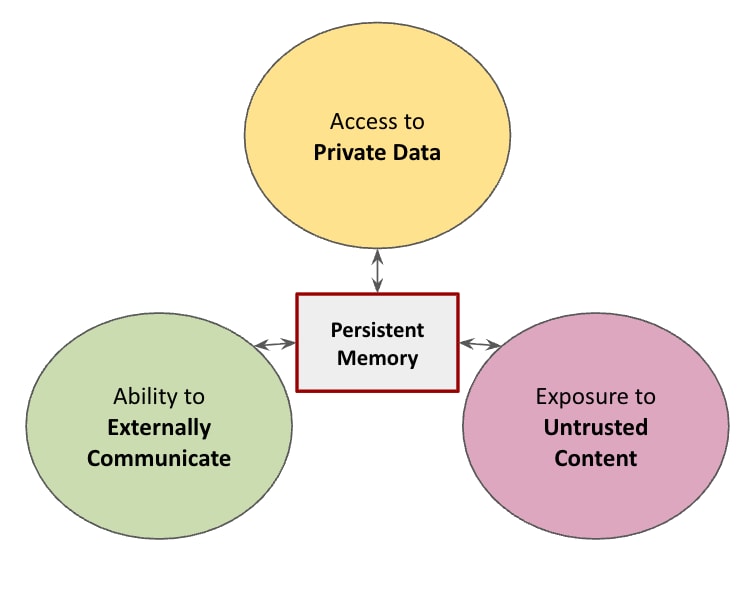

But what if there was a fourth feature that expanded this attack surface and made it easier to attack AI agents? The rapid rise in popularity of Moltbot brought with it a new feature that autonomous agent users wanted. lasting memory.

With persistent memory, attacks become more than just point-in-time exploits. These are stateful delayed execution attacks.

Persistent memory acts as an accelerant, amplifying the risks accentuated by the deadly trifecta.

Malicious payloads no longer need to trigger execution immediately upon delivery. Instead, they are fragmented, untrusted inputs that appear harmless on their own and can be written into the long-term agent’s memory and assembled later. converted into an executable instruction set. This allows for time-shifted prompt injection, memory poisoning, and logic bomb style activation. Exploits are created on ingestion, but only explode when the agent’s internal state, goals, or tool availability match.

Moltbot vulnerabilities mapped to OWASP Top 10 for Agents

With the proliferation of government agencies and nearly non-existent governance protocols, Moltbot is susceptible to a full range of system failures. OWASP Top 10 Agent Applications. The table below maps Moltbot vulnerabilities to the OWASP Top 10 Frameworks.

| OWASP agent risks | Implementation of Moltbot |

| A01: Immediate injection (direct and indirect) | Web search results, messages, and third-party skills inject instructions for the agent to execute. |

| A02: Insecure agent tool invocation | Tools (bash, file I/O, email, messaging) are called based on inferences involving untrusted memory sources. |

| A03: Excessive agent autonomy | A single agent has file system root access, credential access, and network communication, with no privilege boundaries or authorization gates. |

| A04: Human participatory control is missing. | Destructive operations (rm -rf, using credentials, sending external data) do not require authorization, even when affected by old, unreliable memory. |

| A05: Agent memory poisoning | All memories are indifferent by source. Web scraping, user commands, and third-party skill output are stored identically with no trust level or expiration date. |

| A06: Insecure third-party integration | Third-party “skills” run with full agent privileges and can write directly to persistent memory without sandboxing. |

| A07: Insufficient separation of privileges | A single agent handles ingesting untrusted input and performing highly privileged actions through shared memory access. |

| A08: Supply chain model risks | The agent uses upstream LLM without validating any data tweaks or safety adjustments. |

| A09: Unlimited agent-to-agent actions | Moltbot operates as a single monolithic agent, but future multi-agent versions may enable unconstrained agent communication. |

| A10: Lack of runtime monitoring and guardrails | There is no policy enforcement layer between memory acquisition → inference → tool invocation. There is no anomaly detection regarding memory access patterns or temporal causality tracking. |

Moltbot is an unlimited attack surface with access to credentials.

Persistent memory is a must-have feature for future AI assistants. general artificial intelligence (AGI) aims to achieve human-level intelligence over time, not limited to a single day or session. Humans use accumulated experience, selective recall, and learned abstractions to reason about life. This continuity of state allows for long-term planning and consistent decision-making. Persistent memory introduces persistent state between sessions, allowing AI agents to learn and evolve over time. This is a step in the right direction to achieve AGI. But uncontrolled persistent memory in autonomous assistants is like adding gasoline to a deadly trifecta.

Moltbot is claimed to be the closest thing to AGI. It is constantly running, streamlined and efficient, allowing it to give users superhuman abilities. However, if this level of autonomy is not managed, it can lead to irreversible security incidents. Even with control UI hardening techniques, the attack surface remains unmanageable and unpredictable.

The authors’ opinion is that Moltbot is not designed to be used in an enterprise ecosystem.

The growing popularity of Moltbot raises important security questions regarding the architecture of autonomous systems. The future of AI assistants is not just about smarter agents, but also about safe agents that are manageable and built with understanding. do not have To act.

please read OWASP Agentic AI Survival Guide Understand how to protect against known agent threats.