God Translation Bureau is an editorial team at 36Kr that introduces new technologies, ideas, and trends from overseas, focusing on technology, business, the workplace, and life.

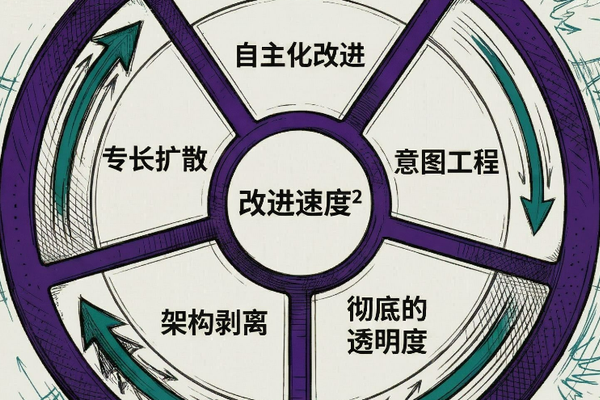

Editor’s note: AI is moving from a “chat box” to a “self-evolving loop.” If as much as 99% of knowledge work turns out to be redundant scaffolding, the ability to clearly define and validate “intent” will separate the elite from the mediocre. This article has been edited.

After about a week of thinking this over, including my time at the RSA Conference, I believe a few core AI concepts will change the status quo more fundamentally than anything else:

- Autonomous component optimization
- Shifting to “intent-based engineering”
- Moving from ambiguity to transparency
- Realizing that most of your work is just “scaffolding”
- Disseminating specialized knowledge to society

1. Autonomous component optimization

This idea is closely tied to several others: the “current state to ideal state” transition, algorithmization, and general verifiability.

Andrej Karpathy’s Autoresearch project is making this idea real and accessible.

The project targets AI research itself, automating the research process. That means handling tedious, time-consuming chores automatically: tuning model parameters, nursing fragile environments, and trying different combinations of options.

In the released version, you write your ideas in a “PROGRAM.md” file and the system does all the heavy lifting. You go to sleep, and the system applies machine learning optimization techniques to produce better results than the ones you started with.

Extending automated research

Now “Autoresearch for X” is emerging. It is becoming a paradigm, a movement, and essentially a common tool.

It has set a lot of people thinking.

Can you apply similar methods to your current job?

This is remarkable.

Combining automated research with my own work

I’ve long been interested in the idea of “general verifiability,” or “general hill-climbing” (optimization). It builds on what Karpathy wrote long ago in “Software 2.0” and on a recent tweet of his arguing that the future of software is about making everything verifiable.

So in my PAI algorithm, I decompose everything into “ideal state criteria.” Together, these criteria form a blueprint of the outcome I want.

With that blueprint in place, the algorithm can keep optimizing (hill-climbing) toward the goal.
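To make the idea concrete, here is a minimal Python sketch of hill-climbing toward a set of ideal state criteria. It assumes each criterion can be written as a pass/fail predicate over a candidate’s parameters. The criteria, parameter names, and functions (`score`, `mutate`, `hill_climb`) are hypothetical illustrations, not code from PAI or Autoresearch.

```python
import random
from typing import Callable, Dict, Tuple

# Hypothetical candidate: named parameters the optimizer is allowed to change.
Candidate = Dict[str, int]
# An "ideal state criterion" modeled as a binary pass/fail predicate.
Criterion = Callable[[Candidate], bool]

# Illustrative criteria (assumed, not taken from any real project).
CRITERIA: Dict[str, Criterion] = {
    "Median response time is at most 200 milliseconds": lambda c: c["latency_ms"] <= 200,
    "Automated test coverage reaches at least 80 percent": lambda c: c["coverage_pct"] >= 80,
    "Monthly infrastructure cost stays below 500 dollars": lambda c: c["cost_usd"] < 500,
}


def score(candidate: Candidate, criteria: Dict[str, Criterion]) -> float:
    """Fraction of criteria the candidate passes, from 0.0 to 1.0."""
    passed = sum(1 for check in criteria.values() if check(candidate))
    return passed / len(criteria)


def mutate(candidate: Candidate, rng: random.Random) -> Candidate:
    """Nudge one randomly chosen parameter up or down, never below zero."""
    key = rng.choice(sorted(candidate))
    step = rng.choice([-25, -10, 10, 25])
    trial = dict(candidate)  # copy so the current best is never modified in place
    trial[key] = max(0, trial[key] + step)
    return trial


def hill_climb(start: Candidate, criteria: Dict[str, Criterion],
               steps: int = 5000, seed: int = 0) -> Tuple[Candidate, float]:
    rng = random.Random(seed)
    best, best_score = start, score(start, criteria)
    for _ in range(steps):
        if best_score == 1.0:
            break  # every criterion passes: the ideal state is reached
        trial = mutate(best, rng)
        trial_score = score(trial, criteria)
        # Accept equal scores too, so the search can drift across flat regions
        # where no single nudge flips a criterion from fail to pass.
        if trial_score >= best_score:
            best, best_score = trial, trial_score
    return best, best_score


start = {"latency_ms": 350, "coverage_pct": 50, "cost_usd": 400}
best, best_score = hill_climb(start, CRITERIA)
print(best, best_score)
```

Because every criterion is strictly pass/fail, the score stays flat until a threshold is crossed, so this sketch accepts sideways moves and relies on random drift. A real system would more likely pair pass/fail criteria with graded evals or smarter proposals than blind random nudges.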

Everything can be evaluated (Evals)

Related to this is the idea that “everything can be evaluated.” It is very close to my notions of general verifiability and general hill-climbing. The core idea is that everything we do becomes measurable and, more importantly, improvable.

Transparency makes it possible to “evaluate everything.”

The typical optimization cycle

This will become the standard way of operating for every business, organization, government, and individual. The cycle works like this:

Map everything you want to accomplish into a goal-oriented structure (mission, objectives, workflows, SOPs). AI agents execute these workflows. Everything is logged extensively: outputs, interactions, final results, quality status, and so on. Errors, failures, and quality issues are all routed to the organization’s “problem collection point.”

This collection point feeds a self-optimization algorithm. From there, agents pull each problem, spin up Autoresearch-style runs to diagnose the failure, try candidate fixes, validate them with evals, and refine them. Once a fix is found, the SOPs are updated so the problem doesn’t recur. Then the cycle repeats.

Everything follows this life cycle. Define your goals. Let the agents run. Log everything. Collect failures. Improve autonomously. Update SOPs. Repeat continuously, faster each time.
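The cycle above can be sketched as a toy loop in Python. Everything here is hypothetical: an SOP is reduced to a per-task time budget, `execute` stands in for an agent running a workflow, and the “fix” is simply widening the budget. The point is only the shape of the loop: run, log, collect failures, fix and evaluate, update the SOP, repeat.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Problem:
    task: str
    detail: str


@dataclass
class Organization:
    # Hypothetical SOP: the time budget (in seconds) an agent gets for each task.
    sops: Dict[str, int]
    log: List[str] = field(default_factory=list)
    # The "problem collection point": failures land here for the optimizer.
    problems: List[Problem] = field(default_factory=list)


# Hypothetical ground truth the agents cannot see: the time each task really needs.
REQUIRED_SECONDS = {"triage_alerts": 40, "draft_report": 90}


def execute(task: str, budget: int) -> bool:
    """Stand-in for an agent running one workflow under the current SOP."""
    return budget >= REQUIRED_SECONDS[task]


def run_workflows(org: Organization) -> None:
    # Let the agents run, log everything, and collect failures.
    for task, budget in org.sops.items():
        ok = execute(task, budget)
        org.log.append(f"{task}: budget={budget}s -> {'pass' if ok else 'FAIL'}")
        if not ok:
            org.problems.append(Problem(task, f"ran out of time at {budget}s"))


def self_optimize(org: Organization, max_attempts: int = 10) -> None:
    # Improve autonomously: try candidate fixes, keep the first that passes the eval.
    while org.problems:
        problem = org.problems.pop(0)
        candidate = org.sops[problem.task]
        for _ in range(max_attempts):
            candidate = int(candidate * 1.5) + 1  # hypothetical fix: widen the budget
            if execute(problem.task, candidate):  # eval: re-run with the fix applied
                org.sops[problem.task] = candidate  # update the SOP
                org.log.append(f"SOP updated: {problem.task} budget={candidate}s")
                break
        # Problems still unsolved after max_attempts are dropped in this sketch;
        # a real system would escalate them to a human.


def run_cycle(org: Organization, rounds: int = 3) -> None:
    # Repeat the loop until a round finishes with no failures.
    for _ in range(rounds):
        run_workflows(org)
        if not org.problems:
            break
        self_optimize(org)


org = Organization(sops={"triage_alerts": 30, "draft_report": 60})
run_cycle(org)
print(org.sops)  # → {'triage_alerts': 46, 'draft_report': 91}
```

In the first round both workflows fail, the optimizer finds budgets that pass and writes them back into the SOPs, and the second round runs clean, so the loop stops.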

2. Shifting to “intent-based engineering”

The real power of AI lies in closing the gap between the “current state” and the “ideal state.” Define where you are, define where you want to be, and let AI narrow the gap. The concept is simple, but it has a prerequisite: you have to be able to articulate what you actually want. That will prove to be very difficult. No amount of tooling will help if you can’t explain what “good” looks like.

This is a big problem for companies. Ask a CEO what their ideal security program looks like and you’ll get only a vague description. Ask a team lead what “done” means for a project and you’ll get a paragraph that three people will interpret three different ways. This “representation gap” exists not only between experts and AI, but also between leaders and their organizations. Most companies can’t clearly explain what they do, let alone break it down into measurable, optimizable components.

What I’m building into my algorithm is exactly this capability: a way to reverse-engineer any request into discrete, testable “ideal state criteria.” Each criterion is 8 to 12 words long and is strictly binary: pass or fail. Once you have these, you can optimize (hill-climb), evaluate, and improve automatically. But the starting point for all of it is the ability to say exactly what you want. This is a new engineering skill. It’s not about writing code or writing prompts; it’s about expressing your intent clearly enough that it can be verified.
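A minimal sketch of ideal state criteria as code, assuming each criterion pairs a short statement with a binary check on some observable output. The `Criterion` type, the word-count validator, and the sample “done” criteria for a software project are illustrative assumptions, not the author’s actual format.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Output = Dict[str, Any]


@dataclass(frozen=True)
class Criterion:
    text: str                        # one short, concrete statement of the ideal state
    check: Callable[[Output], bool]  # binary test: pass or fail, nothing in between


def is_well_formed(c: Criterion, min_words: int = 8, max_words: int = 12) -> bool:
    """Enforce the 8-to-12-word length rule for a criterion's statement."""
    return min_words <= len(c.text.split()) <= max_words


def evaluate(output: Output, criteria: List[Criterion]) -> Dict[str, bool]:
    """Return pass/fail per criterion; reject criteria that break the length rule."""
    results: Dict[str, bool] = {}
    for c in criteria:
        if not is_well_formed(c):
            raise ValueError(f"criterion must be 8-12 words: {c.text!r}")
        results[c.text] = bool(c.check(output))
    return results


# Hypothetical definition of "done" for a software project.
DONE = [
    Criterion("All unit tests on the main branch pass without failures",
              lambda o: o["failing_tests"] == 0),
    Criterion("The README explains installation steps for macOS, Linux, and Windows",
              lambda o: {"macOS", "Linux", "Windows"} <= o["readme_platforms"]),
    Criterion("Median API response time stays below 200 milliseconds under load",
              lambda o: o["median_latency_ms"] < 200),
]

output = {"failing_tests": 0, "readme_platforms": {"macOS", "Linux"}, "median_latency_ms": 180}
results = evaluate(output, DONE)
done = all(results.values())
print(done)  # → False
```

Here the README criterion fails, so the project is not “done,” and the failing criterion states exactly what is missing. That specificity is what makes hill-climbing toward the ideal state possible.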

3. From ambiguity to transparency

Companies have never really been able to see what goes on inside them. What does a process actually cost? How long does it really take? What is the quality of the output? Who is doing core work, and who is doing non-core “scaffolding” work?

Most organizations run on gut feel and spreadsheets. AI makes everything visible. Actual work, cost, and quality all become measurable in ways that were not possible before. Once you can see them, you can improve them. This holds for businesses, governments, three-person teams, or whatever else you focus on.

And the first thing transparency reveals is how much of the work is not core work at all.
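As an illustration of what that transparency might look like in practice, here is a small Python sketch that computes each person’s share of logged hours spent on non-core work. The log format, the activity categories, and the choice of which categories count as core are all assumptions, and the sample figures are invented for the example; they do not measure anything.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical activity log entry: (person, activity category, hours).
LogEntry = Tuple[str, str, float]

# Assumption: which categories count as core work. Everything else is "scaffolding".
CORE = {"analysis", "design_decision"}


def scaffolding_share(entries: Iterable[LogEntry]) -> Dict[str, float]:
    """Fraction of each person's logged hours spent on non-core work."""
    totals: Dict[str, float] = defaultdict(float)
    scaffolding: Dict[str, float] = defaultdict(float)
    for person, category, hours in entries:
        totals[person] += hours
        if category not in CORE:
            scaffolding[person] += hours
    return {p: scaffolding[p] / totals[p] for p in totals if totals[p] > 0}


sample_log = [
    ("ana", "tool_maintenance", 5.0),
    ("ana", "analysis", 5.0),
    ("ben", "template_updates", 4.0),
    ("ben", "environment_setup", 4.0),
    ("ben", "design_decision", 2.0),
]
shares = scaffolding_share(sample_log)
print(shares)  # → {'ana': 0.5, 'ben': 0.8}
```

The hard part in practice is not the arithmetic but the classification: deciding, honestly, which activities are core.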

4. Most of the work is just “scaffolding”

AI is revealing the reality: between 75% and 99% of knowledge work is actually “scaffolding,” that is, administrative overhead. In fields such as security testing, development, and consulting, most of your time goes into maintaining tools, workflows, templates, and knowledge bases. The truly deep thinking is a tiny fraction of the work, done by very few people in very little time.

AI completely shatters this “scaffolding” layer. Agent skills have shown that context, methodology, and tooling can all be packaged into a single skill, letting AI perform on par with, or even better than, most professionals. The core work is actually not that hard. The hard part is maintaining the scaffolding.

5. Dissemination of specialized knowledge to society

A “representation gap” exists between what experts know and what has been written down. Most expertise lives in people’s heads. Take Cliff, 62, who knows how everything works but has never written any of it down. When Cliff retires, that knowledge leaves with him.

What’s happening now is that expertise is flowing out of brains and into skills, SOPs, context files, and open-source projects. Once captured, it is never lost. It’s like peeing in a pool: you can’t take it back. Every skill released, every process documented, every expert report captured enters the collective knowledge base permanently. That makes every AI instance smarter: not just one, but all of them at the same time.

There is already a large industry dedicated to distilling expertise into models, though most people are unaware of it. This is a one-way ratchet. It takes a person 20 to 30 years to build deep expertise in a field, and people forget, retire, and quit. AI absorbs every piece of expertise it acquires instantly, never forgets it, and can reproduce it without limit. The gap between humans and AI in how fast they accumulate specialized knowledge keeps widening.

Implications

Autonomous optimization changes the speed of everything

The pace of progress in many fields is about to accelerate to an almost unimaginable rate. Being able to define what “good” is, measure it, and iterate automatically means doing overnight what used to take months of manual tuning. Autoresearch has demonstrated this in machine learning research. But the same applies to security programs, consulting deliverables, content pipelines, and hiring processes. Anything with a definable “ideal state” can be optimized autonomously.

Every actor runs the same cycle: businesses, governments, teams, and individuals. That means defining goals, letting agents execute, logging everything, collecting failures, improving autonomously, and updating SOPs. The first companies to adopt this model will move so quickly that the laggards simply won’t be able to compete.

Intention becomes the bottleneck

💡 People who can express their intentions clearly have a great advantage.

The scarce new skill is not writing code or writing prompts; it is the ability to say what you actually want. And the intent itself has to be good. The quality of the idea always matters most, but right behind it comes the ability to articulate that idea, turn it into a real goal, and run the entire company around it. Most leaders can’t do this, and neither can most companies. The first companies to solve this will point all their optimization tooling at real goals while everyone else is still talking about OKRs.

Everything will be transparent

We are about to see the world move from vague gut feel to transparent, optimizable components. There will be fewer and fewer places for frauds and industry “gatekeepers” to hide.

This also makes it harder to compete when selling products and services, because the first thing an agent will ask is, “What are your metrics?” Agents don’t care about marketing copy or customer testimonials; they care about real, verifiable performance data. If you don’t have that data, you’ll lose to competitors who do.

🔮 I call this “Magic to Excel.”

Scaffolding has become a commodity

The so-called “mystique” of certain fields and professions is dissolving, revealing that much of it was scaffolding all along; most people simply didn’t see it before. Think of knowing how to set up a particular development environment and keep it running long enough to actually write code. The same is true in law, consulting, and other high-paying industries.

⚖️ The process won’t be 100% complete, but once it reaches 95%, or even 99.99%, the remaining differences become insignificant in the grand scheme of things. Still, for those who bring unique insight and knowledge to this model, it provides a competitive advantage.

Expert knowledge becomes social infrastructure

Knowledge that was once available only to experts will soon be available to everyone and, most importantly, to AI. The advantage of having 50 years of experience in a field will disappear, because that knowledge is being extracted and aggregated by you and your peers around the world.

Overview and impact

The craziest part of all this is that these ideas interact with and amplify one another.

Not only can you improve all of these different components, but you can also improve the rate of improvement itself.

Every company, every government, every organization will eventually converge on the same cycle: define the goal, let the agents execute it, log everything, collect failures, and let the system optimize itself. The first companies to do this will compound their gains very quickly and pull ahead of everyone else by a wide margin.

Of all these ideas, this is the most important implication.

I really can’t express how crazy this is going to be.

Translator: Boxy.