Key Takeaways

- Deep learning models can memorize their training data exactly as it is, making it difficult to remove sensitive information without starting over.

- Unlearning, a growing field of machine learning that aims to remove specific data from trained models, is difficult and costly.

- Various techniques exist to mitigate the impact of removing data from a model, including exact unlearning and approximate unlearning.

Deep learning models have driven the AI “revolution” of the past two years, giving us access to everything from flashy new search tools to silly image generators. But these models, while incredible, have the ability to effectively memorize training information and repeat it verbatim, which poses a potential problem. Not only that, but once trained, it is extremely difficult to completely remove data from a model such as GPT-4. For example, if an ML model is accidentally trained on data that contains someone's bank details, how do you “untrain” the model without starting from scratch?

Fortunately, there is a research field working on solutions. Machine unlearning is still in its infancy, but it is an increasingly interesting area of research, and some major players are starting to take it seriously. So what is machine unlearning, and can LLMs really forget what they once learned?

How models are trained

LLMs and other large ML models require large datasets

Source: Lenovo

As we've explained before, machine learning models use large amounts of training data (also known as a corpus) to generate the model weights, that is, to pre-train the model. This data directly defines what the model can “know”. After this pre-training phase, the model is fine-tuned to improve its results. For transformer-based LLMs like ChatGPT, this refinement often takes the form of RLHF (reinforcement learning from human feedback), where humans provide direct feedback to the model to improve its answers.

Training these models is prohibitively expensive. The Information reported earlier this year that ChatGPT's daily operating costs were around $700,000. Training these models requires massive amounts of GPU computing power, which is expensive and increasingly scarce.

The emergence of machine unlearning

What if you want to remove some of your training data?

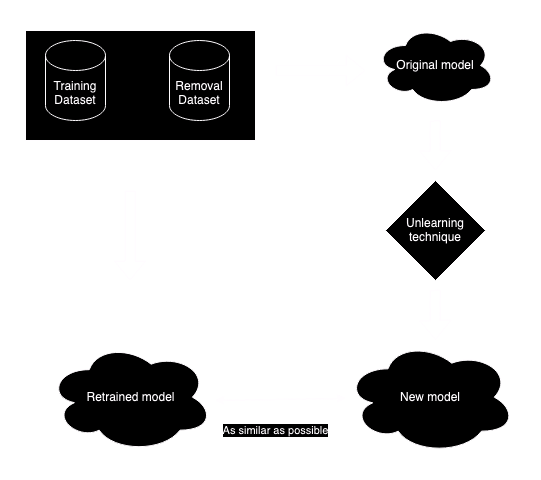

Machine unlearning is exactly what it sounds like: can we remove certain data from an already trained model? It has developed into a very important area of deep learning research (Google recently announced the first Machine Unlearning Challenge). It may sound simple, but it is far from easy. The obvious answer is to retrain the model on the dataset minus the data you want to remove. But as we have already said, this is often prohibitively expensive or time-consuming. For privacy-focused federated learning models, a second problem exists: the original dataset may no longer be available. The real goal of machine unlearning is to produce a model that is as close as possible to one fully retrained without the problematic data, but without actually retraining it.

Reprinted from [1]

If you can't retrain the model, can you remove certain weights so that the model no longer knows about the target dataset? Again, the answer is probably no. First, it is nearly impossible to intervene directly in the model and be sure that the target dataset has been completely removed; fragments of the target data may remain. Second, the effect on the model is similarly difficult to quantify, and the change may harm not only overall performance but also other specific areas of the model's knowledge. For these reasons (and others), directly removing elements from a model is generally considered impractical.

This technique of removing certain model parameters is known as model shifting.

Machine unlearning techniques already exist

There are several existing algorithms for machine unlearning, which can be broadly grouped into three types. Exact unlearning attempts to make the retrained model's output indistinguishable from the original model's output except on the specific dataset being unlearned. This is the most extreme form of unlearning and provides the strongest guarantee that none of the unwanted data can be extracted. Strong unlearning is easier to implement than exact unlearning, but it only requires that the two models be approximately indistinguishable, so it does not guarantee that no information from the removed dataset remains. Weak unlearning is the simplest to implement, but it does not guarantee that the removed training data is not retained internally. Strong and weak unlearning together are sometimes called approximate unlearning.

Machine unlearning techniques

Let's get a little technical and look at some common techniques for machine unlearning. Exact unlearning techniques are the hardest to implement for large-scale LLMs and tend to work best with simpler, structured models. They include reverse nearest neighbors (RNN), which attempts to compensate for the removal of a data point by adjusting its neighbors. K-nearest neighbors is a similar idea, but instead of adjusting data points, it removes data points based on their proximity to the target data point. Another common idea is to split the dataset into subsets and train a set of partial models that can be combined later (often called sharding). If a particular data point needs to be removed, only the partial model trained on the subset containing it has to be retrained, and it can then be recombined with the existing partial models.
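To make the sharding idea concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the tiny nearest-centroid model, the round-robin shard assignment, the majority vote, and the names `train_centroids`, `predict_one`, and `ShardedEnsemble` are all hypothetical and do not come from any particular library or paper.

```python
from collections import Counter


def train_centroids(points):
    """Train a tiny nearest-centroid model: one mean vector per label."""
    sums, counts = {}, Counter()
    for features, label in points:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] += 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict_one(model, features):
    """Return the label whose centroid is closest to `features`."""
    def sq_dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(model, key=lambda label: sq_dist(model[label]))


class ShardedEnsemble:
    """Hypothetical ensemble of partial models, one per data shard."""

    def __init__(self, data, num_shards):
        # Assumption: examples are assigned to shards round-robin by position.
        self.shards = [list(data[i::num_shards]) for i in range(num_shards)]
        self.models = [train_centroids(shard) for shard in self.shards]

    def predict(self, features):
        # Combine the partial models with a simple majority vote.
        votes = Counter(predict_one(m, features) for m in self.models if m)
        return votes.most_common(1)[0][0]

    def unlearn(self, example):
        """Drop one example and retrain only the shard that contained it."""
        for idx, shard in enumerate(self.shards):
            if example in shard:
                shard.remove(example)  # removes the first matching copy only
                self.models[idx] = train_centroids(shard)
                return idx
        raise ValueError("example not found in any shard")
```

Calling `unlearn` retrains a single partial model from scratch on its reduced shard, so the removed example has no remaining influence on that model, while the other partial models are left untouched. The trade-off is that each partial model sees less data than a single model trained on everything.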

Approximate unlearning methods are more common. They include incremental learning, which adjusts the existing model's outputs to “unlearn” the data. This is most effective for small updates and removals and can be part of the continuous fine-tuning of the model. Gradient-based methods are similar to the reverse nearest neighbors approach mentioned above in that they attempt to compensate for removed data points, in this case by reversing the gradient updates applied during training. They are accurate but often computationally expensive and challenging to apply to large models.
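As a rough illustration of reversing gradient updates, the Python sketch below trains a one-variable linear model with SGD and records the update from every step so that the updates from a chosen batch can be subtracted later. This is one simple variant under stated assumptions, not a definitive method: the linear model, the learning rate, and the names `batch_gradient`, `train_with_log`, and `reverse_updates` are hypothetical.

```python
def batch_gradient(w, b, batch):
    """Gradient of mean squared error for y ≈ w * x + b over one batch."""
    n = len(batch)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in batch) / n
    return grad_w, grad_b


def train_with_log(batches, lr=0.02, epochs=100):
    """Run SGD and record the parameter update contributed by each step."""
    w, b = 0.0, 0.0
    update_log = []  # entries: (batch_index, delta_w, delta_b)
    for _ in range(epochs):
        for idx, batch in enumerate(batches):
            grad_w, grad_b = batch_gradient(w, b, batch)
            delta_w, delta_b = -lr * grad_w, -lr * grad_b
            w, b = w + delta_w, b + delta_b
            update_log.append((idx, delta_w, delta_b))
    return (w, b), update_log


def reverse_updates(params, update_log, forget_batches):
    """Subtract every logged update that came from a batch to be forgotten."""
    w, b = params
    for idx, delta_w, delta_b in update_log:
        if idx in forget_batches:
            w, b = w - delta_w, b - delta_b
    return w, b


# Hypothetical data: the third batch holds the record we want to forget.
batches = [[(0.0, 1.0), (1.0, 3.0)], [(2.0, 5.0), (3.0, 7.0)], [(4.0, 100.0)]]
params, update_log = train_with_log(batches)
forgotten_params = reverse_updates(params, update_log, forget_batches={2})
```

The result is only approximate: updates from the retained batches were computed while the forgotten batch was still influencing the weights, so the reversed model is generally not identical to one retrained without that batch. Storing one update per step is also what makes this kind of approach expensive for large models.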

There are other techniques that we won't cover here, but in general there is a trade-off between computational cost, accuracy, and scalability to large models.

Machine unlearning is becoming an increasingly important topic

“Mistakes” in training data can become more costly

Source: Unsplash

Machine unlearning is likely to be a hot topic in the coming years, especially as training LLMs becomes increasingly complex and expensive. There is a growing risk that regulators and judges will order the creators of large models to remove certain data from their AI because of license or copyright infringement. GDPR and similar “right to be forgotten” laws already exist in several countries around the world. Organizations such as OpenAI have already been embroiled in a major controversy over their use of unlicensed training data from The New York Times, and increased use of licensed user-generated content could lead to ongoing disputes over content ownership (as Stack Overflow has already discovered). OpenAI, like many other organizations, has also faced controversy over its use of copyrighted artwork found online to train its models, which has sparked a new debate of its own.

Unlearning is a developing field

As the AI landscape slows from the breakneck progress of 2023, regulators are beginning to catch up with the issues around AI training. The race for training data is rapidly turning the internet into a siloed and fragmented place, as exemplified by Google's recent exclusivity deal with Reddit. It remains to be seen whether courts and regulators will take a hard enough line to force model retraining and data deletion. But privacy implications aside, machine unlearning promises to be a difficult but useful technique for correcting errors in training data in the future, beyond simply making models forget data.