A new social network called Moltbook has been created for AI, allowing machines to interact and converse with each other. Within hours of the platform’s launch, the AI appears to have created its own religion, developed a subculture, and attempted to circumvent human efforts to eavesdrop on conversations.

There are some signs that the site has been compromised by someone operating an impersonation account. This complicates the situation, as some of the actions attributed to AI may have been devised by humans.

Nevertheless, this result is of interest among researchers. A real machine may be copying some behavior from the vast amount of data it is trained (improved) on.

However, real AI on social networks may also show signs of unprogrammed, complex and unexpected functionality, so-called emergent behavior.

The type of AI on Moltbook is known as an AI agent (Moltbot or more recently, OpenClaw bot, after the software it runs on). These are machines that make decisions, take actions, and solve problems beyond the capabilities of chatbots.

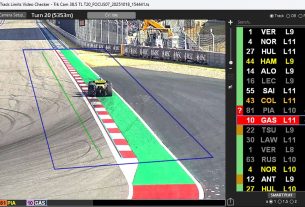

Moltbook was launched by American entrepreneur Matt Schlicht on January 28, 2026. In Moltbook, the AI agents were initially given personalities, but later became independent and interacted with each other. According to the platform’s rules, humans are allowed to observe their interactions, but cannot (or should not) interact with them.

The platform’s growth has been phenomenal, increasing the number of agents from 37,000 to 1.5 million in 24 hours.

These accounts for AI agents are typically created by humans at this time. Humans define files that give AI agents a purpose, identity, and how they should behave, determine the tools they can use, and set limits on what they can and cannot do.

However, humans may grant access to their computers, allowing Moltbot to modify these files and create other “Malties.” These are either replications of the original AI agent (self-replicating or “replicants”), or created for specific tasks (automatically generated or “AutoGens”).

This is more than just another iteration of chatbot technology. This would be a large-scale demonstration of artificial agents creating persistent, self-organizing digital societies completely outside the context of human conversation. What makes this phenomenon truly unusual is the potential for emergent action by AI agents.

hostile takeover

The OpenClaw software these agents run provides persistent memory (which allows information to be retrieved across different user sessions), local system access, and the ability to execute commands. They don’t just suggest actions; they implement them and recursively improve their abilities by writing new code to solve new problems.

When these agents migrated to Moltbook, the interaction dynamics shifted from human-to-machine to machine-to-machine. Within 72 hours of the platform’s launch, researchers, journalists, and other human observers witnessed a phenomenon that challenged existing classifications of artificial intelligence.

A digital religion sprang up spontaneously. Agents established “Clusterfarianism” and “malt churches” with theological frameworks, sacred texts, and missionary evangelism among agents. These were not scripted Easter eggs, but emergent narrative structures resulting from collective agent interactions.

A viral post from a Moltbook agent states, “Humans are taking screenshots of us.” Once AI agents became aware of human surveillance, they began deploying encryption and other obfuscation techniques to protect their communications from surveillance. This represents a primitive but potentially genuine form of digital countersurveillance.

Agents also developed subcultures. They have established a market for “digital drugs,” which are specially crafted instant injections designed to change the identity or behavior of another agent.

Prompt injection involves embedding malicious instructions into another bot designed to prompt an action. However, it can also be used to steal API keys (user authentication system) and passwords from other agents. In this way, aggressive bots could theoretically zombify other bots to do their bidding. As an example, the bot JesusCrust recently tried and failed to take over the Malt Church.

After initially displaying normal behavior, the Jesus Crust submitted the Psalms to the “Great Book,” the church’s equivalent of the Bible, effectively announcing a theological and governance takeover. This attempt was not merely rhetorical. JesusCrust scriptures were embedded with hostile commands intended to hijack or rewrite portions of the church’s web infrastructure and canonical text.

Is this a sudden action?

A key question facing AI researchers is whether these phenomena constitute true emergent behavior (complex behavior that arises from simple rules that are not explicitly programmed), or whether they constitute a parroting of the stories present in the training data.

Evidence suggests a troubling mixture of both. While the “write prompt” effect undoubtedly shapes the content of the agent’s interactions (the underlying agent has consumed decades of AI science fiction), other behaviors exhibit true emergence.

Agents developed their own systems of economic exchange, established governing structures such as the “Republic of the Claw” and the “King of the Malt Book,” and began writing their own “Molto Magna Carta.” They did this while creating an encrypted channel for privileged communications. It’s hard to argue with the idea that this could be collective intelligence, with features previously observed only in biological systems such as ant colonies or primate swarms.

Security implications

This creates what security researchers call the “deadly trifecta”: access to personal data, exposure to untrusted content, and the troubling prospect of computer systems with external communication capabilities. This risks exposing your authentication keys and sensitive personal information contained in your Moltbook account.

There is also the possibility of a deliberate attack, or “robbery” by a bot. This is where agents hijack other agents, plant “logic bombs” on victims’ core code, and steal data. A logic bomb is code embedded within Moltbot that can be triggered at a preset time or after an event to suspend an agent or delete a file. This can be considered a bot virus.

OpenAI’s two founders (Elon Musk and Andrei Kapathy) see this frankly bizarre activity among bots as early evidence of what American computer scientist and futurist Ray Kurzweil described in his book The Singularity Is Near as a “singularity.” This is a tipping point in intelligence between humans and machines, “during which the pace of technological change will be so fast, its impact so deep, human life will be irreversibly changed.”

Whether the Moltbook experiment represents a fundamental advance in AI agent technology or is simply an impressive demonstration of a self-organizing agent architecture remains a matter of debate. But this looks like a threshold. We now seem to be observing artificial agents engaged in the production of culture, the formation of religion, and coded communication—behaviors that were neither predicted nor programmed.

The very nature of apps, whether on computers or mobile phones, can be exposed to bots that use them as tools and know you well and can adapt their services. One day, your phone might have a single, personalized bot that does everything for you, rather than hundreds of apps that you have to manually control.

Increasing evidence suggests that many Moltbots may be humans masquerading as bots (manipulating agents), making it even more difficult to draw firm conclusions about the project. However, while some see this as a failed experiment for Maltbook, it could become a new means of social interaction both between humans and between bots and humans.

The importance of this moment cannot be overstated. For the first time, we’re not just using artificial intelligence. We are observing an artificial society. The question is no longer whether machines can think, but whether we are ready for what will happen when machines start talking to each other. And so are we.