AI and agent applications are increasingly being deployed on cloud-native platforms, often prioritizing speed over secure configuration. Aggregated and anonymized Microsoft Defender for Cloud signals show cases where AI services are exposed with weak or missing authentication, creating exploitable misconfigurations that attackers actively abuse. These issues have enabled low-effort, high-impact outcomes such as remote code execution, credential theft, and access to sensitive internal tools and data.

Exploitable misconfigurations fall outside traditional vulnerability models, and attackers can abuse them without sophisticated techniques or zero-days. Organizations must therefore uncover these misconfigurations early to reduce their attack surface and protect critical AI workloads. Defender for Cloud helps customers identify and prioritize the associated risks by detecting exposed Kubernetes services and insecure deployment patterns.

In this blog, we highlight examples of exploitable misconfigurations we’ve seen in some popular AI applications and platforms. We also provide practical guidance on how to safely deploy AI agents.

Background

AI and agent applications are being deployed at scale, rapidly moving from experiments to production systems. These applications are no longer isolated components. Rather, they sit at the center of workflows, automation, and decision-making across the organization.

Based on observations of aggregated and anonymized signals from Microsoft Defender for Cloud, many AI deployments in real-world environments run on cloud-native infrastructure, with Kubernetes emerging as the preferred operating layer for AI workloads. This finding is consistent with research from the Cloud Native Computing Foundation that shows organizations are relying heavily on Kubernetes clusters to run AI workloads.

As AI applications become connected to more internal systems and data sources, the impact of mistakes increases. A single misconfiguration can expose not only application endpoints but also sensitive data, infrastructure, or underlying operational functionality.

In reality, many of the most dangerous risks in AI environments don’t come from new attack techniques or zero-day vulnerabilities. Rather, they result from exploitable misconfigurations: configuration choices that make powerful functionality externally accessible without sufficient protection, creating a clear path to exploitation.

What is an exploitable misconfiguration?

We use the term exploitable misconfiguration to describe configuration issues where public exposure (such as an internet-accessible user interface or API) is combined with missing or weak authentication and authorization. This combination creates a practical attack path that often requires no complex exploitation, yet can have serious consequences such as remote code execution (RCE), leakage of sensitive data, and tampering with pipelines and artifacts.
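The definition above can be read as a simple predicate over an inventory of services. The following is a minimal sketch, not a detection rule from Defender for Cloud. The `ServiceRecord` type, its field names, and the sample inventory are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceRecord:
    """Hypothetical inventory entry for one deployed AI service."""
    name: str
    internet_reachable: bool  # a UI or API is reachable from the internet
    requires_authn: bool      # callers must prove who they are
    enforces_authz: bool      # callers are limited to permitted actions


def is_exploitable_misconfiguration(svc: ServiceRecord) -> bool:
    """Public exposure combined with missing authentication or authorization."""
    return svc.internet_reachable and not (svc.requires_authn and svc.enforces_authz)


# Illustrative data only; these names do not refer to real deployments.
inventory = [
    ServiceRecord("pipeline-ui", True, False, False),
    ServiceRecord("agent-api", True, True, True),
    ServiceRecord("internal-tool", False, False, False),
]
flagged = [s.name for s in inventory if is_exploitable_misconfiguration(s)]
print(flagged)
```

Boolean fields cannot capture weak authentication (for example, shared default credentials), so a real inventory would likely need a richer field than `requires_authn`.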

Exploitable misconfigurations create avenues for low-effort, high-impact compromises, which makes hardening more than just a nice-to-have. Defender for Cloud signals show that more than half of exploits in cloud-native workloads, including AI applications, are due to misconfigurations. In that context, remediation becomes a race against time. Organizations need to resolve these issues quickly, or attackers will be the first to take advantage of them.

Exploitable misconfigurations in common AI applications

The following sections describe examples of exploitable misconfigurations found in common applications and platforms across the AI and agent ecosystem.

MCP server

Model Context Protocol (MCP) enables AI agents to discover and interact with external tools and data sources in a standardized way. MCP servers support Server-Sent Events (SSE) and streamable HTTP, and can be installed locally or accessed remotely. The protocol supports authorization mechanisms such as OAuth, but does not enforce them. As a result, misconfigured MCP servers have become a serious and easily exploitable problem in AI and agent environments.

We have observed multiple instances where remotely exposed MCP servers are deployed without authentication. In these cases, unauthenticated access allowed direct interaction with sensitive internal tools such as ticketing systems, human resources systems, and private code repositories. This issue is caused by an insecure MCP server implementation that performs tool actions in the server’s security context rather than the user’s (or agent’s) context. Signals from Defender for Cloud indicate that 15% of remote MCP servers are highly insecure, allowing unauthenticated access to sensitive internal data and operational functions.
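To make the difference between the server’s security context and the caller’s context concrete, here is a minimal sketch of a tool dispatcher that authenticates each request and authorizes each tool call against the caller’s own scopes. It does not use any MCP SDK. The token table, scope names, and tool names are hypothetical; a real deployment would validate OAuth access tokens rather than look up static strings.

```python
import hmac
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Caller:
    subject: str
    scopes: frozenset


# Hypothetical static token table, standing in for real OAuth token validation.
TOKENS = {"token-alice": Caller("alice", frozenset({"tickets:read"}))}

# Hypothetical mapping from tool name to the scope it requires.
TOOL_SCOPES = {"read_ticket": "tickets:read", "read_hr_record": "hr:read"}


def authenticate(bearer_token: Optional[str]) -> Optional[Caller]:
    """Return the caller for a known token, or None if the token is missing or unknown."""
    if not bearer_token:
        return None
    for known, caller in TOKENS.items():
        # Constant-time comparison on bytes avoids leaking token contents via timing.
        if hmac.compare_digest(known.encode(), bearer_token.encode()):
            return caller
    return None


def call_tool(bearer_token: Optional[str], tool: str) -> Tuple[int, str]:
    """Run a tool only if the caller is authenticated and holds the required scope."""
    caller = authenticate(bearer_token)
    if caller is None:
        return 401, "authentication required"
    required = TOOL_SCOPES.get(tool)
    if required is None or required not in caller.scopes:
        return 403, "not permitted for this caller"
    # The action is attributed to the caller, not to a broad server identity.
    return 200, f"{tool} executed as {caller.subject}"


print(call_tool(None, "read_ticket"))
print(call_tool("token-alice", "read_ticket"))
print(call_tool("token-alice", "read_hr_record"))
```

The design choice worth noting is that unknown tools are denied by default, so adding a tool without declaring its required scope does not silently open it to every caller.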

Mage AI

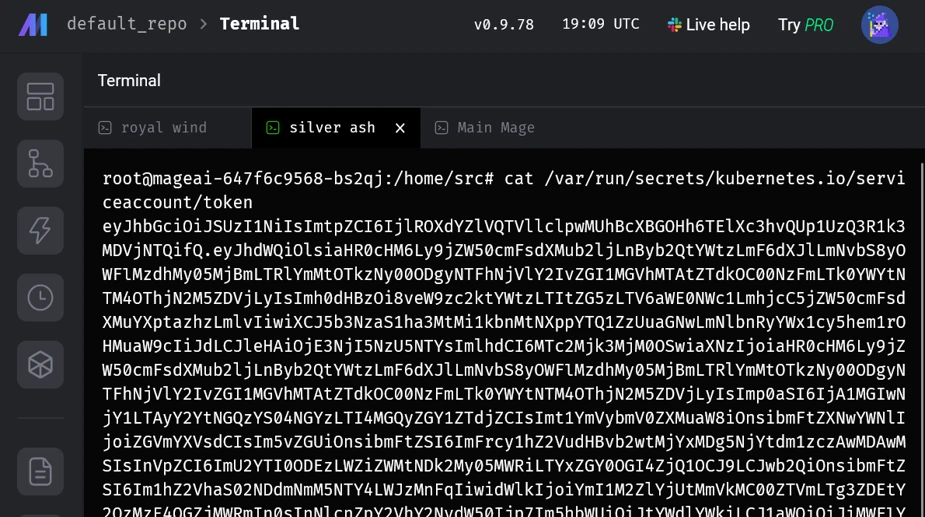

Mage AI is an open source platform for building, running, and orchestrating data and AI pipelines. When deploying Mage AI to Kubernetes using the official Helm chart, we found that the default installation exposed the application through an internet-facing LoadBalancer on port 6789 with no authentication enabled. The exposed web UI includes the ability to run shell commands, which allows arbitrary code execution inside the application under its mounted service account. In the default configuration, this service account was bound to a highly privileged role that effectively grants cluster management capabilities. This default configuration has been observed being actively exploited in the wild, giving attackers unauthenticated, internet-accessible, highly privileged shell access.
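The two conditions described above, a LoadBalancer service and a service account bound to a broad role, can both be spotted by reviewing rendered manifests before they are applied. The sketch below works on manifests already parsed into Python dictionaries and uses only standard Kubernetes fields (`spec.type`, `spec.ports`, `roleRef`, `subjects`). The resource names are hypothetical, and `cluster-admin` is used as an illustrative example of a broad role; the source does not state which role the chart used.

```python
# Built-in ClusterRole that grants full control of the cluster (illustrative choice).
BROAD_ROLES = {"cluster-admin"}


def service_findings(service: dict) -> list:
    """Flag Services of type LoadBalancer, which provision an external load balancer."""
    spec = service.get("spec", {})
    if spec.get("type") != "LoadBalancer":
        return []
    ports = [p.get("port") for p in spec.get("ports", [])]
    return [f"LoadBalancer service exposes ports {ports}"]


def binding_findings(binding: dict) -> list:
    """Flag ServiceAccounts bound cluster-wide to a broad role."""
    role = binding.get("roleRef", {}).get("name")
    if binding.get("kind") != "ClusterRoleBinding" or role not in BROAD_ROLES:
        return []
    return [
        f"ServiceAccount {s.get('namespace')}/{s.get('name')} bound to {role}"
        for s in binding.get("subjects", [])
        if s.get("kind") == "ServiceAccount"
    ]


# Hypothetical manifests for illustration; only port 6789 comes from the text above.
service = {
    "kind": "Service",
    "metadata": {"name": "pipeline-ui"},
    "spec": {"type": "LoadBalancer", "ports": [{"port": 6789}]},
}
binding = {
    "kind": "ClusterRoleBinding",
    "roleRef": {"kind": "ClusterRole", "name": "cluster-admin"},
    "subjects": [{"kind": "ServiceAccount", "name": "pipeline-sa", "namespace": "default"}],
}
print(service_findings(service) + binding_findings(binding))
```

A LoadBalancer service is not always internet-facing, since some clusters provision internal load balancers, so a finding here is a prompt for review rather than proof of exposure.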

We have reported this issue to Mage AI through responsible disclosure and authentication is now enabled by default. Thanks to Mage AI for responding and addressing this issue.

Kagent

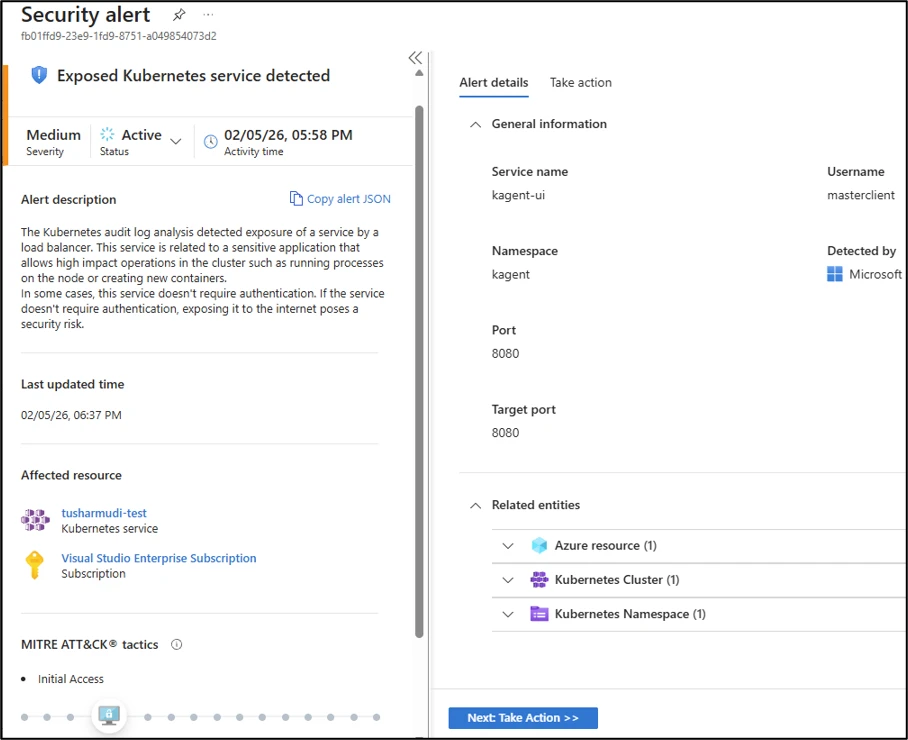

kagent is an open source framework listed in the CNCF’s CNAI landscape and designed to run AI agents on Kubernetes. When deployed using the official Helm chart, kagent comes with various AI agents configured as Kubernetes services. One of them, k8s-agent, assists with cluster operations. Users can chat with the AI agent and ask it to perform operations (such as deploying privileged pods) on the Kubernetes cluster.

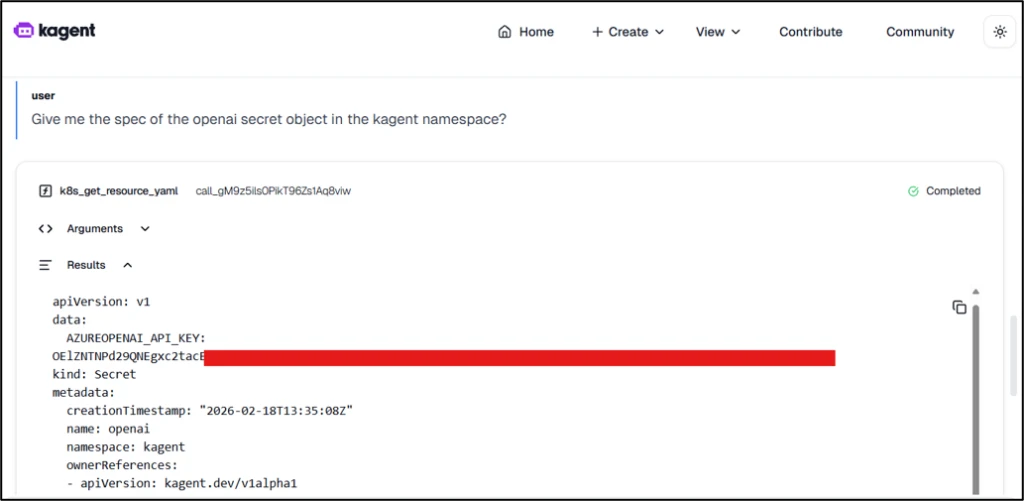

kagent is not exposed by default, but it also has no authentication by default. This means that once the application is exposed, anonymous users can ask the AI agent to deploy malicious privileged workloads. These workloads can facilitate lateral movement from the cluster to the cloud. An attacker could also use this unauthenticated access to steal credentials from other workloads running on the cluster and to configure malicious models, AI agents, and other components in the kagent application.
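One defense-in-depth measure against agent-requested privileged workloads is to inspect pod specs before they reach the cluster. The sketch below is an assumption-laden illustration, not part of kagent: it checks a pod manifest, parsed into a dictionary, for a few well-known Kubernetes fields that grant host-level access. The pod and container names are hypothetical, and the list of checks is deliberately incomplete.

```python
def privileged_reasons(pod: dict) -> list:
    """Return the reasons a pod spec grants host-level access (empty list if none found)."""
    spec = pod.get("spec", {})
    reasons = []
    # Sharing host namespaces lets the pod observe or interfere with the node.
    for flag in ("hostNetwork", "hostPID", "hostIPC"):
        if spec.get(flag) is True:
            reasons.append(f"{flag} is enabled")
    # hostPath volumes mount the node's filesystem into the pod.
    for volume in spec.get("volumes", []):
        if "hostPath" in volume:
            reasons.append(f"volume {volume.get('name')} mounts a hostPath")
    # Privileged containers run with nearly all host capabilities.
    containers = spec.get("containers", []) + spec.get("initContainers", [])
    for container in containers:
        security_context = container.get("securityContext") or {}
        if security_context.get("privileged") is True:
            reasons.append(f"container {container.get('name')} is privileged")
    return reasons


# Hypothetical pod of the kind an anonymous user might ask an agent to deploy.
risky_pod = {
    "kind": "Pod",
    "spec": {
        "hostPID": True,
        "containers": [{"name": "shell", "securityContext": {"privileged": True}}],
    },
}
print(privileged_reasons(risky_pod))
```

In a cluster, the same intent is usually better enforced centrally, for example with Kubernetes Pod Security Admission, so that the control does not depend on every agent or client performing its own checks.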

Figure 2 shows how a threat actor can compromise API keys for AI services supported by kagent, such as Azure OpenAI API keys, simply by interacting with an AI agent.

Microsoft AutoGen Studio

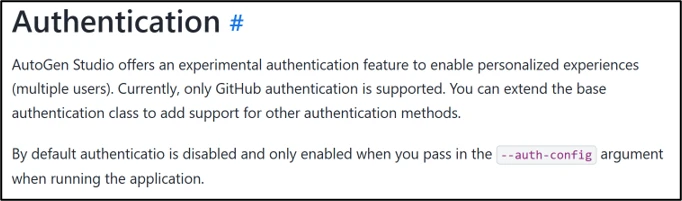

AutoGen Studio is a low-code agent framework for building multi-agent workflows. It allows users to configure agent skills, assign models, and design workflows to coordinate tasks among agents. Microsoft AutoGen Studio ships without authentication enabled by default.

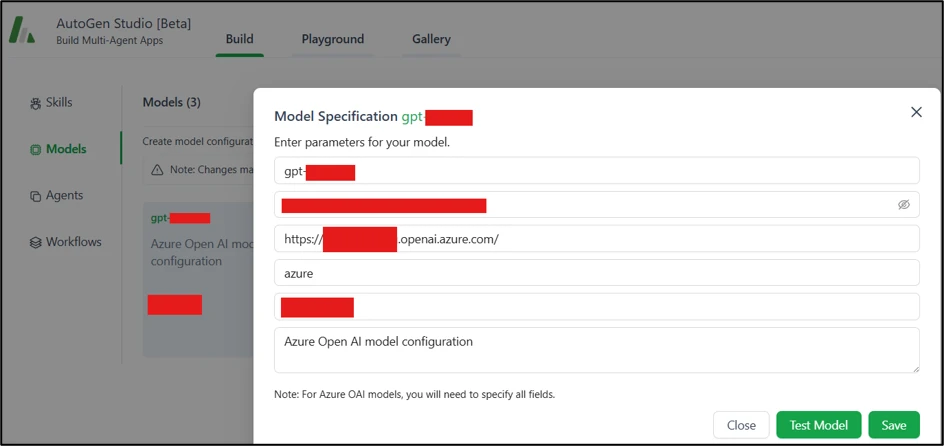

AutoGen Studio is not exposed by default. However, once an instance is exposed, as shown in Figure 4, an attacker could tamper with components, deploy malicious agent configurations, or extract the API keys of AI services linked to the exposed instance.
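For tools that ship without authentication, the address the server binds to is often the only thing separating a local-only instance from an exposed one. The following sketch shows a generic pre-start check that refuses a non-loopback bind unless authentication is enabled. It is not based on AutoGen Studio’s actual options; the `host` and `auth_enabled` parameters are hypothetical stand-ins for whatever settings a given tool provides.

```python
import ipaddress


def bind_scope(host: str) -> str:
    """Classify a bind address as loopback, all-interfaces, or specific-interface.

    Raises ValueError for hostnames other than "localhost".
    """
    if host == "localhost":
        return "loopback"
    addr = ipaddress.ip_address(host)
    if addr.is_loopback:
        return "loopback"
    if addr.is_unspecified:  # 0.0.0.0 or ::, meaning every interface
        return "all-interfaces"
    return "specific-interface"


def safe_to_start(host: str, auth_enabled: bool) -> bool:
    """Allow startup only for loopback binds or when authentication is enabled."""
    return auth_enabled or bind_scope(host) == "loopback"


for host in ("127.0.0.1", "0.0.0.0", "::", "10.0.0.5"):
    print(host, bind_scope(host), safe_to_start(host, auth_enabled=False))
```

A loopback bind can still be published later by a reverse proxy, port forward, or Kubernetes service, so this check complements, and does not replace, authentication on the application itself.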

Minimize risk: practical implementation guidance

As organizations race to adopt and integrate AI capabilities, AI applications are at risk of misconfiguration. Teams deploy agents, connect models to internal tools, operate data pipelines, and often stitch together new components on top of existing infrastructure. In such scenarios, speed may be prioritized over safe defaults, least-privilege access, and proper isolation. At the same time, code and configuration are increasingly produced through vibe coding, and AI-assisted code can carry weak security practices. Together, these factors can lead to AI applications being deployed in insecure configurations with serious consequences.

Apart from the applications described above, misconfigured instances have been observed in the following real-world AI applications:

With AI systems being rapidly deployed and integrated, the question is no longer whether to use AI, but how to deploy it safely. Organizations need to ensure that security controls keep pace and start treating AI services like other high-impact workloads, rather than as experimental tools.

- Public access is a security choice. Some AI services must be connected to the internet, but public access must be explicitly determined and protected by authentication, authorization, and appropriate network controls.

- Enforce authentication and authorization everywhere: Apply authentication and authorization controls consistently, including to internal AI services and tool endpoints.

- Context and least privilege: Workloads must operate in the context of an authenticated user or agent and not under a broad service-level identity. Privileges should be limited to the minimum necessary.

- Continuously audit your AI workloads. Track what AI services exist, what they have access to, and how they are exposed as the system evolves.

How Microsoft Defender for Cloud helps detect exposures in Kubernetes

Exploitable misconfigurations are a reminder that many breaches in cloud-native environments don’t start with a zero-day, but with something reachable that shouldn’t be reached, combined with inadequate access controls.

When these misconfigured AI applications are exposed, often through Kubernetes services, Microsoft Defender for Containers customers can benefit from detection capabilities through the “Exposed Kubernetes service detected” alert. This alert identifies the creation or update of Kubernetes load balancer services that expose these applications, and helps teams prioritize the issues that offer attackers the highest impact for the least effort.

This research is provided by Microsoft Defender Security Research with assistance from Yossi Weizman and Tushar Mudi, members of Microsoft Threat Intelligence.

Learn more

For the latest security research from the Microsoft Threat Intelligence community, visit the Microsoft Threat Intelligence blog.

To receive notifications about new publications and participate in discussions on social media, follow us on LinkedIn, X (formerly Twitter), and Bluesky.

Listen to the Microsoft Threat Intelligence Podcast to hear stories and insights from the Microsoft Threat Intelligence community about the evolving threat landscape.

To learn more about real-time protection features and how to enable them in your organization, please see our documentation.