Important points

- Responsible AI promotes fairness, transparency, and accountability in decision-making systems.

- Organizations can use frameworks such as the OECD AI Principles and the NIST AI Risk Management Framework for guidance on responsible AI.

- Responsible AI implementation includes training teams, auditing systems, and maintaining human oversight.

AI is changing how and where we work, learn, and solve problems. From personalized learning platforms to automated enrollment systems, AI is now permeating nearly every corner of higher education and industry.

But with this power comes great responsibility. When an AI system decides who gets into a university, recommends a career path, or analyzes research data, its decisions must be fair, explainable, and trustworthy. Biased algorithms can deny opportunities to qualified students. An opaque system can lead to life-altering decisions being made without anyone understanding why.

That’s why responsible AI is so important. It’s not just about creating smarter tools. It’s about building systems that are fair, accountable, and trustworthy.

What is responsible AI and why does it matter today?

Responsible AI refers to the practice of designing, developing, and using artificial intelligence in an ethical, fair, transparent, and accountable manner. It is about ensuring that AI systems respect human rights, operate within legal boundaries, and are consistent with societal values.

Think of it as a framework that guides you on how to build AI that is reliable and beneficial to everyone affected.

Why is this important now? Because AI is now making decisions that previously required human judgment. Universities are using AI to sort through thousands of applications. Medical systems use it to recommend treatments. Companies use it to screen job applicants.

When these systems work well, they can process information faster and more consistently than humans. But failures due to bias, mistakes, or lack of transparency can have serious consequences. Hiring algorithms trained on historical data can perpetuate gender bias. Generative AI tools can spread misinformation that damages reputations and misleads students.

The stakes are real. That’s why building AI responsibly is essential to maintaining trust and ensuring technology serves everyone equitably.

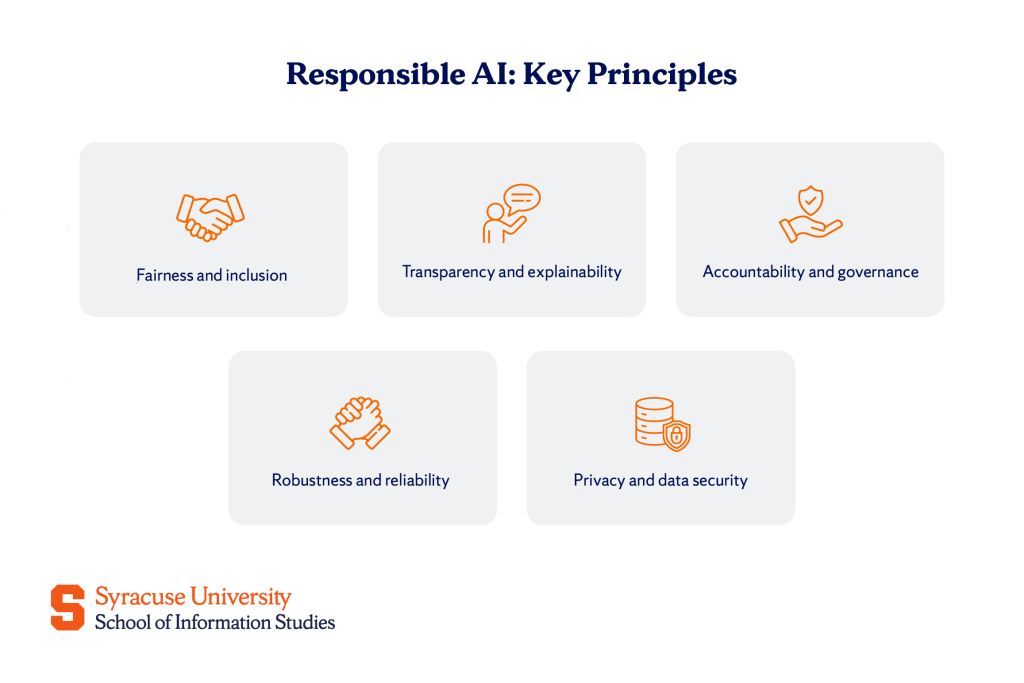

Key principles of responsible AI

Building responsible AI requires following core principles to protect people and maintain trust. The most important fundamentals are:

Equity and inclusion

AI systems must treat everyone equally, regardless of race, gender, age, or background. This means actively working to eliminate bias from both the data that trains these systems and the algorithms that make decisions.

Algorithmic bias occurs when AI learns from data that reflects historical inequalities. For example, if a hiring system learns from decades of applications submitted when most senior positions were held by one demographic group, it may incorrectly conclude that candidates from other backgrounds are less qualified.

Organizations can address this issue by using diverse and representative datasets and by regularly testing for bias across different groups. At Syracuse University’s iSchool, students learn how to audit the fairness of algorithms. This skill will become increasingly important as more companies become aware of the ethical and legal implications of biased AI.
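
One simple way to test for bias across groups is to compare how often each group receives a favorable decision. The sketch below is illustrative only: the group labels, the sample data, and the function names are hypothetical, and a real fairness audit would look at more than one metric.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable decisions for each group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        if decision:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable decision
    return min(rates.values()) / highest

# Hypothetical audit sample: (group label, was the applicant shortlisted?)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates)
print(round(ratio, 2))
```

A ratio well below 1.0 means one group is selected far less often than another. A common rule of thumb from US employment guidance (the "four-fifths rule") treats a ratio under 0.8 as a signal to investigate further, not as proof of discrimination.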

Transparency and explainability

People have the right to know when AI is making decisions about their lives and to understand how those decisions are made.

Transparency means being upfront about when AI is being used. Explainability goes further: AI systems must be able to present their reasoning in a way that people can understand. This is especially important in high-stakes situations such as college admissions, medical diagnosis, and criminal justice.

Explainable AI (XAI) techniques help break down complex machine learning models into understandable components. Rather than treating AI as a “black box,” these approaches reveal which factors influenced a decision and how much weight each factor carries.
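
For a simple linear scoring model, the idea of showing which factors influenced a decision, and by how much, can be sketched directly. The feature names and weights below are hypothetical, and this is not how any particular XAI library works; more complex models need dedicated attribution techniques.

```python
def explain_linear_score(weights, features, bias=0.0):
    """Return the total score and each feature's contribution to it.

    For a linear model, a feature's contribution is its weight times its value.
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    # Rank factors by how strongly they pushed the score in either direction.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked

# Hypothetical admissions-style scoring weights and one applicant's features.
weights = {"gpa": 2.0, "essay_score": 1.0, "missed_deadline": -3.0}
applicant = {"gpa": 3.5, "essay_score": 4.0, "missed_deadline": 1.0}

score, ranked = explain_linear_score(weights, applicant)
print(score)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.1f}")
```

The ranked list is the explanation: it tells a reviewer that GPA raised this score the most and that the missed deadline lowered it.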

This builds trust. When students understand how an AI tutoring system identifies learning gaps, they are more likely to act on its feedback. When faculty can see how an AI tool recommends research collaborations, they can examine the logic and apply their own judgment.

Transparency also supports compliance. Regulations like the EU AI Act increasingly require organizations to document and explain their AI systems. Building explainability from the beginning makes meeting these requirements much easier.

Accountability and governance

If an AI system makes a mistake, someone needs to take responsibility for fixing it and preventing it from happening again. That’s where accountability comes into play.

Responsible AI requires a clear governance structure. Organizations need to specify who will oversee the AI system, who will review its decisions, and who will respond when problems arise. Many companies are now establishing AI ethics committees: cross-functional teams that review proposed AI projects before they are launched.

These committees ask questions such as: Is this system consistent with our values? Can it cause harm? Are we prepared to explain how it works if asked by regulators or those affected?

Accountability also means creating an audit trail. AI systems must record their decisions and the data used to make them. This allows organizations to investigate errors, identify patterns of bias, and improve the system over time.
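
A minimal sketch of what one audit-trail entry might contain is shown below. The field names, the `make_audit_record` and `append_record` helpers, and the in-memory log are all assumptions made for illustration; a production system would write to durable, access-controlled storage and might store a reference to the inputs instead of the inputs themselves.

```python
import hashlib
import json
from datetime import datetime, timezone

def make_audit_record(model_version, inputs, decision, reviewer=None):
    """Build one audit entry describing a single automated decision."""
    # Serialize with sorted keys so identical inputs always hash identically.
    canonical = json.dumps(inputs, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "inputs": inputs,
        "decision": decision,
        "human_reviewer": reviewer,  # None means no human has reviewed it yet
    }

def append_record(log_lines, record):
    """Append the record as one JSON line (here, to an in-memory list)."""
    log_lines.append(json.dumps(record, sort_keys=True))

# Hypothetical usage: log one decision from a hypothetical model version.
audit_log = []
record = make_audit_record("screening-model-0.1", {"gpa": 3.5, "essay_score": 4}, "shortlist")
append_record(audit_log, record)
print(audit_log[0])
```

Recording the model version alongside each decision matters because it lets an investigator tell whether a pattern of errors began with a particular release.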

For students interested in AI governance, this is a growing career field. Organizations need people who can bridge technology, ethics, and policy. This is exactly the kind of interdisciplinary thinking that Syracuse iSchool values.

Robustness and reliability

AI systems must behave consistently and securely, even in the face of unexpected inputs and adversarial attacks.

Robustness means that the system does not break or produce meaningless results when it encounters data that differs slightly from the data it was trained on. A robust AI admissions tool should still work well when an application has missing fields or unusual formatting.
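
One hedged illustration of handling missing or oddly formatted fields: validate each application before it reaches the model, and send problem cases to a person instead of crashing or guessing. The field names, the 0.0 to 4.0 GPA range, and the `validate_application` helper are hypothetical.

```python
# Hypothetical required fields and the type each one should convert to.
REQUIRED_FIELDS = {"applicant_id": str, "gpa": float}

def validate_application(raw):
    """Return (cleaned_fields, issues). A non-empty issues list means
    the application should go to human review, not automated scoring."""
    issues = []
    cleaned = {}
    for field, expected_type in REQUIRED_FIELDS.items():
        value = raw.get(field)
        if value is None or value == "":
            issues.append(f"missing field: {field}")
            continue
        try:
            cleaned[field] = expected_type(value)
        except (TypeError, ValueError):
            issues.append(f"unreadable value for {field}: {value!r}")
    # Assumed valid range for this illustration only.
    if "gpa" in cleaned and not 0.0 <= cleaned["gpa"] <= 4.0:
        issues.append(f"gpa out of range: {cleaned['gpa']}")
    return cleaned, issues

# A GPA supplied as text still parses; a missing GPA is flagged, not fatal.
print(validate_application({"applicant_id": "a1", "gpa": "3.7"}))
print(validate_application({"applicant_id": "a2"}))
```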

Reliability means that the system produces accurate and consistent results over time. It doesn’t suddenly change behavior or degrade quality without explanation.

Security is also important. AI systems can be vulnerable to attacks that trick them into making incorrect decisions. For example, adding small, imperceptible changes to an image can fool a computer vision system. In sensitive applications such as cybersecurity and autonomous systems, these vulnerabilities can have serious consequences.
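
The effect of small, targeted changes can be shown with a toy linear classifier. This is a simplified sketch with made-up weights and inputs, not an attack on a real vision model, but it follows the same principle: nudge every input value slightly in the direction that hurts the score most.

```python
def score(weights, bias, features):
    """Linear decision score: positive means 'accept', negative means 'reject'."""
    return bias + sum(w * x for w, x in zip(weights, features))

def sign(value):
    """Return 1, -1, or 0 depending on the sign of value."""
    return (value > 0) - (value < 0)

def adversarial_nudge(weights, features, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score."""
    return [x - epsilon * sign(w) for w, x in zip(weights, features)]

# Hypothetical model and input, chosen only for illustration.
weights = [1.0, -2.0, 0.5]
bias = 0.0
original = [0.2, 0.1, 0.3]
perturbed = adversarial_nudge(weights, original, epsilon=0.05)

print(score(weights, bias, original) > 0)   # decision on the original input
print(score(weights, bias, perturbed) > 0)  # decision after the small nudge
```

No single value changes by more than 0.05, yet the decision flips. Testing with perturbed inputs like this is one way to check how fragile a model's decisions are.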

Building robust AI requires rigorous testing across a variety of scenarios, continuous monitoring in real-world use, and security measures that protect against both accidental errors and intentional attacks.

Privacy and data security

Responsible AI protects people’s personal information every step of the way, from data collection to storage to use.

This starts with data minimization: collecting only the information you actually need. If an AI tutoring system can work effectively with anonymized student data, there is no reason to store personally identifiable information.
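
A minimal sketch of data minimization for a tutoring record follows. The field names, the `minimize_record` helper, and the secret key are hypothetical. Note that replacing an ID with a keyed hash is pseudonymization, not full anonymization: anyone holding the key can reproduce the link to the student, so the key itself needs protecting.

```python
import hashlib
import hmac

# Hypothetical: the only fields the tutoring model is assumed to need.
NEEDED_FIELDS = ("quiz_score", "topic")

def minimize_record(record, secret_key):
    """Keep only the needed fields and swap the student ID for a keyed hash."""
    pseudonym = hmac.new(
        secret_key, record["student_id"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    slim = {field: record[field] for field in NEEDED_FIELDS}
    slim["student_ref"] = pseudonym
    return slim

# Hypothetical raw record containing more personal data than the model needs.
raw_record = {
    "student_id": "s-1024",
    "name": "Example Student",
    "email": "student@example.edu",
    "quiz_score": 82,
    "topic": "fractions",
}

slim_record = minimize_record(raw_record, secret_key=b"replace-with-a-real-secret")
print(sorted(slim_record))
```

The name and email never leave the intake step, while the stable `student_ref` still lets the system track one learner's progress over time.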

Strong safeguards are required when systems handle personal data. This includes encryption, access controls, and secure storage practices. It also means obtaining meaningful consent: helping people understand what data is collected and how it is used.

Privacy considerations also apply to AI output. Generative AI systems trained on large datasets can potentially leak personal information from the training data. Responsible developers test for these risks and implement safeguards to prevent leaks.