In the rapidly changing world of artificial intelligence (AI), the only constant is change. It seems that almost as soon as a new model is released, it is made obsolete by a competitor. This breakneck pace brings a steady stream of enhancements and new features, along with better performance and higher productivity. However, despite their growing importance, TinyML applications are not progressing at the same rate.

Machine learning engineer Felix Galindo believes the reason we haven’t seen as much innovation on small hardware platforms is not poor model performance, but a lack of supporting infrastructure. Cloud-based models are updated frequently, whereas TinyML models are typically frozen at deployment, with no easy way to update them. Galindo is trying to change this with an incremental model update system called TinyMLDelta.

Moving forward with changes

TinyMLDelta aims to solve one of the biggest pain points in embedded AI: the difficulty of updating machine learning models running on microcontrollers. Traditional over-the-air (OTA) updates require sending the entire TensorFlow Lite Micro model (often tens or even hundreds of kilobytes) to every device in a fleet. This consumes bandwidth, increases data costs, causes flash wear, and slows iteration. As a result, most TinyML deployments stay on their initial model, even as improvements become available.

The solution proposed by Galindo is to send only the differences. Instead of replacing the entire model, TinyMLDelta generates compact “patches” that modify the model already stored on the device. In real-world testing with a 67 KB sensor model, only 383 bytes changed between versions. The resulting patch is just 475 bytes, small enough to send cheaply and apply quickly, even on the smallest MCUs.
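To put those numbers in perspective, a 475-byte patch is roughly 0.7% of a 67 KB model, or about 140 times less data per device than a full re-flash. The C sketch below illustrates the general idea of applying a delta in place. It assumes a hypothetical patch format made of (offset, length, bytes) overwrite records; `patch_record_t` and `apply_patch` are illustrative names, not TinyMLDelta's actual format or API, and a real delta encoder would also need to handle models that grow or shrink between versions.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical patch record: overwrite `len` bytes starting at `offset`.
 * Illustrative only; this is NOT TinyMLDelta's real wire format. */
typedef struct {
    uint32_t offset;      /* byte offset into the stored model image */
    uint16_t len;         /* number of bytes to overwrite */
    const uint8_t *data;  /* replacement bytes */
} patch_record_t;

/* Apply all records to `model` (size `model_len`) in place.
 * Returns 0 on success, or -1 if any record would write out of bounds.
 * Every record is validated before any byte is modified, so a malformed
 * patch leaves the model untouched. */
int apply_patch(uint8_t *model, size_t model_len,
                const patch_record_t *recs, size_t n_recs) {
    /* Pass 1: bounds-check every record without writing anything. */
    for (size_t i = 0; i < n_recs; i++) {
        if ((size_t)recs[i].offset > model_len ||
            recs[i].len > model_len - recs[i].offset) {
            return -1;
        }
    }
    /* Pass 2: copy the replacement bytes into place. */
    for (size_t i = 0; i < n_recs; i++) {
        memcpy(model + recs[i].offset, recs[i].data, recs[i].len);
    }
    return 0;
}
```

Validating the whole patch before writing is a small design choice that matters on devices with no spare copy of the model: a truncated or corrupted patch is rejected up front instead of leaving a half-modified model behind.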

However, this system is more than a lightweight diffing mechanism. Galindo emphasizes that the guardrails are the most important part of the design. TinyMLDelta checks compatibility at multiple levels, including interpreter ABI version, operator set, tensor I/O schema, and required memory size. If the new model is incompatible with the existing firmware, TinyMLDelta automatically rejects the patch rather than risk bricking the device. Updates use an A/B slot mechanism with crash-safe journaling, so the device either completes the update fully or safely rolls back if power is lost partway through.
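The two guardrails described above can be sketched in C as follows. This is a minimal illustration under stated assumptions: the metadata structs, the operator bitmask, the schema hash, and the three-state journal are all hypothetical and do not reflect TinyMLDelta's real data structures. The journal also lives in RAM here; on a real device it would be persisted to flash, and the final commit would have to be a single atomic write.

```c
#include <stdint.h>

/* Hypothetical requirements a model declares (illustrative field names). */
typedef struct {
    uint16_t abi_version;     /* interpreter ABI the model was built for */
    uint32_t op_mask;         /* bit i set => operator i is required */
    uint32_t io_schema_hash;  /* hash of input/output tensor shapes and types */
    uint32_t arena_bytes;     /* tensor arena size the model needs */
} model_reqs_t;

/* Hypothetical description of what the running firmware provides. */
typedef struct {
    uint16_t abi_version;
    uint32_t op_mask;         /* operators compiled into the firmware */
    uint32_t io_schema_hash;  /* schema the application code expects */
    uint32_t arena_bytes;     /* tensor arena reserved in RAM */
} firmware_caps_t;

typedef enum {
    COMPAT_OK = 0,
    COMPAT_BAD_ABI,
    COMPAT_MISSING_OPS,
    COMPAT_IO_MISMATCH,
    COMPAT_ARENA_TOO_SMALL
} compat_result_t;

/* Run every check before touching flash; the first failure rejects the patch. */
compat_result_t check_compat(const model_reqs_t *m, const firmware_caps_t *fw) {
    if (m->abi_version != fw->abi_version) return COMPAT_BAD_ABI;
    if ((m->op_mask & ~fw->op_mask) != 0) return COMPAT_MISSING_OPS;
    if (m->io_schema_hash != fw->io_schema_hash) return COMPAT_IO_MISMATCH;
    if (m->arena_bytes > fw->arena_bytes) return COMPAT_ARENA_TOO_SMALL;
    return COMPAT_OK;
}

/* Minimal A/B journal: the new model is written to the inactive slot, and
 * only the commit step changes which slot the device runs from. */
typedef enum { J_IDLE = 0, J_WRITING, J_VERIFIED } journal_state_t;

typedef struct {
    uint8_t active_slot;      /* 0 = slot A, 1 = slot B */
    journal_state_t state;    /* progress of the in-flight update */
} journal_t;

void journal_begin(journal_t *j)    { j->state = J_WRITING; }
void journal_verified(journal_t *j) { j->state = J_VERIFIED; }

/* Switch to the freshly written slot and clear the journal.
 * On real flash, both fields must be updated in one atomic record. */
void journal_commit(journal_t *j) {
    j->active_slot ^= 1u;
    j->state = J_IDLE;
}

/* Called at boot. A verified update is committed; an update interrupted
 * before verification is discarded, so the old slot stays active. */
void journal_recover(journal_t *j) {
    if (j->state == J_VERIFIED) {
        journal_commit(j);
    } else {
        j->state = J_IDLE;
    }
}
```

In this sketch, an update would call `check_compat` first, then `journal_begin`, write and verify the inactive slot, call `journal_verified`, and finally `journal_commit`. Power loss at any point before `journal_verified` leaves the device on its previous model after `journal_recover` runs at boot.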

The future of TinyML

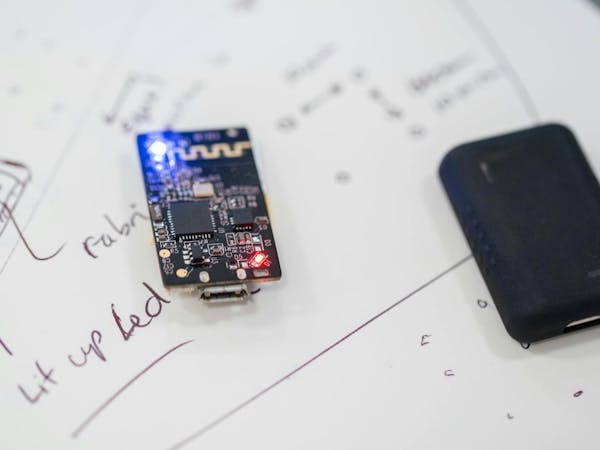

TinyMLDelta currently supports TensorFlow Lite Micro and includes a complete POSIX/macOS demo environment that simulates flash behavior. Planned additions include cryptographic patch verification using SHA-256 and AES-CMAC, model versioning metadata, and OTA reference implementations for popular embedded platforms such as Zephyr, the Arduino Uno R4 WiFi, and Particle’s Tachyon.

With billions of microcontrollers deployed around the world and more edge AI applications emerging every year, the ability to safely and efficiently update models may prove to be as important as the models themselves. Galindo sees TinyMLDelta as an early but important building block towards a complete on-device AI lifecycle.