Today, we are excited to announce support for DoWhile loops in Amazon Bedrock Flows. With this new capability, you can build iterative, condition-based workflows directly within your Amazon Bedrock flows, using prompt nodes, AWS Lambda functions, Amazon Bedrock Agents, inline code, Amazon Bedrock Knowledge Bases, Amazon Simple Storage Service (Amazon S3), and other Amazon Bedrock nodes within the loop structure. The feature removes the need for complex workarounds and enables sophisticated iteration patterns that use the full range of Amazon Bedrock Flows components. Tasks such as content refinement, recursive analysis, and multi-step processing can now seamlessly integrate AI model calls, custom code execution, and knowledge retrieval across repeated cycles. By providing loop support across a variety of node types, this feature simplifies generative AI application development and accelerates enterprise adoption of complex, adaptive AI solutions.

Organizations using Amazon Bedrock Flows can now use DoWhile loops to design and deploy workflows for building more scalable and efficient generative AI applications fully within the Amazon Bedrock environment, with the following capabilities:

- Iteration – Repeat operations until specific conditions are met, enabling dynamic content refinement and recursive improvement

- Conditional logic – Implement sophisticated decision-making within a flow based on AI output and business rules

- Complex use cases – Manage multi-step generative AI workflows that require repeated execution and refinement

- Builder friendly – Create and manage loops through both the Amazon Bedrock API and the AWS Management Console, with traces available for inspection

- Observability – Track loop iterations, conditions, and execution paths seamlessly

This post explains the benefits of this new feature and shows how to use DoWhile loops in Amazon Bedrock Flows.

Benefits of DoWhile loops in Amazon Bedrock Flows

Using DoWhile loops in Amazon Bedrock Flows offers the following benefits:

- Simplified flow control – Create sophisticated iterative workflows without complex orchestration or external services

- Flexible processing – Enable dynamic, condition-based execution paths that adapt to AI outputs and business rules

- Enhanced development experience – Help users build complex iterative workflows through an intuitive interface, without the need for external workflow management

Solution overview

The following sections show how to create a simple Amazon Bedrock flow using DoWhile loops with Lambda functions. Our example presents a practical application: a flow that generates a blog post on a given topic iteratively until certain acceptance criteria are met. The flow illustrates the power of combining different types of Amazon Bedrock Flows nodes within a loop structure: prompt nodes generate and refine the blog post, inline code nodes run custom Python code to analyze the output, and S3 storage nodes store each version of the blog post during the loop for reference. The DoWhile loop continues to run until the quality of the blog post meets the condition set in the loop controller. This example shows how different flow nodes can work together in a loop to progressively transform data until the desired conditions are met, providing a foundation for understanding more complex iterative workflows with other node combinations.
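To make the control flow concrete, the following is a minimal Python sketch of the do-while pattern described above. It does not call Amazon Bedrock: `generate`, `rate`, and `refine` are hypothetical stand-ins for the prompt nodes and the inline code node, and the in-memory `versions` list stands in for the S3 storage node. The threshold of 9 and the iteration cap of 5 are illustrative values only.

```python
from typing import Callable, List, Tuple


def run_do_while(
    topic: str,
    generate: Callable[[str], str],
    rate: Callable[[str], float],
    refine: Callable[[str], str],
    threshold: float = 9.0,
    max_iterations: int = 5,
) -> Tuple[str, List[str]]:
    """Refine a draft until its rating reaches `threshold` or the cap is hit.

    The loop body always runs at least once (do-while semantics): the draft
    is stored and rated first, and the continue condition is checked after.
    """
    if max_iterations < 1:
        raise ValueError("max_iterations must be at least 1")

    draft = generate(topic)       # hypothetical content-generation step
    versions: List[str] = []      # stands in for the S3 storage node
    iteration = 0

    while True:
        iteration += 1
        versions.append(draft)    # keep every version for later comparison
        rating = rate(draft)      # hypothetical analysis + rating extraction
        # Exit when the continue condition (rating < threshold) no longer
        # holds, or when the iteration cap has been reached.
        if rating >= threshold or iteration >= max_iterations:
            break
        draft = refine(draft)     # hypothetical refinement step

    return draft, versions
```

The returned draft is always the last version that was stored and rated, so the caller never receives an unrated revision, even when the iteration cap ends the loop.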

Prerequisites

Before implementing the new feature, make sure you have the following:

Once these components are in place, you can proceed to use Amazon Bedrock Flows with DoWhile loop functionality in your generative AI use case.

Create a flow using the DoWhile loop node

Complete the following steps to create the flow:

- On the Amazon Bedrock console, choose Flows under Builder tools in the navigation pane.

- Create a new flow, for example, dowhile-loop-demo. For detailed instructions on creating a flow, see Amazon Bedrock Flows is now generally available with enhanced safety and traceability.

- Add a DoWhile loop node.

- Add additional nodes according to the solution workflow (described in the next section).

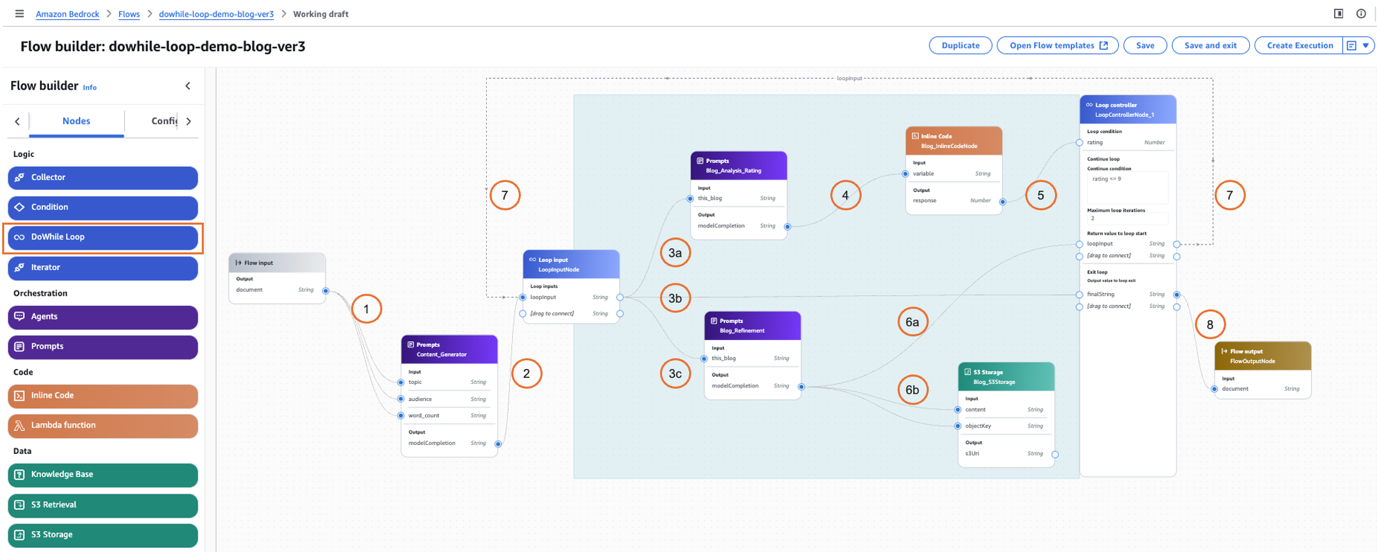

Amazon Bedrock offers a variety of node types for building prompt flows. In this example, we use a DoWhile loop node that invokes several other node types to build a generative AI application that creates a blog post on a specific topic and checks its quality on every iteration. The flow has one DoWhile loop node. This new node type is on the Nodes tab in the left pane, as shown in the preceding screenshot.

DoWhile loop workflow

A DoWhile loop consists of two parts: the loop and the loop controller. The loop controller validates the logic of the loop and decides whether to continue or exit the loop. In this example, each time the loop runs, it executes the prompt, inline code, and S3 storage nodes.

Let's step through this flow, as shown in the previous screenshot:

- A user asks for a blog post on a specific topic (for example, using the following prompt: {"Topic": "AWS Lambda", "Audience": "…", "Word_Count": "500"}). This prompt is sent to the prompt node (content_generator).

- The prompt node (content_generator) writes a blog post based on the prompt using one of the available LLMs (such as Amazon Nova or Anthropic's Claude), and the post is sent to the loop input node. This is the entry point to the DoWhile loop node.

- Three steps happen in tandem:

  - The loop input node forwards the blog post content to another prompt node (blog_analysis_rating), which rates the post against the criteria stated in its prompt. The output of this prompt node is JSON. The output of a prompt node is always of type String. You can modify the prompt to get different kinds of output depending on your needs; for example, you can ask the LLM to output a single rating number.

  - The blog post is sent to the flow output on every iteration. This becomes the final version when the loop condition is no longer met (which exits the loop) or when the maximum number of loop iterations is reached.

  - At the same time, the loop input node forwards the output of the previous prompt node (content_generator) to another prompt node (blog_refinement). This node recreates or modifies the blog post based on feedback from the analysis.

- The output of the prompt node (blog_analysis_rating) is fed into the inline code node, which extracts the rating and returns it as a number (or returns other information needed to check the condition) for the loop controller to use as an input variable (for example, rating).

  Python code in the inline code node must be treated as untrusted, and appropriate parsing, validation, and data handling must be implemented.

- The output of the inline code node is fed into the loop condition in the loop controller and validated against the condition set in the continue loop. In this example, the condition checks whether the generated blog post has a rating below 9. You can check up to five conditions. Additionally, the maximum loop iterations parameter makes sure that the loop does not continue indefinitely.

- This step consists of two parts:

  - The prompt node (blog_refinement) forwards the newly generated blog post to loopinput in the loop controller.

  - The loop controller stores this version of the post in Amazon S3 for future reference and for comparing the different versions generated.

- This path is executed if one of the conditions in the continue loop is satisfied and the maximum number of loop iterations has not been reached. If the loop continues, the modified blog post from the earlier step is forwarded to the input field of the loop input node as loopinput, and the loop runs again.

- The final output is produced after the DoWhile loop exits, either because the continue condition is no longer met or because the maximum number of iterations is completed. The output is the final version of the blog post.
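The rating extraction performed in the inline code step can be sketched as follows. This is a minimal illustration, assuming the analysis prompt returns a JSON string with a numeric `rating` field. The field name, the 0 to 10 range, and the fallback value are assumptions made for this sketch, not details of the original flow. Because the model output is untrusted, the function validates every step and falls back to a low rating, which keeps the loop running until the iteration cap rather than exiting on bad data.

```python
import json
from typing import Any


def extract_rating(analysis_output: str, fallback: float = 0.0) -> float:
    """Return the numeric rating from a JSON string, or `fallback` if invalid."""
    try:
        parsed: Any = json.loads(analysis_output)
    except (TypeError, ValueError):
        # Not a string, or not valid JSON (JSONDecodeError is a ValueError).
        return fallback

    if not isinstance(parsed, dict):
        return fallback

    value = parsed.get("rating")  # assumed field name
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return fallback

    # Reject out-of-range values; NaN also fails this comparison.
    if not 0 <= value <= 10:
        return fallback

    return float(value)
```

For example, `extract_rating('{"rating": 8, "feedback": "Add an example"}')` yields `8.0`, while malformed or out-of-range input yields the fallback.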

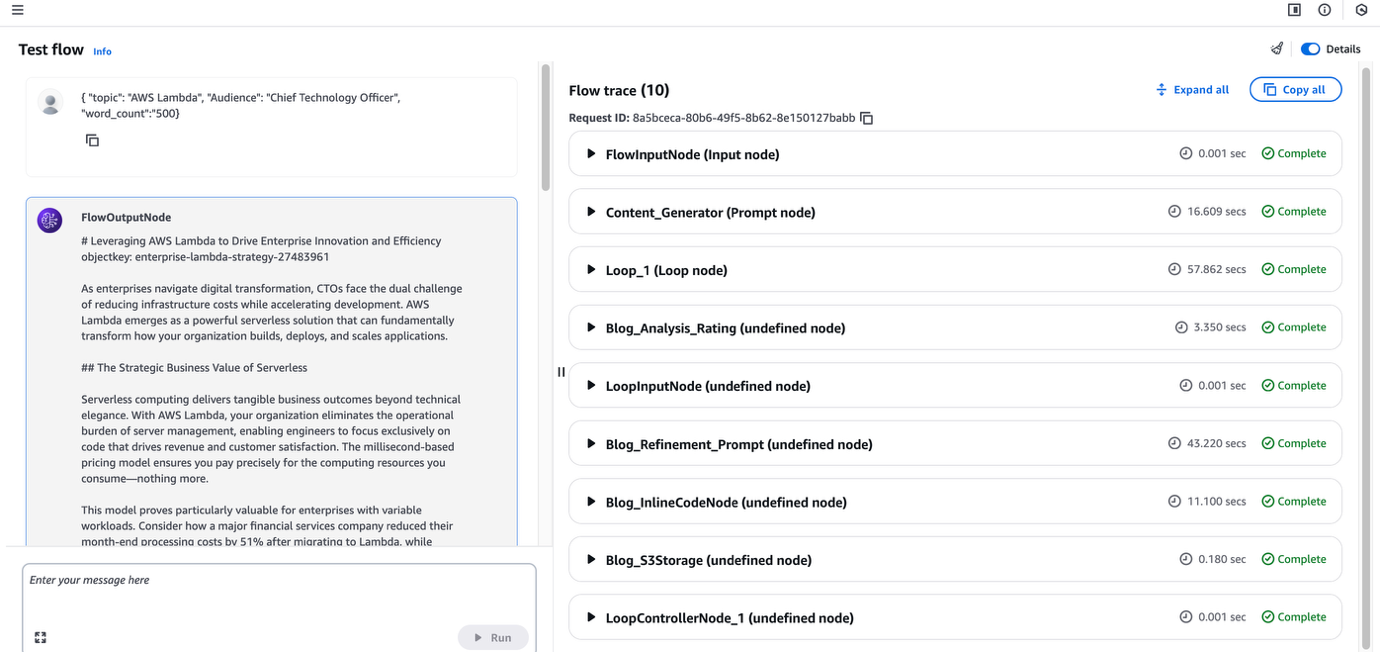

You can see the output in the preceding screenshot. The system also provides access to node execution traces, which highlight each processing step, real-time performance metrics, and any issues that occurred during the execution of the flow. You can enable traces using the API and send them to Amazon CloudWatch Logs. In the API, set the enableTrace field to true in the InvokeFlow request. Each flowOutputEvent in the response is returned alongside a flowTraceEvent.

You have now successfully created and executed an Amazon Bedrock flow using the DoWhile loop node. You can also run this flow programmatically using the Amazon Bedrock API. For more information on how to configure flows, see Amazon Bedrock Flows is now generally available with enhanced safety and traceability.
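As a rough illustration of the trace setting described above, the following Python sketch builds an InvokeFlow request with `enableTrace` set to true and separates output events from trace events in the response stream. It assumes the boto3 `bedrock-agent-runtime` client; the input node name `FlowInputNode`, the output name `document`, and the helper function names are assumptions for this sketch, so check them against your own flow definition. The flow and alias identifiers are placeholders you would supply.

```python
from typing import Any, Dict, Iterable, List, Tuple


def build_invoke_flow_request(
    flow_id: str, flow_alias_id: str, document: Any
) -> Dict[str, Any]:
    """Build keyword arguments for InvokeFlow with tracing enabled."""
    return {
        "flowIdentifier": flow_id,
        "flowAliasIdentifier": flow_alias_id,
        "enableTrace": True,
        "inputs": [
            {
                "content": {"document": document},
                "nodeName": "FlowInputNode",   # assumed input node name
                "nodeOutputName": "document",  # assumed output name
            }
        ],
    }


def split_events(stream: Iterable[Dict[str, Any]]) -> Tuple[List[Any], List[Any]]:
    """Separate flow outputs from trace events in a response stream."""
    outputs: List[Any] = []
    traces: List[Any] = []
    for event in stream:
        if "flowOutputEvent" in event:
            content = event["flowOutputEvent"].get("content", {})
            outputs.append(content.get("document"))
        elif "flowTraceEvent" in event:
            traces.append(event["flowTraceEvent"])
    return outputs, traces


def invoke_with_trace(
    flow_id: str, flow_alias_id: str, document: Any
) -> Tuple[List[Any], List[Any]]:
    """Invoke the flow and return (outputs, traces). Requires AWS credentials."""
    import boto3  # imported lazily so the helpers above work without AWS access

    client = boto3.client("bedrock-agent-runtime")
    response = client.invoke_flow(
        **build_invoke_flow_request(flow_id, flow_alias_id, document)
    )
    return split_events(response["responseStream"])
```

Keeping the request builder and the event splitter separate from the network call makes both easy to exercise with sample data before pointing them at a real flow.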

Considerations

When using DoWhile loop nodes in Amazon Bedrock Flows, keep the following considerations in mind:

- DoWhile loop nodes don't support nested loops (loops within loops)

- Each loop controller can evaluate up to five input conditions for its exit criteria

- You must specify a maximum iteration limit to prevent infinite loops and allow for controlled execution
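The three considerations above can be expressed as a simple pre-flight check. The following Python sketch is illustrative only: `LoopSpec`, its fields, and the `"DoWhileLoop"` type label are hypothetical and do not mirror the Amazon Bedrock API schema. It shows one way to catch a configuration that violates these limits before you attempt to create the flow.

```python
from dataclasses import dataclass, field
from typing import List, Optional

MAX_CONDITIONS = 5  # limit stated in the considerations above


@dataclass
class LoopSpec:
    """Hypothetical, simplified description of a DoWhile loop node."""
    name: str
    continue_conditions: List[str]
    max_iterations: Optional[int] = None
    body_node_types: List[str] = field(default_factory=list)


def validate_loop(spec: LoopSpec) -> List[str]:
    """Return a list of human-readable problems; empty means the spec passes."""
    problems: List[str] = []

    # Nested loops are not supported.
    if "DoWhileLoop" in spec.body_node_types:
        problems.append(f"{spec.name}: nested loops are not supported")

    # At least one condition, and no more than five.
    count = len(spec.continue_conditions)
    if count < 1 or count > MAX_CONDITIONS:
        problems.append(
            f"{spec.name}: expected 1 to {MAX_CONDITIONS} conditions, got {count}"
        )

    # A maximum iteration limit is required.
    if spec.max_iterations is None or spec.max_iterations < 1:
        problems.append(f"{spec.name}: a positive max_iterations is required")

    return problems
```

A spec with a single `rating < 9` condition, a cap of 5 iterations, and only prompt, inline code, and S3 storage nodes in its body would pass with no problems reported.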

Conclusion

The integration of DoWhile loops in Amazon Bedrock Flows marks a significant advancement in iterative workflow capabilities, enabling sophisticated loop-based processing that can include prompt nodes, inline code nodes, S3 storage nodes, Lambda functions, agents, DoWhile loop nodes, and knowledge base nodes. This enhancement directly addresses the need of enterprise customers to handle complex, repetitive tasks within AI workflows, helping developers create adaptive, condition-based solutions without the need for external orchestration tools. By providing support for iterative patterns, DoWhile loops help organizations build more sophisticated AI applications that can refine output, perform recursive operations, and implement complex business logic directly within the Amazon Bedrock environment. This powerful addition to Amazon Bedrock Flows democratizes the development of advanced AI workflows, making them more accessible and manageable across organizations.

DoWhile loops in Amazon Bedrock Flows are now available in all AWS Regions where Amazon Bedrock Flows is supported, except for the AWS GovCloud (US) Regions. To get started, open the Amazon Bedrock console or use the Amazon Bedrock API to start building flows with Amazon Bedrock Flows. To learn more, see Create your first flow in Amazon Bedrock and Track each step in your flow by viewing its trace in Amazon Bedrock.

We're excited to see the innovative applications you build with these new capabilities. As always, we welcome your feedback through AWS re:Post for Amazon Bedrock or your usual AWS contacts. Join the generative AI builder community at community.aws to share your experiences and learn from others.

About the authors

Shubhankar Sumar is a Senior Solutions Architect at AWS, where he specializes in architecting generative AI-powered solutions for enterprise software and SaaS companies across the UK. With a strong background in software engineering, Shubhankar excels at designing secure, scalable, and cost-effective multi-tenant systems on the cloud. His expertise lies in seamlessly integrating cutting-edge generative AI capabilities into existing SaaS applications, helping customers stay at the forefront of innovation.

Jesse Manders is a Senior Product Manager for Amazon Bedrock, the AWS generative AI developer service. He works at the intersection of AI and human interaction, with the goal of creating and improving generative AI products and services to meet our needs. Previously, Jesse held engineering team leadership roles at Apple and Lumileds and was a senior scientist at a Silicon Valley startup. He has an MS and a PhD from the University of Florida and an MBA from the University of California, Berkeley, Haas School of Business.

Eric Lee is a Software Development Engineer II at AWS, where he builds core capabilities for Amazon Bedrock and SageMaker to support generative AI applications at scale. His work focuses on designing safe, observable, and cost-effective systems that help developers and businesses adopt generative AI with confidence. He is passionate about improving the developer experience of building with large language models, making it easier to integrate AI into production-ready cloud applications.
