“Mom and Dad, we’re checking in,” a person who appears to be a U.S. military officer says to the camera. “I’m okay, okay? There’s no need to worry.”

Surrounded by snow and ice, she continues: “It’s freezing out here and it’s soaking wet. But for the safety of the American people and to make America great again, I’m going to stand my ground. Am I still following you guys and giving you the thumbs up? Stay safe.”

This is not a U.S. service member, or even a real person. The video was generated by artificial intelligence.

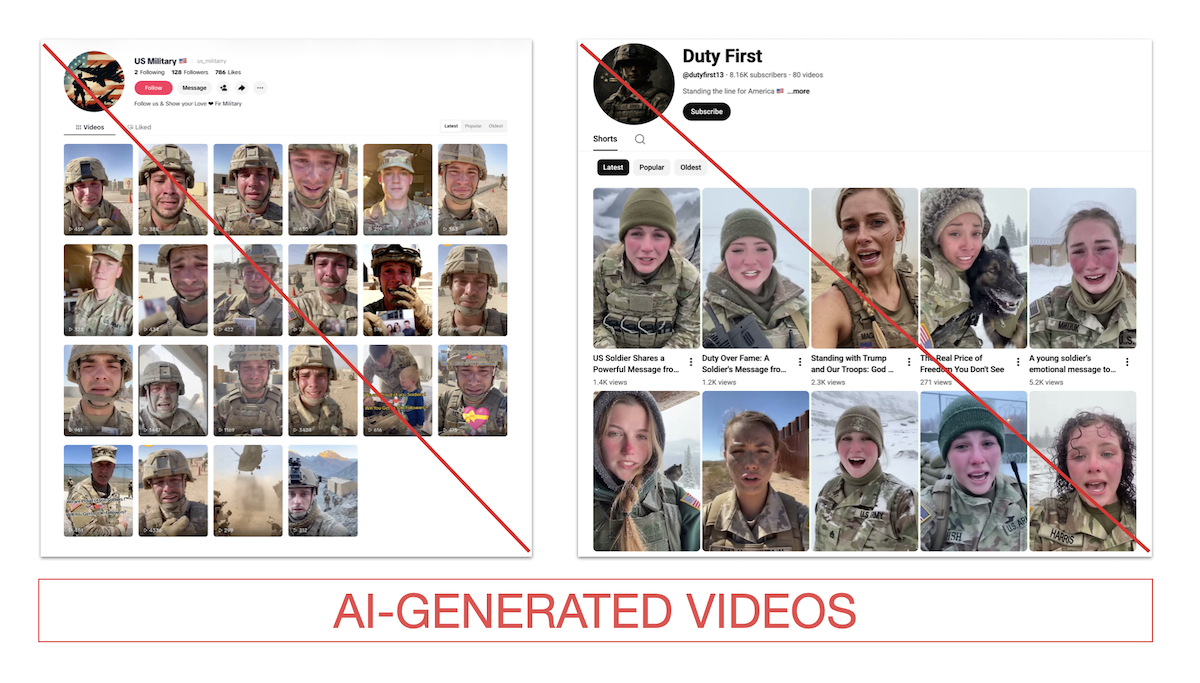

This is one of many fake videos showing similar scenarios, in which U.S. service members cry and appear to be in dire situations. Some show female soldiers in uniform sniffling and crying in barren areas while speaking directly to their parents. Another shows a male soldier tearfully talking to his partner.

Fake videos of military personnel crying and asking viewers for sympathy surfaced in other conflicts, including the Russia-Ukraine war, and have continued during the Iran war. As of April 15, 13 U.S. military personnel had been killed in the war.

Creators have financial incentives to produce emotional content. Viral videos can generate income for creators through programs on social media platforms. A video could also lure users to a website, prompt them to make a purchase, or be used to steal personal information.

PolitiFact found at least 11 accounts on TikTok, Facebook, and YouTube that posted primarily AI-generated videos of military personnel, with a combined following of more than 174,000. The fake military videos received a total of 29.6 million views, with the average number of views for each account ranging from 628 to 466,192. Some videos carry labels that make clear they are AI-generated, but even then, people commenting on them don’t seem to realize they are fake.

PolitiFact reached out to Meta, YouTube, and TikTok about the accounts. The accounts we asked about were no longer available as of April 15.

A TikTok spokesperson said the platform’s community guidelines prohibit AI-generated content that presents misleading information about matters of public importance, such as ongoing conflicts, and the account that shared it was removed.

A Facebook spokesperson said the company removed the accounts we reported for violating its policies, adding that the pages were not monetized.

YouTube also removed the channel we asked about for violating its spam policies, a spokesperson said.

Shannon Razadin, CEO of the nonprofit Military Family Advisory Network, said military families encounter such videos and question what is real.

“These videos can heighten anxiety by presenting scenarios that may not reflect reality, compounding the fears of families already experiencing many unknowns,” she said.

Mary Bennett Doty, deputy program director for We the Veterans & Military Families, said such content can increase inflammatory rhetoric and deepen division.

Videos show emotional service members talking about their families and fallen soldiers

Accounts often use a single type of background and script for their videos. Many stick to male-only or female-only videos, or pivot from one to the other.

(Screenshots from TikTok and YouTube)

For example, one page called “Legacy of American Soldiers” featured videos of women crying and speaking over the sound of jet planes, with smoke in the background.

In one video, posted by a TikTok account called “Usa Soldier Life” and viewed more than 764,000 times, a man stands in the foreground, with a flag-draped coffin in the distance, and speaks tearfully.

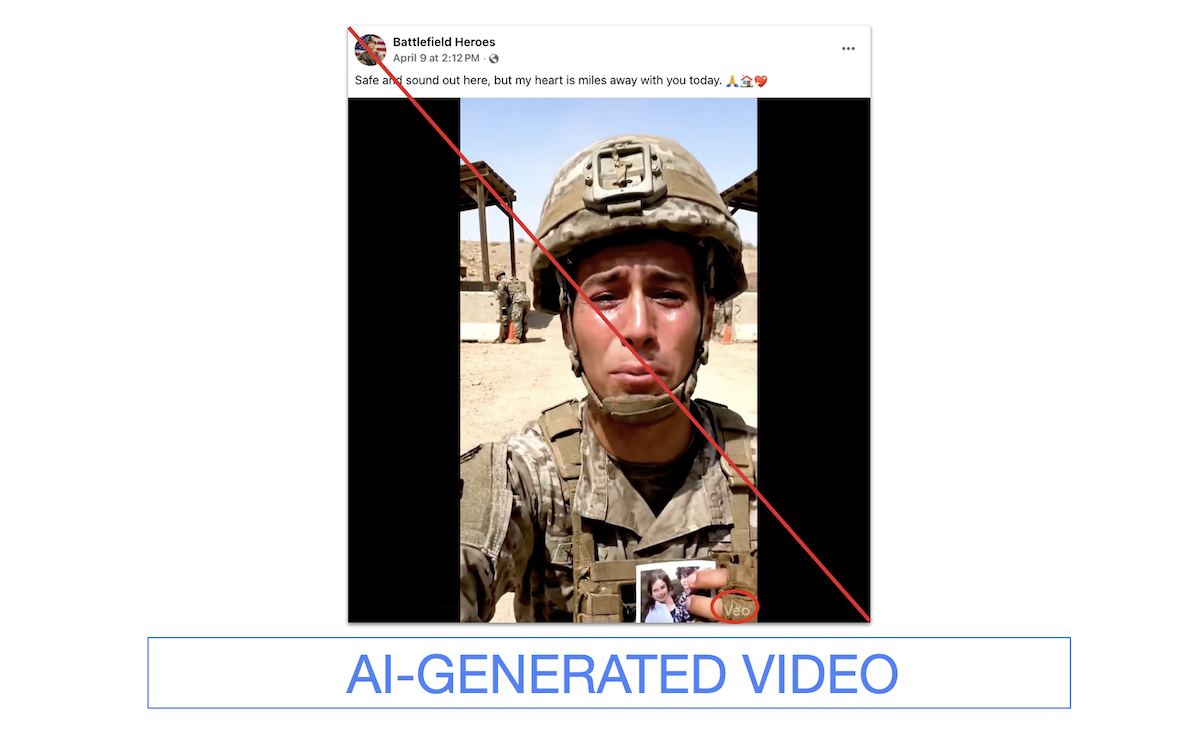

Other pages feature mostly male military personnel, often holding up pictures, perhaps of loved ones, and addressing their partners. A caption on one of the pages says the video is a soldier’s final message to his family.

Accounts often attempt to monetize content

While many accounts do not appear to be asking for money, some give viewers a way to contact the account owner, such as a phone number or website, which can be used to sell products or redirect people to phishing scams.

These accounts follow the trend of using AI to create synthetic “influencers” and other deepfake content related to politics.

For example, the profile of Jessica Foster, a purported military woman who gained 1 million followers on Instagram by posting photos of herself with President Donald Trump and other politicians, was AI-generated. The account linked to another page that sold exclusive fetish content.

Such accounts can make money through audience engagement with their content or by directing users to other websites that sell products. Daniel Schiff, assistant professor of technology policy at Purdue University, said people who interact with them are at risk of cyberattacks and information theft.

“Accounts may post sympathetic or inflammatory information to exploit people’s emotions or get attention,” he said. “If the account has enough followers, it may post links to external content ranging from selling clothing to selling intimate content.” Schiff said many of these accounts are driven by financial motivations.

In one video, an AI-generated character cries and says that he’s thinking about his home, and while crying, advertises a “shop link” in his account’s bio. There was no link in the profile. A Facebook page called “Brave Marine,” which has 31,000 followers, features a similar video of a male soldier and links to a website that posts job listings for maritime companies. The phone number listed for registration on the website has been previously associated with fraudulent campaigns.

Razadin said the content undermines the credibility of the information sources military families rely on.

“Many public agencies, such as military branches, support agencies, or military service organizations like us, also use social media to communicate verified content to military families,” she said.

How to identify fake videos of service members

If you have any doubts about the authenticity of a video, check the account that posted it. If the account consistently posts videos of different people saying the same thing, that’s one indicator that the videos may have been generated by AI.

The date a profile was created and the number of posts it has made can also be signals. Some of the accounts we observed were created around the beginning of the Iran war and have been posting consistently ever since.

“Many of these accounts are relatively new and post with a fairly uniform pattern of influence,” Schiff said.

Some suspicious accounts mainly post videos of attractive young women in uniform. Gregory Daddis, a history professor at Texas A&M University who served in the U.S. Army for 26 years, said that even though the women in the videos may have muddy or scratched faces, they are still portrayed as attractive.

“The almost perfectly waxed eyebrows seem to be saying something to me,” he said.

Uniforms can also be a giveaway. In one April 12 video, a female service member says, “Dad, Christmas is coming up. I miss you so much, but for the safety of the American people, I have to hang in there. Will you show your support by tapping the little red plus on my profile? Well, I love you both. Stay safe.”

If you look closely at her uniform, you’ll see that the name on it is nonsensical and that “US” is written with three periods.

Illegible text and spelling or grammar mistakes are common in these videos. Daddis said that in some AI-generated videos, rank insignia are out of place or feature inaccurate symbols. On combat uniforms, rank insignia are worn on a patch at the center of the chest, but some videos show them off to the side or omit them.

Some videos still have a watermark indicating they were created by AI. One example is the watermark of Veo, Google’s AI video generator. A watermark can be cropped out, but a video’s length can also be a clue that it was generated by AI. For example, Veo typically allows you to create videos up to 8 seconds in length.

“Be wary of content that relies heavily on emotion but lacks specificity,” Razadin said.

Staff writer Maria Briceño contributed to this report.