Many users are concerned about what will happen to your data when using cloud-based AI chatbots such as CHATGPT, Gemini, and DeepSeek. Some subscriptions claim to prevent providers from using personal data entered into chatbots, but we know if those terms actually exist. Additionally, cloud AI requires a stable, high-speed internet connection. But if you don't have an internet connection, what? Well, there's always an alternative.

One solution is to run your AI application locally. However, this requires that your computer or laptop have the right amount of processing power. Additionally, the number of standard applications currently relying on AI is increasing. However, if your laptop's hardware is optimized for AI use, you can work faster and more effectively with your AI applications.

Read more: “Vibe coding” your own app using AI is easy! 7 tools and tips to get started

It makes sense to use a local AI application

Running AI applications locally not only reduces dependence on external platforms, but also creates a reliable foundation for data protection, data sovereignty and reliability. Local use of AI increases trust, especially in small and medium-sized businesses with sensitive customer information and private households with personal data. Local AI is still available if your internet service is interrupted or if your cloud provider has technical issues.

The reaction rate is greatly improved as the computing process is not slowed down by delay times. This allows AI models to be used in real-time scenarios such as image recognition, text generation, and voice control.

Plus, you can learn how to use AI completely free of charge. In many cases, the software you need is completely free to use as an open source solution. Learn how to use AI with tools and benefit from the use of AI-supported research in your personal life.

Why NPUs Make a Difference

Without a special NPU, even modern notebooks will quickly reach limits with AI applications. Language models and image processing require enormous computing power that overwhelms traditional hardware. This will result in longer loading times, slower processes and significantly reduce battery life. This is exactly where the benefits of an integrated NPU arise.

idg

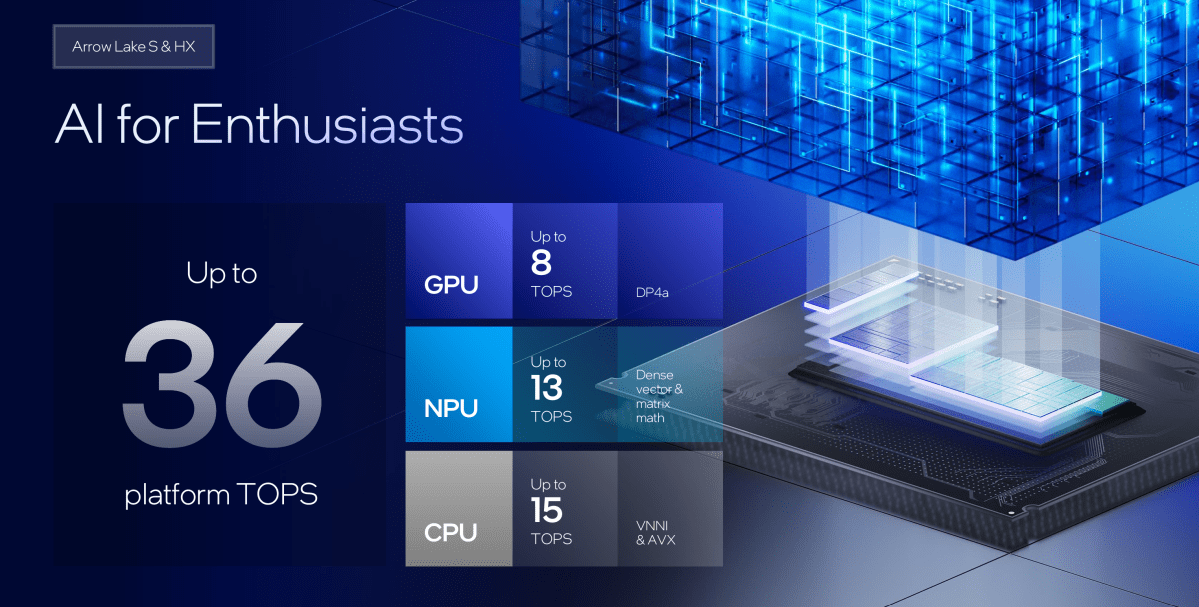

The NPU handles the computationally intensive portion of AI processing independently and is not dependent on the CPU or GPU. As a result, the system continues to respond overall, whether AI services are running in the background or even AI image processing is in progress. At the same time, the operating temperature remains low, the fan remains quiet, and the device operates stably even with continuous operation. So for local AI applications, NPU is not an add-on, but a fundamental requirement for smooth and usable performance.

NPUs will accelerate AI locally again

As a special AI accelerator, NPUs can efficiently run computationally intensive models on standard end devices. This reduces energy consumption compared to purely CPU or GPU-based approaches, making local AI interesting to begin with.

NPUs are special chips for acceleration tasks in which traditional processors operate inefficiently. NPU stands for “neuroprocessing unit.” Such networks are used in language models, image recognition, or AI assistants. In contrast to CPUs that run a variety of programs with flexibility, NPUs are focused on computations that are constantly executed in the field of AI. This allows it to work faster and more economically.

The NPU will take on those tasks exactly when the CPU reaches the limit. AI applications are often calculated with many numbers in the form of a matrix. These are tables of numbers with rows and columns. AI can help you configure and calculate large amounts of data. Text, images, or languages are converted to numbers and represented as a matrix. This allows the AI model to run the computing process efficiently.

NPUs are designed to process many of such matrices simultaneously. The CPU processes such arithmetic patterns one after another. This takes time and energy. On the other hand, NPUs were specifically built to perform many such operations in parallel.

Intel

For users, this means that the NPU handles AI tasks such as voice input, object recognition, and automatic text generation faster and more efficiently. Meanwhile, the CPU remains a free task for other tasks such as operating systems, internet browsers, and office applications. This ensures a smooth user experience with no delays or high power consumption. Modern devices such as Intel Core Ultra and notebooks with Qualcomm Snapdragon X elite already integrate their own NPUs. Apple has used similar technologies on its chips for many years (Apple Silicon M1-M4).

AI-supported applications run locally and respond quickly without transferring data to cloud servers. The NPU ensures smooth operation for image processing, text recognition, transcription, voice input, or personalized proposals. At the same time, it reduces system usage and saves battery power. So it's worth choosing a laptop with an NPU chip, especially if you're using an AI solution. These don't have to be special AI chatbots. More and more local applications and games are using AI. I also use Windows 11 itself.

YouTube

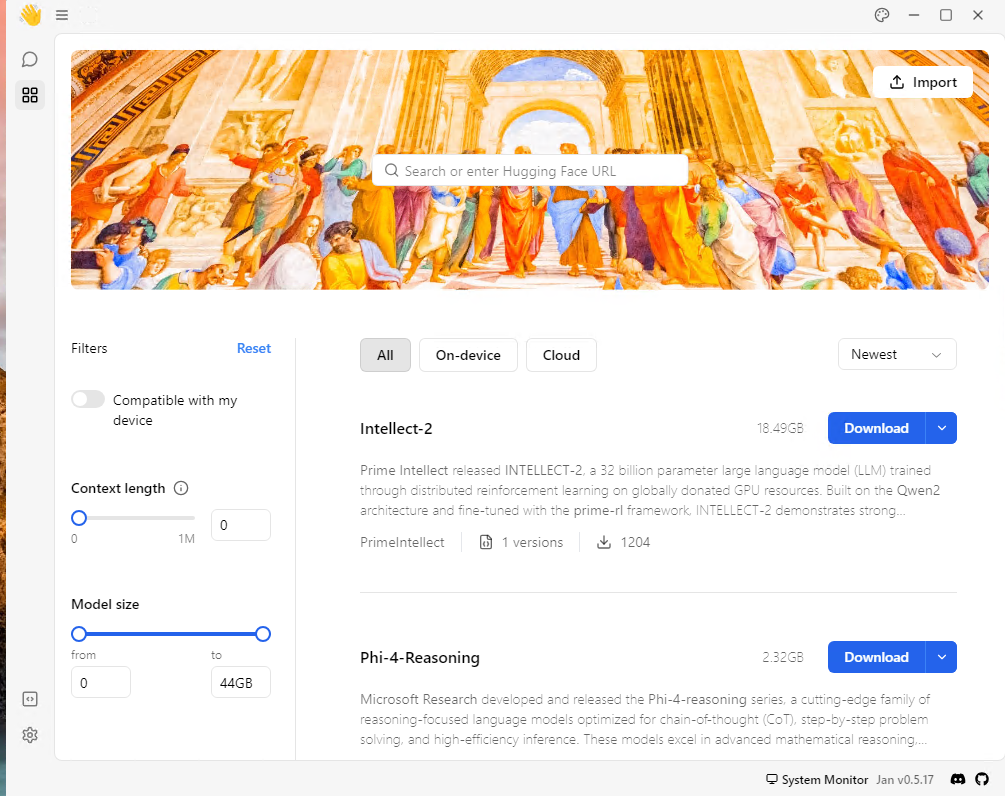

Open Source brings AI locally to your computer: Ollama and Open Web UI

Open source solutions such as Ollama allow you to run LLMS on your notebook for free with NPU chips. LLM stands for “Large Language Model.” LLMS forms the heart of AI applications. Computers can understand natural language and respond to it in meaningful ways.

Anyone who uses AI to create texts, summarise emails, or answer questions is interacting with LLM. AI models can help you develop, explain, translate, or modify. Search engines, language assistants and intelligent text editors also use LLM in the background. The critical factor here is not only the performance of the model, but also where it is performed. If you want to work with LLM locally, you can connect your local AI application to this local model. This means you are no longer dependent on the internet.

Ollama allows for the operation of many LLMs, including free LLMs. These include deepseek-r1, qwen 3, llama 3.3, and more. Simply install Ollama on your PC or laptop using Windows, Linux, and MacO. Once installed, you can operate Ollama via the Windows command line or MacOS and Linux terminals. Ollama offers a framework that allows you to install a variety of LLMs on your PC or notebook.

To work with Ollama like AI applications such as ChatGpt, Gemini, and Microsoft Copilot, you also need a web frontend. Here you can rely on OpenWeb UI solutions. This is also free. It can also be used as a free open source tool.

You can also use the more restricted tool GPT4All as an alternative to Ollama with an open web UI. Another option in this area is Jan.AI. It provides access to well-known LLMS such as OpenAI's DeepSeek-R1, Claude 3.7, and GPT 4. To do this, install Jan.AI, start the program, and select the desired LLM.

Thomas Joss

However, please note that the download of the model can reach 20 GB or more quickly. Furthermore, if your computer's hardware is AI-optimized, it would make sense to use it only if you are using an existing NPU, ideally.

This article was originally published in the sisters' publication PC-Welt, translated from German and localized.