Researchers at the University of Pennsylvania have unveiled HoloRadar, an AI-powered system that uses radio waves to let robots “see” around corners without a direct line of sight.

Unlike other non-line-of-sight (NLOS) perception approaches, which rely on visible light, the new system works in a variety of lighting conditions, including complete darkness.

The Penn research team behind HoloRadar suggests their approach could help develop safer self-driving systems. HoloRadar could also improve the performance of automated robotic platforms used in factories, warehouses, and other crowded, high-traffic environments.

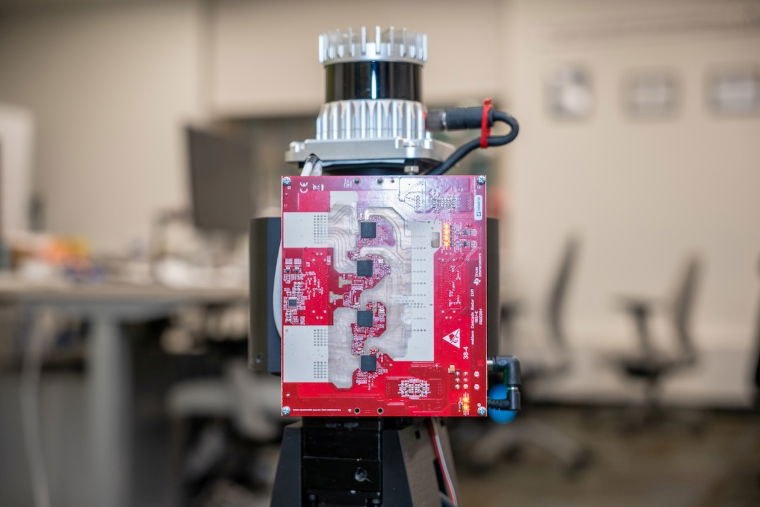

“HoloRadar is designed to operate in environments where robots would actually operate,” explains Mingmin Zhao, assistant professor of computer and information science (CIS) and lead author of a paper describing a robot that can see around corners. “The system is mobile, runs in real time, and does not rely on controlled lighting.”

Before HoloRadar, NLOS systems faced technical challenges

Other teams have demonstrated systems that can visualize hidden obstacles, but those approaches require visible light to work properly, Penn researchers said in a statement. That’s because these NLOS systems “see” around corners by analyzing patterns created by shadows and other indirect reflections.

There have been some attempts to use radio signals, but those approaches relied on slow and bulky scanning equipment. Radio has also often been seen as a poor fit for imaging, because its long wavelengths limit resolution. But Penn’s team saw potential in these longer wavelengths.

“Because radio waves are much larger than small changes in the wall’s surface, the wall surface effectively becomes a mirror that reflects radio waves in a predictable way,” explained study co-author and CIS PhD student Haowen Lai.

In principle, these long-wavelength signals bounce off walls, floors, and ceilings and carry information about hidden spaces back to the robot, which turns that information into a map of areas outside its line of sight.
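The mirror-like behavior described above can be illustrated with the Rayleigh roughness criterion, a standard rule of thumb that treats a surface as smooth when its height variations are below the wavelength divided by 8 cos θ. The sketch below is only an illustration, not part of HoloRadar: the 77 GHz radar frequency, the 0.1 mm wall roughness, and the function names are all assumptions chosen for the example.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def wavelength_m(frequency_hz):
    """Free-space wavelength for a given frequency."""
    return C / frequency_hz


def is_mirror_like(roughness_m, wavelength, incidence_deg=0.0):
    """Rayleigh criterion: the surface reflects specularly (like a mirror)
    when its roughness is below wavelength / (8 * cos(incidence angle))."""
    limit = wavelength / (8.0 * math.cos(math.radians(incidence_deg)))
    return roughness_m < limit


# Assumed values, not taken from the study:
radar_wl = wavelength_m(77e9)  # a common millimeter-wave radar band
light_wl = 550e-9              # green visible light
roughness = 0.1e-3             # 0.1 mm of surface texture on a painted wall

print("radar sees a mirror:", is_mirror_like(roughness, radar_wl))
print("light sees a mirror:", is_mirror_like(roughness, light_wl))
```

Under these assumed numbers the same wall is smooth to the radar and rough to visible light, which is why light scatters diffusely off it while radio waves reflect in a predictable direction.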

“This is similar to how human drivers rely on mirrors installed at intersections with poor visibility,” says Jitong Lan, a doctoral student in electrical systems engineering (ESE) and co-author of the paper. “HoloRadar uses radio waves, so the environment itself is filled with mirrors without actually changing the environment.”

How AI reverses the reflection process to build 3D images of hidden objects

Before building the final HoloRadar system, the Penn team started by addressing the main limitation of radio-based sensing. Specifically, radio waves that bounce multiple times before returning to the robot create what the researchers describe as a “complex set of reflections” that can thwart traditional signal processing approaches.

“In some ways, this challenge is like walking into a room full of mirrors,” Lan says. “We see many copies of the same object appearing in different places, but the challenge is figuring out where the object actually is.”

To address this issue, the team built a hybrid model that combines machine learning with physics-based modeling. The process begins by increasing the resolution of the raw radio signal. According to the research team, this step helps the system identify multiple signal “returns” that correspond to the different reflection paths the radio waves take.

HoloRadar’s core AI then traces these reflected signals backward. The researchers say this step undoes the “mirror effect” of the environment, allowing HoloRadar to reconstruct the actual three-dimensional scene. The result is an AI that can distinguish between direct and indirect reflections of radio waves, ultimately allowing it to pinpoint the physical location of objects or people hidden around corners.
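The geometric idea behind tracing a reflection backward can be sketched in a few lines. This is a simplified 2D illustration, not the researchers' method: HoloRadar uses a learned, physics-based model, whereas the example assumes a single bounce off one wall whose position is already known. The function name and all coordinates are hypothetical.

```python
import math


def reflect_across_wall(point, wall_point, wall_normal):
    """Mirror a 2D point across the line that passes through wall_point
    and is perpendicular to wall_normal."""
    nx, ny = wall_normal
    length = math.hypot(nx, ny)
    nx, ny = nx / length, ny / length
    # Signed distance from the point to the wall along the normal.
    d = (point[0] - wall_point[0]) * nx + (point[1] - wall_point[1]) * ny
    return (point[0] - 2 * d * nx, point[1] - 2 * d * ny)


# Hypothetical scene: robot at the origin, a flat wall along y = 2.
robot = (0.0, 0.0)
wall_point, wall_normal = (0.0, 2.0), (0.0, 1.0)

# After one bounce, the radar places the person "behind" the wall,
# at the mirror image of where they really stand.
ghost = (3.0, 3.0)

# Undo the mirror by reflecting the ghost back across the wall.
true_pos = reflect_across_wall(ghost, wall_point, wall_normal)
print(true_pos)  # (3.0, 1.0)

# The straight-line range to the ghost equals the real bounced path
# robot -> wall -> person, which is why the ghost looks plausible to the radar.
bounce = (2.0, 2.0)  # where the line from the robot to the ghost crosses y = 2
path = math.dist(robot, bounce) + math.dist(bounce, true_pos)
print(math.isclose(path, math.dist(robot, ghost)))  # True
```

With several bounces, the same reflection would be applied once per wall, in reverse order of the bounces. The hard part in practice is deciding which returns are ghosts and which surfaces produced them.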

“Our system learns how to reverse that process in a physics-based way,” Lan explained.

“Robots need to see beyond what’s in front of them.”

In a series of tests, the Penn team mounted HoloRadar on a mobile robot and ran it through hallways and around building corners. These settings were chosen to resemble the factories and warehouses where robots already work and where seeing around corners would help.

According to the research team, the HoloRadar-equipped robot “successfully reconstructed walls, hallways, and hidden human subjects” that were outside the robot’s direct line of sight.

When discussing the applicability of their approach, the Penn team said HoloRadar is not designed to replace current sensors. Instead, they said it adds an “additional layer of perception” to robotic platforms that are already equipped with lidar to sense objects in their field of view.

“Robots and self-driving cars need to look beyond what’s in front of them,” Zhao explained. “This capability is essential for enabling robots and self-driving cars to make safer decisions in real time.”

While the current HoloRadar system has been successful indoors, the team plans to explore outdoor environments such as urban roads and intersections. In such environments, robotic systems are challenged with longer ranges and more dynamic conditions, which are difficult to address with current approaches.

“This is an important step toward giving robots a more complete understanding of their surrounding environment,” Zhao said. “Our long-term goal is to enable machines to operate safely and intelligently in the dynamic and complex environments that humans move through every day.”

The study, “Radar non-line-of-sight 3D reconstruction,” was presented at the 39th Annual Conference on Neural Information Processing Systems (NeurIPS).

Christopher Plain is a science fiction and fantasy novelist and head science writer at The Debrief. Follow and connect with him on X, learn about his books at plainfiction.com, or email him directly at christopher@thedebrief.org.