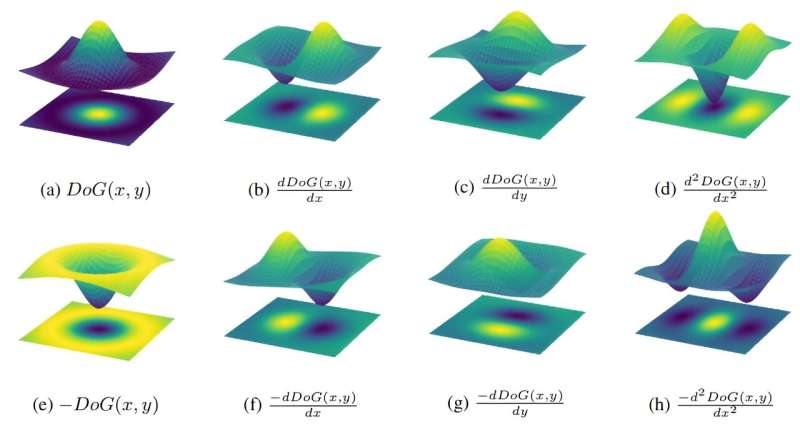

A complete diagram of On DoG and Off DoG filters and their derivatives. Source: https://openreview.net/pdf?id=4VgBjsOC8k

Researchers from the Vienna University of Technology have investigated how artificial intelligence classifies images, and their results show some striking similarities to the natural visual system.

How do we teach a machine to recognize objects in an image? Great progress has been made in recent years in this field. For example, with the help of neural networks, we can assign images of animals to their respective species with a very high success rate. This is achieved by training a neural network with a large number of example images. The network adapts incrementally, and eventually gives the most accurate answer possible.

However, it usually remains a mystery what structures are formed during this process and what mechanisms develop within neural networks to ultimately reach the goal.

Teams from TU Vienna, led by Professor Radu Grosu, and from MIT, led by Professor Daniela Rus, investigated exactly this question and came to a surprising result: artificial neural networks form structures that are strikingly similar to those found in the nervous systems of animals and humans.

The team presented its findings at the International Conference on Learning Representations (ICLR 2024) in Vienna in May.

Peyman M. Kiasari, Zahra Babaiee and Radu Grosu (left to right). Photo courtesy of TU Vienna.

Multiple layers of neurons

“We work with so-called convolutional neural networks, which are artificial neural networks that are often used to process image data,” says Zahra Babaiee of the Institute of Computer Engineering at TU Vienna. She is first author of the paper and conducted part of the research in collaboration with Daniela Rus at MIT, alongside Peyman M. Kiasari and Radu Grosu at TU Vienna.

The design of these networks was inspired by the biological neural networks of our eyes and brains, where visual impressions are processed by multiple layers of neurons: for example, certain neurons become active when they receive light signals in the eye and then pass signals on to neurons in the layers behind them.

In artificial neural networks, this principle is mimicked digitally on a computer: the desired input (for example, a digital image) is transmitted pixel by pixel to the first layer of an artificial neural network, where the activity of neurons depends on whether a bright or dark pixel is presented.

These activity values then determine the activity of the neurons in the second layer. Each neuron in that layer combines the signals from the first layer according to its own specific formula, producing a value that in turn helps determine the activity of neurons in the layer after it.
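The layer-to-layer step described above can be sketched in a few lines of plain Python. This is only an illustration: the function name `next_layer`, the example weights and biases, and the choice of a ReLU-style cutoff at zero are assumptions made for this sketch, not details taken from the study.

```python
def next_layer(activities, weights, biases):
    """Compute next-layer activities as weighted sums of the previous layer.

    Each row of `weights` is the individual "formula" of one next-layer
    neuron. Negative sums are clipped to zero (a ReLU-style rule, assumed
    here purely for illustration).
    """
    outputs = []
    for weight_row, bias in zip(weights, biases):
        total = sum(w * a for w, a in zip(weight_row, activities)) + bias
        outputs.append(max(0.0, total))
    return outputs


# Three input "pixels": dark, gray, bright (hypothetical values).
pixels = [0.0, 0.5, 1.0]

# Two next-layer neurons, each with its own weighting of the inputs.
weights = [
    [1.0, 1.0, 0.5],    # responds to brightness
    [-0.5, 0.0, -1.0],  # suppressed by brightness
]
biases = [0.0, 0.25]

layer2 = next_layer(pixels, weights, biases)
print(layer2)
```

Stacking several such calls, each fed with the output of the previous one, gives the multi-layer structure the article describes.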

Remarkable similarities to biological neural networks

“In convolutional neural networks, not every neuron in one layer plays a role for every neuron in the next layer,” explains Zahra Babaiee. “Just as in the brain, a neuron is not connected to every neuron in the previous layer without exception, but only to neighboring neurons in a very specific region.”

Thus, convolutional neural networks use so-called “filters” to determine which neurons will influence a particular subsequent neuron and which will not. These filters are not determined in advance, but are formed automatically during the training of the neural network.
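To make the idea of a filter concrete, here is a minimal sketch of how a small filter restricts each output neuron to a local neighborhood of the previous layer. The helper `apply_filter`, the 4×4 toy image and the 3×3 filter values are all hypothetical; real networks learn their filter values during training and typically rely on optimized library routines rather than explicit loops.

```python
def apply_filter(image, kernel):
    """Slide `kernel` over `image` and return the map of weighted sums.

    Only positions where the kernel fits entirely inside the image are
    evaluated, so each output value depends solely on the small patch of
    input pixels that the kernel covers.
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = 0.0
            for i in range(k_rows):
                for j in range(k_cols):
                    total += kernel[i][j] * image[r + i][c + j]
            row.append(total)
        output.append(row)
    return output


# Toy image with a single bright pixel.
image = [
    [0, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]

# A filter in which only the pixel directly "in front" has any influence.
center_filter = [
    [0, 0, 0],
    [0, 1, 0],
    [0, 0, 0],
]

response = apply_filter(image, center_filter)
print(response)
```

The same nine filter weights are reused at every position, which is why a convolutional layer needs far fewer parameters than a layer in which every neuron is connected to every pixel.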

“These filters and other parameters are constantly adjusted while the network is trained on thousands of images. The algorithm tries out which weightings of the neurons in the previous layers lead to the best result, until the image is assigned to the correct category with the highest possible confidence,” says Zahra Babaiee. “The algorithm does this automatically, so we have no direct influence on it.”
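The idea that filter weights are adjusted automatically can be illustrated with a deliberately tiny example. The sketch below is not the training procedure used in the study: it assumes a single three-weight filter, a hypothetical target filter `true_filter`, and plain gradient descent on the squared error, whereas real image classifiers adjust millions of weights against a classification objective.

```python
def train_filter(samples, targets, epochs=1000, learning_rate=0.1):
    """Fit three filter weights by gradient descent on the squared error."""
    weights = [0.0, 0.0, 0.0]  # start with no knowledge of the filter
    for _ in range(epochs):
        for inputs, target in zip(samples, targets):
            prediction = sum(w * x for w, x in zip(weights, inputs))
            error = prediction - target
            # Nudge each weight against the gradient of 0.5 * error**2.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, inputs)]
    return weights


# Hypothetical filter that the training loop should rediscover.
true_filter = [-1.0, 2.0, -1.0]

# Toy training inputs and the responses the true filter would give.
samples = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
    [0.0, 1.0, 1.0],
]
targets = [sum(w * x for w, x in zip(true_filter, s)) for s in samples]

learned = train_filter(samples, targets)
print([round(w, 3) for w in learned])
```

Nobody sets the weights by hand here: they emerge from repeated small corrections, which is the sense in which the resulting filters have to be inspected after training.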

However, at the end of training we can analyze which filters developed in this way, which reveals an interesting pattern: the filters do not take a completely random form, but rather fall into a few simple categories.

“Filters can develop in such a way that some neurons are influenced mainly by the neuron directly in front of them, and very little by others,” Zahra Babaiee says.

Other filters appear as a cross or show two opposing regions: one whose neurons have a strong positive influence on the activity of the neuron in the next layer, and another whose neurons have a strong negative influence.
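The figure caption at the top names these categories as On and Off DoG (difference of Gaussians) filters and their derivatives. A rough sketch of how such shapes can be generated is shown below. The 7×7 size, the two sigma values and the helper names are assumptions chosen for illustration, and the code is not the clustering procedure from the paper.

```python
import math


def gaussian_kernel(size, sigma):
    """Return a size x size Gaussian kernel normalized to sum to 1."""
    half = size // 2
    raw = [[math.exp(-((x - half) ** 2 + (y - half) ** 2) / (2 * sigma ** 2))
            for x in range(size)] for y in range(size)]
    total = sum(sum(row) for row in raw)
    return [[value / total for value in row] for row in raw]


def dog_kernel(size, sigma_center, sigma_surround):
    """On-center DoG: a narrow Gaussian minus a wider one."""
    center = gaussian_kernel(size, sigma_center)
    surround = gaussian_kernel(size, sigma_surround)
    return [[c - s for c, s in zip(c_row, s_row)]
            for c_row, s_row in zip(center, surround)]


def horizontal_derivative(kernel):
    """Central-difference derivative along x (outside the kernel counts as 0)."""
    size = len(kernel)
    result = []
    for row in kernel:
        padded = [0.0] + list(row) + [0.0]
        result.append([(padded[j + 2] - padded[j]) / 2 for j in range(size)])
    return result


on_dog = dog_kernel(7, 1.0, 2.0)                    # positive center, negative surround
off_dog = [[-value for value in row] for row in on_dog]  # sign-flipped counterpart
dx_dog = horizontal_derivative(on_dog)              # opposing lobes left and right

for row in dx_dog:
    print(" ".join(f"{value:+.3f}" for value in row))
```

In the On DoG kernel the central weight dominates, matching the filters in which a neuron is driven mostly by the neuron directly in front of it. The derivative kernel has a positive lobe on one side of the center and a negative lobe on the other, matching the filters with two opposing regions.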

“Surprisingly, these very patterns have already been observed in the nervous systems of other species, including monkeys and cats,” says Zahra Babaiee. It seems likely that the processing of visual data in humans works in a similar way.

It is probably no coincidence that biological evolution has produced the same filter functions that arise in automated machine learning. “If we know exactly how these structures form again and again during visual learning, we can develop machine learning algorithms that take this into account in the training process and reach the desired result much faster than before,” says Zahra Babaiee.

For more information:

Zahra Babaiee et al., Unveiling the Unseen: Identifying Clusterings in Trained Depthwise Convolutional Kernels. openreview.net/pdf?id=4VgBjsOC8k

Provided by Vienna University of Technology

Citation: Researchers studying how AI categorizes images find similarities to natural visual systems (June 5, 2024). Retrieved June 5, 2024, from https://techxplore.com/news/2024-06-ai-categorizes-images-similarities-visual.html
