Scientists are increasingly turning to machine learning to overcome difficult challenges in quantum technology. Together with their colleagues, Marin Bukov (Max Planck Institute for the Physics of Complex Systems) and Florian Marquardt (Max Planck Institute for the Science of Light, Friedrich-Alexander-University Erlangen-Nuremberg) show how reinforcement learning (RL), a powerful method based on adaptive decision-making, can be applied to the optimization of quantum systems. Their review explores recent advances in applying RL to important tasks such as state preparation, gate design, and circuit construction, as well as to interactive settings such as feedback control and error correction. With an emphasis on experimental implementations, it documents the growing importance of RL and outlines key areas for future research that may accelerate the development of practical quantum technologies.

Reinforcement learning optimizes complex quantum systems

Scientists are tackling quantum technology challenges with reinforcement learning (RL), a type of machine learning based on adaptive decision-making through interaction with quantum devices. The review presents RL as a key methodology for optimizing complex quantum systems, one that goes beyond traditional control strategies and opens the door to more efficient and robust quantum devices. It covers progress in several important areas, including state preparation for both few- and many-body quantum systems, the design and optimization of high-fidelity quantum gates, and the automated construction of quantum circuits, with applications to variational eigensolvers and architecture search. The review begins with a concise introduction to core concepts for a broad physics audience and then lays out a comprehensive framework for applying RL to quantum systems.

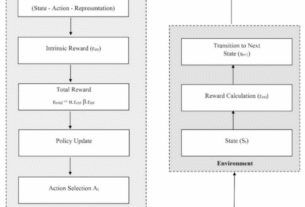

The authors detail the key elements of RL (environment, state, actions, and rewards) and how these translate to the quantum realm. Once a quantum control problem is framed within the RL paradigm, algorithms can learn strategies for manipulating quantum states and implementing quantum operations without relying on predefined models or extensive manual tuning. This adaptive approach is particularly valuable where accurate theoretical models are difficult to obtain or computationally expensive to solve. The review also examines the interactive capabilities of RL agents and reports significant advances in quantum feedback control and error correction.

Specifically, the review describes advances in RL-based decoders for quantum error-correcting codes, as well as the discovery of entirely new error-correcting codes, an important step toward building fault-tolerant quantum computers. It also covers the application of RL to quantum metrology, where RL has the potential to enhance parameter estimation and sensing. The authors argue that RL is not just a theoretical possibility but a practical tool for advancing quantum technology, as evidenced by the experimental implementations highlighted throughout the review. The review concludes with a critical discussion of open challenges, including improving scalability, increasing the interpretability of RL agents, and integrating these algorithms with existing experimental platforms. The authors also outline promising directions for future research and suggest that reinforcement learning will play an increasingly important role in the development of quantum technologies.

Reinforcement learning for quantum control and optimization

Scientists are increasingly leveraging reinforcement learning (RL) to address challenges in quantum technology development. The review details how RL, a machine learning paradigm focused on adaptive decision-making through interaction, has been applied across quantum information science. It surveys the use of RL algorithms for tasks ranging from quantum state preparation to error correction and documents a significant shift toward data-driven control strategies. The authors first define the RL framework, establishing core concepts such as environment, state, observation, action, and reward, which form the basis for all subsequent applications.

The review surveys both policy gradient and value function techniques, alongside other related algorithms, for optimizing quantum systems. Deep reinforcement learning makes it possible to train agents capable of navigating complex quantum control environments. An important methodological distinction is that between model-free and model-based RL, which helps researchers choose a suitable algorithm for the specific quantum task at hand. This flexibility can enable control of quantum systems in regimes where traditional methods reach their limits.

Scientists have used RL for quantum optimal control, with a particular focus on preparing both few- and many-body quantum states. The review details how RL agents are trained to design and optimize high-fidelity quantum gates, a critical component for building scalable quantum computers. The coverage extends to automated circuit construction, including variational eigensolvers and quantum architecture search, which can accelerate the development of quantum algorithms. RL has also been applied to entanglement control, a fundamental requirement for quantum computing and communication.

The review also highlights the interactive capabilities of RL agents in quantum feedback control and error correction. RL has been used to build decoders for quantum error-correcting codes and has shown potential for discovering new codes. In quantum metrology, RL can be used for parameter estimation and sensing to push the limits of precision measurement. Overall, the review underlines the growing role of reinforcement learning in quantum technologies while recognizing open challenges such as scalability and interpretability.

Optimizing RL policy by maximizing reward

Reinforcement learning (RL) algorithms address complex technological challenges through adaptive decision-making based on interaction with a device. An RL agent chooses an action according to a policy, denoted π(a|s), which encodes the probability of choosing action a when observing state s. The agent updates this policy based on the rewards it receives, striving to maximize its expected return; the precise update rule is what defines the underlying RL algorithm. The optimal policy is the one that yields the highest achievable expected return, the key metric for evaluating performance.
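In symbols, the objective described above can be written as follows. This is a standard textbook formulation rather than notation taken from the review: the episode length T, the per-step reward r_t, the return G, and the objective J are names assumed here for illustration.

```latex
G = \sum_{t=0}^{T-1} r_t, \qquad
J(\pi) = \mathbb{E}_{\pi}\left[ G \right], \qquad
\pi^{*} = \arg\max_{\pi} J(\pi)
```

Here the expectation runs over trajectories generated by sampling each action a_t from π(a|s_t) and letting the environment respond.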

The state of the RL environment is defined as a complete description of the underlying physical state of the target system, and the agent observes this state through measurements of the system. The actions performed by the agent cause the environment to transition between states according to the laws of the physical system, whether in a simulation or in a real laboratory setup. Key issues include credit assignment, that is, determining which actions were responsible for the rewards received, and choosing rewards that accurately reflect task success. The reward signal, or figure of merit, is designed to reflect the task, so that policies achieving the same return are considered equally good.
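The agent-environment interface described above might be sketched as follows for a toy problem: steering a single qubit from |0> to |1> with small rotations about the y axis. Everything here is an assumption made for illustration and not taken from the review: the class name `QubitEnv`, the three-angle action set, the shot-averaged observation (which presumes the preparation can be repeated on identical copies), and the choice of a sparse reward given only at the end of the episode.

```python
import math
import random


class QubitEnv:
    """Toy RL environment: steer a qubit from |0> toward |1> with y-rotations."""

    ANGLES = (-math.pi / 8, 0.0, math.pi / 8)  # action index -> rotation angle

    def __init__(self, n_steps=8, n_shots=50, seed=0):
        self.n_steps = n_steps
        self.n_shots = n_shots
        self.rng = random.Random(seed)
        self.reset()

    def reset(self):
        # Full physical state: real amplitudes (c0, c1) of c0|0> + c1|1>.
        self.state = (1.0, 0.0)
        self.t = 0
        return self.observe()

    def observe(self):
        # Partial, noisy view of the state: shot-averaged estimate of c1**2.
        p1 = self.state[1] ** 2
        hits = sum(self.rng.random() < p1 for _ in range(self.n_shots))
        return hits / self.n_shots

    def fidelity(self):
        # Overlap with the target state |1>.
        return self.state[1] ** 2

    def step(self, action):
        # The transition follows the physics: apply R_y(angle) to the state.
        half = self.ANGLES[action] / 2
        c0, c1 = self.state
        self.state = (math.cos(half) * c0 - math.sin(half) * c1,
                      math.sin(half) * c0 + math.cos(half) * c1)
        self.t += 1
        done = self.t >= self.n_steps
        # Sparse reward: the fidelity is only revealed when the episode ends.
        reward = self.fidelity() if done else 0.0
        return self.observe(), reward, done


# Usage: a fixed "policy" that always rotates by +pi/8 (eight steps add up to pi).
env = QubitEnv()
env.reset()
done = False
while not done:
    obs, reward, done = env.step(2)
final_fidelity = reward
```

Because the reward arrives only at the final step, the agent cannot tell from the reward alone which of the eight rotations mattered, which is the credit assignment issue in miniature.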

The reward function, fixed before training, can depend on the current state, the chosen action, and the subsequent state of the environment, and is denoted r(s_{t+1}, s_t, a_t). Identifying the characteristic scales of a problem, such as typical energies, lengths, and time scales, and working with dimensionless quantities can help in building effective reward functions. Given this agent-environment interface, a range of algorithms can be used to learn policies that maximize the expected return; they often converge to a local optimum, yet the resulting solutions can still go beyond what traditional optimization techniques achieve. The review focuses on two overlapping categories of RL algorithms: policy gradient methods and value function methods.
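As a concrete illustration of a reward that depends on all three arguments, the sketch below rewards the gain in fidelity between consecutive states and penalizes large rotations. It is one hypothetical choice among many, not a reward taken from the review: the state is reduced to a single polar angle on the Bloch sphere, the target is |1>, and the weight `cost` is an arbitrary illustrative value.

```python
import math


def fidelity(theta):
    """Overlap with |1> for a qubit at polar angle theta on the Bloch sphere."""
    return math.sin(theta / 2) ** 2


def reward(s_next, s, a, cost=0.05):
    """r(s_{t+1}, s_t, a_t): fidelity gain minus a penalty on the rotation size.

    All arguments are angles in radians and hence dimensionless; `cost` is a
    hypothetical weight chosen for illustration.
    """
    return fidelity(s_next) - fidelity(s) - cost * abs(a)


# Roll out a fixed sequence of rotation angles starting from |0> (theta = 0).
actions = [math.pi / 4] * 4
s = 0.0
total = 0.0
for a in actions:
    s_next = s + a  # deterministic transition: rotate by a
    total += reward(s_next, s, a)
    s = s_next
```

The fidelity differences telescope, so the summed reward equals the final fidelity minus the initial one, less the accumulated control penalty. A dense reward of this kind gives feedback at every step while still ranking complete trajectories by the final outcome.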

Policy gradient methods parameterize the policy, π ≈ π_θ, using variational parameters θ and then perform gradient ascent to maximize the expected return. A common and effective approach is to represent the policy as a deep neural network that takes RL states as input and outputs action probabilities. Value function methods instead estimate the maximum expected return achievable from a given state, assigning a value to each state. In both cases, agents learn their strategies through iterative refinement and reward maximization.
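A minimal sketch of the policy gradient idea is shown below, using the REINFORCE estimator with a softmax policy in place of a neural network. The setting is a deliberately tiny, stateless toy chosen for illustration and not drawn from the review: the agent picks one of three rotation angles to apply to a qubit in |0>, and the reward is the resulting fidelity with |1>. The action set, batch size, learning rate, and the batch-mean baseline (a common variance-reduction trick that introduces a small bias) are all assumptions.

```python
import math
import random

ANGLES = (0.0, math.pi / 2, math.pi)  # hypothetical action set: y-rotation angles


def reward(action):
    # Fidelity with |1> after rotating |0> by the chosen angle.
    return math.sin(ANGLES[action] / 2) ** 2


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def train(n_iters=300, batch=64, lr=1.0, seed=0):
    rng = random.Random(seed)
    theta = [0.0] * len(ANGLES)  # policy parameters (logits), uniform at start
    for _ in range(n_iters):
        probs = softmax(theta)
        actions = rng.choices(range(len(ANGLES)), weights=probs, k=batch)
        rewards = [reward(a) for a in actions]
        baseline = sum(rewards) / batch  # batch-mean baseline reduces variance
        grad = [0.0] * len(theta)
        for a, r in zip(actions, rewards):
            for k in range(len(theta)):
                # d/d theta_k of log pi(a) for a softmax policy: 1[k == a] - pi_k
                grad[k] += (r - baseline) * ((k == a) - probs[k]) / batch
        theta = [t + lr * g for t, g in zip(theta, grad)]  # gradient ascent
    return softmax(theta)


final_probs = train()
```

After training, most of the probability mass should sit on the rotation by π, the action with the highest fidelity. A value function method would instead keep a table or network of estimated returns and act greedily with respect to it.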

Reinforcement learning brings significant advances in quantum control and design

Scientists have successfully applied reinforcement learning (RL) algorithms to challenges in quantum technology. This review explores recent advances in RL across several key areas, including state preparation, gate design and optimization, and automated circuit construction, with applications extending to variational eigensolvers and architecture search. Researchers are increasingly exploiting the interactive capabilities of RL for feedback control and error correction, along with its potential in quantum metrology. The works reviewed indicate that RL provides an efficient toolbox for designing error-robust quantum logic gates and that, by learning directly from interaction with experimental hardware, it can outperform even state-of-the-art human-designed implementations.

Specifically, research shows that RL agents can synthesize single-qubit gates with reduced execution time and an improved trade-off between protocol length and speed, potentially enabling real-time operation. In addition, a custom deep RL algorithm generated control pulses for superconducting qubits that were up to twice as fast as existing gates while maintaining comparable fidelity and leakage rates. The authors acknowledge limitations, such as scalability concerns, the difficulty of interpreting the decision-making of RL agents, and the challenge of integrating these algorithms with existing experimental platforms. Addressing these issues is needed to realize the full potential of RL in quantum technologies: promising directions include methods that increase scalability, improve interpretability, and streamline integration with experimental setups, paving the way toward more sophisticated autonomous quantum systems.