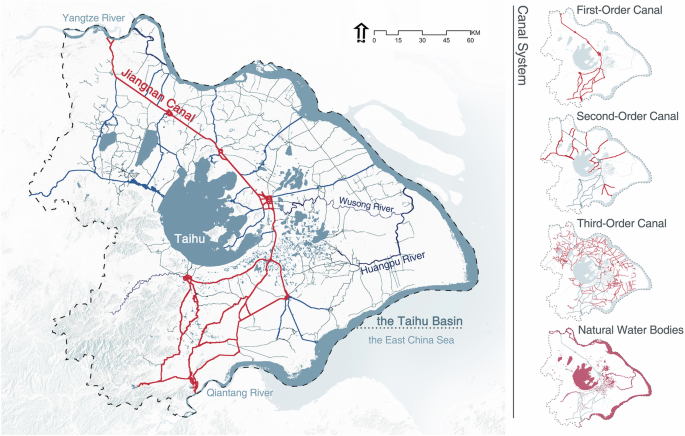

Study area and canal system

This study selects the Taihu Basin, traversed by the Jiangnan Canal, as the study area. While the region’s low-lying terrain and dense waterways were not naturally suited to the development of agricultural civilizations, they nonetheless provided a foundation for the construction of the canal16. To connect rivers, lakes, and the sea, various waterways were continuously extended, gradually forming a hierarchical canal system composed of main canals supplemented by branch channels and natural water bodies (Fig. 2)17. The continued development of the canal also turned the post stations along its route into prosperous commercial settlements. At the same time, the silt deposited along the riverbanks was converted into cultivated fields, which supplied food and handicraft raw materials to the urban settlements and continued to evolve under the irrigation and transport provided by the canal system18. Ultimately, the Jiangnan Canal landscape system was formed, in which natural and artificial water networks interact, and towns, villages, and farmland coexist in a coordinated manner19.

Location of the study area, Taihu Basin, China. Red lines indicate the Jiangnan Canal, and the right side shows the canal system with its main canal and lower-order branch channels.

Automatic interpretation of the landscape system

Current research on land cover interpretation with multifunctional attributes predominantly relies on morphological clustering analysis20,21,22,23,24. Additionally, some studies utilize POI data to adjust the functional semantics of spatial objects through graph neural networks25. However, these efforts focus solely on refining classifications of single surface types, rather than addressing landscape typology classification tasks that encompass cultural, functional, and other semantic dimensions. Studies on direct automatic identification of landscape types remain scarce. Li et al. conducted secondary clustering via SOM and K-means algorithms, based on manually designed polder landscape morphological indices, for landscape interpretation26. Deep-learning-based studies primarily employ convolutional neural networks. Fleischmann et al. categorized 16 composite land-use types centered on urban functions27. Meng et al. combined water network and parcel division patterns, using ResNet50 to interpret five types of polder landscape28. Shi et al., based on natural and cultural heritage characteristics, employed DeepLabV3+ to obtain five types of farmland landscapes29. Additionally, Cui et al. used KH-5 imagery to interpret three types of sandy polder landscapes, but their approach still required substantial manual post-processing30.

Automated interpretation of panchromatic remote sensing imagery

The Keyhole series imagery, as the earliest accessible satellite imagery, is a crucial data source for revealing past realities. However, because it is single-band panchromatic and carries the geometric distortions and noise of film data, few automated interpretation studies exist beyond manual visual interpretation. Existing research predominantly employs conventional machine learning algorithms, including maximum likelihood (ML), random forest (RF), support vector machine (SVM), and self-organizing maps (SOM), focusing on historical land use applications. A limited number of studies have analyzed changes in urban built-up areas, forests, or agricultural land, generally employing binary or limited-category classifications. Given that panchromatic imagery offers only a single spectral band, researchers have applied the gray level co-occurrence matrix (GLCM) to derive image texture features31. Building on this foundation, Rizayeva et al. further employed the SNIC algorithm to generate geometric features and integrated DEM data for multi-feature fusion, which enhanced model recognition accuracy32. Research has also been conducted on employing image texture features for pseudo-color synthesis33 or utilizing U-Net generators to colorize panchromatic imagery within GAN frameworks34,35. Despite issues such as spectral distortion, researchers have utilized SVM to extract land use from these colorized results36.

In the field of deep learning, most studies deal with a limited number of classes. Most work either applies U-Net series models to land cover interpretation37,38 or reconstructs historical land use from multi-source data39. To address the scarcity of training samples and high annotation costs for panchromatic imagery, Mboga et al. combined U-Net with domain adaptation networks to enhance cross-regional interpretation performance for historical imagery40. Concurrently, Dahle et al. processed data into multi-scale representations for training and fused the outputs to integrate global and local features41.

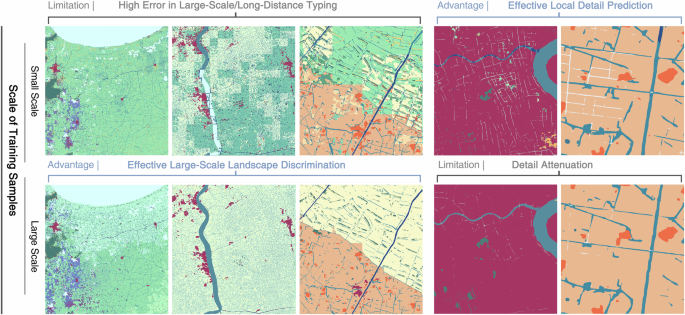

Geo-SegFormer

Current research utilizing deep learning for remote sensing image interpretation predominantly employs samples of 512 × 512 pixels or smaller, typically addressing a limited number of categories. Studies on automated interpretation of landscape types using historical imagery are even scarcer, with no publicly available models capable of segmenting such landscape types. The Jiangnan Canal and its landscape system are dominated by long-distance linear water networks and large-scale, cross-regional agricultural landscape patches, while also incorporating smaller-scale elements such as capillary polder networks and point-like settlement patches. Hence, landscape recognition requires models that effectively capture local feature details while contextually perceiving global structures. Accordingly, training sample selection requires multi-scale synergy. Large-scale samples enhance contextual relevance by covering broader scenes and more complete spatial structures, improving long-range dependency modeling while reducing boundary effects from local truncation, thus boosting the integrity and accuracy of long-distance coherent feature identification. In contrast, small-scale samples focus on high-resolution local features to compensate for detail dilution in large-scale training while strengthening modeling capabilities for capillary water networks and discrete patches (Fig. 3).

The first column presents a macro-level identification performance comparison between small-scale and large-scale training samples, while the second column provides a micro-level comparison.

Therefore, to identify a suitable model architecture, we conducted a comparative performance analysis. U-Net, TransUNet, and SegFormer were selected as representative convolutional, hybrid convolutional-Transformer, and pure Transformer architectures, respectively. The training and validation data were prepared by replicating the original panchromatic imagery into three channels. As shown in Table 1, SegFormer outperformed the other models across all evaluation metrics on the test set, achieving an overall accuracy of 0.85, Kappa of 0.84, F1 score of 0.85, mIoU of 0.53, and mDice of 0.63. In addition, SegFormer’s hierarchical Transformer encoder eliminates positional encoding, enabling simultaneous modeling of high-resolution local details and low-resolution global semantics while adapting to arbitrary input sizes without accuracy degradation. Paired with a lightweight MLP decoder that combines global and local information, this framework reduces computational complexity and meets the demands of segmenting the canal landscape system with multi-scale training. We therefore select SegFormer as the foundational architecture.
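For readers unfamiliar with the metrics reported above, the following minimal sketch shows one common way to derive overall accuracy, Kappa, mIoU, and mDice from a confusion matrix. The macro averaging over classes and the example matrix are illustrative assumptions, not values or procedures taken from this study.

```python
import numpy as np

def segmentation_metrics(cm):
    """Metrics from a confusion matrix (rows: reference, columns: prediction).

    Assumes every class occurs at least once, so no denominator is zero.
    """
    cm = np.asarray(cm, dtype=np.float64)
    total = cm.sum()
    tp = np.diag(cm)                      # correctly labelled pixels per class
    fp = cm.sum(axis=0) - tp              # predicted as class i, but wrong
    fn = cm.sum(axis=1) - tp              # class i pixels that were missed
    po = tp.sum() / total                 # observed agreement = overall accuracy
    pe = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / total**2  # chance agreement
    return {
        "overall_accuracy": po,
        "kappa": (po - pe) / (1.0 - pe),
        "mIoU": float(np.mean(tp / (tp + fp + fn))),
        "mDice": float(np.mean(2 * tp / (2 * tp + fp + fn))),
    }

# Hypothetical two-class confusion matrix, for illustration only.
metrics = segmentation_metrics([[50, 10], [5, 35]])
```

Because mIoU and mDice average per-class scores, they can be considerably lower than overall accuracy when small classes are segmented poorly.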

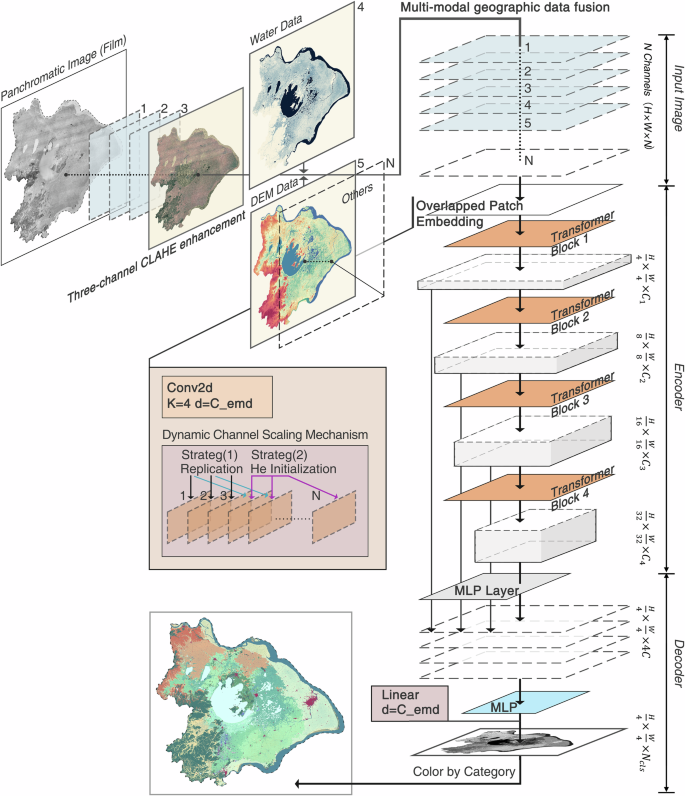

However, canal landscape features exhibit high diversity with composite spatial and cultural semantics. Combined with the absence of multispectral data in historical panchromatic imagery and spectral confusion among features, these factors pose significant challenges for effective segmentation of historical canal landscapes. Tracing the formation of the Jiangnan Canal landscape system reveals that terrain and hydrographic elements constitute key geographical drivers. Therefore, building on the spatial-cultural attributes of historical landscapes and the characteristics of panchromatic data, while accounting for the aforementioned complexities, we propose Geo-SegFormer: a semantic segmentation method for historical landscapes that integrates deep learning with multimodal geospatial data (Fig. 4). The remainder of this section details the proposed method.

The framework comprises three components: differential three-channel CLAHE enhancement for panchromatic imagery, multimodal geographic data fusion, and adaptation and modification of SegFormer.

Differential three-channel CLAHE enhancement for panchromatic imagery

To address the single-band limitations of panchromatic remote sensing imagery and better adapt to pretrained model input structures, this study designs a differential three-channel CLAHE (Contrast Limited Adaptive Histogram Equalization) enhancement strategy.

Channel 1: Uses normalized orthoimagery data to preserve original surface texture information, serving as the baseline channel for multispectral synthesis.

Channel 2: Applies the standard CLAHE algorithm42 to normalized orthoimagery with a clip limit of 2.0. The output is:

$${C}_{2}(x,y)=255\times \frac{{\sum }_{k=0}^{I(x,y)}{h}_{\mathrm{clip}}(k)}{{\sum }_{k=0}^{255}{h}_{\mathrm{clip}}(k)}$$

(1)

where \({\rm{I}}({\rm{x}},{\rm{y}})\) is the normalized pixel value and \({{\rm{h}}}_{{\rm{clip}}}({\rm{k}})\) is the clipped histogram. This step enhances the high-frequency texture of the imagery, improving the local contrast and edge structural integrity of landscape elements, while strengthening visual separability between landscape patches and discriminability of linear features such as canal systems and road networks.

Channel 3: First compresses the brightness of the normalized orthoimagery by a factor of 0.8, then applies CLAHE enhancement.

$${C}_{3}(x,y)={\rm{CLAHE}}(0.8\times I(x,y))$$

(2)

This approach mitigates overexposure and noise risks from excessive bright-region histogram stretching during enhancement. It selectively enhances mid-low frequency information in shadow regions and other low-reflection, weak-texture areas such as mountains, farmlands, and lakes. These operations significantly improve discrimination among landscape types with similar textures but distinct cultural semantics.

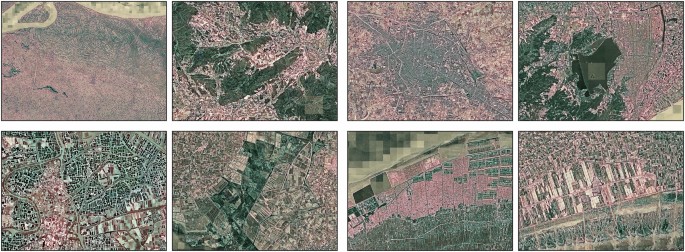

At this stage, the panchromatic imagery is enhanced from a single-band to a three-band TIFF format. Given spectral heterogeneity in frequency domains among landscape types (e.g., high-frequency edges, mid-frequency patches, low-frequency homogeneous areas)43,44, this processing enhances spectral differences between landscape types. After RGB pseudo-color mapping of the merged imagery, key landscape elements, including settlements, polders, dyke-ponds, lakes, marshes, and mountains, become distinguishable by color (Fig. 5).

The figure shows pseudo-colour composites generated from the enhanced panchromatic imagery, where different landscape types appear in contrasting hues.
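The three channels described above can be sketched as follows. This is a simplified illustration rather than the authors' implementation: it applies the clipped-histogram mapping of Eq. (1) to one global histogram, whereas full CLAHE operates on local tiles and interpolates between them. The function names, the convention of clipping at `clip_limit` times the mean bin count, and the reuse of a clip limit of 2.0 for Channel 3 are assumptions.

```python
import numpy as np

def clipped_equalize(img_u8, clip_limit=2.0):
    """Global clipped-histogram equalization in the spirit of Eq. (1).

    Simplification: one histogram for the whole image (no tiling).
    """
    hist = np.bincount(img_u8.ravel(), minlength=256).astype(np.float64)
    # Assumed convention: clip bins at clip_limit x mean bin count and
    # redistribute the clipped excess uniformly over all 256 bins.
    ceiling = clip_limit * hist.mean()
    excess = np.clip(hist - ceiling, 0, None).sum()
    h_clip = np.minimum(hist, ceiling) + excess / 256.0
    cdf = np.cumsum(h_clip) / h_clip.sum()        # ratio of sums in Eq. (1)
    lut = np.round(255.0 * cdf).astype(np.uint8)  # grey level -> output value
    return lut[img_u8]

def three_channel_enhance(pan_u8):
    """Build the three-band image from a single-band uint8 panchromatic array."""
    c1 = pan_u8                                    # Channel 1: original texture
    c2 = clipped_equalize(pan_u8, clip_limit=2.0)  # Channel 2: Eq. (1)
    darker = np.round(0.8 * pan_u8.astype(np.float64)).astype(np.uint8)
    c3 = clipped_equalize(darker, clip_limit=2.0)  # Channel 3: Eq. (2)
    return np.stack([c1, c2, c3], axis=-1)

# Synthetic stand-in for a panchromatic tile.
rng = np.random.default_rng(0)
pan = rng.integers(0, 256, size=(64, 64), dtype=np.uint8)
enhanced = three_channel_enhance(pan)              # shape (64, 64, 3)
```

In practice a tiled CLAHE routine from an image-processing library would replace `clipped_equalize`; the channel layout stays the same.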

Multimodal geographic data fusion

To enhance the accuracy of historical landscape identification, this study utilized terrain and hydrological data as additional inputs. This design is not a simple stacking of data, but is based on a deep understanding of the landscape evolution mechanism: in the Jiangnan Canal area, the terrain elevation and the distribution of the canal water system directly determine the spatial differentiation of landscape types. The height of the terrain dominates the distribution of water sources, forming different landform units, while the canal water conservancy further shapes various types of polder and settlement landscapes.

At the technical implementation level, the study normalized the DEM and binarized the water network data to eliminate sensor differences. These processed data were stacked with the three-channel panchromatic image to form a five-channel input dataset, which was fed into the SegFormer model for high-dimensional fusion. This process simplifies data flow and improves fusion efficiency. The SegFormer encoder first extracts multi-scale feature embeddings with global semantics from the multi-channel data, and the MLP decoder then performs nonlinear fusion of these embeddings in high-dimensional space. This achieves spatial and semantic alignment and aggregation of cross-scale features, rather than a shallow superposition of information from each channel. This simple design is the key to achieving efficient semantic segmentation.
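A minimal sketch of the five-channel stacking step is given below. The function name, the min-max normalization of the DEM, and the 0.5 threshold for binarizing the water raster are assumptions made for illustration; the text only states that the DEM is normalized and the water network binarized.

```python
import numpy as np

def build_five_channel_input(enhanced, dem, water, water_threshold=0.5):
    """Stack 3 enhanced bands + normalized DEM + binary water mask -> (H, W, 5)."""
    img = enhanced.astype(np.float32) / 255.0           # scale bands to [0, 1]
    dem = dem.astype(np.float32)
    span = dem.max() - dem.min()
    # Assumed min-max normalization; a flat DEM maps to all zeros.
    dem_norm = (dem - dem.min()) / span if span > 0 else np.zeros_like(dem)
    water_bin = (water > water_threshold).astype(np.float32)  # 1 = water
    return np.concatenate(
        [img, dem_norm[..., None], water_bin[..., None]], axis=-1
    )

# Tiny synthetic rasters that share one grid.
enhanced = np.zeros((4, 4, 3), dtype=np.uint8)
dem = np.arange(16, dtype=np.float32).reshape(4, 4)
water = np.eye(4)
x = build_five_channel_input(enhanced, dem, water)      # shape (4, 4, 5)
```

All rasters must be co-registered on the same grid before stacking, since the model treats the five values at each pixel as one input vector.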

SegFormer architecture

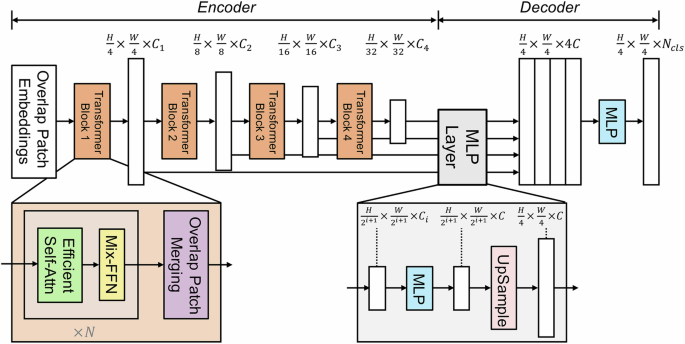

SegFormer is a Transformer-based semantic segmentation model. As illustrated in Fig. 6, it comprises a hierarchical Transformer encoder that omits positional encoding and a lightweight All-MLP decoder. This design enables the model to achieve a favorable trade-off between global semantic understanding and local detail preservation through multi-scale feature aggregation and computational efficiency optimization13.

The image is taken from ref. 13. The framework comprises a hierarchical Transformer encoder and a lightweight All-MLP decoder.

In the hierarchical transformer encoder, the key advantage is its ability to simultaneously capture high-resolution fine details and low-resolution global contextual information, enabling the model to achieve both high accuracy and efficiency in segmentation at both macro and micro levels. It abandons the reliance on fixed positional encodings found in traditional Vision Transformers (ViTs) and instead constructs multi-scale feature representations through three core components—Overlapped Patch Embedding (OPE), Efficient Multi-head Self-Attention (EMSA), and Mixed Feed-Forward Network (Mix-FFN)—working in concert, with the design of the self-attention mechanism being particularly crucial.

The self-attention mechanism is capable of capturing both high-resolution local details and low-resolution global context. However, its quadratic computational complexity with respect to input size \({\mathscr{O}}\left({{\rm{N}}}^{2}\right)\) poses a major bottleneck for processing high-resolution images.

$$Attention\,\left(Q,K,V\right)=Softmax\left(\frac{Q{K}^{T}}{\sqrt{{d}_{{head}}}}\right)V$$

(3)

Here, \(Q,K,V\in {{\mathbb{R}}}^{N\times C}\) are the Query, Key, and Value matrices, \(N=H\times W\) denotes the total number of image patches, and \({d}_{{head}}\) represents the dimension of each attention head. To relieve this bottleneck, the EMSA module applies a preset reduction ratio R (64, 16, 4, and 1 across the four stages), leveraging convolutional downsampling to compress the sequence length and thereby reducing the computational complexity to \({\mathscr{O}}\left(\frac{{{\rm{N}}}^{2}}{{\rm{R}}}\right)\).
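To give a sense of scale, the sketch below counts attention-score entries per stage with and without the reduction ratio. The stage strides of 4, 8, 16, and 32 follow the original SegFormer design and are assumed to be unchanged here; the function name and the 512 × 512 input are illustrative.

```python
def attention_costs(height, width, strides=(4, 8, 16, 32), ratios=(64, 16, 4, 1)):
    """Count query-key pairs per encoder stage, before and after reduction."""
    rows = []
    for stride, r in zip(strides, ratios):
        n = (height // stride) * (width // stride)  # tokens N at this stage
        rows.append({
            "R": r,
            "tokens": n,
            "full": n * n,           # O(N^2): every token attends to every token
            "reduced": n * n // r,   # O(N^2 / R): key/value sequence shortened by R
        })
    return rows

costs = attention_costs(512, 512)
for row in costs:
    print(row)
```

For a 512 × 512 input the first stage has 16,384 tokens, so the reduction ratio of 64 cuts the score matrix from about 268 million entries to about 4.2 million. These are counts of score entries, not exact FLOPs.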

Moreover, the Overlapped Patch Embedding (OPE) in the encoder employs an overlapping convolutional downsampling strategy, where the kernel size K, stride S, and padding P are carefully configured to ensure that adjacent image patches overlap at their boundaries. This effectively mitigates the loss of local continuity caused by non-overlapping patch partitioning in conventional models, thereby enhancing the joint representation of local details and global structure. Meanwhile, the Mixed Feed-Forward Network (Mix-FFN) completely discards positional encoding and instead explicitly captures local spatial information through depth-wise convolutions. This design avoids the performance degradation typically caused by interpolating positional encodings when the input resolution at test time differs from that during training, enabling the model to handle inputs of arbitrary sizes seamlessly.
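The effect of K, S, and P on the feature-map size can be checked with the standard convolution output formula. The values used below (K = 7, S = 4, P = 3 for the first stage and K = 3, S = 2, P = 1 for later stages) are those of the original SegFormer paper and are assumed, not confirmed, to be the ones used in this study; the function names are illustrative.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a convolution along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1

# (K, S, P) per stage, as in the original SegFormer configuration (assumed here).
STAGES = [(7, 4, 3), (3, 2, 1), (3, 2, 1), (3, 2, 1)]

def stage_sizes(size):
    """Feature-map side length after each overlapped patch embedding."""
    sizes = []
    for kernel, stride, padding in STAGES:
        size = conv_out(size, kernel, stride, padding)
        sizes.append(size)
    return sizes

# Neighbouring patches share K - S pixels along each axis.
overlaps = [k - s for k, s, _ in STAGES]
sizes_512 = stage_sizes(512)
sizes_500 = stage_sizes(500)
```

Because K exceeds S at every stage, adjacent patches overlap, and the same formula applies to inputs such as 500 × 500 that are not multiples of 32.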

The decoding process is handled by the lightweight All-MLP decoder. The MLP performs nonlinear fusion of high-dimensional data from each channel, enabling thorough integration and effective utilization of information across different dimensions and channels, while the upsampling and downsampling design further enhances fusion efficiency. Owing to the larger effective receptive field (ERF) provided by the hierarchical Transformer encoder, the decoder of SegFormer can be simplified into a lightweight, fully MLP-based structure, eliminating the need for complex upsampling modules or fine-grained hyperparameter tuning. Its high-dimensional nonlinear fusion proceeds in four steps. First, the four multi-scale feature maps output by the encoder are linearly projected into a common dimension. Second, all feature maps are upsampled via bilinear interpolation to one-quarter of the input resolution to achieve cross-scale spatial alignment. Third, the aligned features are concatenated along the channel dimension and passed through a 1 × 1 convolution (i.e., a linear layer) to reduce channel dimensionality, thereby lowering computational cost while fusing semantic information across scales. Finally, another MLP (or linear layer) nonlinearly integrates the fused high-dimensional features to directly produce the semantic segmentation prediction map. This design efficiently realizes nonlinear multi-scale feature fusion in a high-dimensional space, achieving a balance between performance and simplicity.

$$\hat{{\rm{Y}}}={{\rm{W}}}_{2}{\rm{\sigma }}\left({{\rm{W}}}_{1}[\uparrow ({{\rm{F}}}_{1}),\uparrow ({{\rm{F}}}_{2}),\uparrow ({{\rm{F}}}_{3}),\uparrow ({{\rm{F}}}_{4})]\right)$$

(4)

\({{\rm{F}}}_{{\rm{i}}}\) denotes the feature map from the i-th encoder stage, \(\uparrow (.)\) represents bilinear upsampling to one-quarter of the input resolution, \([.]\) denotes channel-wise concatenation, \({{\rm{W}}}_{1}\) and \({{\rm{W}}}_{2}\) are learnable linear transformations (implemented as 1 × 1 convolutions), and \({\rm{\sigma }}\) is a nonlinear activation function.
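The data flow of Eq. (4) can be traced at the level of array shapes with the sketch below. It is not the authors' decoder: nearest-neighbour repetition stands in for bilinear upsampling, ReLU is assumed for \({\rm{\sigma }}\), weights are random, and the embedding size of 64 and the six output classes are hypothetical. The stage widths (32, 64, 160, 256) are those of the smallest original SegFormer encoder.

```python
import numpy as np

def upsample_nearest(feat, factor):
    """Repeat pixels along both spatial axes (stand-in for bilinear upsampling)."""
    return feat.repeat(factor, axis=0).repeat(factor, axis=1)

def all_mlp_decode(features, proj, w1, w2):
    """features[i]: (H_i, W_i, C_i) arrays, ordered from finest to coarsest."""
    target = features[0].shape[0]                 # 1/4 of the input resolution
    aligned = []
    for f, p in zip(features, proj):
        f = f @ p                                 # project to a common dimension
        aligned.append(upsample_nearest(f, target // f.shape[0]))
    fused = np.concatenate(aligned, axis=-1)      # [.] channel-wise concatenation
    hidden = np.maximum(fused @ w1, 0.0)          # sigma(W1 ...), ReLU assumed
    return hidden @ w2                            # W2 -> per-pixel class scores

rng = np.random.default_rng(0)
chans, sizes = (32, 64, 160, 256), (16, 8, 4, 2)  # as for a 64 x 64 input
embed_dim, num_classes = 64, 6                    # hypothetical sizes
features = [rng.normal(size=(s, s, c)) for s, c in zip(sizes, chans)]
proj = [rng.normal(size=(c, embed_dim)) * 0.01 for c in chans]
w1 = rng.normal(size=(4 * embed_dim, embed_dim)) * 0.01
w2 = rng.normal(size=(embed_dim, num_classes)) * 0.01
logits = all_mlp_decode(features, proj, w1, w2)   # shape (16, 16, 6)
```

A matrix product over the last axis is equivalent to a 1 × 1 convolution, which is why the linear layers in Eq. (4) can be applied independently at every pixel.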

Adaptation and modification of SegFormer

First, we introduce the Dynamic Channel Scaling mechanism. The original SegFormer architecture is designed for three-channel input, whereas in this study the input data has a variable number of channels; therefore, the model needs to be equipped with the capability to dynamically adapt to different channel counts. To effectively utilize the pre-trained model while accommodating these multi-channel inputs, this study proposes a systematic approach for input adaptation and pre-trained weight transfer. This mechanism preserves the representational capacity of the pre-trained parameters, while allowing the model architecture to be flexibly adapted to the specific task.

To begin, the input channels are dynamically allocated. In the newly constructed convolutional kernel matrix, the first \({{\rm{C}}}_{0}\) channels can directly reuse the pre-trained weights from the original model:

$${{\rm{W}}}_{{\rm{new}}}\left[:,:{{\rm{C}}}_{0},:,:\right]={{\rm{W}}}_{{\rm{old}}}$$

(5)

\({{\rm{W}}}_{{\rm{old}}}\) is the original first-layer weight, and \({{\rm{W}}}_{{\rm{new}}}\) is the new first-layer convolution kernel matrix. In cases where the input channel count is fewer than three (e.g., grayscale imagery), we employ the following adaptation strategy: the available channel weights are replicated to fill the first three dimensions, while the remaining dimensions are initialized with low-variance weights using He initialization45, approximating the distribution of natural image channels.

For input modalities with more than three channels, we propose two complementary strategies to effectively incorporate the additional information while preserving the knowledge encoded in the pre-trained weights.

Channel Weight Replication: This strategy is particularly applicable when the additional input channels share semantic or spectral similarity with the original channels. For instance, in multimodal imagery where channels such as water bodies or vegetation exhibit spectral characteristics similar to the blue or green channels, the weights of the existing channels can be directly replicated to initialize the new ones:

$${{\rm{W}}}_{{\rm{new}}}\left[:,{{\rm{C}}}_{0}\!:,:,:\right]={{\rm{W}}}_{{\rm{old}}}\left[:,{{\rm{C}}-{\rm{C}}}_{0}\!:,:,:\right]$$

(6)

Channel-wise He Initialization with Scaled Variance: When the additional channels represent new modalities (such as Digital Elevation Model (DEM) or enhanced feature maps) that lack a direct correspondence to the original input channels, simple weight replication may introduce undesirable bias. In such cases, the weights for the new channels are initialized using the Kaiming He initialization to ensure a statistically appropriate distribution. An empirical scaling factor \(\gamma {=}\sqrt{\frac{2}{1+\frac{1}{\pi }}}\) is introduced to enhance the stability of the initial weight distribution. This factor is motivated by a modified form of He initialization, specifically adapted to maintain consistent variance across layer activations under multi-channel input conditions during forward propagation:

$${{\rm{W}}}_{\mathrm{new}}\left[:,{{\rm{C}}}_{0}\!:{\rm{C}},:,:\right]\leftarrow {{\rm{W}}}_{\mathrm{new}}\left[:,{{\rm{C}}}_{0}\!:{\rm{C}},:,:\right]\cdot {\rm{\gamma }}$$

(7)
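The reuse of pre-trained weights for the first \({{\rm{C}}}_{0}\) channels and the scaled He initialization of Eq. (7) can be sketched as follows. This is an illustration under stated assumptions, not the authors' code: the function name is hypothetical, the He standard deviation is computed from the fan-in of the expanded kernel, and a random array stands in for real pre-trained weights.

```python
import math
import numpy as np

# Scaling factor gamma as defined in the text (about 1.23).
GAMMA = math.sqrt(2.0 / (1.0 + 1.0 / math.pi))

def expand_first_conv(w_old, c_new, rng):
    """Expand a first-layer kernel (out, C0, kh, kw) to c_new input channels."""
    out_ch, c0, kh, kw = w_old.shape
    if c_new < c0:
        raise ValueError("this sketch only covers c_new >= C0")
    w_new = np.empty((out_ch, c_new, kh, kw), dtype=w_old.dtype)
    w_new[:, :c0] = w_old                          # first C0 channels reuse W_old
    if c_new > c0:
        fan_in = c_new * kh * kw                   # assumed fan-in definition
        std = math.sqrt(2.0 / fan_in)              # He (Kaiming) normal std
        extra = rng.normal(0.0, std, size=(out_ch, c_new - c0, kh, kw))
        w_new[:, c0:] = (extra * GAMMA).astype(w_old.dtype)  # Eq. (7)
    return w_new

rng = np.random.default_rng(0)
w_old = rng.normal(size=(32, 3, 7, 7)).astype(np.float32)  # stand-in weights
w_new = expand_first_conv(w_old, 5, rng)                   # 3 image + DEM + water
```

With five input channels, the first three kernels slices are copied unchanged and only the DEM and water slices start from random values.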

Second, we implement dynamic weight loading and parameter migration in the model. The parameters of the pre-trained SegFormer model are only suitable for three-channel input, whereas the new model supports a variable number of channels, so the parameters must be made channel-compatible. In transfer learning, aligning the model architecture with the pre-trained weights is a critical task. In particular, after modifications to key components such as the input channels, classification head, and decoder structure, the original pre-trained parameters can no longer be applied in their entirety. Naively loading these weights would result in dimension mismatches, gradient anomalies, or even training failure. To address this challenge, we propose a structural compatibility screening mechanism. It identifies and maps the subset of pre-trained parameters that remain compatible with the adapted model structure, ensuring stable initialization and preserving the knowledge encoded in the original weights.

The structural compatibility mapping can be formulated as:

$${{\rm{\theta }}}_{\mathrm{compatible}}=\left\{\left(k,\nu \right)\in {{\rm{\theta }}}_{\mathrm{pre}}\mid k\in {{\rm{\theta }}}_{\mathrm{custom}}\wedge \mathrm{shape}(\nu )=\mathrm{shape}({{\rm{\theta }}}_{\mathrm{custom}}[k])\right\}$$

(8)

where \({{\rm{\theta }}}_{\mathrm{pre}}\) is the parameter set of the original pre-trained model, and \({{\rm{\theta }}}_{\mathrm{custom}}\) is the parameter set of the current custom model initialization. That is, only parameters with both identical key names and matching tensor dimensions are retained. Subsequently, the compatible parameters are transferred to the corresponding layers of the customized model:

$${{\rm{\theta }}}_{{\rm{custom}}}[{\rm{k}}]\leftarrow {{\rm{\theta }}}_{{\rm{pre}}}[{\rm{k}}],\forall {\rm{k}}\in {{\rm{\theta }}}_{{\rm{compatible}}}$$

(9)
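Eqs. (8) and (9) amount to filtering a parameter dictionary by key name and tensor shape. The sketch below uses plain NumPy arrays in place of framework tensors; the function name, the parameter keys, and the array shapes are hypothetical and chosen only to show one compatible and two incompatible entries.

```python
import numpy as np

def load_compatible(pretrained, custom):
    """Copy pretrained entries whose key and shape both match the custom model."""
    compatible = {
        k: v for k, v in pretrained.items()
        if k in custom and v.shape == custom[k].shape      # Eq. (8)
    }
    merged = dict(custom)
    merged.update(compatible)                              # Eq. (9)
    skipped = sorted(set(pretrained) - set(compatible))    # left at custom init
    return merged, skipped

# Hypothetical parameter dictionaries (names and shapes are illustrative).
pretrained = {
    "encoder.patch_embed1.proj.weight": np.zeros((32, 3, 7, 7)),
    "encoder.block1.norm.weight": np.ones(32),
    "decode_head.classifier.weight": np.zeros((150, 256, 1, 1)),
}
custom = {
    "encoder.patch_embed1.proj.weight": np.full((32, 5, 7, 7), 0.5),
    "encoder.block1.norm.weight": np.zeros(32),
    "decode_head.classifier.weight": np.zeros((6, 256, 1, 1)),
}
merged, skipped = load_compatible(pretrained, custom)
```

Here only the normalization weight is transferred; the five-channel input kernel and the resized classifier keep their custom initialization, and reporting the skipped keys makes it easy to confirm that nothing else was dropped unintentionally.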