As quantum computing matures, integrating its techniques into existing workflows remains a challenge for non-experts. Silvie Illésová, Tomáš Bezděk and colleagues at the Gran Sasso Science Institute are tackling this problem with a practical framework that guides users from standard classical machine learning to a hybrid quantum-enhanced approach. Their method starts with a classical self-training model, introduces minimal quantum components, and, importantly, uses diagnostic feedback to refine the hybrid architecture. In experiments on the Iris dataset, accuracy rises from 0.31 to 0.87, suggesting that even small quantum enhancements, if properly guided, can substantially improve machine learning performance for practitioners without deep quantum computing expertise.

The study begins with a classical self-training model, specifically partial least squares regression applied to the Iris dataset, whose 150 samples with four features each are first assigned arbitrary labels. The model is then refined iteratively, with labels updated based on cross-validation accuracy over up to 20 iterations, which establishes a baseline for comparison. The team then designed a minimal hybrid model, called Quantum-FAST, that mirrors the classical structure but incorporates quantum components.

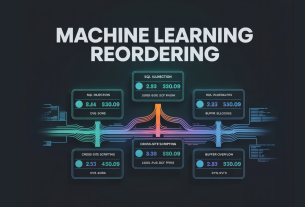

The input data first undergoes principal component analysis, which reduces the four features to a compact representation suitable for quantum encoding. The reduced data is fed into a quantum neural network, a 2-qubit EstimatorQNN, followed by a small classical neural network that maps the quantum outputs to label predictions. The design aims to show that improvement is possible while keeping quantum resource usage to a minimum. To evaluate and refine the hybrid model, the study uses QMetric, a toolkit that analyzes training behavior, feature space properties, and quantum circuit properties.
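To make the quantum layer more concrete, here is a hand-rolled statevector sketch of a 2-qubit parameterized circuit. It does not use Qiskit's EstimatorQNN and is not the authors' circuit: the RY angle encoding (one reduced feature per qubit), the single CNOT, the trainable RY layer, and the Pauli-Z readout on the second qubit are all assumptions chosen for illustration, and every function name is hypothetical.

```python
import math


def ry(theta):
    """2x2 matrix of a rotation about the Y axis."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]


def apply_1q(state, gate, qubit):
    """Apply a 2x2 gate to one qubit of a state [a00, a01, a10, a11]."""
    new = [0.0] * 4
    for idx, amp in enumerate(state):
        bits = [(idx >> 1) & 1, idx & 1]  # index = 2 * q0 + q1
        for out in (0, 1):
            tgt = bits.copy()
            tgt[qubit] = out
            new[2 * tgt[0] + tgt[1]] += gate[out][bits[qubit]] * amp
    return new


def cnot(state):
    """CNOT with qubit 0 as control and qubit 1 as target."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def expect_z1(state):
    """Expectation value of Pauli-Z on qubit 1 (amplitudes are real here)."""
    return sum((1 if (i & 1) == 0 else -1) * a * a for i, a in enumerate(state))


def tiny_qnn(features, weights):
    """Encode two features, entangle, rotate by trainable weights, read out <Z>."""
    state = [1.0, 0.0, 0.0, 0.0]  # start in |00>
    for q in (0, 1):
        state = apply_1q(state, ry(features[q]), q)  # angle-encode the inputs
    state = cnot(state)
    for q in (0, 1):
        state = apply_1q(state, ry(weights[q]), q)  # trainable rotations
    return expect_z1(state)


print(tiny_qnn([0.3, 1.1], [0.0, 0.0]))
```

In a hybrid model of the kind described, a scalar like this would be passed to a small classical network that produces class scores; with the trainable weights set to zero, this particular circuit reduces to the product of the cosines of the two encoded features.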

This analysis provides targeted feedback that guides modifications to the minimal hybrid model, improving its training characteristics and addressing identified weaknesses. The researchers demonstrated the workflow on the Iris dataset, with an implementation built on Qiskit, Qiskit Machine Learning, PyTorch, and scikit-learn, and compared the performance of a classical model, an initial minimal hybrid model, and a refined hybrid model. Accuracy rises from 0.31 for the classical approach to 0.87 for the hybrid quantum approach, demonstrating the framework's potential to enhance class separation and representation capabilities in hybrid learning.

Hybrid learning with quantum diagnostics improves accuracy

This work demonstrates a practical route for practitioners with classical machine learning expertise to incorporate quantum computational elements into their models without prior quantum knowledge. The team developed a three-stage framework: it starts with a classical self-training model, then introduces a minimal hybrid quantum-classical variant, and finally refines the hybrid architecture by applying diagnostic feedback from a tool called QMetric. Experiments on the Iris dataset show that this approach improves accuracy from 0.31 to 0.87, indicating that even small quantum contributions can significantly enhance learning and improve data representation when guided by appropriate diagnostics.

Further analysis using QMetric showed that the refined hybrid model not only achieved higher accuracy, but also exhibited stronger determinism in label assignment and more global entanglement within the quantum circuit. Importantly, these expressivity improvements were achieved without compromising the stability of training or running into problems such as barren plateaus. The team proposes an iterative refinement loop in which diagnostic feedback guides the gradual evolution of classical models into increasingly effective hybrid designs, replacing a trial-and-error approach to model architecture. The authors acknowledge that the current study focuses on a single dataset and model configuration, and future research is likely to explore how well the framework generalizes to more complex datasets and machine learning tasks.
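One common way to quantify "global entanglement" in a circuit's output state is the Meyer-Wallach measure. The sketch below computes it for a 2-qubit pure state. Whether QMetric uses exactly this measure is an assumption, and the function names are hypothetical rather than part of any library.

```python
import math


def reduced_purity(p, q, off):
    """Purity Tr(rho^2) of a 2x2 density matrix [[p, off], [conj(off), q]]."""
    return p * p + q * q + 2 * abs(off) ** 2


def meyer_wallach_2q(state):
    """Meyer-Wallach global entanglement of a normalized 2-qubit pure state.

    `state` lists the amplitudes [a00, a01, a10, a11], indexed as (qubit 0, qubit 1).
    """
    a00, a01, a10, a11 = (complex(a) for a in state)
    # Reduced state of qubit 0 (qubit 1 traced out).
    purity0 = reduced_purity(
        abs(a00) ** 2 + abs(a01) ** 2,
        abs(a10) ** 2 + abs(a11) ** 2,
        a00 * a10.conjugate() + a01 * a11.conjugate(),
    )
    # Reduced state of qubit 1 (qubit 0 traced out).
    purity1 = reduced_purity(
        abs(a00) ** 2 + abs(a10) ** 2,
        abs(a01) ** 2 + abs(a11) ** 2,
        a00 * a01.conjugate() + a10 * a11.conjugate(),
    )
    # Q = 2 * (1 - average single-qubit purity); 0 for product states.
    return 2.0 * (1.0 - (purity0 + purity1) / 2.0)


product = [1.0, 0.0, 0.0, 0.0]  # |00>, no entanglement
bell = [1 / math.sqrt(2), 0.0, 0.0, 1 / math.sqrt(2)]  # maximally entangled
print(meyer_wallach_2q(product), meyer_wallach_2q(bell))
```

The measure is 0 for product states and reaches 1 (up to floating-point rounding) for a Bell state, so a higher value after refinement would be consistent with the "more global entanglement" reported for the refined hybrid model.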