Nvidia recently published a research paper on Neural Texture Compression (NTC), a new technique that promises to reduce VRAM usage by up to 85% without sacrificing quality.

This comes as Nvidia admitted that VRAM usage was spiraling out of control due to consumers demanding photo-realistic graphics.

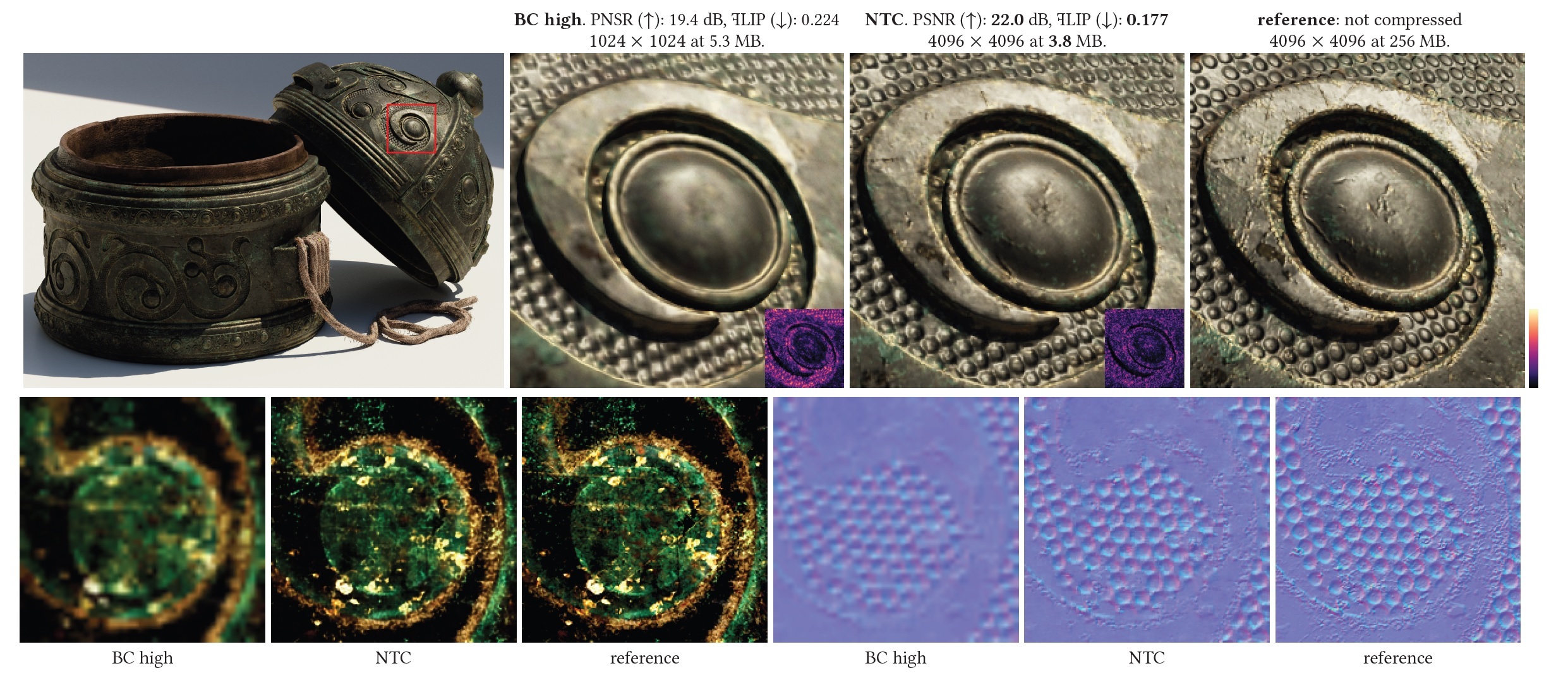

The paper is quite technical, but it looks at how textures can be encoded rather than stored at full resolution. The approach is based on machine learning and uses a neural network to reconstruct the image from the encoded data, which also cuts the size of the texture. In the most extreme example Nvidia revealed, the texture was 1/24th of its original size.
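To put the two figures side by side, here is a small back-of-the-envelope sketch in Python. The 256 MiB texture budget is a made-up number for illustration, not something from the paper, and the two figures measure different things (overall VRAM usage versus the size of a single texture), so they are not expected to match.

```python
def reduction_percent(ratio: float) -> float:
    """Percent of space saved when the compressed size is original / ratio."""
    return (1.0 - 1.0 / ratio) * 100.0


def compression_ratio(percent_saved: float) -> float:
    """Compression ratio implied by a given percent reduction."""
    return 1.0 / (1.0 - percent_saved / 100.0)


# Hypothetical texture budget, chosen only for illustration.
original_mib = 256.0

# "Up to 85%" reduction, as quoted for VRAM usage.
after_85 = original_mib * (1.0 - 85.0 / 100.0)
print(f"85% reduction: {original_mib:.0f} MiB -> {after_85:.1f} MiB "
      f"(about {compression_ratio(85.0):.1f}:1)")

# The most extreme texture example: 1/24th of the original size.
after_24 = original_mib / 24.0
print(f"1/24th size:   {original_mib:.0f} MiB -> {after_24:.1f} MiB "
      f"(about {reduction_percent(24.0):.1f}% smaller)")
```

In other words, a texture at 1/24th of its original size is roughly 95.8% smaller, while an 85% reduction corresponds to a ratio of roughly 6.7:1.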

One of the main points is that this method does not use any generative algorithms and is completely deterministic: no random elements are involved, so the same input always produces the same output. The encoding and neural processing are done in the Matrix Engine, which is driven by Tensor Cores, so the performance of regular CUDA cores is not affected. This also means that the latest RTX 50 series cards should theoretically be able to support it as soon as game developers start implementing it.
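The idea of a deterministic neural decoder can be illustrated with a toy Python sketch. This is not Nvidia's network or file format: the layer sizes, the weights, and the `decode_texel` function are all hypothetical, made up purely to show that a fixed network with no sampling step maps the same encoded input to the same color every time.

```python
# Toy decoder: 4 encoded values -> 3 hidden units (ReLU) -> RGB in [0, 1].
# All weights below are invented for illustration; a real system would
# learn them for each texture.
W1 = [
    [0.50, -0.25, 0.75, 0.10],
    [0.90, -0.40, -0.30, 0.20],
    [0.60, 0.80, -0.60, 0.05],
]
B1 = [0.05, 0.10, -0.05]
W2 = [
    [0.70, -0.20, 0.30],
    [0.10, 0.60, -0.10],
    [-0.30, 0.40, 0.50],
]
B2 = [0.10, 0.05, 0.20]


def decode_texel(latent):
    """Reconstruct one RGB texel from its encoded (latent) values.

    There is no random sampling anywhere in this function, so the
    result depends only on the input and the fixed weights.
    """
    hidden = [
        max(0.0, sum(w * x for w, x in zip(row, latent)) + b)
        for row, b in zip(W1, B1)
    ]
    return [
        min(1.0, max(0.0, sum(w * h for w, h in zip(row, hidden)) + b))
        for row, b in zip(W2, B2)
    ]


encoded = [0.2, 0.8, 0.5, 0.1]  # hypothetical encoded values for one texel
first = decode_texel(encoded)
second = decode_texel(encoded)
print(first == second)  # the same input gives the same output
```

A generative model, by contrast, would typically draw on random noise during reconstruction, so two runs over the same input could differ.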