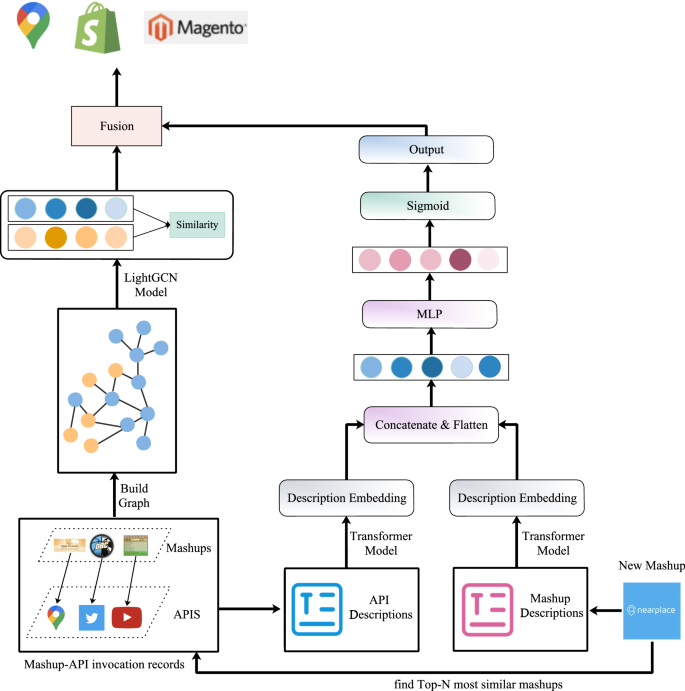

For service recommendation, there are three steps. Firstly, we build the recommendation model; Secondly, the recommendation model will be trained against the invocation records of services and mashups gathered from ProgrammableWeb. After that, developers or users submit their requirements which described in natural language, and the candidate collection of services will be generated. The framework of this paper is demonstrated in Fig. 2. In this section, we will discuss our method in detail.

The framework of MSRDL. The figure is created using ORIGINLAB ver.2023 (https://www.originlab.com/).

Problem definition

Definition 1

(Mashup) A mashup m represents the composition of one or many web services into one single application, formalized as a 4-tuple, i.e., \(m=<m_n; m_{des};(s_1,s_2…s_n);(m_{t1},m_{t2},…,m_{tj})>\), where \(m_n\), \(m_{des}\), \((m_{t1},m_{t2},…,m_{tj})\) represent the name, text description and tag sets of m and \((s_1,s_2…s_n)\) represents the service record invoked by m.

Definition 2

(Service) A Service s is a structure based on programming language, which makes it easier for developers to create complex functions, formalized as a 3-tuple, i.e., \(s=<s_n;s_{des};(s_{t1},s_{t2},…,s_{ti})>\), where \(s_n\), \(s_{des}\), \((s_{t1},s_{t2},…,s_{ti})\) represent the name, text description and tag sets of s, respectively.

Definition 3

(Mashup-service invocation) Given a set of mashups as \(M =\{m_1,m_2..m_q\}\) and a set of services as \(S =\{s_1,s_2…s_p\}\), A Mashup-Service Invocation matrix \(Y\in R^{q\times p}\) is defined according to the service invocation by mashups. For mashup m and service s, \(y(m,s) = 1\) means that s is invoked by m, and \(y(m,s) = 0\) if otherwise.

Problem definition Given the mashup service history invocation records and the text information about mashups and services, for a new required mashup at the moment with user queries as a collection of words, a ranked list of services will be recommended to the requesting user. Services with higher rank in the list should have higher probability to be adopted by the user than others with lower ranks.

Service recommendation

Preprocessing

When creating a new mashup \(m’\), the developer needs to enter the mashup requirements (represented by \(d_i\)) consisting of a set of phrases, sentences, or even paragraphs as the developer’s initial query for similar mashups. Such descriptions usually contain noises and cannot be used directly for model learning. To reserve the important information in the texts, the following processes are usually required.

-

1

Text Filtering: Words such as tags, punctuation marks, non-characters and stop words are usually unmeaningful. The regular expressions can be used to filter them out.

-

2

Abbreviation Replacement: Abbreviations should be replaced with their complete spellings. For example, ‘dont’ is replaced to ‘do not’.

-

3

Lemmatization: Lemmatization is the process of converting a word to its base form. The Lemmatization method is based on WorldNet’s built-in morph function. For example, the word “trees” is reduced to the word “tree”, and the word “used” is reduced to “use”.

For example, when developing a mashup named NearPlace as shown in Fig. 2, the description \(d_i\) pre-processed to the resulting word set \({d_i}’\)= {‘nearplace’, ‘free’, ‘store’, ‘locator’, ‘google’, ‘map’, ‘marker’, ‘product’, ‘user’, ‘friendly’, ‘time’, ‘advanced’, ‘widget’, ‘thanks’, ‘extremely’, ‘easy’, ‘management’, ‘always’, ‘actual’, ‘news’, ‘opening’, ‘hour’, ‘product’, ‘service’, ‘many’}.

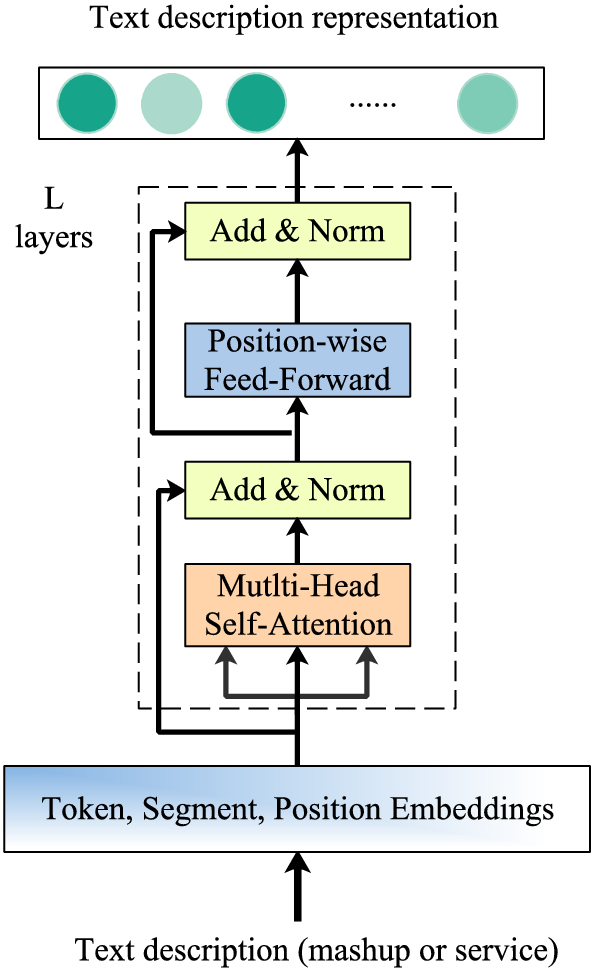

Content component (CC)

For a given input token, the corresponding embeddings including three parts, namely token, segment and position. The input representation is constructed by summing these embeddings in our study. Token embedding indicates word embedding of each word, segment segmentation is used to distinguish two sentences, and position embedding refers to encoding the position information of words. A visualization of this construction can be seen in Fig. 3. Transformer uses the task of masking language model for self-supervised training together with the task predicted in the next sentence. Masking language model refers to randomly masking 15% of sub-words in the text, replacing 80% with [MASK] label, 10% with random words, and leaving 10% unchanged. By asking the model to predict the 15% of these sub words that are covered, the model learns the vector representation of the text. The final input token representation \(E_t\) in transformer model22 is constructed by summing its corresponding token, segment and position embedding as Eq.(1).

$$\begin{aligned} E_t=E_{token}+E_{pos}+E_{seg} \end{aligned}$$

(1)

As illustrated in Fig. 3, the Word-Level Module (WLM) applies multiple Transformer Layers (TL) iteratively to compute the hidden representations at each layer for each word and propagate the matching signal at word-level simultaneously. Each TL contains two sub-layers: a Multi-Head SelfAttention sub-layer and a Position-wise Feed-Forward network.

The stage of learning representation of text description.

The Self Attention can be described as mapping a set of key-value pairs of query and key value to the output, and the output is calculated as the weighted sum of values. The weight assigned to each value is calculated by the similarity function between the query and the corresponding key This form of attention is called Scaled Dot Product Attention, and the corresponding mathematical Equation is as follows:

$$\begin{aligned} Attention(Q,K,V)=softmax(\frac{(QK^T)}{\sqrt{d_k}})V \end{aligned}$$

(2)

where Q, K and V represents the query, key and value matrix correspondingly, which are projected from \(E_t\) matrix with different learned projection matrices as in Eq.(1), and \(\frac{1}{\sqrt{d_k}}\) is a scaling factor to avoid extremely small gradients by producing a softer attention distribution. For Multi-Head Self-Attention layers, Q, K and V are first mapped through the parameter matrix, then self-attention is done, and finally the results are concatenated and sent to a fully connected layer. The calculation process is as Eq.(3) and Eq.(4):

$$\begin{aligned} head_i=Attention(E_t W_i^Q,E_t W_i^K,E_t W_i^V) \end{aligned}$$

(3)

$$\begin{aligned} MultiHead(Q,K,V)=[head_1; head_2… head_h]W^O \end{aligned}$$

(4)

where \(W^O\), \(W_i^Q\), \(W_i^K\) and \(W_i^V\) are learnable parameters, i represents \(i-th\) head. The experimental results show that multi head can extract the features of different heads at a more detailed level, and the effect of feature extraction is better when the overall computational load is the same as that of a single head. The Position-Wise Feed-Forward network is a fully connected feedforward network, and the words in each position pass through the same feedforward neural network separately. It consists of two linear transformations, that is, two fully connected layers. The activation function of the first fully connected layer is ReLU activation function, can be expressed as Eq.(5) :

$$\begin{aligned} FFN(x)=ReLU(xW_1+b_1 ) W_2+b_2 \end{aligned}$$

(5)

where \(W_1\), \(W_2\) and \(b_1\), \(b_2\) are learnable parameters and shared across all positions. The Transformer layer then employs the residual connection and layer normalization function LN around the above two sub-layers to extract the contextual representation, as Eq.(6) indicates.

$$\begin{aligned} v_m=LN(x+FFN(x)) \end{aligned}$$

(6)

The final representation of mashup and service, extracted by L stacking Transformer layers, are denoted as \(v_m\) and \(v_s\). We concatenate \(v_m\), \(v_s\), then utilize an MLP to capture interactions among \(v_m\), \(v_s\). Moreover, we select the parametric rectified linear unit (ReLU) as the activation function since it can improve model fitting with nearly zero extra computational cost and little overfitting risk. The learning process can be written as Eq.(7):

$$\begin{aligned} i_{m,s}=MLP(v_m\oplus v_s) \end{aligned}$$

(7)

where \(i_(m,s)\) is the learned vector. We finally feed the learned interaction vector into a sigmoid function, whose output, \({\hat{r}}\), represents the probability of m selecting s as the service to be recommended. The process can be written as Eq.(8) :

$$\begin{aligned} {\hat{r}}=sigmoid(W^T i_{m,s}+b) \end{aligned}$$

(8)

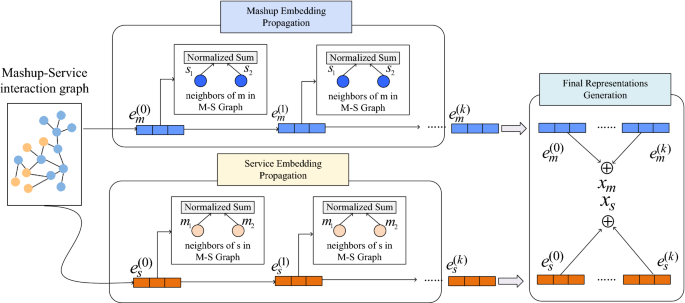

Interaction component (IC)

Through the mashup-service innovation matrix, we build a mashup service tag network graph \(G = (V,E)\), where \(V=\{v_1,v_2…v_n\}\) represents the node set, and E represents the edge set. Here, mashup set M, service set S are used to build the node set \(V = M\cup S\). For the edge set E, if an invocation between the mashup m and service s, they are connected in the graph, thus forming the edge set of \(E_{m,s}\). The mashup-service network graph is trained based on the LightGCN model23 as Fig. 4 shows. It consists of 3 parts: (1) a embedding layer, the mashups and services from their identifiers (IDs) is mapped to a dense vector with fixed dimensions, (2) multiple propagation layers, the embeddings in the mashup-service graph are propagated, and (3) final representations generation, the final representations of the mashups and services are generated by this part.

Architecture design of IC. The figure is created using ORIGINLAB ver.2023 (https://www.originlab.com/).

The embedding layer encoders a mashup m (or a service s) with a fixed-length vector \(e_m^{(0)}\in R^d\) (\(e_s^{(0)}\in R^d\)). Such a vector is called an embedding. Here d is pre-specified parameter, denoting the size of an embedding. The superscript “k” is a layer index, indicating that the embeddings are the output of the \(k-th\) propagation layer, the superscript “0” is used to indicate the output of the embedding layer. After being initialized in the embedding layer, the embeddings will be iteratively optimized in the sub-sequential training process of the LightGCN model. For a mashup m and a service s, we use the mashup-service interaction graph to update the mashup embedding outputted from the (\(k-1\))-th layer. To do this, two embeddings are generated for the mashup m and service s in the mashup-service interaction graph as Eq.(9) and Eq.(10), denoted \(x_m^{(k)}\) and \(x_s^{(k)}\).

$$\begin{aligned} x_m^{(k)}=\sum _{s\in N_m}\frac{1}{\sqrt{\vert N_m\vert } \sqrt{\vert N_s\vert }}x_s^{(k-1)} \end{aligned}$$

(9)

$$\begin{aligned} x_s^{(k)}=\sum _{m\in N_s}\frac{1}{\sqrt{\vert N_m\vert } \sqrt{\vert N_s\vert }}x_m^{(k-1)} \end{aligned}$$

(10)

Where \(N_m\) and \(N_s\) denote the mashup m’s neighborhood set and service s’s neighborhood set in mashup-service interaction graph. After being propagated through the K layers, the embeddings of the mashup m and service s are obtained, i.e., \(\{x_m^{(0} ), x_m^{(1)}… x_m^{(k)}\}\) and \(\{x_s^{(0)},x_s^{(1)} … x_s^{(k)}\}\) respectively. Based on this, the final representations of the mashup and service are generated by summing up the outputs of the K layers Eq.(11) and Eq.(12):

$$\begin{aligned} x_m= & {} \sum _{k=0}^Ka_kx_m^{(k)} \end{aligned}$$

(11)

$$\begin{aligned} x_s= & {} \sum _{k=0}^Ka_kx_s^{(k)} \end{aligned}$$

(12)

Service selection and ranking

Through the transformer model, we obtain the text requirements vectors of the mashups and services, denoted as \(v_m\) and \(v_s\) . Meanwhile, by employing the LightGCN model, we obtain the low dimensional representations of the mashup m and service s, denoted as \(x_m\) and \(x_s\). When a new mashup \(m’\) needs to be created, we calculate its representation vector using Eq.(13):

$$\begin{aligned} v_{m’}=\sum _{nm’\in N(m’)} cosine(v_{m’}, v_{nm’})\cdot x_{nm’} \end{aligned}$$

(13)

where \(nm’\) is the neighbor of the new mashup \(m’\). In this paper, we select Top-N neighbor mashups that are most similar to the new mashup \(m’\) as the final representation. \(x_{nm’}\) is the representation of the neighbor mashup obtained by the LightGCN model. The raking score of the mashup m on service s is calculated by taking the inner product of their representations as Eq.(14):

$$\begin{aligned} p_{m’}^s=\frac{\sum _{nm’_{i=1}}^Nx_{nm’_i}\cdot x_s}{N} \end{aligned}$$

(14)

where N is the number of the neighbor mashups of \(m’\), \(x_s\) is the embedding of service s that obtained by the LightGCN model. Finally, the preference of mashup m for service s is shown in Eq.(15):

$$\begin{aligned} s_{final}={\hat{r}}+p_{m’}^s \end{aligned}$$

(15)