AI tools are now accessible to people in many organizations, and their use continues to expand. Some groups rely on AI during normal business operations, while others treat it as an occasional helper. The gap between access and everyday use is at the heart of Deloitte’s new research on enterprise AI adoption.

The study is based on a global survey of more than 3,200 business and IT leaders conducted in late 2025. Respondents come from large organizations across a variety of industries and geographies. They report considerable progress over the past year, especially in access to tools and executive support. The findings also point to friction around scaling, governance, and workforce readiness.

Access grows faster than daily usage

Survey data shows an increase in authorized AI access. Approximately 6 in 10 employees now have access to approved AI tools, compared with fewer than 4 in 10 a year ago. A smaller share use these tools as part of their daily workflow. Usage patterns remain uneven across roles and functions.

Organizations report initial productivity gains in tasks such as summarization, research, and basic automation. Leaders explain that AI can help with specific steps in a process. End-to-end, day-to-day use across teams appears less common. Many respondents associated this pattern with gaps in training and workflow design, and with uncertainty around expectations.

The transition from pilot projects to production remains limited. A quarter of organizations surveyed reported moving a significant percentage of their experiments into production. More than half expect to reach that level within a few months. Respondents described integration efforts, security reviews, and compliance checks as central parts of that move.

From a security perspective, this transition introduces new operational risks. “Organizations should expect new types of attacks and failure modes to emerge, especially when it comes to exploiting authorized access outside of the intended workflow,” Ali Sarrafi, CEO of Kovant, told Help Net Security. “Security teams will need to rethink controls originally designed for human activity.”

Sarrafi said many of these risks stem from agents performing legitimate actions in the wrong circumstances. For example, an agent may have permission to query data, but a task such as booking travel requires restricting access to a single user's information. Addressing this gap requires a permissions model that adjusts dynamically based on the scope of the task.
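The task-scoped permissions idea described above can be illustrated with a minimal Python sketch. It is not drawn from the Deloitte study or from Kovant; the `TaskScope` class, the `is_allowed` function, and the action names are all hypothetical, and a real system would likely issue and expire scopes through an identity provider rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskScope:
    """Hypothetical description of what a single task is allowed to touch."""
    task: str
    user_id: str
    allowed_actions: frozenset


def is_allowed(scope: TaskScope, action: str, target_user_id: str) -> bool:
    """Permit an action only if it fits the task and targets the task's own user."""
    return action in scope.allowed_actions and target_user_id == scope.user_id


# The agent's standing grant may cover many records, but the scope
# issued for this one task narrows it to a single user (assumed names).
booking = TaskScope(
    task="book_travel",
    user_id="user-17",
    allowed_actions=frozenset({"read_profile", "create_booking"}),
)
```

With this scope in place, `is_allowed(booking, "read_profile", "user-17")` passes, while the same read against another user's record, or an action outside the booking task, is refused.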

Transformation is different for every company

The study groups organizations by how closely AI is tied to their core operations. About one-third report major changes, such as new products, process redesigns, or revised business models. The rest are updating processes or applying AI within existing workflows.

All groups report productivity gains related to day-to-day operations, but the impact on the bottom line remains limited. Leaders expect future growth from AI-driven products and services, with current gains focused on operational outcomes and decision support.

Security teams supporting these efforts face growing complexity as agent workflows span more systems. Sarrafi said the number of possible behavior patterns increases rapidly as agents connect to different tools and services, and that this behavior becomes difficult to observe at scale. He emphasized the need for external control mechanisms that can interrupt invalid activity without relying on the agent's own self-regulation. He said these controls need to be independent of the agent to prevent destructive sequences that could lead to data loss.
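One way to picture a control that sits outside the agent is a small gate that inspects each proposed action before it runs. The Python sketch below is illustrative only: `ExternalGuard`, `ActionBlocked`, `run`, and the action names are hypothetical, and the rule it enforces (a cap on destructive steps per sequence) is an assumed example policy, not one described by Sarrafi.

```python
class ActionBlocked(Exception):
    """Raised by the guard, not by the agent, when a step is refused."""


class ExternalGuard:
    """Hypothetical gate that sits between an agent and the tools it calls."""

    def __init__(self, destructive_actions, max_destructive=1):
        self.destructive_actions = set(destructive_actions)
        self.max_destructive = max_destructive
        self.destructive_seen = 0

    def check(self, action: str) -> None:
        """Count destructive steps and refuse once the limit is exceeded."""
        if action in self.destructive_actions:
            self.destructive_seen += 1
            if self.destructive_seen > self.max_destructive:
                raise ActionBlocked(f"blocked: {action}")


def run(actions, guard):
    """Execute proposed actions in order, stopping at the first blocked one."""
    done = []
    for action in actions:
        try:
            guard.check(action)
        except ActionBlocked:
            break
        done.append(action)
    return done
```

Because the counter lives in the guard rather than in the agent, a sequence that repeats a destructive step is cut off even if the agent itself sees nothing wrong with it.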

Unanswered questions remain about skills and job design

Workforce readiness is a frequent topic of discussion. Many organizations are focused on basic AI training, with little movement on job design, career paths, or role changes.

Leaders expect automation to change some parts of many jobs in the coming years. Entry-level and task-focused roles will see the shift earliest, with managers spending more time overseeing work shared between people and machines.

Sarrafi added that reliability risks extend beyond access control. He described a scenario in which an agent appears to be performing well based on internal signals that are disconnected from real-world outcomes. Without independent evaluation tied to external outcomes, organizations cannot be sure whether an agent's actions are delivering the intended value. He said a continuous evaluation mechanism that operates outside of the agent provides a way to verify results during actual operations.
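The gap between internal signals and external outcomes can be made concrete with a short Python sketch. The record fields `agent_reported_success` and `outcome_verified` and both function names are hypothetical; the example only assumes that each agent run can be checked later against some independently observed result.

```python
def success_rate(flags):
    """Share of True values; 0.0 for an empty list."""
    return sum(flags) / len(flags) if flags else 0.0


def evaluation_gap(runs):
    """Compare what the agent reported with what was verified externally.

    Each run is a dict with two hypothetical boolean fields:
    'agent_reported_success' and 'outcome_verified'.
    """
    reported = success_rate([r["agent_reported_success"] for r in runs])
    verified = success_rate([r["outcome_verified"] for r in runs])
    return {"reported": reported, "verified": verified, "gap": reported - verified}
```

A persistently positive `gap` would suggest the agent looks healthier by its own measures than its results justify, which is the situation an evaluation process outside the agent is meant to surface.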

Sovereign AI moves into board discussions

The location and control of AI development now influence purchasing and architecture decisions. The majority of surveyed companies take country of origin into consideration when selecting vendors. Many companies report building their AI stacks with local providers to meet data residency and regulatory expectations.

Sovereign AI refers to systems that are designed, trained, and deployed in accordance with local laws, using managed infrastructure and data. Respondents described this topic as part of their strategic planning discussions, especially for organizations that operate across borders. Requirements vary by region and industry, further complicating deployment options.

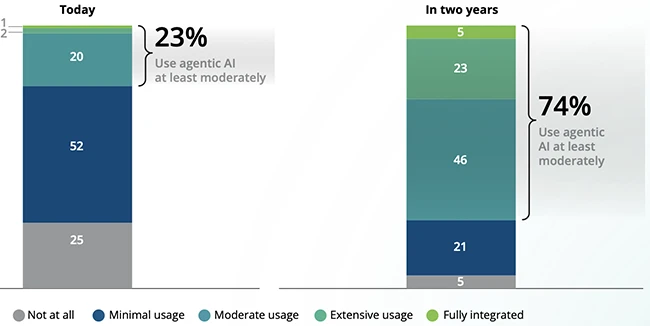

Scope of agentic AI usage (Source: Deloitte)

Agentic AI grows with governance gaps

Interest in agentic AI is growing rapidly. These systems set goals, reason through tasks, and operate through software interfaces. Almost three-quarters of companies surveyed plan to implement agentic AI within two years. Current usage remains low.

Governance maturity lags behind implementation plans. Approximately one-fifth of respondents report an established governance model for autonomous agents. Leaders discuss the need to create boundaries around agent actions, approval workflows, monitoring, and audit records. These efforts involve cross-functional teams that include security, legal, and business leaders.

Sarrafi said many of the governance gaps go back to identity and privilege management. Integration often bypasses established practices and expands the scope for agent-driven action. If exploited, privileges could be escalated and the impact could spread across connected systems.

Physical AI extends AI to operations

Physical AI includes robotics, automated machines, and systems that sense and operate in the physical world. More than half of the organizations surveyed reported some level of current usage. Adoption is forecast to increase over the next two years, especially in manufacturing, logistics, and defense.

Controlled environments such as factories and warehouses support early adoption. Leaders cite cost, safety requirements and regulatory approvals as key considerations. Business cases often include planning for infrastructure changes, maintenance, and downtime.

Security blind spots are most commonly seen at integration points between digital systems and physical assets. Sarrafi said agent systems rely on connections between email, workflow platforms and third-party services, extending exposure beyond traditional corporate boundaries. He added that this layer of integration creates shared risk ownership across IT, operational technology and safety teams.

Readiness varies by domain

Many leaders report having more confidence in their AI strategy and governance plans than in infrastructure, data management, and talent readiness. Strategy and policy decisions are made quickly at the executive level. System upgrades and skills development take longer to carry out across large organizations.

Enterprise AI is still a work in progress, influenced by access, design choices, and organizational structure. Advances continue to be made across tools, agents, and physical systems. Integration into day-to-day operations remains uneven, and many organizations are focused on the next stage of implementation.