Researchers at Meta, MIT, and other institutions have connected a cluster of servers carrying 12 Nvidia GPUs using optical switches and robotic arms to create a new interconnect for machine learning. Called “TopoOpt,” the fabric can create network topologies on the fly according to computing needs. The technology arrives as high-performance computers are being strained by the widespread adoption of AI applications like ChatGPT, which is testing the limits of Microsoft’s AI supercomputing.

A paper on the technology was presented at the USENIX Symposium on Networked Systems Design and Implementation (NSDI), held this week.

TopoOpt uses algorithms to find the fastest parallelization strategy based on information such as processing requirements, available computing resources, data routing techniques and network topology. The researchers also improved on Nvidia’s AllReduce feature, which minimizes communication time between GPUs and other components.
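To make the AllReduce step more concrete, here is a minimal sketch of a generic ring all-reduce, simulated in plain Python. It is not Nvidia's implementation and not the modified version from the paper. The function names and the in-memory "workers" are assumptions made purely for illustration. Each worker starts with its own vector, and after a reduce-scatter phase and an all-gather phase every worker holds the element-wise sum.

```python
from typing import List, Tuple


def _chunk_bounds(length: int, parts: int) -> List[Tuple[int, int]]:
    """Split range(length) into `parts` contiguous (start, end) slices."""
    base, extra = divmod(length, parts)
    bounds, start = [], 0
    for p in range(parts):
        end = start + base + (1 if p < extra else 0)
        bounds.append((start, end))
        start = end
    return bounds


def ring_allreduce(buffers: List[List[float]]) -> List[List[float]]:
    """Simulate a sum all-reduce over a logical ring of len(buffers) workers.

    Hypothetical helper for illustration only. All buffers are assumed
    to have the same length.
    """
    n = len(buffers)
    data = [list(b) for b in buffers]  # copy so the inputs stay untouched
    if n == 1:
        return data
    bounds = _chunk_bounds(len(data[0]), n)

    # Phase 1, reduce-scatter: in each step worker i sends one chunk to
    # worker i+1, which adds it to its own copy of that chunk.
    for step in range(n - 1):
        messages = []
        for i in range(n):
            lo, hi = bounds[(i - step) % n]
            messages.append(((i + 1) % n, lo, data[i][lo:hi]))
        for dst, lo, payload in messages:  # apply all sends "simultaneously"
            for k, value in enumerate(payload):
                data[dst][lo + k] += value

    # Phase 2, all-gather: each worker forwards the chunk it has fully
    # reduced, and the receiver overwrites its stale copy.
    for step in range(n - 1):
        messages = []
        for i in range(n):
            lo, hi = bounds[(i + 1 - step) % n]
            messages.append(((i + 1) % n, lo, data[i][lo:hi]))
        for dst, lo, payload in messages:
            data[dst][lo:lo + len(payload)] = payload

    return data


if __name__ == "__main__":
    grads = [[1, 2, 3, 4], [10, 20, 30, 40], [100, 200, 300, 400]]
    print(ring_allreduce(grads))
```

In this sketch every worker only ever talks to its ring neighbor, which is why the physical topology underneath the ring matters for how long each step takes.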

“TopoOpt uses reconfigurable optical switches and patch panels to create a dedicated partition for each training job and jointly optimize the topology and parallelization strategy within each partition,” the researchers wrote.

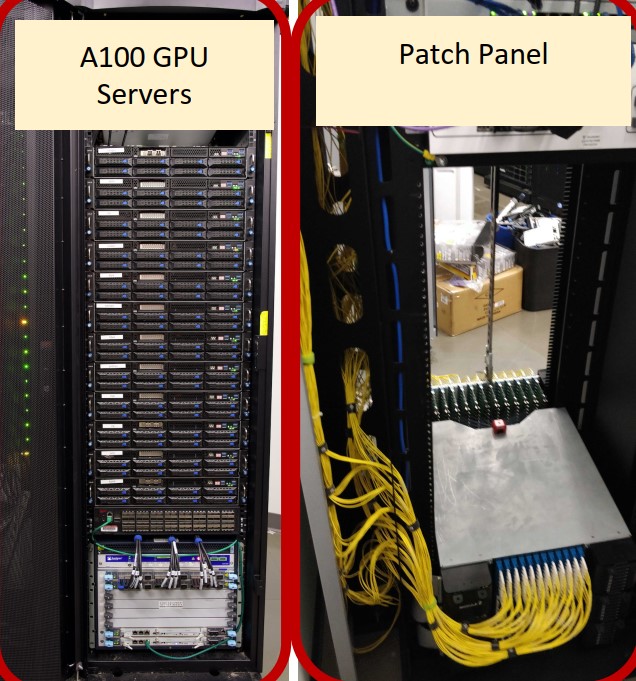

Researchers tested TopoOpt within Meta's infrastructure using 12 Asus ESC4000A-E10 servers, each with one A100 GPU, plus HPE NICs and 100 Gbps Mellanox ConnectX5 NICs. The NICs used optical transceivers with breakout fibers.

“TopoOpt is the first system to co-optimize topology and parallelization strategies for ML workloads and is currently being evaluated for deployment at Meta,” the researchers wrote.

The setup also uses a Telescent patch panel that reconfigures the network using “a robotic arm that grabs the transmitting fiber and connects it to the receiving fiber.” A software-controlled robotic arm moves up and down, connecting transmit fibers to receive fibers anywhere in the system. This gives you the flexibility and elasticity you need to quickly reconfigure your network. Patch panels are already widely used in commercial applications, but are now being proposed for use in data centers.
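As a rough illustration of what reconfiguring a patch panel involves, the sketch below models the panel as a mapping from transmit fibers to receive fibers and counts how many fibers have to be moved to reach a new topology. The `PatchPanel` class and its methods are hypothetical and are not based on Telescent's actual control software.

```python
from typing import Dict


class PatchPanel:
    """Toy model of a software-controlled patch panel (illustrative only)."""

    def __init__(self, num_ports: int) -> None:
        self.num_ports = num_ports
        self._tx_to_rx: Dict[int, int] = {}  # transmit port -> receive port
        self._rx_to_tx: Dict[int, int] = {}  # reverse index, to detect busy ports

    def _check(self, port: int) -> None:
        if not 0 <= port < self.num_ports:
            raise ValueError(f"port {port} out of range")

    def disconnect(self, tx: int) -> None:
        """Unplug the transmit fiber of port `tx`, if it is connected."""
        rx = self._tx_to_rx.pop(tx, None)
        if rx is not None:
            del self._rx_to_tx[rx]

    def connect(self, tx: int, rx: int) -> None:
        """Plug the transmit fiber of port `tx` into the receive fiber of `rx`."""
        self._check(tx)
        self._check(rx)
        if rx in self._rx_to_tx:
            raise ValueError(f"receive port {rx} is already in use")
        self.disconnect(tx)
        self._tx_to_rx[tx] = rx
        self._rx_to_tx[rx] = tx

    def apply_topology(self, links: Dict[int, int]) -> int:
        """Reconfigure to `links` and return how many fibers were plugged in."""
        # Unplug every fiber whose destination changes or disappears first,
        # so the receive ports it occupied become free.
        for tx in list(self._tx_to_rx):
            if links.get(tx) != self._tx_to_rx[tx]:
                self.disconnect(tx)
        moves = 0
        for tx, rx in links.items():
            if self._tx_to_rx.get(tx) != rx:
                self.connect(tx, rx)
                moves += 1
        return moves

    def links(self) -> Dict[int, int]:
        return dict(self._tx_to_rx)


if __name__ == "__main__":
    panel = PatchPanel(num_ports=4)
    print(panel.apply_topology({0: 1, 1: 2, 2: 3, 3: 0}))  # 4-server ring
    print(panel.apply_topology({0: 1, 1: 2, 2: 0}))        # shrink to 3 servers
```

Counting moves this way hints at why a scheduler would prefer topologies that reuse existing links: each move stands in for a physical action by the robotic arm.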

Google recently published a paper detailing how it used an AI supercomputer with optical circuit switches to improve the training speed of TPU v4 chips while consuming less power. Optical circuit switching (OCS) in Google’s setup does not rely on a moving robotic arm. Instead, it uses mirrors to switch between input and output fibers. Google’s setup was also a much larger deployment, spanning 4,096 TPUs.

The researchers chose patch panels because they found Google-style optical switches to be “five times more expensive” and to support fewer ports. At the same time, the researchers say OCS technology like that used by Google is aimed at large-scale deployment. “The main advantage of OCS is that reconfiguration latency is four orders of magnitude faster than patch panels,” the researchers wrote.

TopoOpt provisions compute and network resources in advance, so they are ready to use once the servers are available and tasks are ready to be deployed. “We already know the order in which the jobs will arrive and how many servers each job will require,” the researchers wrote, adding, “This design allows each server to participate in two independent topologies.”

The researchers concluded that TopoOpt delivered up to 3.4 times faster training iteration times than a conventional network design called a “fat tree.” In a fat tree, the network backbone sits at the center of the infrastructure, and data moves through multiple layers of static switches that link the core network's back-end hardware to the front-end servers. The technique is widely used today.
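For a sense of how many static switches a fat tree needs, the sketch below computes the size of a textbook three-tier k-ary fat tree built from identical k-port switches. This is the standard construction from the networking literature, not necessarily the configuration the researchers compared against, and the function name is an assumption for illustration.

```python
from typing import Dict


def fat_tree_size(k: int) -> Dict[str, int]:
    """Return switch and host counts for a three-tier k-ary fat tree.

    Assumes the textbook construction: k pods, each with k/2 edge and
    k/2 aggregation switches, plus (k/2)^2 core switches.
    """
    if k < 2 or k % 2 != 0:
        raise ValueError("k must be an even integer >= 2")
    half = k // 2
    core = half * half          # (k/2)^2 core switches
    aggregation = k * half      # k pods x k/2 aggregation switches
    edge = k * half             # k pods x k/2 edge switches
    hosts = edge * half         # each edge switch serves k/2 hosts -> k^3/4
    return {
        "core": core,
        "aggregation": aggregation,
        "edge": edge,
        "switches": core + aggregation + edge,
        "hosts": hosts,
    }


if __name__ == "__main__":
    for ports in (4, 8, 48):
        print(ports, fat_tree_size(ports))
```

Under these assumptions, every one of those switches is fixed in place, whereas a reconfigurable fabric changes which servers are directly connected instead.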

Using optical networks in data centers is a new concept, with researchers introducing robotic arms and new communication protocols as a cheaper way to build AI network infrastructure. The feasibility of this technique has been tested by Meta.