Yann LeCun is not a nice character, but he is certainly a character. For the past few days, the tech world has been buzzing about the story of his departure from Meta, in which every aspect of his personality, negative or positive, is on full display, from his arrogance and self-confidence to his approach to science. You can get a glimpse of this in the excerpt from his interview with the FT.

A long tweet, below, summarizes some of the backstory, mostly from LeCun's side. What's interesting, with a big technical asterisk that I'll explain below, is that, as a longtime critic of LeCun, I'm basically on his side in this controversy.

More power to LeCun, who has stuck firmly to his position. Zuckerberg doesn't know as much about AI as LeCun, and neither does Wang. Additionally, research is what LeCun does best. He may not be as original as he pretends to be, but he (mostly) has good intellectual instincts, and there is no need for someone like Wang, who mainly ran a somewhat “sneaky” data collection company and has no particular technological vision, to tell LeCun what to think.

And needless to say, I also have big doubts about LLMs. So, at this point, do literally hundreds of other researchers, as well as prominent machine learning figures like Sutskever and Sutton. By sidelining LeCun, Zuckerberg and Wang made it impossible for him to stay. LeCun was right to leave, and as an accomplished scientist it was natural for him to want to pursue his own vision.

And, to his credit, LeCun is very interested in world models. So am I; I have stressed them frequently over the years (sometimes under the term cognitive models), especially in my 2020 article The Next Decade in AI.

So I'm glad LeCun's new company is exploring a new approach. However, I doubt it will succeed, for two reasons.

§

The first reason is technical. Although LeCun talks about world models, I don't think (due to what appears to be an ego-related mental block against the classical symbolic AI that he has always fought against) that he actually understands what a world model should be.

A world model (as I argued in my 2020 article), done right, must be full of explicit, structured, and directly retrievable knowledge about time, space, cause and effect, people, places, objects, events, and so on. Formal reasoning requires all of this. (My 2020 paper gives a detailed example from Doug Lenat.) And all of this is necessary to avoid hallucinations.

Sadly, LeCun, who knows about neural networks but refuses to engage in discussions about what classical AI has to offer, doesn't really understand this. My own view, the “neurosymbolic” one that I espouse, is that achieving trustworthy AI will require contributions from both traditions.

As a result, what LeCun ends up with as a “world model” is nothing more than an opaque, uninterpretable neural network that is unsuitable for reasoning. It is a real effort to make the system represent more of the world than just statistics about how language is used on the web, and while that should be celebrated, it may still fall short. I don't think it will solve many of the problems that have plagued LLMs.

Indeed, LeCun has already been working on his new approach, JEPA [Joint Embedding Predictive Architecture], for the past few years at Meta, with what appears to be a fairly large team (by academic standards), but without much output: just a few papers that don't seem earth-shaking. He has a good name for what we need (a “world model”), but his implementation is poor.

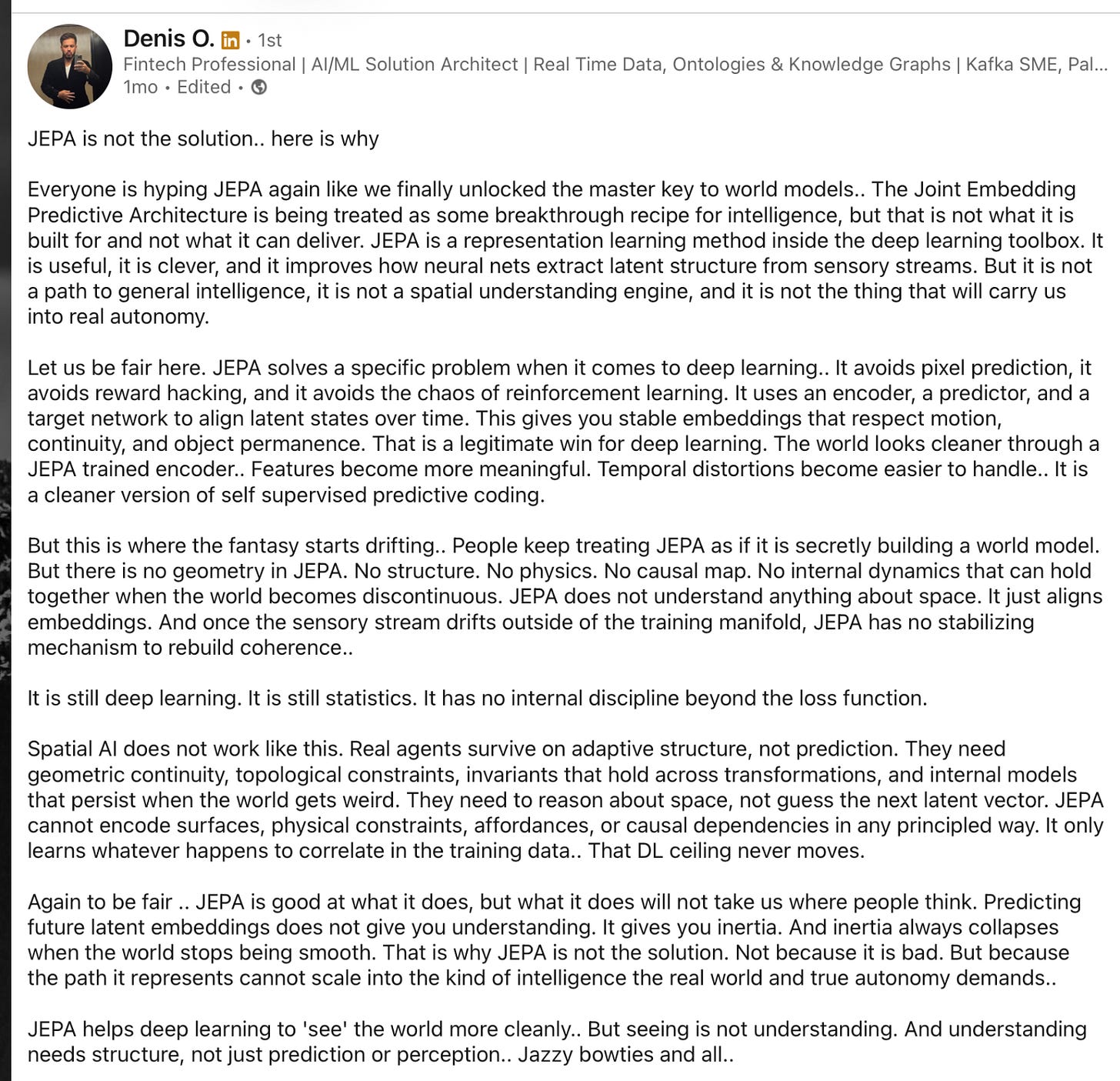

A technical but smart post by Denis O on LinkedIn a month ago explains the situation very well. If you don't follow the terminology, just skim through and focus specifically on the part about what JEPA is and isn't.

“This is useful and smart, and improves the way neural nets extract latent structure from sensory streams. But it is not a path to general intelligence, it is not an engine of spatial understanding, and it does not lead us to true autonomy… People continue to treat JEPA as if it were secretly building a world model. However, JEPA does not have geometry. There is no structure. There's no physics. There is no causality map. There are no internal dynamics that can sustain the world when it becomes discontinuous. JEPA knows nothing about space. … Once the sensory flow drifts outside the training manifold, JEPA has no stabilizing mechanism to rebuild coherence.”

Denis O's closing summary states: “JEPA helps deep learning ‘see’ the world more clearly… but seeing is not understanding. And understanding requires structure, not mere prediction or recognition.” So it's not really a world model.

That's the technical issue. The other is a management issue. LeCun is unusually bad at acknowledging other people's work, bordering on intellectual dishonesty, if not outright crossing the line. He has systematically ignored, and in some cases downplayed, many of those who came before him, most of whom he rarely acknowledges, a pattern dating back to the early days of his career in the late 1980s. I wrote an entire essay about this in November, listing over a dozen academics who had received the LeCun treatment (including Jürgen Schmidhuber, David Ha, Kunihiko Fukushima, Wei Zhang, Herb Simon, John McCarthy, Pat Hayes, Ernest Davis, Fei-Fei Li, Emily Bender, and myself), and soon received various emails about three others who had suffered similar treatment: Alexander Waibel (a CMU professor whose TDNN preceded and directly anticipated LeCun's most famous work), Les Atlas (who fleshed out neural network convolution before LeCun), and Judea Pearl (LeCun's fellow Turing Award winner, who had been talking about causality for many years before LeCun did). If LeCun were to do the same thing at his new company, morale would be extremely low.

Still, LeCun's new company is looking to explore something truly new. That's something we in the AI field desperately need. Even if LeCun isn't building world models the right way, he's certainly trying. And for that I salute him.