In the past, if a client who normally preferred short emails suddenly started sending long messages that read like legal memos, Ron Schulman would have suspected that the client had received help from a family member or partner.

The Toronto family lawyer now asks clients whether they have been using artificial intelligence. And in most cases, he says, the answer is yes.

Almost every week, his firm receives messages written or driven by AI, and Schulman said he has noticed a change in the past few months.

While AI can effectively summarize information and organize notes, some clients seem to trust it as “some kind of superintelligence” and use it to decide how to proceed with a case, he said.

“That forms a significant problem,” Schulman said in a recent interview, because AI is not always accurate and often agrees with the opinions of those using it.

Some people are now using AI to represent themselves in court without a lawyer, he said, but this can delay proceedings and increase legal costs for others as parties read through large volumes of AI-generated material.

As AI permeates many aspects of daily life, it is also making its way into courts and the legal system.

Further AI documents filed in court

Materials created on platforms such as ChatGPT have been submitted to courts, tribunals and commissions in Canada and the United States in recent years, sometimes landing lawyers and people navigating the legal system on their own in trouble over so-called “hallucinations”: references that are inaccurate or simply made up.

In one high-profile case, a Toronto lawyer was found in contempt of court after including a case fabricated by ChatGPT in a filing earlier this year and then denying it when questioned by the presiding judge. Months later, his lawyer said in a letter to the court that he misrepresented what happened out of “fear of the possible consequences and utter embarrassment.”

AI hallucinations can come with financial as well as reputational costs.

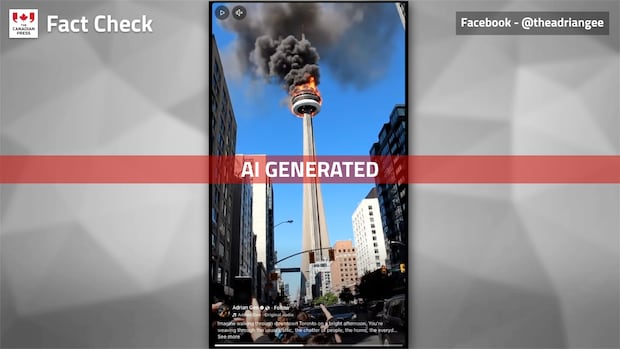

An AI-generated video of the CN Tower going up in flames has racked up millions of views since it was posted on Facebook earlier this week. While some people were quick to call the video fake, many others were fooled into re-sharing it. JP Gallardo shares some tips on how to tell the real thing from the fake.

In the fall, a Quebec court imposed a $5,000 penalty on a man who relied on generative AI to help him prepare a filing after parting ways with his lawyer. Shortly thereafter, Alberta's top court ordered $500 in additional costs against a woman whose filing cited three fake authorities, and warned that self-represented litigants could expect “more significant fines” in the future if they did not follow the court's guidance on AI.

Courts and professional bodies in several jurisdictions have issued guidelines on the use of AI, and some, including federal courts, require that any use of generative models be declared.

Some lawyers who use or have encountered AI in their work say it can be a useful tool when deployed wisely, but when used improperly, it can compromise privacy, bog down communications, erode trust and increase legal costs, even if no penalties are imposed.

Ksenia Chern-McCallum, a Toronto immigration lawyer who is licensed to practice in both Canada and the U.S., said more people are using AI to conduct research and submit completed applications for her to review.

She said clients also use AI to “fact-check” her, running documents she drafted through a platform, which can expose personal information and undermine trust in her work.

“It can put a huge strain on your relationships with your clients, because I'm telling them to do something and they second-guess me or say to me, 'I don't think you need to do this, why do you need to do this?' And they're fighting back, so how do I represent you and your best interests?” McCallum said.

“AI can scour the internet and tell you generally what is part of this process, but my experience and knowledge of what works and what doesn't work in these processes is something that AI can't capture.”

AI-powered forms and investigations do not meet lawyer standards

Online forums for people working on immigration issues are also encouraging the use of AI to prepare applications and save on legal costs, she said.

“They submit that material, and the court says, 'Okay, we know you used AI, but you haven't disclosed it. But not only are you not disclosing it, you're referring to cases that don't actually exist, you're referring to pathways that don't exist, and you're citing irrelevant laws,'” McCallum said.

“People are actually getting costs awarded against them because they're coming to court on their own behalf, thinking that AI is going to create these beautiful facts and not knowing that that's not what's supposed to happen.”

Schulman, the family lawyer, said trying to use AI to save money can sometimes have the opposite effect.

He said one client recently sent him a five- to six-page AI-written document about exclusive possession (a married spouse's right to stay in the family home to the exclusion of the other spouse) and essentially told the firm to include it in a court filing. The problem? It did not apply, because the client was not married.

“You just spent half an hour of your money reading something that's not useful in the first place,” he said.

Schulman said he now gives clients a basic disclaimer, telling them they must read everything they send. He also advises clients to ask AI to explain legal concepts to them rather than rely on it, or at least tries to teach them how to use it more effectively.

This type of guidance and information is in demand, said Jennifer Leach, executive director of the National Self-Represented Litigants Project, an organization that advocates for self-represented litigants and develops resources for them.

Responsible use of AI requires learning, advocates say

The organization held a webinar last month to help people without lawyers properly and safely use AI in litigation, which was attended by about 200 people, she said, adding that more sessions are planned for the new year.

Leach said she sees it as a form of harm reduction: “People are going to use it, so let's use it responsibly.”

Her advice includes checking to ensure that cases referenced by the AI exist and are cited correctly, looking at court guidance on AI, and ensuring you stay within the length limits for filings.

AI has the potential to improve access to justice by enabling people to use a wealth of information to assist them in organizing their cases, but Leach said it is currently “a bit of a wild west”, especially when it comes to credibility.

“For lawyers in law firms, there are great AI programs to help with practice management, research, and drafting, but it's all kind of behind a paywall,” she said.

“However, anything that's open source is less reliable and carries risks such as hallucinations and mistakes that don't exist in programs behind paywalls.”

Nainesh Kotak, a personal injury and long-term disability lawyer based in the Toronto area, said law firms need to leverage some form of AI to stay competitive.

The key, he said, is to have lawyers scrutinize and modify what AI generates, and to ensure compliance with privacy and data security rules and professional regulations.

After all, AI is a tool and cannot replace legal judgment, ethical obligations or human understanding, he said.