Ashley Liu, Contributing Illustrator

ChatGPT was released three years ago. According to computer science professors, their field has not been the same since.

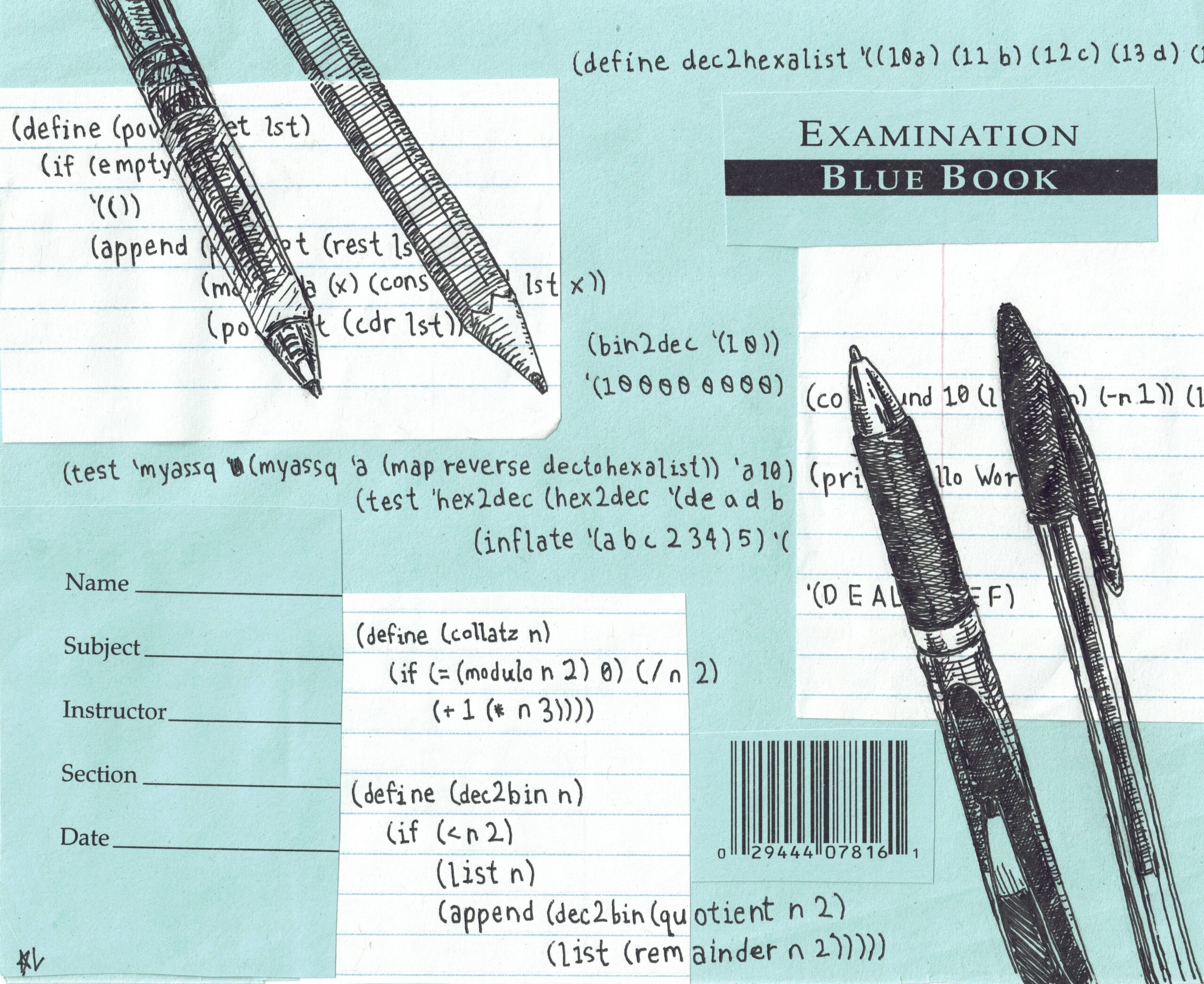

In at least two courses, the grade weight of problem sets has been reduced to zero. Exam weights have reached 90%. Some classes have introduced graded in-person meetings. But the impact extends beyond grades.

“From my perspective as a former student, I think using AI to complete assignments in university courses is a high level of self-destruction,” computer science professor Alan Weide wrote in an email to the News, explaining that using AI can hinder the development of problem-solving skills. “From my perspective as a teacher, I think it has dramatically increased the skepticism with which I view student submissions on asynchronous assignments, and this is not a good feeling.”

Theodore Kim, the undergraduate director of undergraduate research, wrote in an email to the News that instructors have reported a decline in students' understanding of basic computer science since the advent of ChatGPT.

Six professors told the News that using AI would hinder the learning of some, if not all, fundamental computer science concepts. They pointed to the virtue of training oneself to learn a new craft, even if that means making mistakes along the way and not writing the best code from the start.

“If you want to learn to play the piano, it doesn't matter if the piece is performed better on Spotify,” Professor Xuyen Chen said in a telephone interview with the News. “If you want to learn it, you have to sit down and do it yourself. So that is a decision you have to make for yourself.”

Professor Michael Shah compared the process of learning to code to training for long-distance running: there is no alternative to putting on your shoes and “running a few miles.” Students need to be “greedy” with their undergraduate years to build critical thinking skills, and they should use AI carefully, he wrote in an email to the News.

Shah added that the underlying “thinking,” rather than “generating as much code as possible,” is what makes programming interesting.

Weide wrote that he has yet to see an application of generative AI for a task he would not rather do himself.

“Thinking about things is fun,” he wrote. “Writing programs is fun. Fixing broken programs is fun. Giving good lectures is fun. Reading books, blogs and documentation to learn new things is fun. Why outsource this?”

Shah also wrote that asking questions and talking to professors, undergraduate learning assistants or peers can build valuable social and technical skills. He described this as “one of the great benefits” of being in college.

Curriculum changes

Weide wrote that he restructured the grading system for his class “Data Science and Programming Techniques,” a change that directly affects students' grades. In-person exams account for 90% of the grade, and completing weekly in-class lab assignments accounts for the remaining 10%.

“When you're taking an in-class exam, you can't access ChatGPT, your phone or Google,” Professor Stephen Slade said in a Zoom interview with the News. “It has to be between your ears.”

Weide wrote that he has not completely banned the use of AI in his other class, “Full Stack Web Programming,” but he has reduced the weight of its multi-week programming assignments from 55% to 13%. A significant portion of the group projects, which account for 55% of the grade, involves in-person meetings with group advisors, he added.

In Professor Ozan Erat's class, exams also account for 90% of the grade, and problem sets count for 0%.

“Our idea here is to remove the use of AI in these tasks, because simply completing them doesn't affect grades. So the only way students can benefit from the task is to actually do the work and understand the problem,” Weide wrote.

Computer science professor Rex Ying wrote in an email to the News that while he allows students to use AI for homework, he designs the assignments so that AI models do not always get them right, and he imposes a “heavy” penalty for irresponsible use, including copying and pasting without checking for accuracy. The process has made writing homework questions even more challenging, he added.

The computer science department does not have a comprehensive policy on AI use, so professors use their own discretion to create AI policies for each course. This “wide latitude” is in line with Yale's policies, Kim wrote, adding that he has provided feedback to the Poorvu Center for Teaching and Learning on AI guidelines.

“It depends on the class, it depends on the students,” Slade said. “I don't think I'm comfortable telling my colleagues whether or not they can use AI. I think they're all knowledgeable and clever people and they should be able to understand what works for them.”

Constructive AI use

Chen teaches “Introduction to AI Applications,” a course that she said is “actually trying to push boundaries” with the use of AI.

“We hope that students will learn how to use AI to help them learn about AI,” she said. “I really ask them to ask ChatGPT, or whatever other AI system they have chosen, a lot of questions, and I find it generally productive.”

Chen said she allows AI to be used for coding, but noted that the goal of the class is not to teach students how to code: an introductory coding course is a prerequisite.

“I fully expect them to really use it like they are working with a colleague,” she said. “Then there are places where I want them to reflect on their personal learning, and there it is just lame to use AI.”

Three professors told the News that AI could be a useful tool for reviewing for exams.

When a student asked Slade for sample true-or-false questions for an upcoming midterm on binary coding, he said, he simply told ChatGPT to create sample questions based on the documentation on binary coding.

When he later asked Gemini, Google's AI assistant, to create 100 true-or-false questions, he said, “they all looked pretty good.”

“It's as if you had a smart sister who could help you study for the test,” he said. “My hope is that students can prepare for the exam using what they have at their disposal and achieve mastery of the material.”

The computer science department was founded in 1969.