Cloud providers are building an army of GPUs to deliver more AI firepower. Today at the annual Google I/O developer conference, Google unveiled his 26,000 GPU-powered AI supercomputer. The Compute Engine A3 supercomputer is yet another proof that in its battle for AI supremacy with Microsoft, it is devoting more resources to aggressive counterattacks.

This supercomputer has about 26,000 Nvidia H100 Hopper GPUs. For reference, the world’s fastest public supercomputer, Frontier, has 37,000 AMD Instinct 250X GPUs.

“For our largest customers, we can build A3 supercomputers with up to 26,000 GPUs in a single cluster, as well as multiple clusters in our largest regions,” a Google spokesperson said in an email. We are working to build a ,” he said, adding that “not all of our locations will be operational.” Scaled up to this large size. ”

The system was announced at the Google I/O conference in Mountain View, California. This developer conference has emerged as a venue to showcase many of the capabilities of his AI software and hardware at Google. Google accelerated its AI development after Microsoft brought OpenAI technology to Bing search and office productivity applications.

This supercomputer is aimed at customers looking to train language models at scale. Google has announced his accompanying A3 virtual machine instances for companies looking to use the supercomputer. A number of cloud providers are now deploying his H100 GPUs, and Nvidia launched its own DGX cloud service in March, but this is the first generation of his A100 GPUs to rent. expensive compared to

Google said the A3 supercomputer will significantly upgrade the computing resources provided by existing A2 virtual machines powered by Nvidia’s A100 GPUs. Google pools all geographically distributed A3 compute instances into one supercomputer.

“The scale of the A3 supercomputer provides up to 26 exaflops of AI performance, which greatly improves the time and cost of training large-scale ML models,” said a director at Google. One Roy Kim and product manager Chris Kleban said in a blog entry.

The exaflops performance metric is used by companies to estimate the real-world performance of AI computers, but is still viewed with a bit of a discount by critics. For Google, flops are solved with ML-targeted bfloat16 (“brain floating point”) performance, much faster than double-precision (FP64) floating point arithmetic, which most classical HPC applications still use. We will realize “exaflops”. Required.

The number of GPUs has become a key business card for cloud providers to push AI computing services. Microsoft’s AI supercomputer on Azure, built in partnership with OpenAI, has 285,000 CPU cores and 10,000 GPUs. Microsoft also announced its next-generation AI supercomputer with more GPUs. Oracle’s cloud services provide access to clusters of 512 GPUs and are working on new technologies to improve GPU communication speeds.

Google touts its own TPU v4 artificial intelligence chip, which is used to run internal artificial intelligence applications with LLMs such as Google’s Bard product. Google’s AI subsidiary DeepMind said its high-speed TPUs are leading AI development for general and scientific applications.

In comparison, Google’s A3 supercomputer is versatile and can be tailored for a wide range of AI applications and LLMs. “Given the high demands of these workloads, a one-size-fits-all approach is not enough. We need dedicated AI infrastructure,” Kim and Kleban said in his blog entry.

Google loves its own TPUs, but Nvidia’s GPUs have become a must-have for cloud providers, given that customers are building AI applications with CUDA, Nvidia’s own parallel programming model. The software toolkit produces the fastest results based on the acceleration provided by the H100’s specialized AI and graphics cores.

Customers can run AI applications via A3 VMs and use Google’s AI development and management services available via Vertex AI, Google Kubernetes Engine, and Google Compute Engine services.

Companies can use the A3 supercomputer’s GPUs as a one-time rental to train large models in combination with large language models. The model is then updated with new data that is fed into the model without having to retrain from scratch.

Google’s A3 supercomputer combines a variety of technologies to improve inter-GPU communication and network performance. The A3 virtual machine is based on Intel’s 4th generation Xeon chip (codenamed Sapphire Rapids) that ships with the H100 GPU. It’s unclear if the VM’s virtual CPUs support the inference accelerator built into his Sapphire Rapids chip. The VM comes with DDR5 memory.

Google’s A3 supercomputer combines a variety of technologies to improve inter-GPU communication and network performance. The A3 virtual machine is based on Intel’s 4th generation Xeon chip (codenamed Sapphire Rapids) that ships with the H100 GPU. It’s unclear if the VM’s virtual CPUs support the inference accelerator built into his Sapphire Rapids chip. The VM comes with DDR5 memory.

Training models on the Nvidia H100 is faster and cheaper than previous generation A100 GPUs, which are widely available in the cloud. A study conducted by AI services firm MosaicML found that the H100 was “30% more cost-effective and three times faster than the NVIDIA A100” on his MosaicGPT large-scale language model of 7 billion parameters.

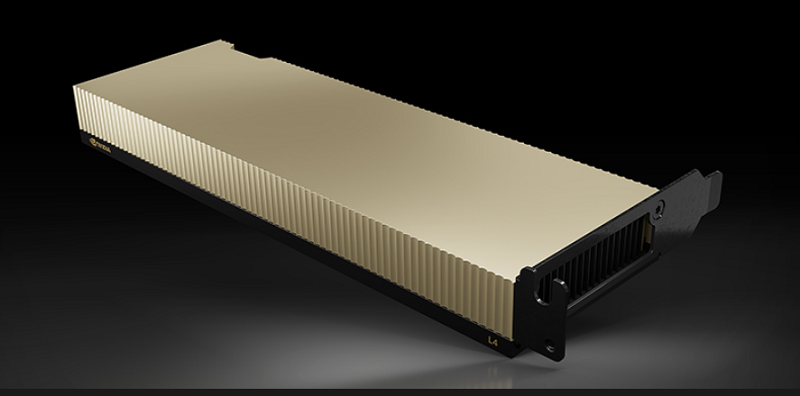

The H100 can also do inference, which might seem excessive considering the processing power the H100 provides. Google Cloud offers his Nvidia’s L4 GPU for inference, and Intel has an inference accelerator on his Sapphire Rapids CPU.

“A3 VMs are also great for inference workloads, offering up to 30x inference performance improvement compared to A100 GPUs in A2 VMs,” said Google’s Kim and Kleban.

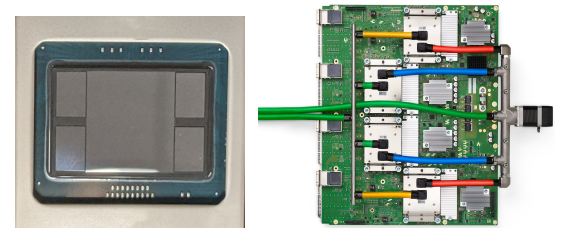

The A3 VM is the first VM to connect to a GPU instance through an infrastructure processing unit jointly developed by Google and Intel called Mount Evans. IPUs allow A3 virtual machines to offload network, storage management, and security functions traditionally done on virtual CPUs. The IPU enables data transfer at 200Gbps.

“A3 is the first GPU instance to use custom-designed 200 Gbps IPUs, allowing data transfers between GPUs to bypass the CPU host and flow through a separate interface from other VM network and data traffic. This enables up to 10x more network bandwidth compared to our A2 VMs, with lower tail latency and higher bandwidth stability,” a Google executive said in a blog entry.

IPU throughput may soon be challenged by Microsoft. Microsoft’s upcoming AI supercomputer will be powered by his Nvidia’s H100 GPU, with the chipmaker’s Quantum-2 400Gbps networking capabilities. Microsoft has not disclosed how many H100 GPUs it will have in its next-generation AI supercomputer.

The A3 supercomputer is built on spines derived from the company’s Jupiter data center networking fabric, which connects geographically diverse GPU clusters via optical links.

“It delivers workload bandwidth indistinguishable from more expensive off-the-shelf non-blocking network fabrics for nearly any workload structure,” Google said.

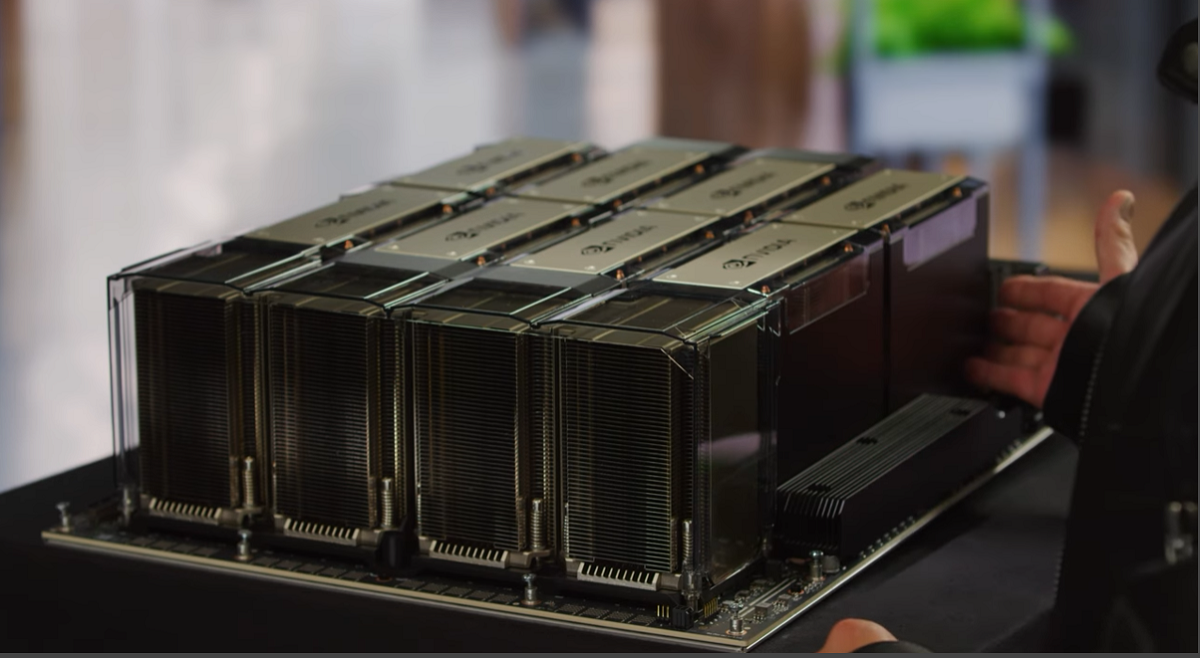

Google also shared that the A3 supercomputer will feature eight H100 GPUs interconnected using Nvidia’s proprietary switching and chip interconnect technology. The GPU is connected via his NVSwitch and NVLink interconnects and communicates at speeds of around 3.6TBps. Azure also offers the same speeds on its AI supercomputer, and both companies have introduced his Nvidia board design.

“Each server uses NVLink and NVSwitch within the server to interconnect eight GPUs. For the GPU servers to communicate with each other, we use multiple IPUs on the Jupiter DC network fabric.” said a Google spokesperson.

This setup is similar to Nvidia’s DGX Superpod with a 127-node setup, with each DGX node equipped with 8 H100 GPUs.

AI hardware, AI supercomputer, Google, Google IO, GPU, GPU, LUMI, Nvidia, TPU, TPU v4, TPU