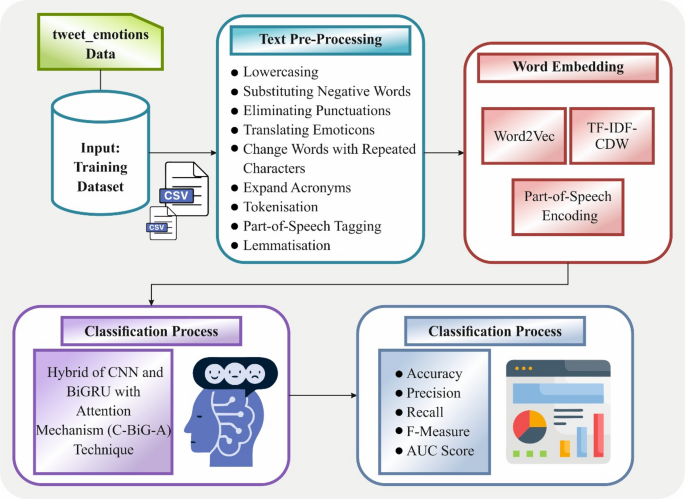

In this work, a new IERT-HDLMWEP model is developed for emotion identification using textual data. The proposed model aims to enhance the accuracy of a DL-based TER system in identifying and interpreting emotions in text, thereby improving communication support for individuals with disabilities. It encompasses text pre-processing, word embedding, and a hybrid classification method. Figure 1 indicates the complete process of the IERT-HDLMWEP model.

Overview of the IERT-HDLMWEP model, comprising data preprocessing, word embedding, and a hybrid DL with AM. Evaluation metrics include accuracy, precision, recall, F-measure, and AUC score.

Text pre-processing method

The text pre-processing step involves several standard stages that improve the analysis and reduce the dimensionality of the input data. Information gathered from various sources, primarily social media, is often unstructured31. Raw data may be noisy and contain grammatical and spelling errors, so texts need to be cleaned before they are examined. Many tokens contribute little to the meaning of a text (for example, special characters, prepositions, stop words, and punctuation), and removing them reduces the dimensionality of the input. The complete process involves the following steps.

- Substituting negative words. Negations are words such as never, not yet, and no that reverse the meaning of words or phrases. This step aims to substitute a negated word with its antonym. For instance, not good is substituted with bad, the opposite of good, so a sentence like “The car is not good” is converted into “The car is bad.” However, particular negative words, such as never, not, and no, and negative contractions, such as doesn’t, mustn’t, and couldn’t, are frequently part of stop-word lists. Therefore, each negative word and contraction is first replaced with not, and the substitution is resolved after stop-word removal as part of the spelling corrections.

- Lowercasing. This step converts every character in the dataset to lowercase, discarding the capitalization of proper nouns, names, initials, and sentence-initial words. Capitalization is unreliable on Twitter, as users often avoid capital letters to give their messages a casual, conversational tone. Converting all letters to the same case removes this variation. The word ‘Ball’, for example, becomes ‘ball’.

- Converting emoticons. Users frequently employ emoticons to express their emotions, thoughts, and feelings. Therefore, converting each emoticon to its corresponding word gives better outcomes.

- Removing redundant information, comprising hashtags (#), additional spaces, punctuation, special characters \((\$, \&, \%, \ldots)\), @username mentions, URL references, and stop words (e.g. ‘the’, ‘is’, ‘at’). Non-ASCII characters and numbers are also discarded so that the encoding of the information stays consistent in English. This type of information does not help to predict the emotions expressed by users accurately.

- Expanding acronyms to their original words, utilizing an acronym dictionary. Slang and abbreviations are non-standard word forms that are often used on Twitter. They should be restored to their original form.

- Reducing words with repeated characters to their English roots. Users frequently write words with repeated letters (for example, ‘coooool’) to express their emotions.

- PoS tagging. The grammatical categories of the words in the text, such as nouns, verbs, adjectives, and adverbs, are recognized in this phase.

- Tokenization. This step breaks text into smaller textual units (for example, documents into sentences, and sentences into words).

- Lemmatization. There are families of derivationally related words with similar meanings, such as presidential, president, and presidency. Lemmatization aims to reduce the inflectional and derivational forms of a word to a common base form. It uses vocabulary and morphological analysis to remove inflectional endings and return the dictionary or base form of the word, known as the lemma. Applied to the word ‘saw’, lemmatization would attempt to return either ‘see’ or ‘saw’, depending on whether the word was used as a verb or a noun. The procedure reduces a word to a simpler form, similar to stemming, but it preserves word-related information such as PoS tags.
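
Several of the cleaning steps listed above can be sketched with regular expressions alone. The snippet below is a minimal illustration, not the authors' implementation: the `EMOTICONS`, `ACRONYMS`, and `STOP_WORDS` tables and the function name `preprocess` are hypothetical placeholders, and PoS tagging and lemmatization are left out because they require a linguistic toolkit.

```python
import re

# Hypothetical lookup tables; a real system would use much larger dictionaries.
EMOTICONS = {":)": "happy", ":(": "sad", ":D": "laugh"}
ACRONYMS = {"omg": "oh my god", "idk": "i do not know"}
STOP_WORDS = {"the", "is", "at", "a", "an", "of", "to"}
NEGATION_RE = re.compile(r"\b(?:never|no|\w+n't)\b")


def preprocess(text: str) -> list[str]:
    """Clean a raw post and return a list of tokens."""
    # Convert emoticons to words before punctuation is stripped.
    for emoticon, word in EMOTICONS.items():
        text = text.replace(emoticon, f" {word} ")
    text = text.lower().replace("’", "'")
    # Drop URLs and @username mentions; keep the word of a hashtag.
    text = re.sub(r"https?://\S+|www\.\S+|@\w+", " ", text)
    text = text.replace("#", " ")
    # Map negative words and contractions to the single token "not".
    text = NEGATION_RE.sub("not", text)
    # Collapse runs of three or more repeated letters ("coooool" -> "cool").
    text = re.sub(r"([a-z])\1{2,}", r"\1\1", text)
    # Remove everything except ASCII letters and whitespace.
    text = re.sub(r"[^a-z\s]", " ", text)
    tokens = []
    for token in text.split():
        # Expand acronyms, then drop stop words.
        for word in ACRONYMS.get(token, token).split():
            if word not in STOP_WORDS:
                tokens.append(word)
    return tokens
```

With these toy tables, `preprocess("OMG the car isn't good :( coooool http://t.co/x @bob #Sad")` returns `['oh', 'my', 'god', 'car', 'not', 'good', 'sad', 'cool', 'sad']`. Negations are normalised before stop-word removal so that the token "not" survives the filter.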

Word embedding-based Word2Vec approach

For the word embedding process, the IERT-HDLMWEP model employs a hybrid feature representation that combines Word2Vec, TF-IDF-CDW, and POS encoding for enhanced emotion detection in textual data32. Word2Vec is chosen for its capability to capture semantic relationships between words by representing them in a continuous vector space, which conventional bag-of-words models fail to do. It learns word representations from the contexts in which words occur, enabling it to capture subtle variations and similarities in language usage. This results in richer feature representations that benefit downstream tasks such as emotion detection. The model is computationally efficient and scales to large corpora, making it appropriate for extensive textual datasets. Its ability to capture both syntactic and semantic information makes it more robust than simpler encoding techniques when modelling complex language patterns. Overall, Word2Vec offers a balance of effectiveness and efficiency, which motivates its choice over conventional or more resource-intensive embedding methods.

Word2Vec

Word2Vec is a widely used static embedding model that encodes words as dense vectors in a fixed semantic space, in which semantically related terms lie close together. It offers two training mechanisms: CBOW and Skip-gram. Skip-gram infers the context words from a given centre word, while CBOW predicts the centre word by combining information from its neighbouring words. The CBOW model is used here to develop the word embeddings. CBOW calculates the likelihood of the centre word \(w_n\) given the embeddings of its surrounding context words \(w_c\):

$$p\left(w_n|w_c\right)=\frac{\exp\left(w_n h_n\right)}{\sum_{w^{\prime}\in corpus}\exp\left(w^{\prime} h_n\right)}$$

(1)

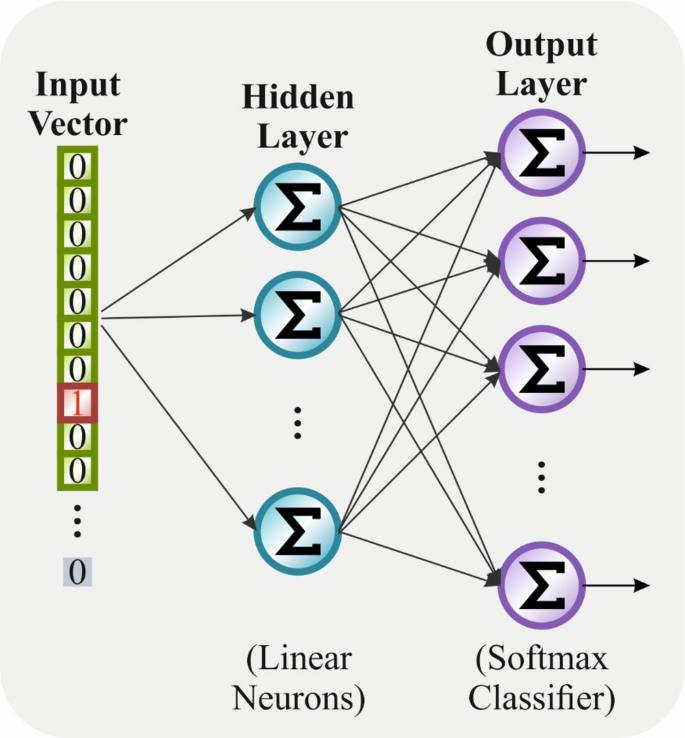

Here, \(h_n\) denotes the average embedding vector of the surrounding context window, \(w_n\) is the centre word, and \(w_c\) represents the context words. Figure 2 illustrates the architecture of the Word2Vec model.

Architecture of the Word2Vec method consisting of an input vector, a hidden layer with linear neurons, and an output layer with a softmax classifier.
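
The CBOW probability in Eq. (1) can be sketched in a few lines of NumPy. This is an illustrative toy, not the trained model: the vocabulary, the embedding size `dim`, and the randomly initialised tables `W_in` and `W_out` are assumptions, and a full softmax is used for clarity.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = ["i", "feel", "very", "happy", "today"]
dim = 8  # toy embedding size, chosen only for illustration

# Input (context) and output (centre-word) embedding tables, randomly initialised.
W_in = rng.normal(scale=0.1, size=(len(vocab), dim))
W_out = rng.normal(scale=0.1, size=(len(vocab), dim))


def cbow_probabilities(context_ids):
    """Return p(w | context) for every word w in the vocabulary (Eq. 1)."""
    h = W_in[context_ids].mean(axis=0)      # h_n: average context embedding
    scores = W_out @ h                      # w' . h_n for every candidate w'
    scores -= scores.max()                  # stabilise the exponentials
    exp_scores = np.exp(scores)
    return exp_scores / exp_scores.sum()    # softmax over the vocabulary


context = [vocab.index(w) for w in ("feel", "very", "today")]
probs = cbow_probabilities(context)
```

Here `probs[vocab.index("happy")]` is the model's estimate of the centre word "happy" given its three neighbours; training would adjust both tables to raise that value.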

TF-IDF-CDW

TF-IDF is a widely used term-weighting model in NLP for assessing the importance of words in specific documents. Term frequency \(\left(TF\right)\) reflects how frequently a term \(t_i\) occurs in a document \(d_j\):

$$\:TF\left({t}_{i},{d}_{j}\right)=\frac{{n}_{i,j}}{\left|{d}_{j}\right|}$$

(2)

Here, \(n_{i,j}\) is the number of occurrences of \(t_i\) in document \(d_j\), and \(\left|d_j\right|\) is the total word count of \(d_j\). IDF assesses the rarity of the term across the whole corpus:

$$IDF\left(t_i\right)=\log\frac{\left|D\right|}{1+\left|\left\{d_j:t_i\in d_j\right\}\right|}$$

(3)

Here, \(\left|D\right|\) is the total number of documents in the corpus, and \(\left|\left\{d_j:t_i\in d_j\right\}\right|\) is the number of documents containing term \(t_i\). Multiplying \(TF\) and \(IDF\) gives the TF-IDF score, which reflects the importance of term \(t_i\) in document \(d_j\):

$$\text{TF-IDF}\left(t_i,d_j\right)=\frac{n_{i,j}}{\left|d_j\right|}\times\log\frac{\left|D\right|}{1+\left|\left\{d_j:t_i\in d_j\right\}\right|}$$

(4)
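
Equations (2) to (4) translate directly into code. The following is a minimal sketch on a made-up three-document corpus; the function names `tf`, `idf`, and `tf_idf` and the example documents are illustrative assumptions.

```python
import math
from collections import Counter

docs = [
    ["happy", "great", "day"],
    ["sad", "bad", "day"],
    ["happy", "happy", "smile"],
]


def tf(term, doc):
    """Eq. (2): occurrences of the term divided by document length."""
    return Counter(doc)[term] / len(doc)


def idf(term, corpus):
    """Eq. (3): log of corpus size over (1 + number of documents containing the term)."""
    df = sum(1 for doc in corpus if term in doc)
    return math.log(len(corpus) / (1 + df))


def tf_idf(term, doc, corpus):
    """Eq. (4): product of TF and IDF."""
    return tf(term, doc) * idf(term, corpus)
```

In this toy corpus "happy" appears in two of the three documents, so its IDF is log(3/3) = 0 and its TF-IDF score vanishes even though it occurs twice in the third document.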

However, TF-IDF does not take into account how a term is distributed across classes. Consequently, some terms that occur frequently within particular classes but rarely elsewhere can receive inappropriately low weights. To address this limitation, an improved metric named \(CDW\) is introduced.

$$CDW\left(t_i\right)=1+\frac{\sum_{c\in C}P\left(c|t_i\right)\log P\left(c|t_i\right)}{\log\left|C\right|+1}$$

(5)

Here, \(P\left(c|t_i\right)\) denotes the proportion of documents containing \(t_i\) that belong to class \(c\), and \(\left|C\right|\) refers to the total number of classes. The numerator is the negative entropy of the class distribution of \(t_i\), so CDW approaches 1 for terms concentrated in a single class and falls to \(1/(\log\left|C\right|+1)\) for terms spread evenly across all classes. The metric therefore down-weights terms that are uniformly distributed across classes while highlighting those with category-specific preferences. By incorporating CDW, the methodology allocates a higher weight to category-specific terms. Finally, the TF-IDF-CDW score is calculated by multiplying the TF-IDF value by the corresponding CDW value, thus capturing both document-level and category-level term significance:

$$\text{TF-IDF-CDW}\left(t_i,d_j\right)=TF\left(t_i,d_j\right)\cdot IDF\left(t_i\right)\cdot CDW\left(t_i\right)$$

(6)

This formulation enables the method to assign higher weights to terms that contribute more to distinguishing between classes, thereby enhancing classification performance and improving the quality of the semantic representation.
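
The effect of the CDW factor in Eqs. (5) and (6) is easiest to see on a small labelled corpus. The sketch below is illustrative only: the documents, the class labels, and the choice to return 1.0 for a term that never occurs are assumptions, not details given in the text.

```python
import math
from collections import Counter


def cdw(term, docs, labels):
    """Eq. (5): 1 + sum_c P(c|t) log P(c|t) / (log|C| + 1)."""
    classes = set(labels)
    # Count, per class, the documents that contain the term.
    counts = Counter(label for doc, label in zip(docs, labels) if term in doc)
    total = sum(counts.values())
    if total == 0:
        return 1.0  # assumption: an unseen term carries no entropy penalty
    neg_entropy = sum((n / total) * math.log(n / total) for n in counts.values())
    return 1.0 + neg_entropy / (math.log(len(classes)) + 1.0)


def tf_idf_cdw(term, doc, docs, labels):
    """Eq. (6): TF * IDF * CDW for one term in one document."""
    tf = doc.count(term) / len(doc)
    df = sum(1 for d in docs if term in d)
    idf = math.log(len(docs) / (1 + df))
    return tf * idf * cdw(term, docs, labels)


docs = [["happy", "day"], ["happy", "smile"], ["sad", "day"], ["sad", "tears"]]
labels = ["joy", "joy", "sadness", "sadness"]
```

Both "happy" and "day" occur in two of the four documents and therefore share the same TF and IDF in the first document. "happy" occurs only in the joy class, so its CDW is 1, while "day" is split evenly between the two classes and its CDW drops to 1/(log 2 + 1), which gives it the lower combined score.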

POS encoding

The POS tag acts as a basic syntactic indicator in language analysis, describing the grammatical role of a word within a sentence. Integrating POS information can enhance the understanding of both the grammatical structure and the semantic context. In text classification tasks in particular, words with POS tags such as verbs and nouns tend to be more informative for the classifier. Therefore, integrating POS features enhances the syntactic awareness of the methodology and its classification performance.

A random-vector-based POS encoding approach is adopted. In particular, every distinct POS class is allocated a randomly initialised 10-D vector that is adjusted during training. The TF-IDF-CDW weights \(TIC_{1:n}=\{tic_1, tic_2, \cdots, tic_n\}\) are then calculated for every word as described in Eq. (6), and the POS encoding vectors \(POS_{1:n}=\{pos_1, pos_2, \cdots, pos_n\}\) are derived with this method. Finally, the improved embedding vector \(V_{1:n}\) is built by scaling the Word2Vec vector \(pv_i\) of each word by its corresponding TF-IDF-CDW weight \(tic_i\) and combining it with the 10-D POS encoding vector \(pos_i\), producing a 310-D representation. Since a 310-D result cannot arise from element-wise addition with a 10-D vector, the + in Eq. (7) is best read as concatenation:

$$\:{V}_{1:n}=\left\{p{v}_{1}\cdot\:ti{c}_{1}+po{s}_{1},p{v}_{2}\cdot\:ti{c}_{2}+po{s}_{2},\dots\:,p{v}_{n}\cdot\:ti{c}_{n}+po{s}_{n}\right\}$$

(7)

The improved word embedding vector reflects how the importance of the same word differs across texts and additionally carries POS information. The resulting embedding matrix emphasizes key semantic components while reducing noise interference in the classification method.
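
The construction of the fused embedding in Eq. (7) can be sketched as follows. This is a hedged illustration: the 300-D Word2Vec size is inferred from 310 minus the 10-D POS vector rather than stated in the text, the + is read as concatenation, and the vectors, weights, and POS tags are random or made-up stand-ins.

```python
import numpy as np

rng = np.random.default_rng(0)
W2V_DIM, POS_DIM = 300, 10  # 300 is an assumption inferred from 310 - 10

tokens = ["car", "not", "good"]
pos_tags = ["NOUN", "ADV", "ADJ"]

# Stand-ins for trained Word2Vec vectors and TF-IDF-CDW weights.
pv = rng.normal(size=(len(tokens), W2V_DIM))
tic = np.array([0.42, 0.10, 0.65])

# One randomly initialised 10-D vector per POS class (trainable in the real model).
pos_table = {tag: rng.normal(size=POS_DIM) for tag in sorted(set(pos_tags))}
pos = np.stack([pos_table[tag] for tag in pos_tags])

# Eq. (7), reading "+" as concatenation: scale each word vector, then append its POS vector.
V = np.concatenate([pv * tic[:, None], pos], axis=1)
```

The resulting matrix `V` has one 310-D row per token and would serve as the input to the classifier.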

Hybrid classification process

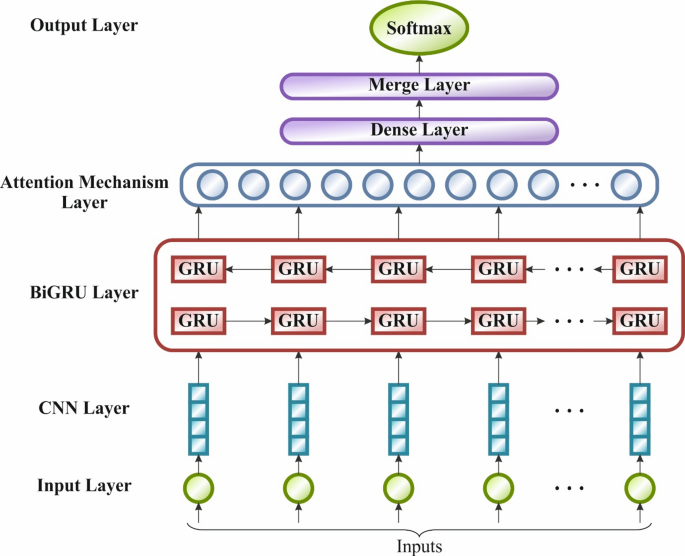

Finally, the hybrid C-BiG-A technique is employed for the classification process. This hybrid model is chosen for its ability to capture both local patterns and long-range dependencies in textual data. The CNN excels at extracting spatial features and local n-gram patterns, while the Bi-GRU processes the sequence in both the forward and backward directions to capture contextual relationships. The AM further enhances the model by selectively focusing on the most relevant parts of the input, thereby improving both interpretability and performance. This fusion balances complexity and efficiency and offers superior emotion classification accuracy compared with standalone models, particularly for complex and mixed emotions. Its architecture is suited to handling varying text lengths and diverse linguistic structures, making it a robust choice over simpler or less adaptive models. Figure 3 shows the structure of the C-BiG-A technique.

Framework of C-BiG-A technique consisting of input, convolutional, recurrent, attention, and dense layers resulting in the softmax-based output.
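
To make the convolutional stage of Figure 3 concrete, the sketch below applies a bank of 1-D filters to a sequence of token embeddings. It is illustrative only: the number of filters (16), the kernel width (3), and the random inputs are assumptions and are not taken from the paper's hyperparameter table.

```python
import numpy as np


def conv1d_relu(X, kernels, bias):
    """Valid 1-D convolution over time followed by ReLU.

    X: (T, d) sequence of embeddings; kernels: (n_filters, k, d); bias: (n_filters,).
    Returns an array of shape (T - k + 1, n_filters).
    """
    n_filters, k, _ = kernels.shape
    T = X.shape[0]
    out = np.empty((T - k + 1, n_filters))
    for t in range(T - k + 1):
        window = X[t:t + k]  # k consecutive tokens
        out[t] = np.tensordot(kernels, window, axes=([1, 2], [0, 1])) + bias
    return np.maximum(out, 0.0)


rng = np.random.default_rng(0)
X = rng.normal(size=(7, 310))                        # seven tokens, 310-D embeddings
kernels = rng.normal(scale=0.05, size=(16, 3, 310))  # 16 trigram filters (assumed sizes)
features = conv1d_relu(X, kernels, np.zeros(16))
```

Each row of `features` summarises one window of three consecutive tokens, which is the sense in which the convolutional layer captures local n-gram patterns before the recurrent layer models longer-range context.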

CNNs can effectively extract features and information33. By sliding the convolutional kernel across different positions of the input sequence, a CNN can capture the changing local features and patterns of the sequence data; the convolutional layer extracts local features from the time series data using a sliding window. While the RNN has the benefit of being able to extract contextually related information, it can only take in a limited range of context and suffers from the long-term dependency problem. It is therefore essential to introduce a gating mechanism into the RNN architecture to retain the required information. Gating is not only an efficient remedy for the problems of gradient explosion and gradient vanishing, but it also partially addresses the difficulty of transferring information over longer distances. The commonly applied RNN frameworks with gate mechanisms are the LSTM and GRU structures, both of which have strong temporal processing abilities. Unlike the LSTM, the GRU does not maintain a separate memory cell: its internal architecture has only two gates, which decreases the number of training parameters and results in faster training. The forward computation of a GRU is as follows:

$$\:{r}_{t}=\sigma\:\left({W}_{r}\left[{h}_{t-1},{x}_{t}\right]+{b}_{r}\right)$$

(8)

$$\:{z}_{t}=\sigma\:\left({W}_{z}\left[{h}_{t-1},{x}_{t}\right]+{b}_{z}\right)$$

(9)

$$\tilde{h}_t=\tanh\left(W_h\left[r_t*h_{t-1},x_t\right]+b_h\right)$$

(10)

$$h_t=(1-z_t)*h_{t-1}+z_t*\tilde{h}_t$$

(11)

$$\:{y}_{t}=softmax\left({W}_{0}\cdot\:{h}_{t}+{b}_{0}\right)$$

(12)

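where \(x_t\) denotes the input vector at the current time step; \(h_{t-1}\) is the previous hidden state; \(r_t\) and \(z_t\) are the reset and update gates; \(\sigma\) is the sigmoid function and \(*\) denotes element-wise multiplication; \(W_r\), \(W_z\), and \(W_h\) denote the weight matrices; and \(b_r\), \(b_z\), and \(b_h\) denote the bias vectors. \(W_0\) and \(b_0\) are the weight matrix and bias of the softmax output layer in Eq. (12).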
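
A single forward GRU step following Eqs. (8) to (11) can be written compactly in NumPy. This is a minimal sketch with randomly initialised weights; the helper `init_params` and the toy dimensions are assumptions made for illustration, and the softmax output of Eq. (12) is omitted.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def gru_step(x_t, h_prev, params):
    """One forward GRU step following Eqs. (8)-(11)."""
    W_r, b_r, W_z, b_z, W_h, b_h = params
    concat = np.concatenate([h_prev, x_t])        # [h_{t-1}, x_t]
    r_t = sigmoid(W_r @ concat + b_r)             # reset gate, Eq. (8)
    z_t = sigmoid(W_z @ concat + b_z)             # update gate, Eq. (9)
    gated = np.concatenate([r_t * h_prev, x_t])   # [r_t * h_{t-1}, x_t]
    h_tilde = np.tanh(W_h @ gated + b_h)          # candidate state, Eq. (10)
    return (1.0 - z_t) * h_prev + z_t * h_tilde   # new state, Eq. (11)


def init_params(input_dim, hidden_dim, rng):
    """Random weights for illustration; a trained model would learn these."""
    shape = (hidden_dim, hidden_dim + input_dim)
    return tuple(
        p for _ in range(3)
        for p in (rng.normal(scale=0.1, size=shape), np.zeros(hidden_dim))
    )


rng = np.random.default_rng(0)
params = init_params(input_dim=4, hidden_dim=3, rng=rng)
h = np.zeros(3)
for x_t in rng.normal(size=(5, 4)):  # a sequence of five 4-D inputs
    h = gru_step(x_t, h, params)
```

Because each new state is a convex combination of the previous state and a tanh output, every component of `h` stays strictly between -1 and 1 when the initial state is zero.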

The Bi-GRU model can learn from both past and future information. It reads the input sequence more comprehensively, which helps to avoid losing information when processing longer sequences. \(g_t\) and \(g_t^{\prime}\) denote the outputs of the forward and backward GRU layers at time \(t\), written as \(g_t^{forward}\) and \(g_t^{backward}\) in Eqs. (13) and (14), respectively:

$$\:{g}_{t}^{forward}=GRU\left({x}_{t},{h}_{t-1}^{forward}\right)$$

(13)

$$\:{g}_{t}^{backward}=GRU\left({x}_{t},{h}_{t+1}^{backward}\right)$$

(14)

The Bi-GRU output \(\:{O}_{t}\) is presented as shown:

$$O_t=\overrightarrow{W}g_t+\overleftarrow{W}g_t^{\prime}+b_t$$

(15)

where \(\overleftarrow{W}\) and \(\overrightarrow{W}\) denote the weight matrices for the backward and forward GRU structures, respectively, and \(b_t\) is the bias vector of the output layer.
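
The bidirectional combination in Eqs. (13) to (15) can be sketched by running one GRU left to right and a second one right to left, then mixing the two state sequences. The code below is an illustration under simplifying assumptions: biases inside the GRU cell are dropped for brevity, all weights are random, and the dimensions are arbitrary.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def gru_step(x_t, h_prev, W_r, W_z, W_h):
    """Bias-free GRU step (biases dropped to keep the sketch short)."""
    hx = np.concatenate([h_prev, x_t])
    r, z = sigmoid(W_r @ hx), sigmoid(W_z @ hx)
    h_tilde = np.tanh(W_h @ np.concatenate([r * h_prev, x_t]))
    return (1.0 - z) * h_prev + z * h_tilde


def run_gru(xs, weights, hidden_dim):
    """Run a GRU over xs and return the hidden state at every time step."""
    h, states = np.zeros(hidden_dim), []
    for x_t in xs:
        h = gru_step(x_t, h, *weights)
        states.append(h)
    return np.stack(states)


def bi_gru(xs, fwd_weights, bwd_weights, W_fwd, W_bwd, b, hidden_dim):
    g_fwd = run_gru(xs, fwd_weights, hidden_dim)               # Eq. (13)
    g_bwd = run_gru(xs[::-1], bwd_weights, hidden_dim)[::-1]   # Eq. (14), re-aligned to t
    return g_fwd @ W_fwd.T + g_bwd @ W_bwd.T + b               # Eq. (15)


rng = np.random.default_rng(0)
T, input_dim, hidden_dim, out_dim = 6, 4, 3, 5


def new_weights():
    shape = (hidden_dim, hidden_dim + input_dim)
    return [rng.normal(scale=0.1, size=shape) for _ in range(3)]


xs = rng.normal(size=(T, input_dim))
O = bi_gru(
    xs, new_weights(), new_weights(),
    rng.normal(size=(out_dim, hidden_dim)), rng.normal(size=(out_dim, hidden_dim)),
    np.zeros(out_dim), hidden_dim,
)
```

The backward pass is reversed a second time so that row `t` of `g_bwd` summarises the inputs from position `t` to the end of the sequence, matching the forward states position by position.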

A linear generalized attention mechanism is adopted, which achieves near-linear growth in both time and space complexity. This mechanism significantly reduces training time, particularly when processing longer sequences. The attention matrix \(A\in{\mathbb{R}}^{L\times L}\) is defined as:

$$\:A(i,j)=K({q}_{i}^{T},{k}_{j}^{T})$$

(16)

Here, \(q_i\) and \(k_j\) denote the \(i\)th and \(j\)th row vectors of the query \(Q\) and key \(K\), respectively. The kernel function \(K(\cdot,\cdot)\) is defined as:

$$\:K\left(x,y\right)=\mathbb{E}\left[\varphi\:{\left(x\right)}^{T}\varphi\:\left(y\right)\right]$$

(17)

Here, \(\varphi\left(x\right)\) denotes a feature mapping function. Let \(Q^{\prime}, K^{\prime}\in{\mathbb{R}}^{L\times p}\) be the matrices whose row vectors are \(\varphi\left(q_i^T\right)\) and \(\varphi\left(k_j^T\right)\), respectively. The efficient attention based on this kernel definition is formulated as:

$$\widehat{Att}_{\leftrightarrow}\left(Q,K,V\right)={\widehat{D}}^{-1}\left(BV\right)$$

(18)

where \(B=Q^{\prime}(K^{\prime})^T\) and \(\widehat{D}=diag\left(B1_L\right)\). Here, \(\widehat{Att}\) represents the approximated attention, and the brackets specify the order of computation: evaluating \(BV\) as \(Q^{\prime}((K^{\prime})^TV)\) avoids forming the \(L\times L\) matrix \(B\) explicitly, which is what yields the near-linear cost. Table 2 specifies the key hyperparameters of the C-BiG-A technique.
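
The kernelised attention of Eqs. (16) to (18) can be checked numerically. The sketch below is a hedged illustration: the feature map `phi` (elu(x) + 1) is an assumed stand-in, since the text does not specify the mapping function, and the sequence length and dimensions are arbitrary. It compares the linear-cost ordering with a reference that builds \(B\) explicitly.

```python
import numpy as np


def phi(x):
    """Positive feature map elu(x) + 1; an assumed stand-in for the paper's mapping."""
    return np.where(x > 0, x + 1.0, np.exp(x))


def linear_attention(Q, K, V):
    """Kernelised attention D^{-1}(Q'((K')^T V)) without forming the L x L matrix."""
    Qp, Kp = phi(Q), phi(K)              # Q', K' with shape (L, p)
    KV = Kp.T @ V                        # (p, d): cost linear in L
    normaliser = Qp @ Kp.sum(axis=0)     # diagonal of D = diag(B 1_L)
    return (Qp @ KV) / normaliser[:, None]


def quadratic_attention(Q, K, V):
    """Reference version that builds B = Q'(K')^T explicitly (Eq. 18)."""
    B = phi(Q) @ phi(K).T                # (L, L)
    D_inv = 1.0 / B.sum(axis=1)
    return D_inv[:, None] * (B @ V)


rng = np.random.default_rng(0)
L, p, d = 12, 8, 4
Q, K, V = rng.normal(size=(L, p)), rng.normal(size=(L, p)), rng.normal(size=(L, d))
out_linear = linear_attention(Q, K, V)
out_quadratic = quadratic_attention(Q, K, V)
```

Both functions return the same values up to floating-point error; only the order of the matrix products differs, which is why the first version scales linearly with the sequence length while the second scales quadratically.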