Docker Offload, a fully managed service provided by Docker, supports GPU acceleration and helps you run compute-intensive AI workloads seamlessly.

Deploying AI applications requires substantial computing resources, especially GPUs, which developers often lack on their local laptops or existing cloud servers. This makes it difficult to build and test AI applications. To address this, Docker launched Docker Offload, which connects users to Docker's cloud engine and provisions the GPU acceleration that AI applications need. Developers can then use Docker as a fully managed service.

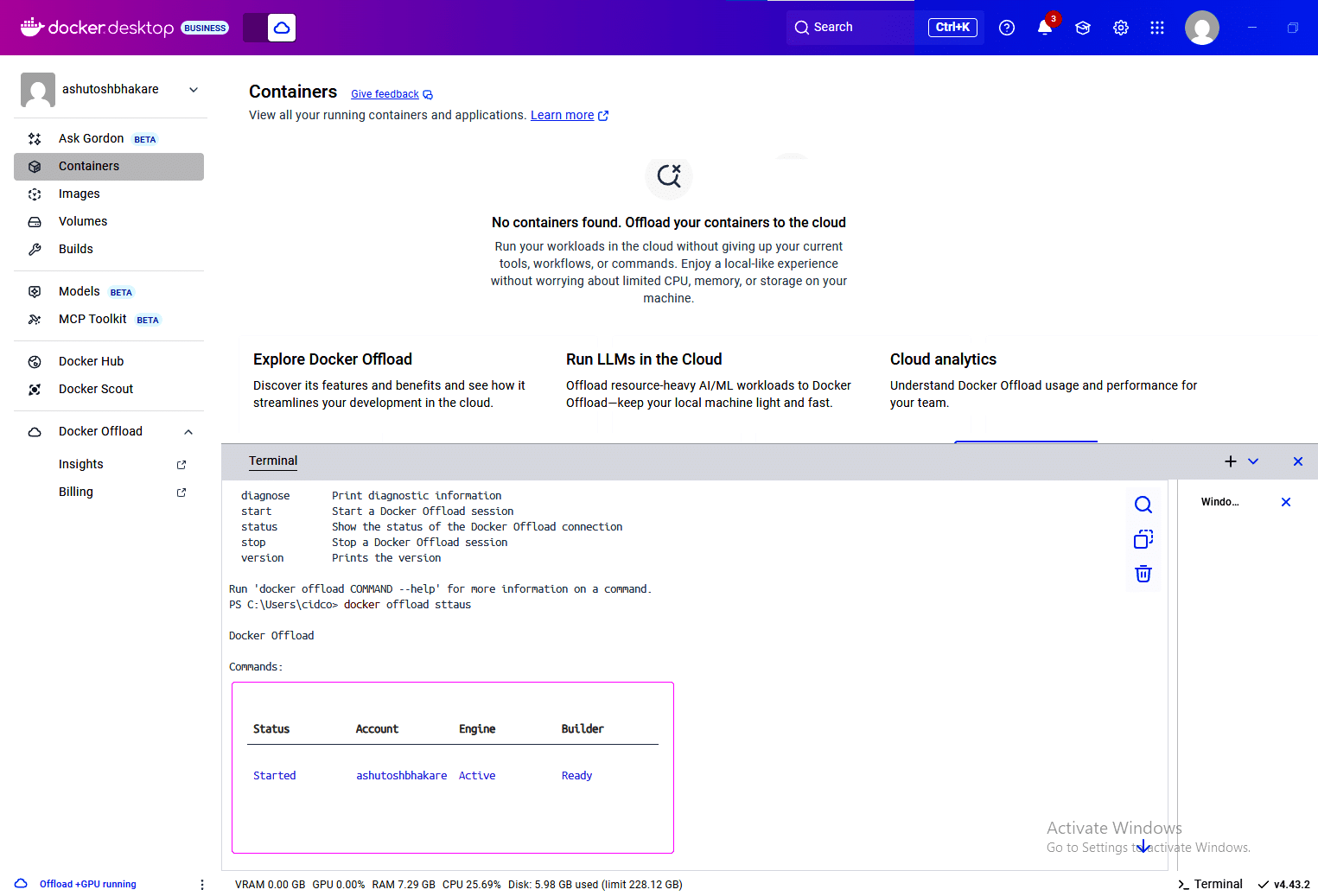

If your request to use Docker Offload is approved, you will see the screen shown in Figure 1.

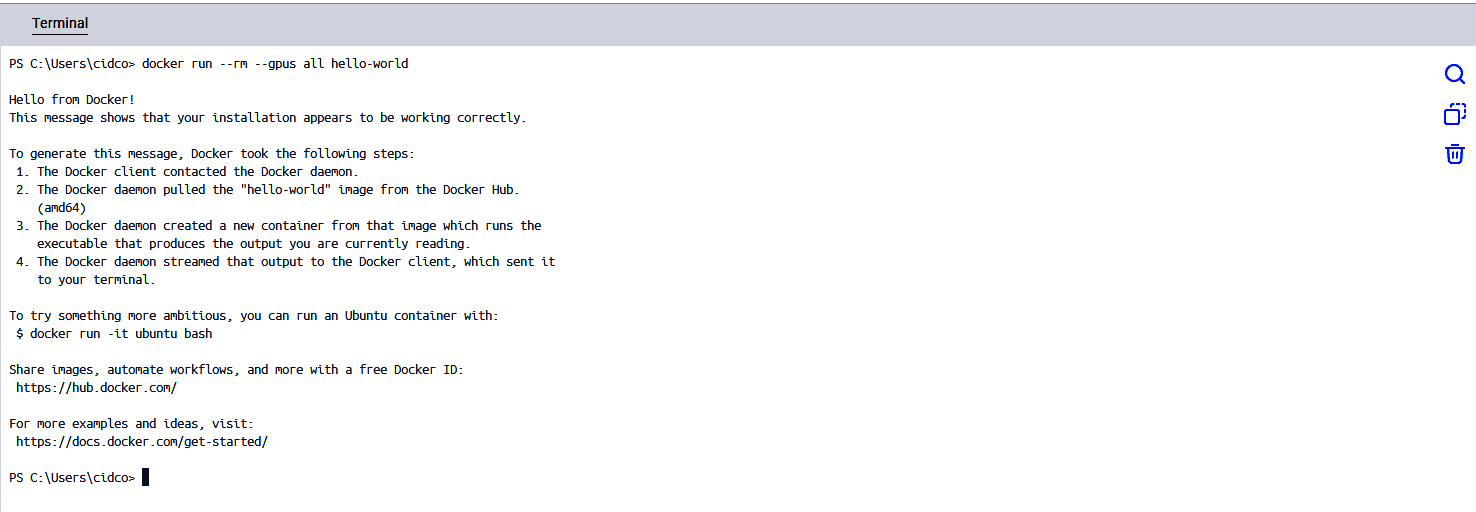

You can check GPU acceleration by starting a container with the --gpus option, as shown below:

# docker run --rm --gpus all hello-world

If you get output similar to the one shown in Figure 2, Docker Offload is working properly.

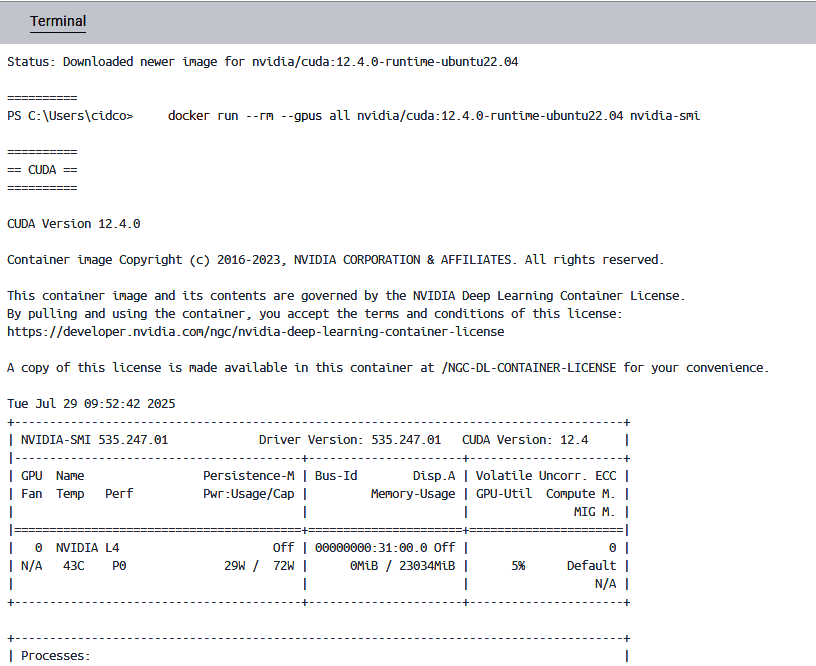
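The check above can also be scripted so that it reports a clear pass or fail. The following is a minimal sketch, not an official Docker tool: `check_gpu_container` is a hypothetical helper name, and it assumes only that `docker run --rm --gpus all` exits with a non-zero status when the GPU container cannot be started.

```shell
#!/bin/sh
# Hypothetical helper (not a Docker command): starts a throwaway container
# with all GPUs attached and reports success or failure via the exit status.
check_gpu_container() {
    # Image to run; defaults to the hello-world image used in the article.
    image="${1:-hello-world}"
    if docker run --rm --gpus all "$image" >/dev/null 2>&1; then
        echo "GPU container started: $image"
    else
        echo "GPU container failed to start: $image" >&2
        return 1
    fi
}
```

After sourcing the file, calling `check_gpu_container` with no arguments runs the same hello-world test, so the result can be used in a script or CI step.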

To inspect the NVIDIA GPU, driver version, and running processes, run nvidia-smi inside a CUDA container with the following command:

# docker run --rm --gpus all nvidia/cuda:12.4.0-runtime-ubuntu22.04 nvidia-smi

Figure 3 shows the output.
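If you only need the GPU model and memory rather than the full table, `nvidia-smi` can print selected fields as CSV. The sketch below wraps the same container command; `gpu_inventory` is a hypothetical helper name, and the output layout is an illustrative choice rather than anything defined by Docker Offload.

```shell
#!/bin/sh
# Hypothetical helper: prints one line per GPU visible inside the container.
gpu_inventory() {
    # CUDA image from the article; pass another image as the first argument.
    image="${1:-nvidia/cuda:12.4.0-runtime-ubuntu22.04}"
    # --query-gpu and --format=csv,noheader are standard nvidia-smi options.
    out=$(docker run --rm --gpus all "$image" \
        nvidia-smi --query-gpu=name,memory.total --format=csv,noheader) || return 1
    # Each CSV line has the form "<name>, <memory>"; split on the comma.
    printf '%s\n' "$out" | while IFS=, read -r name mem; do
        mem="${mem# }"   # drop the space that follows the comma
        printf 'GPU: %s | memory: %s\n' "$name" "$mem"
    done
}
```

Calling `gpu_inventory` after sourcing the file prints a short summary line for each GPU, and the function returns a non-zero status if the container could not be run.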

Benefits of Docker Offload include:

- Cloud-based remote build.

- An NVIDIA L4 GPU backend, which lets developers run compute-intensive workloads.

- An encrypted tunnel between Docker Desktop and your cloud environment.

To learn more about this fully managed service, see the Docker documentation: https://docs.docker.com/offload/about/.