Summary: For humans, fetch is a simple game, but for a robot, finding a specific object in a cluttered room is a hard computational problem. Researchers found a surprising source of help in dogs, the world champions of fetch. By studying how dogs interpret human pointing gestures and gaze, the team developed a new AI framework called LEGS-POMDP.

This system allows robots to combine natural language (words) and physical gestures (pointing) to navigate uncertainty. In laboratory tests, the robot achieved an 89% success rate in finding the correct object, significantly outperforming systems that rely solely on words or vision.

Key facts:

- Multimodal reasoning: The robot doesn’t just “hear” commands. It uses a “cone of probability” derived from the positions of the person’s eye, elbow, and wrist to narrow down the object’s location.

- POMDP framework: The robot uses a “partially observable Markov decision process” to deal with uncertainty. If the robot isn’t sure what it’s looking at, it will move to get a better view, rather than guessing blindly.

- Dog inspiration: The gesture model draws on research from the Brown Dog Lab, which investigates how dogs intuitively solve cooperative problems with humans through gaze and gestures.

- Improved performance: Combining language and gestures yielded a success rate of nearly 90%, suggesting that showing matters as much as telling when interacting with AI.

- Vision-language model (VLM): The system integrates an AI model that can “see” the scene and understand complex natural language descriptions at the same time.

Source: Brown University

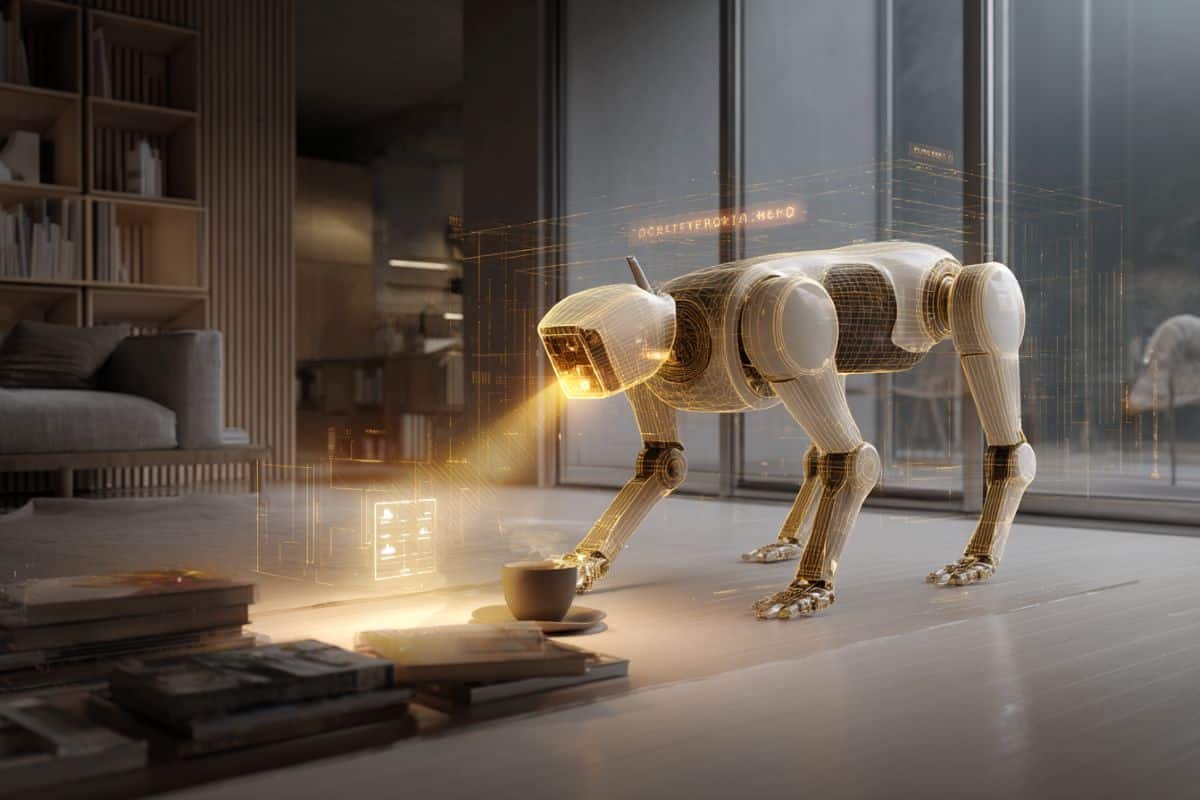

Robotic assistants that can pick up items for people, whether it’s in the kitchen or on the work floor, can be extremely helpful. Now, researchers at Brown University have developed a way to help robots know exactly what items users want them to retrieve.

The new approach allows robots to use input from both human language and gestures when reasoning about how to find and retrieve target objects. In a study to be presented on Tuesday, March 17 at the International Conference on Human-Robot Interaction in Edinburgh, Scotland, researchers showed that the approach had an 89% success rate in finding the correct object in complex environments, outperforming other object search approaches.

“Looking for things requires robots to navigate vast environments,” said Ivy He, a graduate student at Brown University and lead author of the study. “With current technology, robots are pretty good at identifying objects, but the task becomes more difficult when the environment is cluttered, objects move around, or objects are hidden by other objects. So in this study, we use both language and gestures to aid in that exploration task.”

This study uses an approach to robot planning called a POMDP (partially observable Markov decision process), a mathematical framework that allows robots to reason under uncertainty. In the real world, robots rarely have complete knowledge of their surroundings. Different types of objects can look alike. There may be more than one instance of a given object in a room. Items may be partially or completely hidden from view.

To search successfully, robots must act even when they are not sure what they are seeing. Without a way to manage that uncertainty, a robot can freeze. Even worse, it may make an overconfident final decision based on incomplete information.

A POMDP turns ambiguity into a probabilistic framework that helps a robot track how confident it is about what is in the world and update that belief as new information arrives, including information from large vision and language models. Along the way, the robot can choose actions (such as moving to get a better view) that help it learn more before making its final decision.
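The belief tracking described above can be illustrated with a minimal sketch. It is not the researchers' implementation: the location names, the likelihood values, the `commit_threshold` parameter, and the simple threshold rule (standing in for real POMDP planning, which reasons about future observations) are all assumptions made for illustration.

```python
def update_belief(belief, likelihoods):
    """Bayes update: posterior is proportional to prior times likelihood.

    belief      -- dict mapping candidate location -> prior probability
    likelihoods -- dict mapping candidate location -> P(observation | target is there)
    """
    unnormalized = {loc: belief[loc] * likelihoods[loc] for loc in belief}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation has zero probability under every hypothesis")
    return {loc: p / total for loc, p in unnormalized.items()}


def choose_action(belief, commit_threshold=0.8):
    """Greedy stand-in for a planner (an assumption, not a full POMDP solver).

    Commit to the most likely location once the belief is confident enough;
    otherwise take another look at it to gather more evidence.
    """
    best = max(belief, key=belief.get)
    if belief[best] >= commit_threshold:
        return ("pick", best)
    return ("look", best)


if __name__ == "__main__":
    # Hypothetical scene: the target could be in one of three places.
    belief = {"table": 1 / 3, "shelf": 1 / 3, "floor": 1 / 3}
    # Hypothetical detector output: how well each hypothesis explains the view.
    observation = {"table": 0.7, "shelf": 0.2, "floor": 0.1}

    for step in range(2):
        belief = update_belief(belief, observation)
        print(step, choose_action(belief), belief)
```

In this toy run the robot keeps looking after the first observation and commits only after a second, consistent observation pushes its confidence past the threshold, which is the behavior the article describes as moving to get a better view rather than guessing.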

The innovation in this latest research is a POMDP that incorporates input from both language and human gestures, such as pointing to a target object. To build the gesture component, He drew on insights from a lab at Brown led by Daphna Buchsbaum, an associate professor of cognitive and psychological sciences, which studies how dogs, the undisputed world champions of fetch, interpret human pointing.

Building on this expertise, He and student Madeline Pelgrim studied the finer points of human pointing and how dogs interpret pointing gestures. This research helped He model the target of a pointing gesture as lying within a cone of probability.

“What we discovered is that humans use their gaze to match what they’re pointing at,” He said. “So it’s natural to create a cone based on the connecting line from the eye to the elbow to the wrist. We found that this was a fairly accurate approximation of where someone was pointing.”
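A rough sketch of such a cone follows. The article does not give the exact geometry, so several choices here are assumptions: the cone's axis is taken as the average of the eye-to-wrist and elbow-to-wrist directions, the apex sits at the wrist, and the weight falls off as a Gaussian in the angle from the axis with a hypothetical spread `sigma_deg`.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _unit(v):
    n = math.sqrt(sum(x * x for x in v))
    if n == 0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(x / n for x in v)


def pointing_axis(eye, elbow, wrist):
    """Assumed cone axis: mean of the eye->wrist and elbow->wrist directions."""
    eye_ray = _unit(_sub(wrist, eye))
    arm_ray = _unit(_sub(wrist, elbow))
    return _unit(tuple(a + b for a, b in zip(eye_ray, arm_ray)))


def cone_weight(obj, eye, elbow, wrist, sigma_deg=15.0):
    """Unnormalized weight in (0, 1] that `obj` is the pointing target.

    The weight is 1 on the cone axis and decays with the angle between the
    axis and the wrist->object direction (Gaussian falloff, an assumption).
    """
    axis = pointing_axis(eye, elbow, wrist)
    to_obj = _unit(_sub(obj, wrist))
    cos_angle = sum(a * b for a, b in zip(axis, to_obj))
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding error
    angle_deg = math.degrees(math.acos(cos_angle))
    return math.exp(-0.5 * (angle_deg / sigma_deg) ** 2)


if __name__ == "__main__":
    # Hypothetical 3D keypoints in meters (x forward, z up).
    eye, elbow, wrist = (0.0, 0.0, 1.6), (0.2, 0.0, 1.2), (0.5, 0.0, 1.2)
    axis = pointing_axis(eye, elbow, wrist)
    ahead = tuple(w + 2.0 * a for w, a in zip(wrist, axis))  # on the axis
    behind = (-2.0, 0.0, 1.2)                                # behind the person
    print(cone_weight(ahead, eye, elbow, wrist))
    print(cone_weight(behind, eye, elbow, wrist))
```

Objects near the axis receive weights close to 1, and objects far outside the cone receive weights close to 0. These weights can then serve as one source of evidence in the robot's belief about where the target is.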

“Our research at the Brown Dog Lab shows how sophisticated dogs are at communicating with humans, solving many of the cooperation problems we want robots to solve,” Buchsbaum said. “This makes dogs a natural model for intuitive human-nonhuman cooperation. This research translates dogs’ intuitive understanding of human gaze and pointing into a probabilistic model that allows robots to handle the ambiguity inherent in human communication, bringing us closer to truly intuitive robot assistants.”

He then combined the gesture model with a vision-language model (VLM), an AI system designed to interpret visual scenes together with natural language descriptions. The result is a POMDP that allows robot planning to incorporate both language and gestures.
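One simple way to picture this combination is to multiply a per-object language score by a per-object gesture score and renormalize. This is a sketch only: it assumes the two cues are independent, and the object names and score values are hypothetical stand-ins for real VLM and gesture-model outputs, not figures from the study.

```python
def fuse(language_scores, gesture_scores):
    """Combine two per-object scores by multiplying and renormalizing.

    Treats language and gesture as independent evidence (an assumption).
    Both dicts must cover the same set of candidate objects.
    """
    if language_scores.keys() != gesture_scores.keys():
        raise ValueError("both score dicts must cover the same objects")
    fused = {name: language_scores[name] * gesture_scores[name]
             for name in language_scores}
    total = sum(fused.values())
    if total == 0:
        raise ValueError("no object is consistent with both cues")
    return {name: score / total for name, score in fused.items()}


if __name__ == "__main__":
    # "Bring me the red mug": language alone cannot separate the two mugs.
    language = {"red mug A": 0.45, "red mug B": 0.45, "blue bowl": 0.10}
    # Pointing alone cannot separate mug A from the bowl sitting next to it.
    gesture = {"red mug A": 0.8, "red mug B": 0.1, "blue bowl": 0.7}

    combined = fuse(language, gesture)
    print(max(combined, key=combined.get), combined)
```

In this toy scene each cue is ambiguous by itself, but their product singles out one object, which mirrors the article's finding that the two inputs together outperform either alone.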

In a laboratory experiment, the researchers asked a quadrupedal robot to find various objects scattered around a laboratory space. Experiments showed that by using a combination of gestures and language, the robot was able to find the correct object nearly 90% of the time, much better than using either input alone.

For He and her co-authors, this work is a step toward robots that can work alongside people at home and at work.

“The framework we developed helps pave the way for seamless multimodal human-robot interactions,” said study co-author Jason Liu, a postdoctoral fellow at MIT who worked on the project while completing his Ph.D. at Brown. “In the future, we will be able to communicate with assistant robots in the same way that humans interact through language, gestures, gaze, demonstrations, and more.”

This research was supported through Brown’s AI Research Institute on Interaction for AI Assistants (ARIA), which receives funding from the National Science Foundation.

“This is a really great example of how strengthening the collaboration between computer science and cognitive science can lead to more natural and effective human-machine interactions,” said Ellie Pavlick, an associate professor of computer science at Brown University who leads ARIA. “Embracing what we know about how humans naturally want to communicate and building systems that align with human tendencies and intuitions about behavior is the right way forward.”

Funding: This research was supported by the National Science Foundation (2433429), the Long Range Autonomy Program for Ground and Underwater Robotics (GR5250131), and the Office of Naval Research (N0001424-1-2784, N0001424-1-2603).

Answers to key questions:

Answer: Because humans are actually quite “awkward” communicators. We point vaguely or use phrases like “that thing over there.” Dogs have evolved over thousands of years to become experts at reading our body language and gaze. By teaching robots to track a person’s eyes, elbow, and wrist, much as dogs do, scientists can help them work out exactly what “it” is, even in a messy room.

Answer: Not exactly. The robot uses a mathematical framework called a POMDP. Dogs use instinct; robots use probability. The robot calculates how confident it is about the location of an object. When its confidence is low, it “thinks” like an explorer: it moves around, looks under tables, and views the scene from different angles to gather more data before making its final selection.

Answer: This is a major step toward bringing robots into homes and workplaces. Whether it is a robotic assistant in a hospital retrieving a specific surgical instrument or a home robot helping someone with mobility issues, a robot that understands gestures does not need a perfectly worded voice command for every task.

Editorial note:

- This article was edited by the editors of Neuroscience News.

- Journal articles were reviewed in full text.

- Additional context added by staff.

About this AI/Robotics research news

Author: Kevin Stacy

Source: Brown University

Contact: Kevin Stacy – Brown University

Image: Image credited to Neuroscience News

Original research: The findings will be presented at the ACM/IEEE International Conference on Human-Robot Interaction (HRI).