Everyone agrees that accelerated computing is energy-efficient computing.

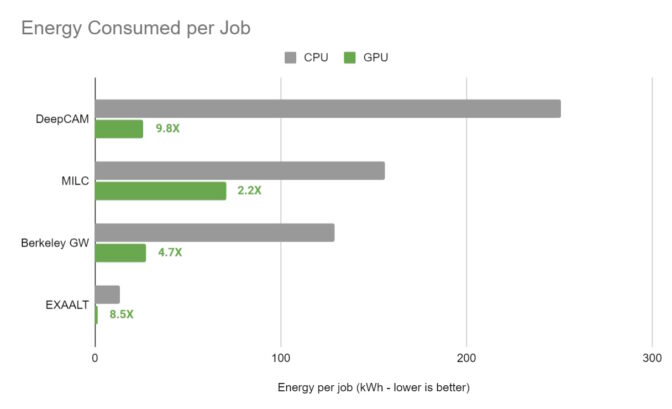

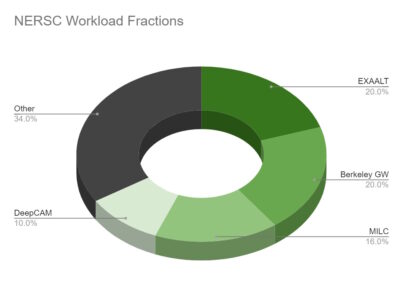

The National Energy Research Scientific Computing Center (NERSC), the U.S. Department of Energy’s lead facility for open science, measured results for four major high-performance computing and AI applications.

They measured how fast their applications ran and how much energy they consumed on CPU-only and GPU-accelerated nodes on Perlmutter, one of the world’s largest supercomputers with NVIDIA GPUs.

The results were clear. Accelerated with NVIDIA A100 Tensor Core GPUs, the applications saw an average 5x improvement in energy efficiency. The weather application recorded a 9.8x gain.

Save megawatts with GPUs

On a server with four A100 GPUs, NERSC achieved up to a 12x speedup over a dual-socket x86 server.

In other words, at the same performance level, a GPU-accelerated system consumes 588 megawatt-hours less energy per month than a CPU-only system. Running the same workload on a 4-way NVIDIA A100 cloud instance for a month saved researchers more than $4 million compared to a CPU-only instance.
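
To relate the monthly energy figure to the "megawatts" in the heading, the saving can be converted into an equivalent continuous power draw. The following is a back-of-the-envelope sketch, not part of the NERSC analysis: it assumes a 30-day month (the month length is not stated), and the function name `average_power_mw` is illustrative.

```python
# Convert an energy saving per month (MWh) into the equivalent
# average power reduction (MW).
# Assumption: a 30-day month; the article does not give the month length.

HOURS_PER_DAY = 24


def average_power_mw(energy_mwh: float, days: int = 30) -> float:
    """Return the constant power (MW) that consumes `energy_mwh` over `days` days."""
    return energy_mwh / (days * HOURS_PER_DAY)


saved_mwh_per_month = 588  # monthly saving quoted in the article
avg_mw = average_power_mw(saved_mwh_per_month)
print(f"{avg_mw:.2f} MW average reduction")  # roughly 0.82 MW
```

Under that assumption, 588 megawatt-hours per month corresponds to a little under one megawatt of continuous load.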

Measuring real-world applications

This result is important because it is based on measurements of real-world applications, not synthetic benchmarks.

This advantage means that the 8,000+ scientists using Perlmutter can tackle bigger challenges and open the door to further breakthroughs.

Among the many use cases for the more than 7,100 A100 GPUs on Perlmutter, scientists are investigating subatomic interactions to find new green energy sources.

Advance science at every scale

Applications tested by NERSC span molecular dynamics, materials science, and weather forecasting.

For example, MILC simulates the fundamental forces that hold particles within atoms. It is used to advance quantum computing, study dark matter, and search for the origin of the universe.

BerkeleyGW helps simulate and predict the optical properties of materials and nanostructures, an important step towards the development of more efficient batteries and electronic devices.

With an 8.5x efficiency improvement on the A100 GPU, EXAALT solves fundamental challenges in molecular dynamics. This gives researchers the equivalent of a short video of atomic motion, rather than a series of snapshots provided by other tools.

The fourth application in the test, DeepCAM, will be used to detect hurricanes and atmospheric rivers in climate data. It recorded a 9.8x energy efficiency improvement when accelerated with A100 GPUs.

Savings from fast computing

The NERSC results echo earlier calculations of the potential savings from accelerated computing. For example, a separate analysis conducted by NVIDIA found that GPUs are 42x more energy efficient than CPUs for AI inference.

That means switching all CPU-only servers running AI around the world to GPU-accelerated systems could save a staggering 10 trillion watt-hours of energy annually. This is equivalent to the energy that 1.4 million households consume in one year.
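
As a sanity check on the household comparison, the two quoted figures imply a per-household annual consumption. This is a minimal sketch using only the numbers above; the function name `per_household_kwh` is illustrative, and the result is a derived value rather than a figure stated in the analysis.

```python
# Implied annual energy use per household, from the two figures quoted above.

TOTAL_SAVINGS_WH = 10e12  # "10 trillion watt-hours" saved per year
HOUSEHOLDS = 1.4e6        # "1.4 million households"


def per_household_kwh(total_wh: float, households: float) -> float:
    """Return the energy per household in kilowatt-hours."""
    return total_wh / households / 1_000


kwh = per_household_kwh(TOTAL_SAVINGS_WH, HOUSEHOLDS)
print(f"{kwh:,.0f} kWh per household per year")  # roughly 7,143 kWh
```

The comparison therefore assumes each household uses about 7 megawatt-hours of energy per year.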

Accelerate your enterprise

You don’t have to be a scientist to use accelerated computing to improve energy efficiency.

Pharmaceutical companies are using GPU-accelerated simulation and AI to speed up the drug discovery process. Automakers like the BMW Group use it to model entire factories.

They are among the growing number of companies at the forefront of what NVIDIA founder and CEO Jensen Huang calls the industrial HPC revolution, fueled by accelerated computing and AI.