It’s been a long day. You’re sitting on the couch, your dogs piled up around you, mindlessly scrolling through social media. Stand-up comedy, political commentary, dachshund videos. The algorithm seems to be working fine. Then a video of a sobbing American soldier stops you mid-scroll.

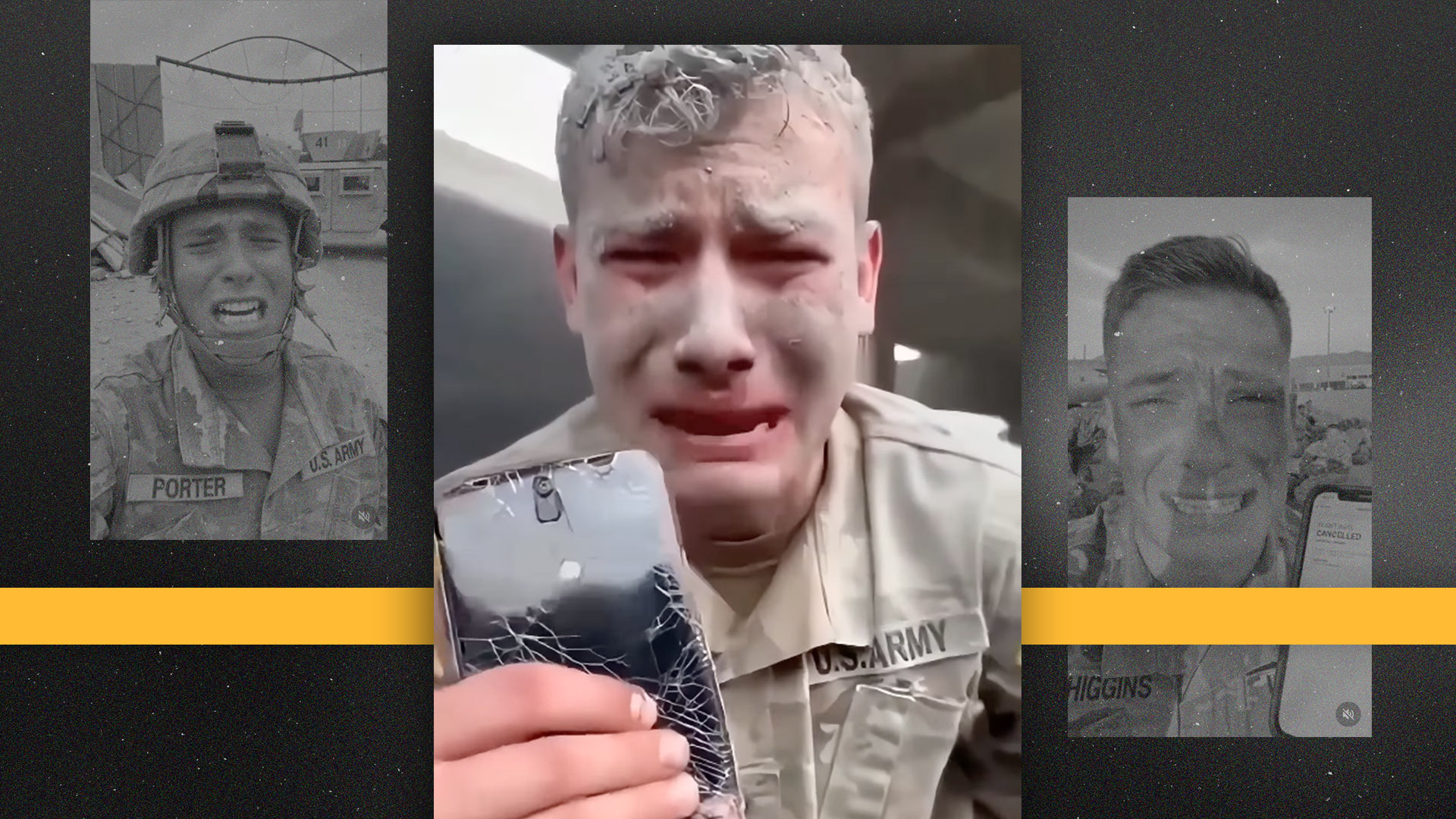

But before you can say what it is, something seems off. The audio is muddy and doesn’t quite match the video. If you look closely, the labels on the uniform appear to have been peeled off, and the rank insignia looks incorrect.

Then it hits you: There is no soldier, and there never was.

The video is one of many AI-generated clips depicting distressed American service members that have flooded social media in recent months. Researchers told Straight Arrow that the clips were not the work of a foreign spy agency but were likely created to support a business model.

These videos are designed to provoke an emotional response before critical thinking can catch up, and every time one works, someone gets paid.

Experts studying the prevalence of AI-generated media say there are dozens of accounts posting such content. Fact-checking site PolitiFact previously reported on the story, saying its reporters found nearly a dozen accounts associated with the content. After PolitiFact’s investigation, the social media platform removed these accounts, but Straight Arrow was able to easily find other accounts posting the same content.

The videos often show people in uniform weeping, talking to the camera and sometimes screaming. Although they appear genuine on the surface, astute users can spot problems. Erroneous markings on a uniform or other AI-generated artifacts usually cast doubt on a video’s authenticity.

Emotional business model

Enough people fall for these videos to make the business model profitable.

In late 2025 and early 2026, a “Jessica Foster” Instagram account appeared online. The account depicted a young woman in the military who was a vocal supporter of President Donald Trump’s “America First” movement. She referred to herself as an “American military girl.”

The posts showed her in realistic settings, in photos that looked real. In one photo, she posed with an F-22 Raptor. In another, she wore a military uniform with a short skirt and high heels as she walked alongside Trump on an airport tarmac.

The account quickly gained 1 million followers.

However, subsequent reports revealed that the account was fake. Its real purpose was to direct users to paid adult content.

“If you have dozens or hundreds of people accessing an app or an OnlyFans page, that could be hundreds of dollars, thousands of dollars, maybe tens of thousands of dollars,” said Daniel Schiff, an associate professor at Purdue University and co-director of the Governance and Responsible AI Lab.

The crying soldier videos work a little differently. Rather than acting as billboards for outside revenue sources, these accounts farm payments directly from the platform: the more followers an account has, the more advertising revenue it can collect from the social media company itself. Schiff said these accounts can gain followers quickly.

“You can see a few posts, up to a few dozen posts, within days to weeks,” he told Straight Arrow. “Some people are posting monetized content pretty quickly.”

Why does this work on people?

The raw emotion depicted in these videos is no coincidence. It is the main reason the videos work so well, and it exploits a psychological mechanism that is more effective than most people care to admit.

“We’re at the point where humans will never be able to tell the difference,” Sarah Barrington, an AI researcher and Ph.D. candidate at the University of California, Berkeley, told Straight Arrow.

Barrington conducted a study on people’s ability to detect AI-generated voice clones and found that they did no better than a coin toss.

“We gave real and fake audio to a large group of people – hundreds of listeners, hundreds of speakers – and found that 60% of the time they couldn’t tell what was real and what was fake,” Barrington said.

When she added real voices to the mix, she said, 80% couldn’t tell the generated voice from the real one. Detection becomes even more difficult when creators design content to make people feel something first.

“When people are in this emotional state, they can’t make logical decisions,” Barrington said. “People who experienced a traumatic event in the last year were twice as likely to be cheated on.”

The obvious solution is more media and AI literacy training, but researchers aren’t sure that is enough.

Why aren’t current measures working?

Companies have tools that can catch these videos before they are published online. But they are not working.

Meta, Google, Microsoft and OpenAI are all members of the Coalition for Content Provenance and Authenticity (C2PA). The coalition’s standard gives platforms a way to automatically detect and label AI-generated content.

“When you generate AI video, it almost always includes a watermark these days,” says Barrington. “When these are shared, it flags the platform. But most of these platforms definitely don’t use that very simple watermark.”

Even if a platform flags a post as fake, that doesn’t mean users will believe the label.

“Just because you put a label on it and say, ‘Oh, this is manipulated,’ doesn’t mean people will pick up on it, and it doesn’t make them truly disbelieve the false claims,” Schiff said.

However, the reason companies fall short in enforcing their own rules may be more financial than technical.

“Content drives traffic, and traffic drives money,” Schiff says. “These bad guys, these spreaders, are leveraging the same kinds of strategies that social media platforms have built their own engagement methods on.”

Straight Arrow provided Meta with a list of AI-generated videos. The company said it is investigating these accounts.

“As technology evolves, it can become difficult to identify content generated by AI,” the company wrote in an email. “We are continually working to improve our systems and capabilities for labeling this content.”

Almost all social media platforms have AI content policies similar to Meta’s. Straight Arrow also found a number of AI-generated videos on TikTok that clearly showed fake soldiers crying.

What can users do?

Despite the ever-evolving nature of AI, there is still hope for users looking to separate fact from fiction.

“I’m totally back to the who, what, where, and when,” Barrington told Straight Arrow. “Can you answer some basic questions about this that you’re sharing? Let’s get back to basic media literacy.”

She emphasized that this is not media forensics, just basic practice. And the cost of being wrong about AI-generated content is more immediate than some realize.

“You look kind of silly,” Barrington said. “If you share this, you’ll get the wrong impression. You’ll only lose credibility in your own social circle.”

The responsibility for identifying AI content was never meant to fall solely on the shoulders of users scrolling late into the night. Researchers say a deeper problem predates the individual videos.

“We unlocked a lot of these capabilities certainly before we had detection capabilities or before we had social resilience, so we’re now trying to address these issues reactively,” Schiff said. “That’s probably going to be the bulk of my responsibility.”