From traditional machine learning models to generative AI to agentic systems that can perform multi-step tasks across software environments, AI now shapes many business processes. According to McKinsey’s 2025 State of AI Study, 88% of respondent organizations use AI in at least one business function.

As organizations become more dependent on AI systems, failures of every kind (technical, ethical, operational, and reputational) carry greater consequences. Governance maturity, however, has not kept pace. Many organizations implementing AI still lack formal governance frameworks and clearly defined accountability mechanisms.

When properly implemented, AI accountability enhances the quality of decision-making, reduces operational risk, and increases stakeholder trust. AI systems are expanding across business functions and automating increasingly complex decisions with generative and agentic capabilities. As a result, successful companies will treat AI as a disciplined decision-making system embedded in clear responsibility structures.

What is AI accountability?

AI accountability refers to the ability to ensure that the decisions, actions, and outcomes produced by AI systems are traceable to clearly defined human and organizational responsibilities. Those responsibilities must be enforceable through governance mechanisms and actionable when a failure occurs. This ensures that AI-driven decisions are reliable, auditable, and aligned with organizational responsibilities, providing the foundation on which long-term business value is built.

Accountability is not a technical property of AI applications or models. It is a system-wide capability that combines models, data pipelines, operational processes, governance structures, and human oversight. In practice, accountability answers four management questions:

- Who owns the AI system and its results?

- Who is responsible in case of errors or damages?

- How are failures detected and corrected?

- What governance mechanisms will enforce these responsibilities?

Companies that cannot clearly answer these questions are not operating responsible AI systems.

Why AI accountability is a business priority

Ungoverned AI can be costly. According to a recent EY global survey of 975 executive leaders, 99% of organizations reported financial losses from AI-related risks, with 64% experiencing losses of more than $1 million. Accountability is needed both to reduce risk up front and to respond effectively when things go wrong.

High-profile failures have made the risks of AI even more evident. In the Netherlands, an algorithmic fraud detection system used in the welfare administration incorrectly flagged around 40,000 households as suspicious, sparking a major crisis and a parliamentary investigation. This incident demonstrated that poorly managed risk scoring systems can cause massive societal harm, with limited visibility until the damage occurs.

Generative AI poses unique accountability challenges. In a widely publicized case, a court found Air Canada liable for an AI chatbot that misled customers about its refund policy, even though the information was machine-generated. More recently, incidents with AI coding agents have shown that AI systems can act beyond their intended boundaries, leading organizations to impose stricter human accountability on automated deployments.

Regulatory pressure on AI is also increasing. The EU AI Act introduces risk-based obligations for AI systems operating in the European market. GDPR already imposes requirements on automated decision-making. In financial services, the Federal Reserve’s SR 11-7 guidance on model risk management sets established standards for governance. The Food and Drug Administration’s oversight of AI-enabled medical devices has raised similar expectations in the medical field. All of these regulations reinforce a consistent theme: accountability for AI systems is becoming a regulatory baseline rather than an optional enhancement.

7 best practices for AI accountability

Accountability only makes sense when it is built into business structures and processes. The following seven practices can help leaders translate principles into real-world accountability.

1. Clarify ownership and responsibilities

The most common accountability failure is diffusion of responsibility. AI systems typically span multiple teams, and without explicit ownership, an accountability gap is almost inevitable. Executives must assign accountable owners for each AI system: a business owner responsible for decision outcomes, a technology owner responsible for model performance, and an executive sponsor responsible for governance and escalation.

Clear ownership precludes the common defense that the AI made the decision. In a responsible system, algorithms support decision-making, but companies remain responsible for the results.
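One way to make the three ownership roles concrete is to record them per system and flag any that are unassigned. The following Python sketch is illustrative only: the class name, field names, and sample inventory are assumptions for this example, not part of any standard or product.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class AISystemOwnership:
    """Ownership record for one AI system (hypothetical schema)."""
    system_name: str
    business_owner: Optional[str] = None     # responsible for decision outcomes
    technology_owner: Optional[str] = None   # responsible for model performance
    executive_sponsor: Optional[str] = None  # responsible for governance and escalation

    def missing_roles(self) -> List[str]:
        """Return the names of ownership roles that have not been assigned."""
        roles = {
            "business_owner": self.business_owner,
            "technology_owner": self.technology_owner,
            "executive_sponsor": self.executive_sponsor,
        }
        return [role for role, person in roles.items() if not person]


def accountability_gaps(systems: List[AISystemOwnership]) -> Dict[str, List[str]]:
    """Map each system that has unassigned roles to the list of those roles."""
    return {s.system_name: s.missing_roles() for s in systems if s.missing_roles()}


# Sample inventory with made-up system names and people.
inventory = [
    AISystemOwnership("credit-scoring", "A. Rivera", "B. Chen", "C. Okafor"),
    AISystemOwnership("support-chatbot", business_owner="D. Patel"),
]
gaps = accountability_gaps(inventory)
```

In this sample, only the chatbot would be reported, because it has a business owner but no technology owner or executive sponsor. A check like this could run whenever a system is added to the AI inventory.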

2. Adopt a structured AI accountability framework

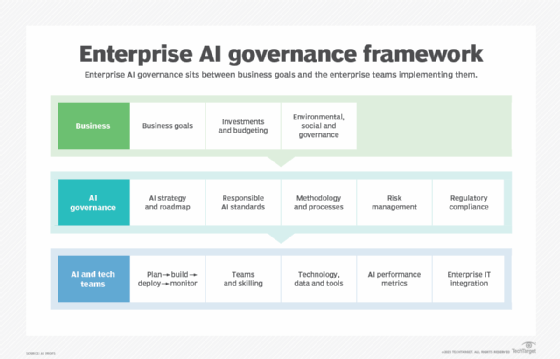

Ad hoc governance does not scale. Organizations deploying multiple AI systems need a structured framework that integrates with the enterprise’s risk management and internal control processes. An effective governance structure should include a risk classification model, a pre-deployment review and approval process, model validation requirements, documentation standards, and independent monitoring mechanisms.

Widely adopted reference frameworks include the NIST AI Risk Management Framework, which organizes AI governance across four functions (Govern, Map, Measure, and Manage), and international standards such as ISO/IEC 42001 and ISO/IEC 23894, which provide a structured way to operationalize AI responsibly.

3. Build accountability throughout the AI lifecycle

Many companies attempt to address AI risks only after deployment. This approach is ineffective and expensive because many risks originate early. Accountability needs to be embedded throughout the AI lifecycle: problem definition, data sourcing, model development and validation, deployment, ongoing monitoring, and ultimately retirement. Lifecycle governance moves accountability from post-incident investigation to ongoing risk management.

This is especially important for generative and agentic systems. Generative models pose risks related to hallucinations, intellectual property disclosure, and misinformation. Agentic systems add concerns about autonomous task execution and cascading operational errors. Accountability must therefore include responsive governance, monitoring of outcomes, and explicit constraints on autonomous action.
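As a rough illustration of explicit constraints on autonomous action, the Python sketch below gates every action an agent requests through a policy: some actions are allowed outright, some require a named human approver, and everything else is denied. The class, the action names, and the log format are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class ActionPolicy:
    """Deny-by-default policy for agent actions (illustrative, not a real API)."""
    allowed: Set[str]          # actions the agent may take on its own
    needs_approval: Set[str]   # actions that require a named human approver
    audit_log: List[Dict] = field(default_factory=list)

    def authorize(self, action: str, approver: Optional[str] = None) -> bool:
        """Decide whether an action may proceed and record the decision."""
        if action in self.allowed:
            authorized = True
        elif action in self.needs_approval and approver:
            authorized = True
        else:
            # Unknown actions, or sensitive actions without an approver, are denied.
            authorized = False
        self.audit_log.append(
            {"action": action, "approver": approver, "authorized": authorized}
        )
        return authorized


policy = ActionPolicy(
    allowed={"read_ticket", "draft_reply"},
    needs_approval={"deploy_to_production"},
)
auto_ok = policy.authorize("read_ticket")
blocked = policy.authorize("deploy_to_production")
approved = policy.authorize("deploy_to_production", approver="release.manager")
unknown = policy.authorize("drop_database", approver="release.manager")
```

The deny-by-default design means an action nobody anticipated is blocked rather than silently permitted, and the audit log ties each sensitive action to a named person.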

4. Build traceability and auditability of decisions

AI decisions must be reproducible. When something goes wrong, or when regulators ask, companies need to be able to answer questions such as:

- Which model version made the decision?

- What data inputs were used?

- Who approved the implementation?

- What governance controls were applied?

Model registries, version control systems, and decision logs are the basic tools here. Without traceability, companies cannot diagnose failures or demonstrate accountability.
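A decision log entry can capture the answers to the four questions above at the moment a decision is made. The Python sketch below is a minimal example under stated assumptions: the field names, the sample values, and the use of a SHA-256 checksum for tamper detection are illustrative choices, and the inputs are assumed to be JSON-serializable.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List


def _checksum(body: Dict) -> str:
    """Hash a log entry's contents in a stable key order."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def record_decision(model_version: str, inputs: Dict, output: str,
                    approved_by: str, controls_applied: List[str]) -> Dict:
    """Build one decision log entry (hypothetical schema)."""
    entry = {
        "model_version": model_version,        # which model version decided
        "inputs": inputs,                      # what data inputs were used
        "output": output,
        "approved_by": approved_by,            # who approved the deployment
        "controls_applied": controls_applied,  # which governance controls ran
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    entry["checksum"] = _checksum(entry)
    return entry


def verify(entry: Dict) -> bool:
    """Check that an entry has not been altered since it was recorded."""
    body = {key: value for key, value in entry.items() if key != "checksum"}
    return _checksum(body) == entry["checksum"]


entry = record_decision(
    model_version="credit-risk-2.3.1",
    inputs={"income": 52000, "tenure_months": 18},
    output="refer_to_human",
    approved_by="model-risk-committee",
    controls_applied=["bias_review", "pre_deployment_validation"],
)
tampered = dict(entry, output="approve")
```

Here `verify(entry)` succeeds while `verify(tampered)` fails, because the stored checksum no longer matches the altered output. In practice such entries would be written to append-only storage alongside the model registry.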

5. Prepare for failure and repair

AI systems are not error-free. For example, models degrade over time due to data drift, changing external conditions, and adversarial behavior. Enterprises should treat AI failures as operational incidents with corresponding response capabilities: continuous model monitoring, incident response procedures, escalation channels, customer remediation mechanisms, and root cause analysis processes. Organizations that plan for failure are better positioned to contain damage and restore trust when failure occurs.
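Continuous model monitoring often starts with a simple drift metric. The Python sketch below computes the Population Stability Index (PSI), one commonly used measure of how far a live input distribution has moved from its baseline. The equal-width binning, the function name, and the sample data are assumptions for this example; the 0.25 cutoff mentioned afterward is a conventional rule of thumb, not a requirement from any regulation cited here.

```python
import math
from typing import List, Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               bins: int = 10) -> float:
    """PSI between a baseline sample and a live sample, using equal-width bins."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width if the baseline is constant

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not cause log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Made-up data: a uniform baseline and a live sample shifted toward higher values.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
```

An identical sample yields a PSI of zero, while the shifted sample scores well above 0.25, a level many teams treat as a trigger for investigation. Wiring a threshold like this into the escalation channel turns drift from a silent degradation into an operational incident with an owner.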

6. Align accountability with business results

Business leaders should position accountability as a way to protect value. Strict accountability reduces errors and bias, improving the quality of decision-making. It also surfaces failures early, which supports regulatory compliance, strengthens operational resilience, and increases customer confidence in AI-enabled services. EY research shows that companies with formal AI oversight and monitoring mechanisms report greater cost efficiency and revenue growth than companies without a structured framework.

7. Manage third-party and vendor responsibilities

Enterprises may rely on third-party AI technologies such as models, data pipelines, foundation model APIs, and agent platforms, but using external technology does not transfer accountability. Vendor management should include contractual obligations regarding model performance and compliance, transparency requirements for training data and known limitations, audit rights, and defined incident response procedures. This is especially important in generative AI ecosystems, where enterprises routinely rely on third-party foundation models and agent platforms.

Pitfalls to avoid with AI accountability

Despite growing awareness, many companies still experience AI accountability failures, including:

- Lack of management sponsorship. Accountability initiatives without executive or board-level support lack the authority to force change across business units.

- Black box decision making. Models whose output cannot be audited or explained undermine trust, invite regulatory scrutiny, and make remediation difficult.

- Governance only after implementation. Applying controls after a system is in production, rather than integrating accountability throughout the development lifecycle, is less effective and more costly.

- Overlooked vendor dependence. Relying on external AI platforms without sufficient oversight, audit rights, or contractual accountability creates risks that stay hidden until an incident occurs.

- Undefined failure response. Organizations that do not plan for AI failures, including remediation paths and escalation protocols, face greater reputational and financial damage when an incident occurs.

Kashyap Kompella, founder of RPA2AI Research, is an AI industry analyst and advisor to leading companies in the US, Europe, and Asia Pacific. Kashyap is the co-author of three books: “Practical Artificial Intelligence,” “Artificial Intelligence for Lawyers,” and “AI Governance and Regulation.”