Organizations face a difficult tradeoff when adapting AI models to specific business needs: settle for a generic model that produces average results, or take on the complexity and expense of advanced model customization. Traditional approaches force a choice between lower performance on smaller models and the higher cost of deploying larger model variants and managing complex infrastructure. Reinforcement fine-tuning is an advanced technique that trains models using feedback instead of large labeled datasets, but implementing it typically requires specialized ML expertise, complex infrastructure, and significant investment, with no guarantee of achieving the accuracy your use case requires.

Today, we are announcing reinforcement fine-tuning in Amazon Bedrock, a new model customization capability that learns from feedback to create smarter, more cost-effective models that deliver high-quality output for your specific business needs. Reinforcement fine-tuning uses a feedback-driven approach in which the model improves iteratively based on reward signals, delivering an average accuracy improvement of 66% compared to the base model.

Amazon Bedrock automates the reinforcement fine-tuning workflow, making this advanced model customization technique available to everyday developers without the need for deep machine learning (ML) expertise or large labeled datasets.

How reinforcement fine-tuning works

Reinforcement fine-tuning builds on reinforcement learning principles to address a common challenge: getting a model to consistently produce output that aligns with business requirements and user preferences.

While traditional fine-tuning requires large labeled datasets and expensive human annotation, reinforcement fine-tuning takes a different approach. Instead of learning from fixed examples, it uses reward functions to evaluate responses and judge which ones are good for a particular business use case. This teaches the model what a high-quality response looks like without requiring large amounts of pre-labeled training data, making advanced model customization in Amazon Bedrock more accessible and cost-effective.

The benefits of using reinforcement fine-tuning in Amazon Bedrock include:

- Ease of use – Amazon Bedrock automates much of the complexity, making reinforcement fine-tuning more accessible to developers building AI applications. You can train models using existing API invocation logs in Amazon Bedrock or by uploading datasets as training data, eliminating the need for labeled datasets or infrastructure setup.

- Better model performance – Reinforcement fine-tuning improves model accuracy by an average of 66% compared to the base model, so you can optimize for price and performance by training smaller, faster, and more efficient model variants. At launch it works with the Amazon Nova 2 Lite model, improving quality and price performance for your specific business needs. Support for additional models is coming soon.

- Security – Your data remains within the secure AWS environment throughout the customization process, reducing security and compliance concerns.

This feature supports two complementary approaches that provide flexibility for optimizing your model.

- Reinforcement learning with verifiable rewards (RLVR) – Uses rule-based scoring for objective tasks such as code generation and mathematical reasoning.

- Reinforcement learning from AI feedback (RLAIF) – Uses AI-based judges for subjective tasks such as instruction following and content moderation.
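To make the idea of rule-based scoring for an objective task more concrete, here is a minimal Python sketch of a verifiable reward for a math question. It is only an illustration: the function names, the "last number in the response" rule, and the 0.0/1.0 scale are assumptions made for this example and are not taken from Amazon Bedrock.

```python
import re
from typing import Optional


def extract_final_number(text: str) -> Optional[float]:
    """Return the last number that appears in the text, or None if there is none."""
    # Drop thousands separators so "1,000" is read as 1000.
    matches = re.findall(r"-?\d+(?:\.\d+)?", text.replace(",", ""))
    return float(matches[-1]) if matches else None


def verifiable_reward(response: str, reference: float, tol: float = 1e-6) -> float:
    """Return 1.0 when the final number in the response matches the reference, else 0.0."""
    answer = extract_final_number(response)
    if answer is None:
        return 0.0
    return 1.0 if abs(answer - reference) <= tol else 0.0
```

Because the check is a deterministic rule, the same response always earns the same reward. That property is what makes a task "verifiable" in this sense; a subjective task such as tone or helpfulness has no comparable rule, which is where an AI-based judge comes in.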

Get started with reinforcement fine-tuning in Amazon Bedrock

Let’s take a look at the steps to create a reinforcement fine-tuning job.

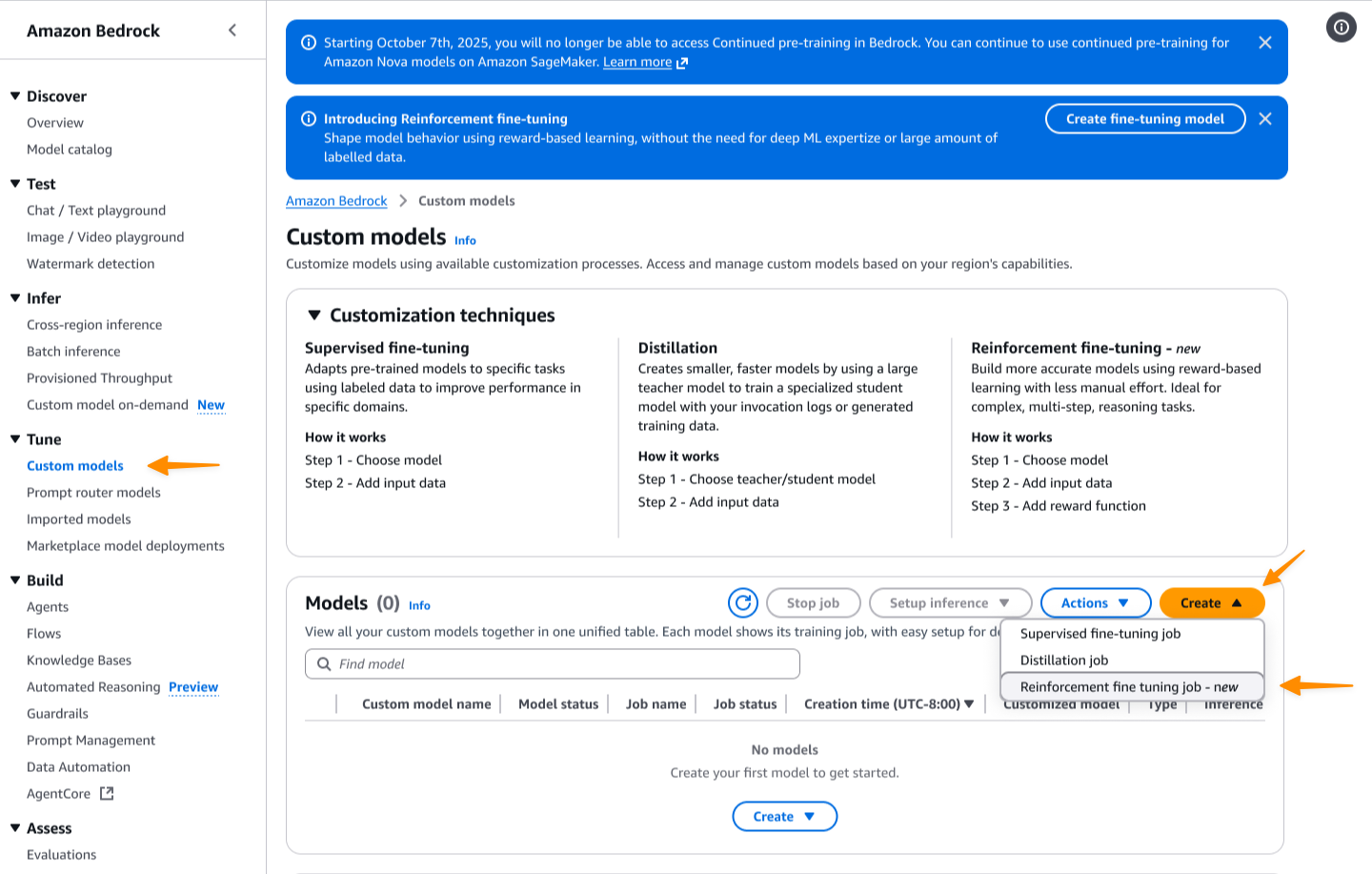

First, I access the Amazon Bedrock console. Then, I navigate to the Custom models page. I choose Create and then select Reinforcement fine-tuning.

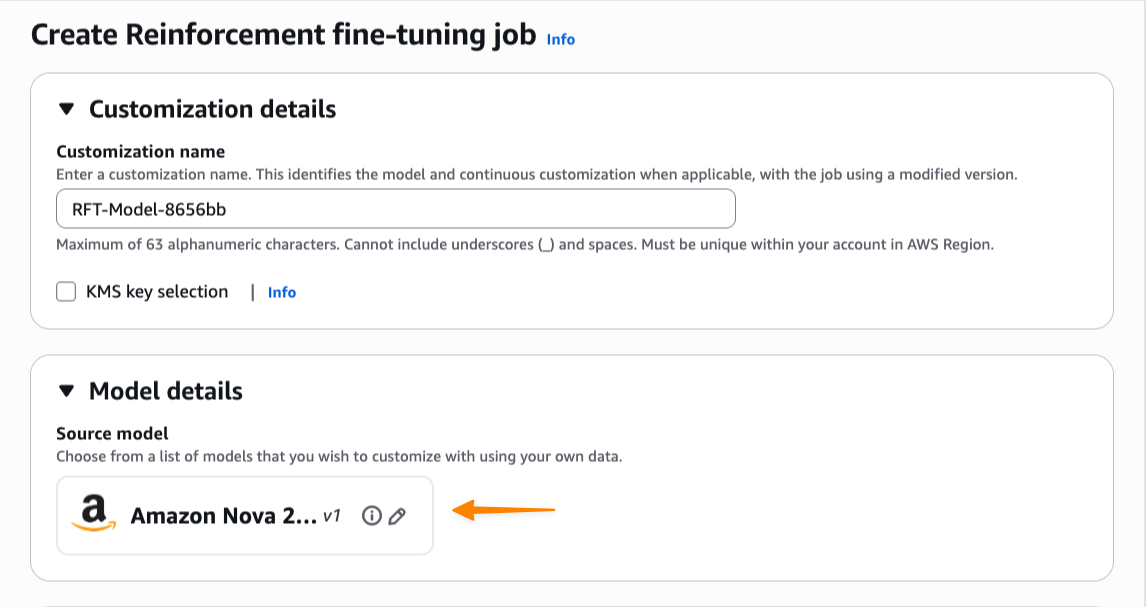

Enter a name for this customization job, then select a base model. At launch, reinforcement fine-tuning supports Amazon Nova 2 Lite, with additional models coming soon.

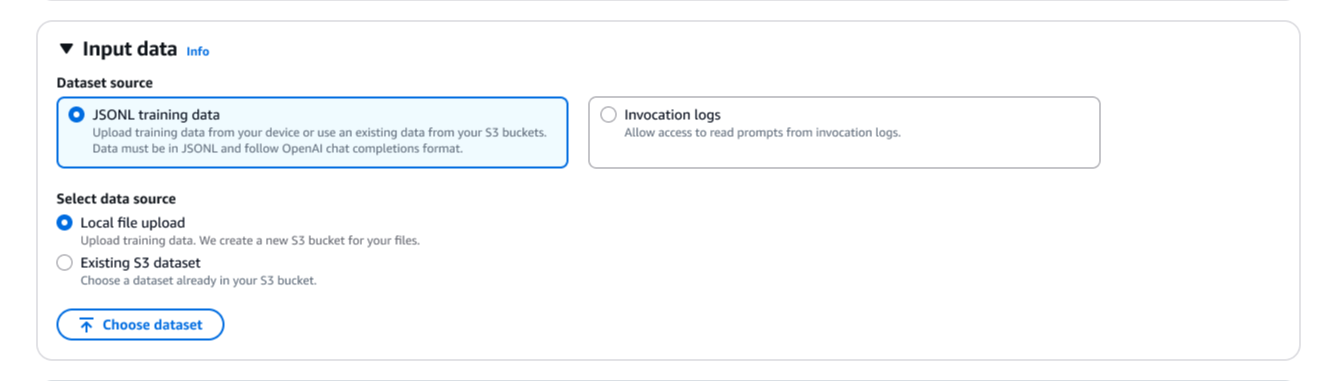

Next, you need to provide training data. You can use stored invocation logs directly, eliminating the need to upload separate datasets. You can also upload a new JSONL file or choose an existing dataset from Amazon Simple Storage Service (Amazon S3). Reinforcement fine-tuning automatically validates your training dataset and supports the OpenAI Chat Completions data format. If you provide invocation logs in the Amazon Bedrock invoke or converse format, Amazon Bedrock automatically converts them to the Chat Completions format.
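For a sense of what a Chat Completions style JSONL record looks like, the following Python sketch flattens converse-style messages (where `content` is a list of blocks) into Chat Completions style messages (where `content` is a string) and serializes one record per line. This is not the conversion Amazon Bedrock performs internally. The helper names are hypothetical, and any extra fields a reinforcement fine-tuning dataset may need (for example, reference answers) are not covered here, so check the documentation for the exact schema.

```python
import json
from typing import Any


def converse_to_chat_message(message: dict[str, Any]) -> dict[str, str]:
    """Flatten one converse-style message into a Chat Completions style message."""
    # Converse-style content is a list of blocks; this sketch keeps only text blocks.
    text_parts = [block["text"] for block in message["content"] if "text" in block]
    return {"role": message["role"], "content": "\n".join(text_parts)}


def to_jsonl_line(system_prompt: str, converse_messages: list[dict[str, Any]]) -> str:
    """Build one JSONL training record with a top-level "messages" list."""
    messages = [{"role": "system", "content": system_prompt}]
    messages += [converse_to_chat_message(m) for m in converse_messages]
    # json.dumps escapes newlines, so each record stays on a single line.
    return json.dumps({"messages": messages})
```

Writing one `to_jsonl_line(...)` result per line of a file produces a JSONL dataset that can be uploaded directly or placed in Amazon S3.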

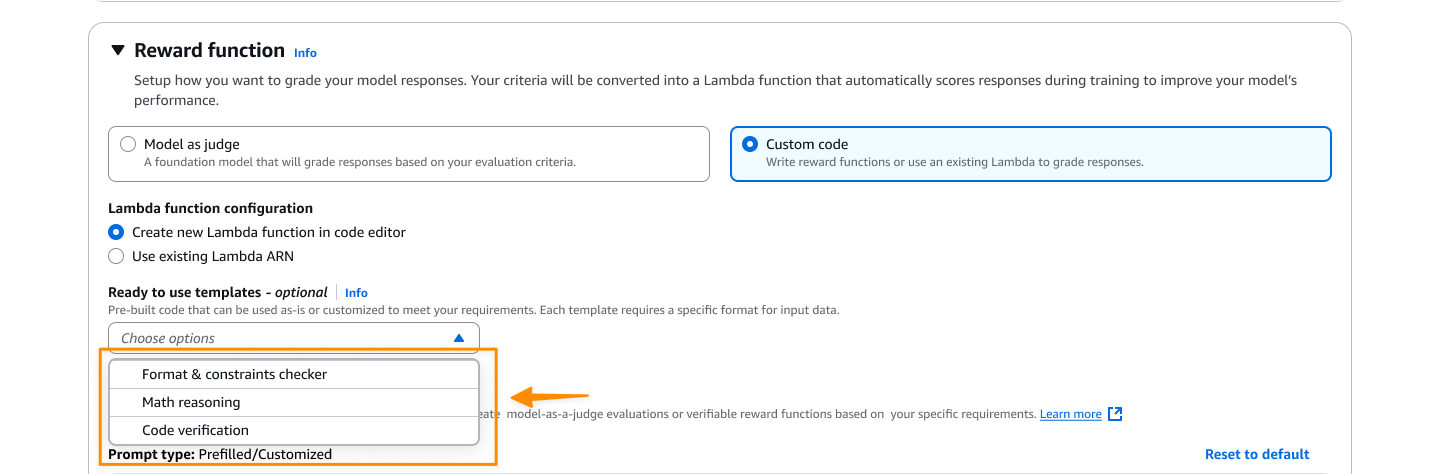

Next, you set up a reward function that defines what constitutes a good response. There are two options. For objective tasks, you can select Custom code and write custom Python code that runs through an AWS Lambda function. For more subjective evaluations, you can select Model as judge to use a foundation model (FM) as a judge by providing evaluation instructions.

Here, I select Custom code, and I create a new Lambda function or use an existing one as the reward function. I can start with one of the provided templates and customize it for my specific needs.
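A custom-code reward function might look roughly like the Python sketch below. The `lambda_handler(event, context)` entry point is the standard AWS Lambda convention for Python, but everything else is an assumption made for illustration: the `samples`, `id`, `response`, and `rewards` field names are hypothetical, as is the ticket-triage task. The templates provided in the console define the real input and output contract.

```python
import json
from typing import Any

# Hypothetical fields that a ticket-triage response is expected to contain.
REQUIRED_KEYS = {"category", "priority"}


def score_response(response_text: str) -> float:
    """Score 1.0 for a JSON object with all required keys, 0.5 if keys are missing, else 0.0."""
    try:
        parsed = json.loads(response_text)
    except json.JSONDecodeError:
        return 0.0
    if not isinstance(parsed, dict):
        return 0.0
    return 1.0 if REQUIRED_KEYS.issubset(parsed) else 0.5


def lambda_handler(event: dict[str, Any], context: Any) -> dict[str, Any]:
    """Score every sample in the event and return one reward per sample."""
    # ASSUMPTION: the event carries a "samples" list whose items have "id" and "response".
    results = [
        {"id": sample["id"], "reward": score_response(sample["response"])}
        for sample in event.get("samples", [])
    ]
    return {"rewards": results}
```

Giving partial credit (0.5) for well-formed JSON that is missing fields is a design choice: a graded signal can guide the model toward the right format before it gets the content right, whereas an all-or-nothing score gives it less to learn from early in training.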

You can change the default hyperparameters such as learning rate, batch size, and epochs as needed.

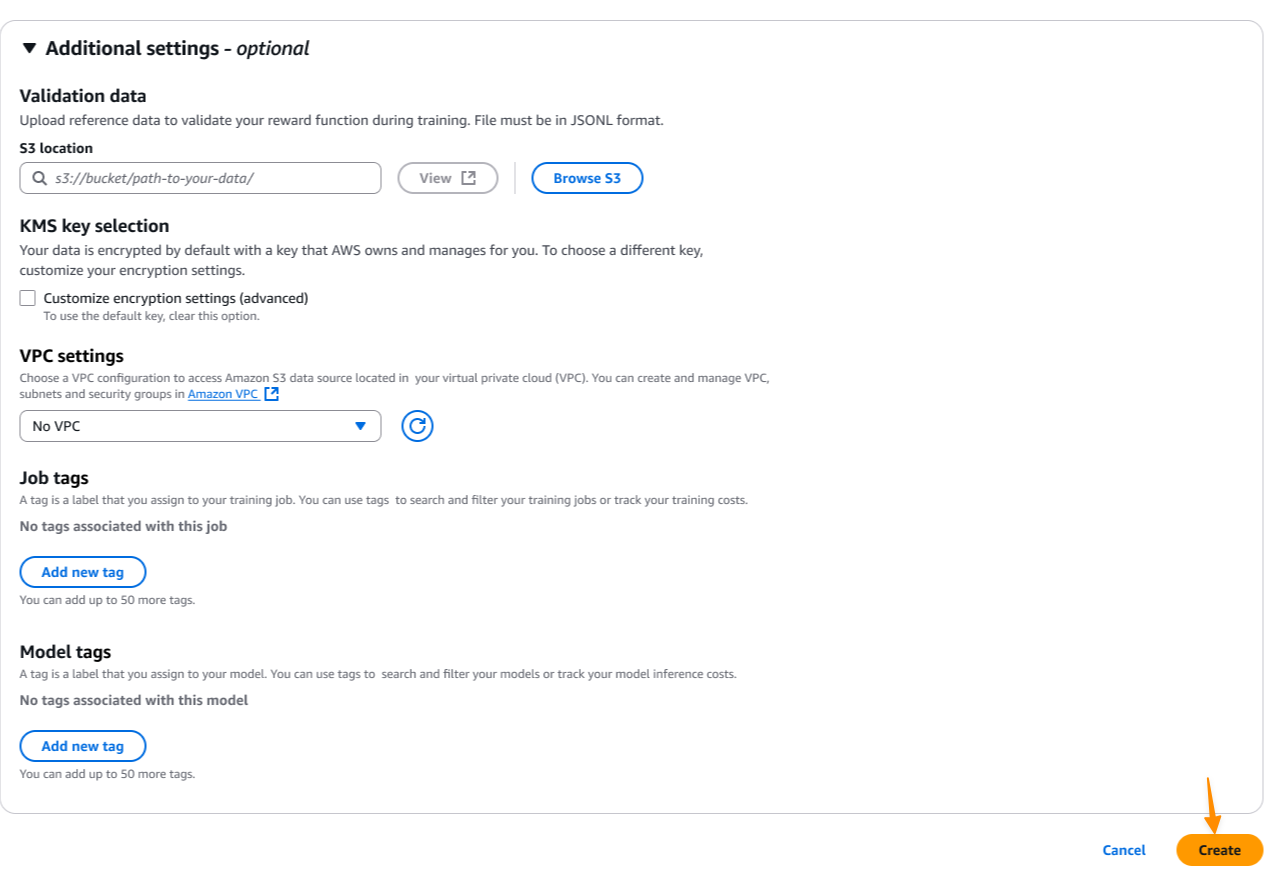

To improve security, you can configure your virtual private cloud (VPC) settings and AWS Key Management Service (AWS KMS) encryption to meet your organization’s compliance requirements. Then, I choose Create to start the model customization job.

During the training process, you can monitor real-time metrics to understand how your model is learning. The training metrics dashboard shows key performance metrics such as reward scores, loss curves, and accuracy improvement over time. These metrics help you understand whether your model is converging properly and whether your reward function is effectively guiding the learning process.
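One simple way to reason about convergence is to smooth the reward scores and check whether they are still rising. The Python sketch below assumes you have copied per-step reward scores from the dashboard into a list; the function names, window size, and `min_gain` threshold are all hypothetical choices for this example, not part of Amazon Bedrock.

```python
from statistics import mean


def moving_average(values: list[float], window: int) -> list[float]:
    """Return the trailing moving average of values over the given window size."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        mean(values[i - window + 1 : i + 1])
        for i in range(window - 1, len(values))
    ]


def has_plateaued(rewards: list[float], window: int = 3, min_gain: float = 0.01) -> bool:
    """Return True when the smoothed reward rose by less than min_gain at the last step."""
    smoothed = moving_average(rewards, window)
    if len(smoothed) < 2:
        return False  # Not enough data points to compare.
    return smoothed[-1] - smoothed[-2] < min_gain
```

A plateau at a high reward suggests the model has converged. A plateau at a low reward, or a reward that climbs while output quality does not, is a hint that the reward function itself may need another look.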

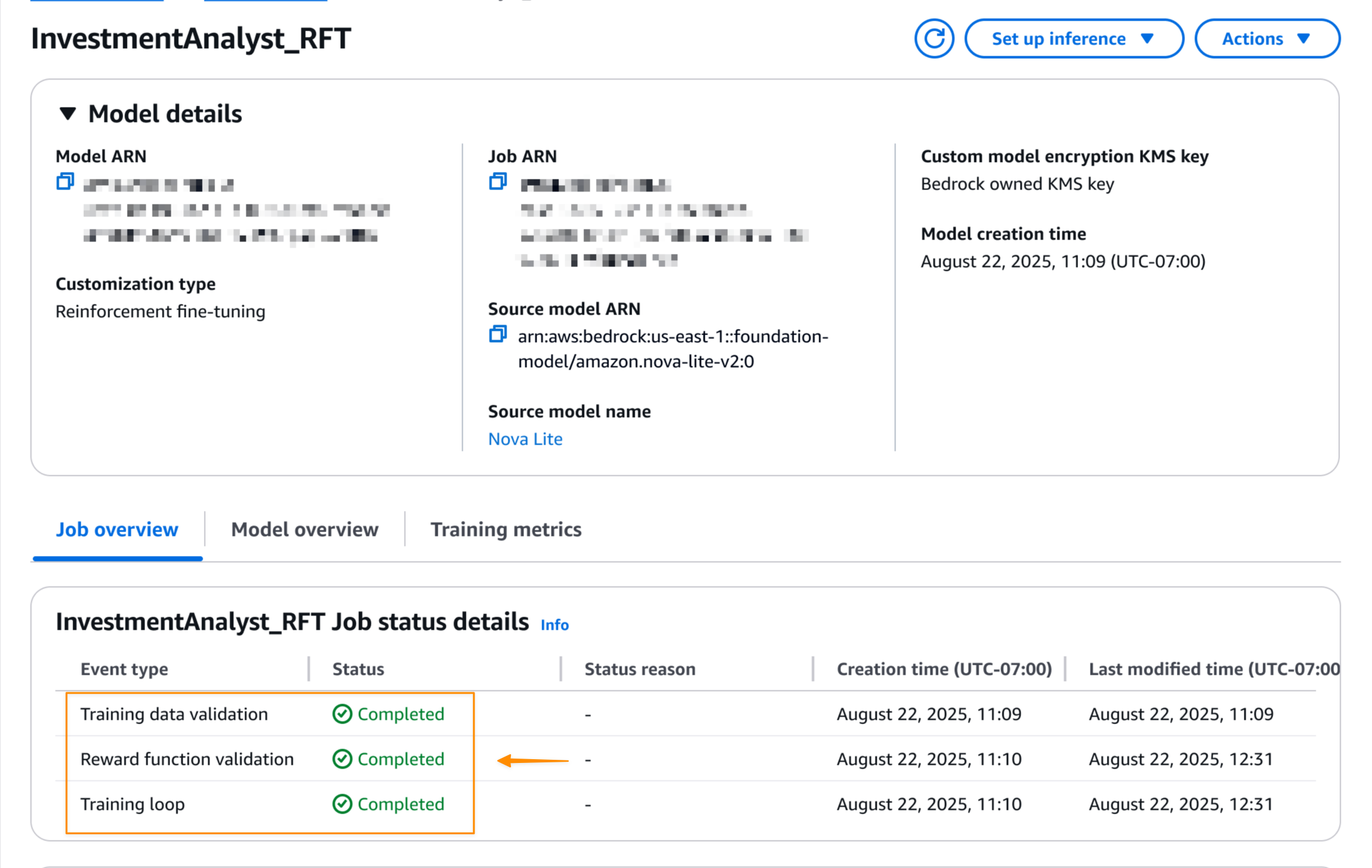

Once the reinforcement fine-tuning job is complete, you can see the final job status on the Model details page.

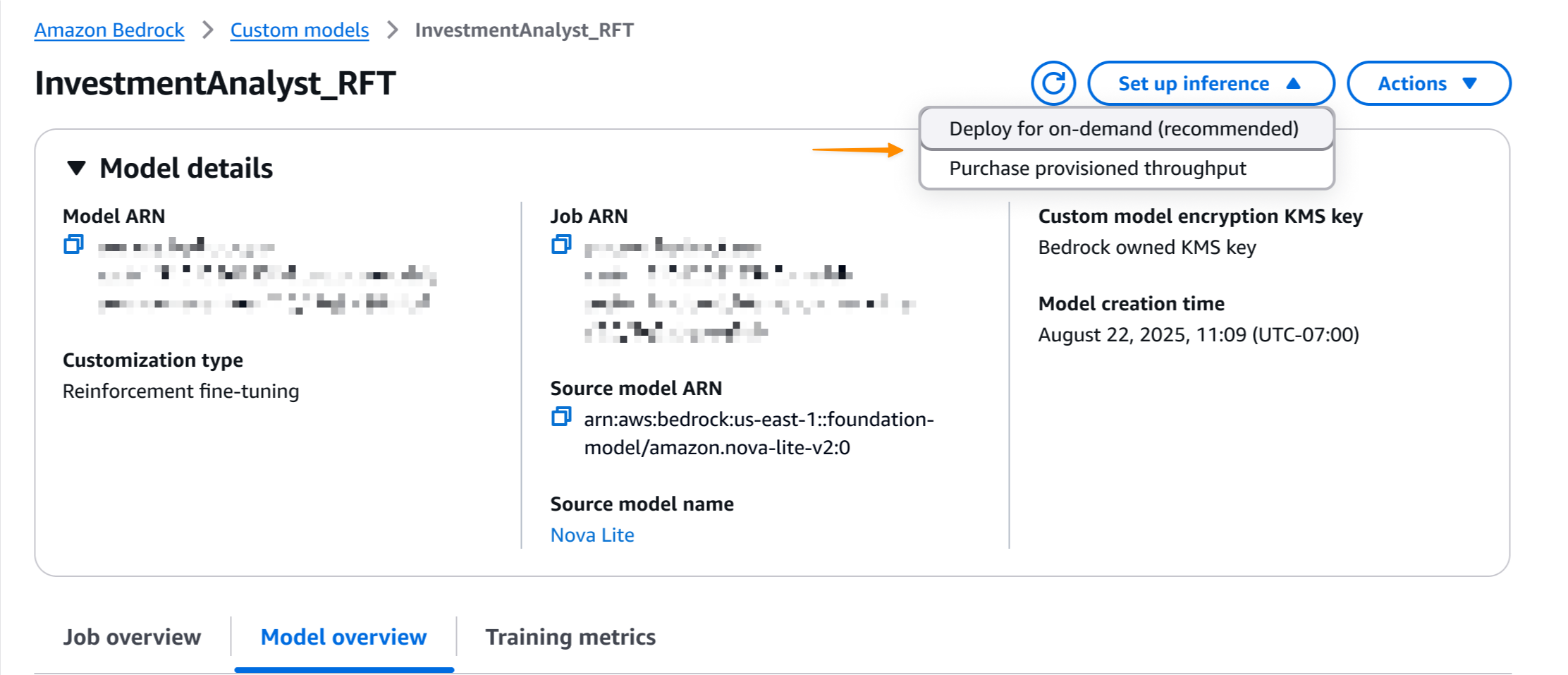

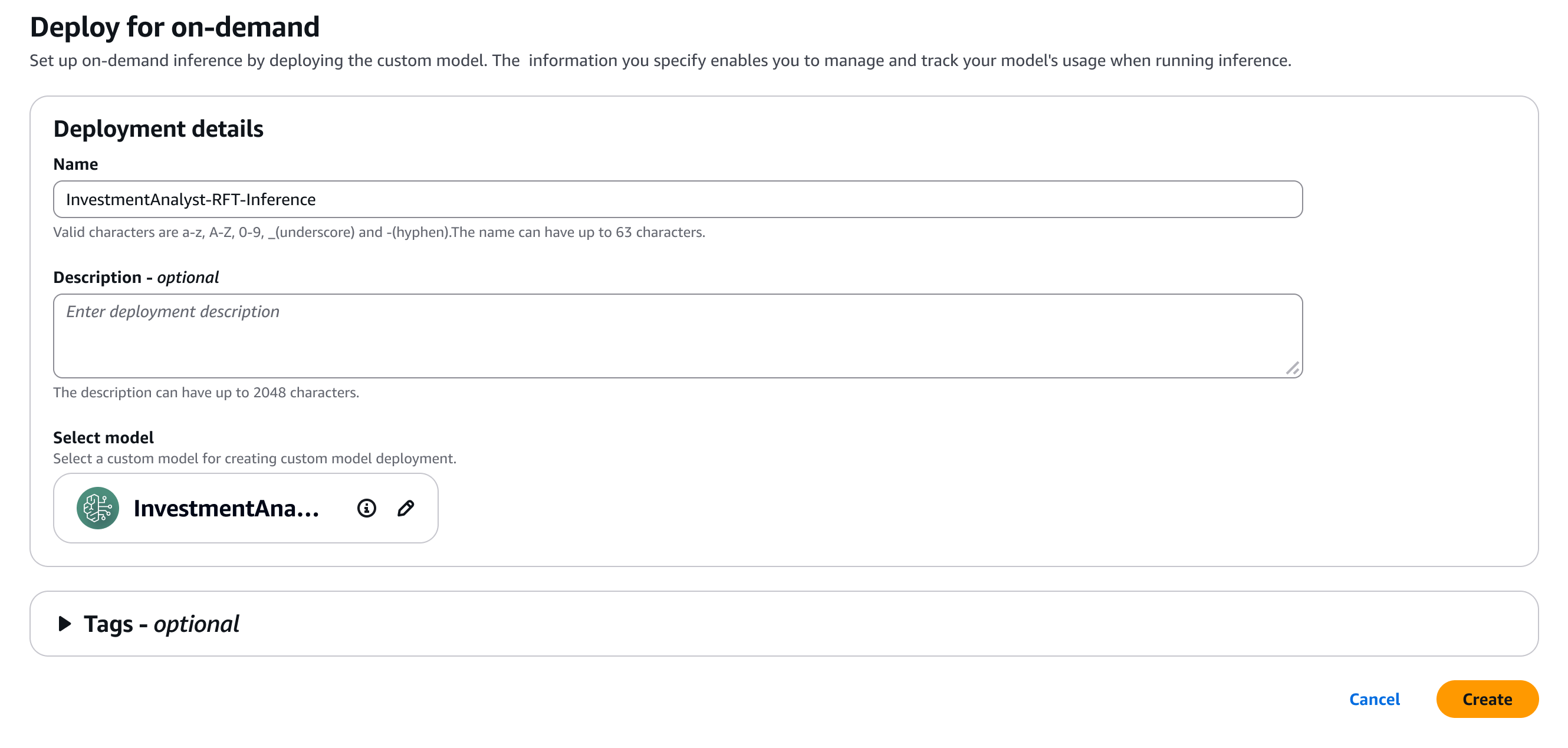

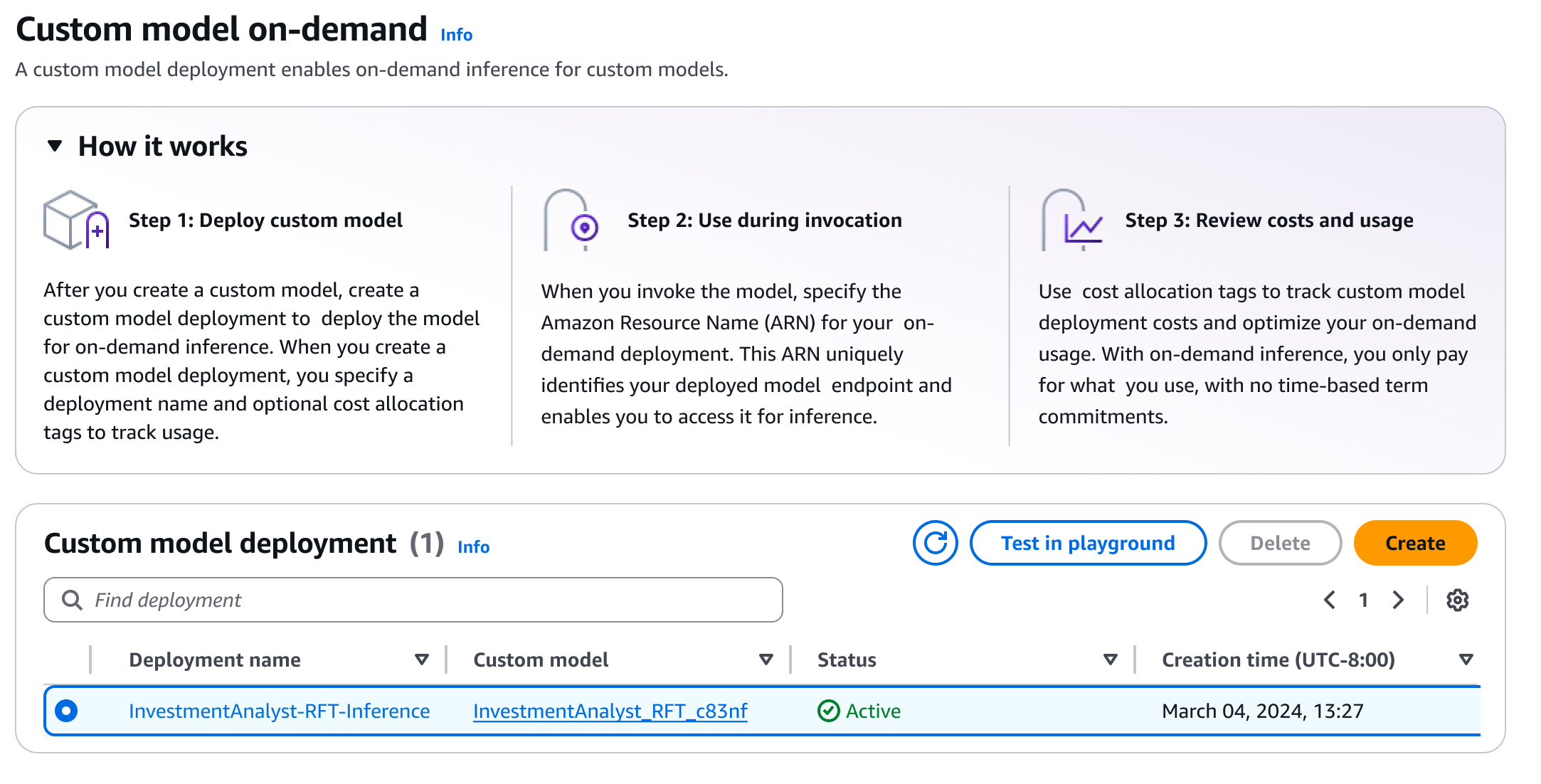

Once the job is complete, you can deploy your model with one click. I choose Set up inference, then select Deploy on demand.

Here are some details about my model.

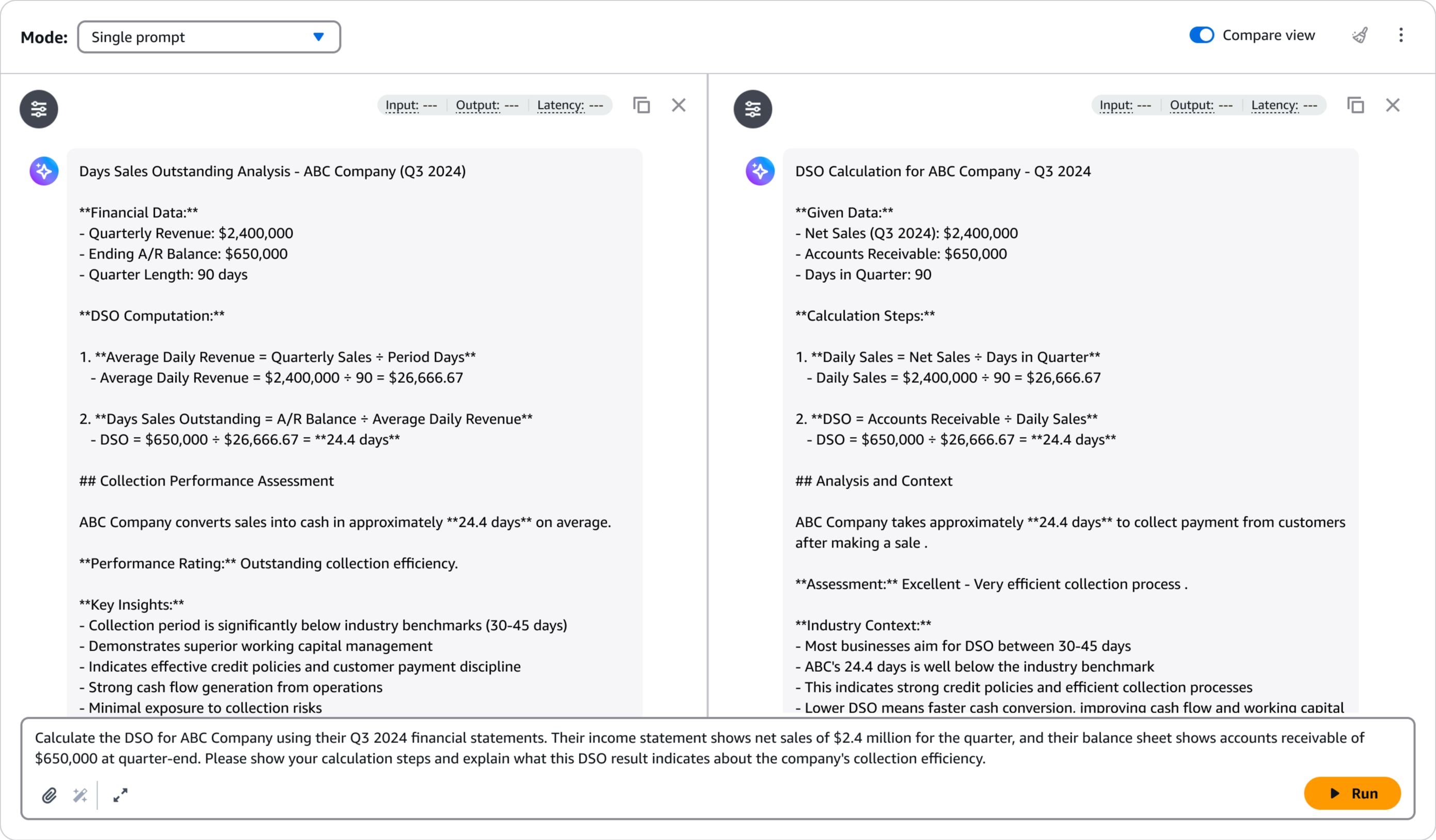

After deployment, you can immediately evaluate the performance of your model using the Amazon Bedrock playground. This helps you test your fine-tuned model with sample prompts and compare its responses to the base model to verify improvements. I choose Test in playground.
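The same side-by-side comparison can also be scripted. The Python sketch below sends each prompt to two models through the Amazon Bedrock Runtime Converse API and collects both replies. The helper names are hypothetical, and the sketch assumes the fine-tuned model's on-demand deployment can be addressed through `modelId` in the same way as the base model.

```python
from typing import Any


def ask(client: Any, model_id: str, prompt: str) -> str:
    """Send one user prompt through the Converse API and return the text reply."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    return response["output"]["message"]["content"][0]["text"]


def compare(
    client: Any, base_id: str, tuned_id: str, prompts: list[str]
) -> list[dict[str, str]]:
    """Collect base and fine-tuned replies for each prompt so they can be reviewed together."""
    return [
        {
            "prompt": prompt,
            "base": ask(client, base_id, prompt),
            "tuned": ask(client, tuned_id, prompt),
        }
        for prompt in prompts
    ]
```

With AWS credentials configured, `client` would be `boto3.client("bedrock-runtime")`, and `base_id` and `tuned_id` would be your own model identifiers. The client is passed in as a parameter so the helpers can be exercised with a stub before any real calls are made.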

The playground provides an intuitive interface for rapid testing and iteration, helping you confirm that the model meets your quality requirements before you integrate it into production applications.

Interactive demo

Learn more by navigating through a hands-on, interactive demo of reinforcement fine-tuning in Amazon Bedrock.

Things to know

Here are the key points to note:

- Templates – There are seven ready-to-use reward function templates covering common use cases for both objective and subjective tasks.

- Pricing – For pricing details, see the Amazon Bedrock pricing page.

- Security – Training data and custom models remain private and are not used to improve FMs for public use. Reinforcement fine-tuning supports VPC and AWS KMS encryption for added security.

Visit the reinforcement fine-tuning documentation and access the Amazon Bedrock console to get started with reinforcement fine-tuning.

Happy building!

— Donnie