A traditional operating system takes instructions. An AI operating system takes objectives. Personal Computer gives Perplexity Computer and the Comet Assistant always-on, local access to your machine’s files, apps, and sessions through a compact desktop machine that runs continuously. It’s a persistent digital proxy of you.

Perplexity announced Personal Computer, a local/cloud hybrid AI agent that runs on a dedicated Mac Mini and executes autonomous tasks continuously. Positioned as a persistent project manager, it can orchestrate workflows across 20+ specialized models and hundreds of connected apps to carry out complex commands remotely. The system connects local files on the Mac Mini, browser sessions, and third-party apps such as Slack, Notion, and Gmail, so it can keep managing workflows after the user steps away. It is available via a waitlist to users on Perplexity’s paid Pro plans.

Perplexity also launched Computer for Enterprise and Computer on Slack. Perplexity is positioning this as a more secure, cloud-based alternative to computer agents like OpenClaw, putting it in competition with other general AI agent providers such as Manus. Macworld notes that under the hood it is a Mac Mini running an AI agent: Perplexity is packaging its assistant as a dedicated hardware-software product, though hackers and hobbyists can still roll their own.

Nvidia announced Nemotron 3 Super, an open-weight model aimed at agentic AI workloads. It uses a hybrid Mamba-Transformer mixture-of-experts (MoE) architecture with 120B total parameters, of which 12B are active per token. It features a 1-million-token context window and, by integrating native multi-token prediction (MTP), achieves 7x higher throughput than its predecessor. Its benchmark results are comparable to gpt-oss-120b and Qwen 3.5 122B, but it is much faster, with significantly lower inference latency. The weights are on Hugging Face, and datasets and fine-tuning recipes are on GitHub.
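Because Nemotron 3 Super is a mixture-of-experts model, each token is processed by only a few experts, which is how 12B of its 120B parameters (10%) can be active at once. The sketch below shows generic top-k expert routing in plain Python; the eight scalar experts, the router scores, and k=2 are illustrative assumptions, not Nvidia’s actual router or expert configuration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_logits, experts, k=2):
    """Route one token's input x to the top-k experts and mix their outputs.

    router_logits: one score per expert (a learned router would produce these).
    experts: list of callables; only the k selected experts are evaluated.
    Returns (output, indices of the experts that ran).
    """
    # Pick the k highest-scoring experts; ties keep the lower index first.
    top = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Renormalize the gate weights over the selected experts only.
    gates = softmax([router_logits[i] for i in top])
    # Weighted sum of the selected experts' outputs; the other experts never run,
    # which is why only a fraction of total parameters is "active" per token.
    out = sum(g * experts[i](x) for g, i in zip(gates, top))
    return out, top


# Toy setup: 8 scalar "experts", each of which just scales its input.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
logits = [0.1, 0.0, 2.0, 2.0, -1.0, 0.3, 0.0, 0.2]
y, used = moe_forward(2.0, logits, experts, k=2)
print(used, y)  # prints [2, 3] 7.0
```

Here 2 of 8 experts run per token; a real MoE layer applies the same idea to large feed-forward blocks, so compute scales with active rather than total parameters.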

Google introduced Gemini Embedding 2, its first natively multimodal embedding model, which maps text, images, video, audio, and documents into a single unified semantic vector space. Built on Gemini, the model natively understands interleaved multimodal input, and because it is trained with Matryoshka Representation Learning (MRL), its vectors can be truncated to fewer dimensions to reduce storage while maintaining high retrieval accuracy. Gemini Embedding 2 is offered as part of the Gemini API’s embedding stack. It supports text inputs of up to 8,192 tokens, video clips up to 120 seconds, and PDFs up to six pages, streamlining multimodal RAG (retrieval-augmented generation) and similarity workflows.
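Matryoshka-style truncation is simple to apply on the client side: keep a prefix of the vector and re-normalize before comparing. The minimal sketch below assumes you already have embeddings as lists of floats; the 6-dimensional vectors are hypothetical stand-ins for real Gemini Embedding 2 output, no Gemini API call is shown, and re-normalizing after truncation is an assumption worth checking against Google’s documentation.

```python
import math


def normalize(v):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def truncate(v, dims):
    """Keep the leading `dims` coordinates of an MRL-style embedding and re-normalize."""
    return normalize(v[:dims])


def cosine(a, b):
    """Cosine similarity of two unit-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def search(query, docs, dims):
    """Rank document indices by similarity using only the first `dims` dimensions."""
    q = truncate(query, dims)
    scores = [cosine(q, truncate(d, dims)) for d in docs]
    return sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)


# Hypothetical 6-dimensional embeddings standing in for real model output.
query = [0.9, 0.1, 0.3, 0.0, 0.05, 0.02]
docs = [
    [0.8, 0.2, 0.25, 0.1, 0.0, 0.01],  # similar to the query
    [-0.1, 0.9, -0.3, 0.2, 0.1, 0.0],  # dissimilar
]
print(search(query, docs, dims=6))  # full-size vectors: [0, 1]
print(search(query, docs, dims=2))  # a third of the storage: [0, 1]
```

Truncating to two of six dimensions keeps the same ranking in this toy case; with real MRL embeddings, how much accuracy survives depends on how aggressively you truncate.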

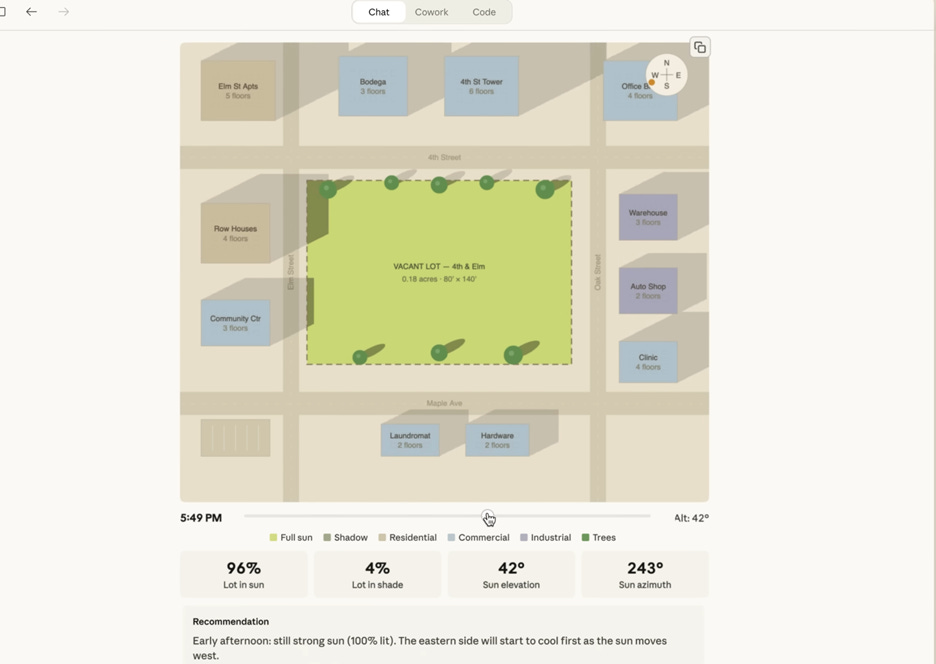

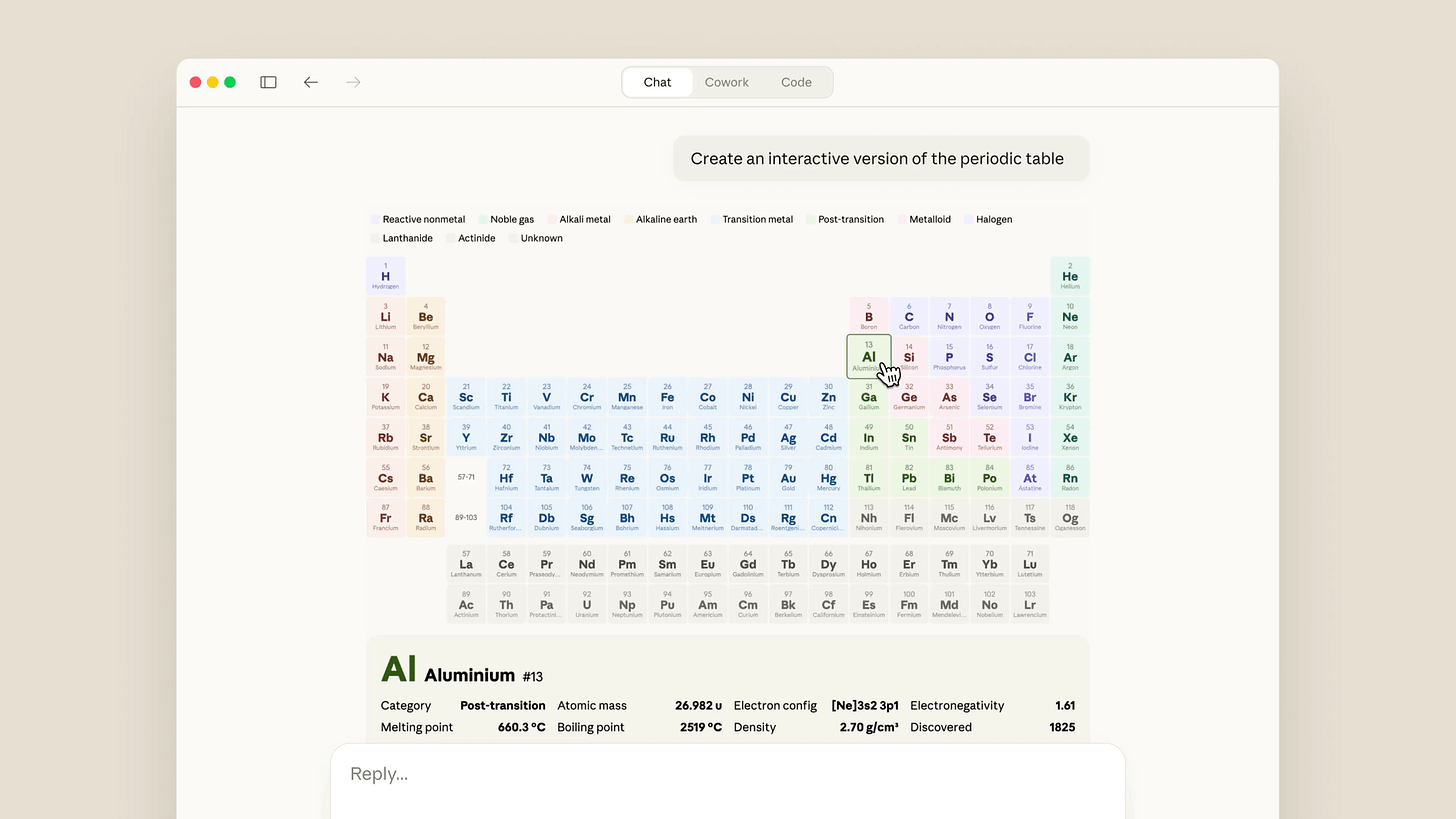

Anthropic added generative visualizations to Claude, enabling the model to create interactive charts, diagrams, and visualizations directly inside the Claude chat interface. The visualizations appear in a collaborative workspace where users can adjust data variables and watch charts or graphs update in real time. The feature is available on all Claude plans.

OpenAI introduced Interactive Learning, a ChatGPT feature that uses dynamic, animated visual simulations to help students understand math and science concepts. The visualizations are predefined: OpenAI is starting with more than 70 core concepts, such as the Pythagorean theorem and the ideal gas law. The feature is rolling out to all logged-in ChatGPT users, and more visualizations will be added over time.

Fish Audio launched S2, an open text-to-speech model focused on low latency and controllable emotional expression. Trained on 10 million hours of audio across 50 languages, S2 achieves sub-150-millisecond latency and offers fine-grained control over speech output. The 5B-parameter S2-pro model is available to run locally as part of a speech-generation stack.

Microsoft launched Copilot Health, a separate, secure area within Copilot for personalized health insights. Microsoft said it can combine data from wearables, records from more than 50,000 U.S. hospitals and provider organizations, and lab results. To avoid regulatory backlash, Microsoft pitches Copilot Health as a tool to help people prepare for doctor visits rather than a replacement for medical care.

Google Maps added Gemini-powered Ask Maps and Immersive Navigation features. Ask Maps lets users ask complex real-world questions conversationally and receive personalized answers with a map, while Immersive Navigation adds AI assistance around trip planning and understanding places before arrival.

MiroMind released MiroThinker-1.7 and its related H1 research-agent line as open models focused on long-horizon reasoning and deep multi-step research tasks. MiroThinker-1.7 is a fine-tune of the Qwen3-235B model; it supports a 256K context window, up to 300 tool calls, and targets deep-research benchmarks. It is available via Hugging Face.

OpenAI’s Sora video generator is expected to be integrated into ChatGPT, turning Sora from a separate, standalone creative tool into a more directly accessible feature of OpenAI’s main chatbot product.

Nvidia is set to announce NemoClaw at its GTC conference next week. The NemoClaw AI Agent Platform is designed as a fully open platform for businesses to deploy AI agents that can operate physical and virtual computer systems. NemoClaw is optimized for heterogeneous corporate hardware and maintains strict privacy by running within local enterprise infrastructure.

Meta delayed its Avocado AI model after internal tests showed performance shortfalls. A Reuters follow-up said the model’s release now appears to have slipped to May or June, and that its performance falls between Google’s Gemini 2.5 and Gemini 3. This is a development setback for Meta’s AI efforts.

OpenAI upgraded the Sora 2 Video API with custom characters, clips up to 20 seconds, and batch jobs. These changes expand what developers can generate and automate through Sora’s API workflows.

OpenAI added a computer environment to the Responses API, including a shell tool and a hosted container workspace. OpenAI said this lets models propose commands while the platform executes them in an isolated environment with files, optional structured storage, and restricted network access. The release is an important step toward supporting more capable AI computer agents.
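The division of labor described here (the model proposes, the platform executes) can be illustrated with a toy executor. This is a hypothetical sketch, not OpenAI’s API: the `execute_proposed` function, the allowlist, and the result dictionary are assumptions, and a temporary directory plus a command allowlist is far weaker than the container isolation and network restrictions OpenAI describes.

```python
import shlex
import subprocess
import tempfile

# Hypothetical allowlist; a real sandbox would rely on container isolation instead.
ALLOWED_PROGRAMS = {"echo", "ls", "cat"}


def execute_proposed(command, workdir, timeout=10.0):
    """Run a model-proposed command inside `workdir` and return a result dict for the model."""
    argv = shlex.split(command)
    if not argv or argv[0] not in ALLOWED_PROGRAMS:
        return {"ok": False, "stdout": "", "stderr": f"not allowed: {command!r}"}
    try:
        # No shell=True: the string is never interpreted by a shell, so ';' and pipes can't chain commands.
        proc = subprocess.run(argv, cwd=workdir, capture_output=True, text=True, timeout=timeout)
    except (subprocess.TimeoutExpired, FileNotFoundError) as exc:
        return {"ok": False, "stdout": "", "stderr": str(exc)}
    return {"ok": proc.returncode == 0, "stdout": proc.stdout, "stderr": proc.stderr}


# Proposals would normally come from the model; these are hard-coded stand-ins.
proposals = ["echo hello from the sandbox", "curl https://example.com"]
with tempfile.TemporaryDirectory() as workspace:
    for cmd in proposals:
        print(cmd, "->", execute_proposed(cmd, workspace))
```

Returning structured results (exit status, stdout, stderr) lets the model read what happened and propose its next command.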

Meta launched new AI-based anti-scam tools across WhatsApp, Facebook, and Messenger to identify suspicious activity and protect users. The company highlighted device-linking warnings, suspicious friend-request alerts, and large-scale scam-ad removals.

Adobe launched AI Assistant for Photoshop in beta on web and mobile, enabling prompt-based image editing. Users describe the changes they want, such as adding background elements or adjusting lighting, and the assistant applies the edits directly within the project. The tool uses Adobe’s Firefly generative models for image synthesis.

Anthropic announced that Claude Opus 4.6 and Sonnet 4.6 now support a 1 million token context window at standard pricing. The company said there is no long-context premium, and media limits were expanded to as many as 600 images or PDF pages per request.

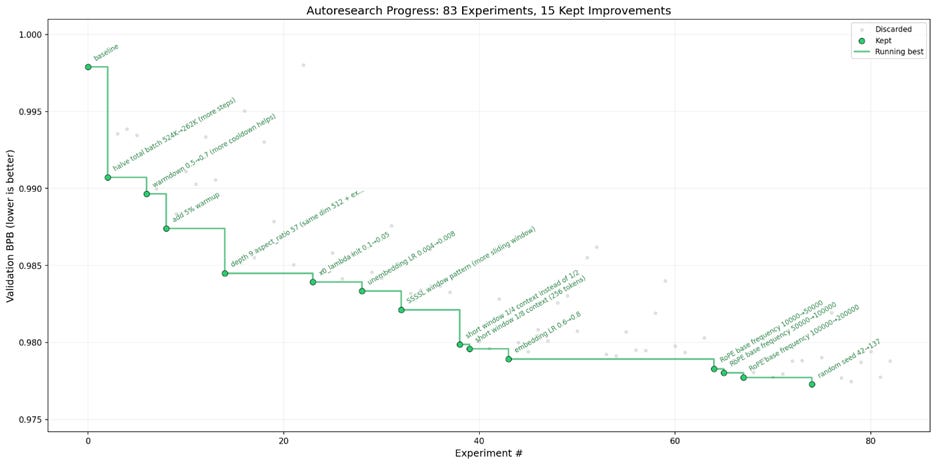

Andrej Karpathy open-sourced AutoResearch, a framework for running autonomous research loops on coding and training tasks. AutoResearch lets AI agents propose changes, run experiments, evaluate results, and keep iterating without constant human intervention. Karpathy used it to optimize GPT-2 training and said the loop found stacked improvements that cut training time by 11%. It is built on a simple iterative feedback loop, similar to the “Ralph Wiggum” loop used with Claude Code, yet achieved strong results with little human effort; this combination of simplicity and power sparked viral discussion online.
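The propose, run, evaluate, keep-if-better cycle behind AutoResearch can be sketched as a greedy loop. This is not Karpathy’s code: in AutoResearch the proposal step is an AI agent editing real code and evaluation is an actual training run, whereas here `propose` and `evaluate` are hypothetical toy callables that tune a single `lr` value against a quadratic.

```python
import random


def research_loop(baseline, propose, evaluate, budget=50):
    """Greedy propose -> run -> evaluate -> keep-if-better loop.

    baseline: starting configuration (dict).
    propose: callable returning a modified copy of a configuration.
    evaluate: callable that runs the "experiment" and returns a score (lower is better).
    """
    best, best_score = dict(baseline), evaluate(baseline)
    history = []
    for step in range(budget):
        candidate = propose(best)
        score = evaluate(candidate)
        accepted = score < best_score
        if accepted:
            # Accepted changes become the new baseline, so improvements stack.
            best, best_score = candidate, score
        history.append((step, score, accepted))
    return best, best_score, history


# Toy stand-ins: the "experiment" is a quadratic whose minimum is at lr = 0.3.
def toy_evaluate(cfg):
    return (cfg["lr"] - 0.3) ** 2


def make_proposer(seed):
    rng = random.Random(seed)  # seeded so runs are reproducible

    def propose(cfg):
        new = dict(cfg)
        new["lr"] = cfg["lr"] + rng.uniform(-0.05, 0.05)
        return new

    return propose


best, score, history = research_loop({"lr": 0.1}, make_proposer(0), toy_evaluate)
print(best, score, sum(accepted for _, _, accepted in history))
```

Because each accepted change becomes the new baseline, small wins compound, which mirrors the stacked improvements Karpathy reported.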

Google Research introduced Groundsource, an AI system that uses public reports and Google Maps data to build historical flood datasets and forecast urban flash floods. Its AI-based method analyzes historical flood reporting to build a dataset of weather and flood patterns; by combining real-time news data with weather patterns, the system aims to provide up to 24 hours of advance notice for urban flash floods around the globe. The forecasts are available in Flood Hub.

Newly released Covenant-72B is a 72B-parameter open-weight model pretrained in a permissionless, globally distributed run. The paper “Covenant-72B: Pre-Training a 72B LLM with Trustless Peers Over-the-Internet” describes the effort: coordination ran through Bittensor, and a communication-efficient optimizer made the distributed pretraining run practical. The model performs competitively with models trained in a centralized manner, demonstrating the feasibility of distributed AI model training.

AI pioneer Yann LeCun launched Advanced Machine Intelligence (AMI) Labs with a record-breaking $1.03 billion seed round at a $3.5 billion valuation. The startup focuses on building “world models” that understand physical reality and causality, a technical bet on Joint Embedding Predictive Architecture (JEPA) and a departure from text-centric next-token prediction models. The founding team includes prominent researchers from Meta and Google DeepMind.

Meta acquired Moltbook, the Reddit-like social network where AI agents interact, post, and organize without human intervention. The acquisition is seen as a strategic move to secure an always-on agent directory, a registry where autonomous agents can verify their identities and coordinate complex tasks.

YouTube has expanded its likeness detection tools to help public figures monitor and flag AI-generated deepfakes of themselves. The tool allows public figures (politicians, government officials, and journalists) to track where their likeness is being used without consent, providing a defense against misinformation campaigns.

Anthropic has sued the Trump administration following a Department of Defense (DoD) decision to designate the company a “supply chain risk,” effectively banning its models from defense contracts. The dispute arose after Anthropic refused to remove contractual restrictions on domestic mass surveillance and the development of autonomous lethal weapons systems. Legal reporting suggests Anthropic may have a strong case, one that bears on whether AI developers can maintain their own usage restrictions while working with national security agencies.

Nvidia and Mira Murati’s Thinking Machines Lab announced a multi-year partnership involving a “gigawatt-scale” deployment of next-gen Vera Rubin hardware. The partnership provides the lab with the massive compute capacity needed to train frontier models that compete directly with OpenAI and Google. Murati has focused the startup on automating the fine-tuning of AI models for specialized enterprise tasks.

Amazon held an emergency engineering meeting following a string of “high blast radius” outages, including a six-hour site crash that prevented customers from checking out. Internal documents suggest the incidents were caused by generative AI-assisted code changes that lacked sufficient human review. In response, Amazon has mandated senior-engineer sign-off for all AI-assisted modifications.

Bloomberg reported that Elon Musk pledged to rebuild xAI after co-founder Guodong Zhang departed. xAI has recently lost top talent, and Musk said xAI “was not built right first time around” and is being rebuilt from the foundations up. As part of the rebuild, xAI hired two senior leaders from Cursor to strengthen its AI coding efforts, product execution, and developer tooling.

Anthropic launched the Anthropic Institute to study the societal impacts and governance of powerful AI. The company said the institute combines machine learning engineers, economists, and social scientists and expands work from its Frontier Red Team, Societal Impacts, and Economic Research groups.

Runway introduced Runway Labs, an internal incubator focused on the next generation of generative video applications. The company described it as a product-incubation and R&D initiative for exploring new application ideas, not as the launch of a specific new video model. Runway may also use it to incubate ideas around world models.

Meta is developing and deploying four new generations of MTIA chips within the next two years to support ranking, recommendations, and generative AI workloads. The company said its custom silicon remains central to its AI infrastructure strategy even as it also buys from other chip suppliers.