Every time you run a ChatGPT artificial intelligence query, you use up a little of an increasingly scarce resource: fresh water. Run about 20 to 50 queries and roughly 0.5 liters (about 17 ounces) of fresh water from overtaxed reservoirs is lost as steam.
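As a rough back-of-the-envelope check, the figures above imply a per-query range. The sketch below simply divides the half liter by the two query counts; the function name is illustrative and does not come from the study.

```python
def water_per_query_ml(total_liters: float, queries: int) -> float:
    """Return the average water use per query in milliliters."""
    if queries <= 0:
        raise ValueError("queries must be positive")
    return total_liters * 1000 / queries


# Figures quoted in the article: about 0.5 liters per 20 to 50 queries.
high_estimate = water_per_query_ml(0.5, 20)  # half a liter spread over 20 queries
low_estimate = water_per_query_ml(0.5, 50)   # half a liter spread over 50 queries
print(f"Roughly {low_estimate:.0f} to {high_estimate:.0f} mL per query")
```

Under these numbers, a single query works out to somewhere between about 10 and 25 milliliters of water.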

These are the findings of a University of California, Riverside study that estimates, for the first time, the water footprint of running artificial intelligence (AI) queries, which rely on cloud computing done on racks of servers in warehouse-sized data processing centers.

Google’s data centers in the United States alone consumed an estimated 12.7 billion liters of fresh water to cool their servers in 2021, at a time when drought is exacerbating climate change, researchers at the Bourns College of Engineering report in a study posted online as a preprint on arXiv. The study is awaiting peer review.

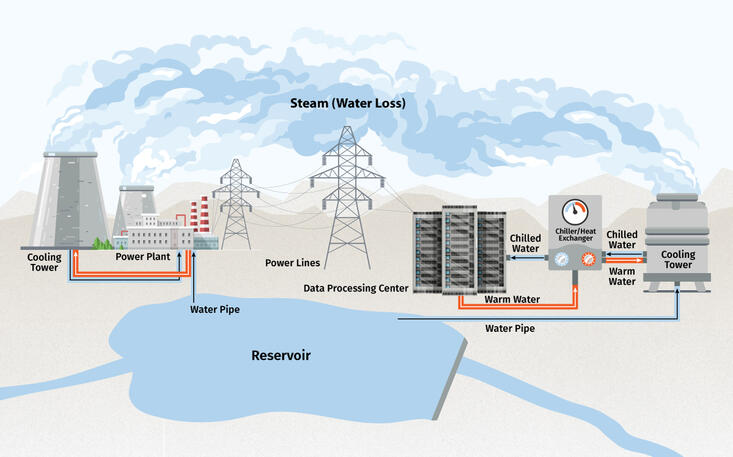

Shaolei Ren, an associate professor of electrical and computer engineering and the corresponding author of the study, explained that data processing centers consume large amounts of water in two ways.

First, these centers draw their electricity from power plants whose large cooling towers convert water into steam that is released into the atmosphere.

Second, the hundreds of thousands of servers in data centers need to be kept cool because electricity passing through semiconductors generates heat continuously. This requires cooling systems that, like those at power plants, are typically connected to cooling towers, which consume water by converting it to steam.

“The cooling tower is an open loop, where water evaporates and heat is removed from the data center to the environment,” says Ren.

Ren said it is important to address water use by AI because AI is a fast-growing segment of computing demand.

For example, roughly two weeks of training for the GPT-3 AI program in Microsoft’s state-of-the-art U.S. data centers consumed approximately 700,000 liters of fresh water. That is about the same amount of water used to manufacture about 370 BMW cars or 320 Tesla electric vehicles, the paper said. Had the training been done in Microsoft’s less efficient data centers in Asia, the water consumption would have tripled. Automotive manufacturing requires, among several other uses of water, a series of cleaning processes that remove paint particles and residue.
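The comparisons above can be unpacked with simple division. The following sketch derives the implied averages from the quoted figures only; the per-car values are arithmetic consequences of those numbers, not figures reported separately by the paper, and the variable names are illustrative.

```python
TRAINING_WATER_LITERS = 700_000  # GPT-3 training estimate quoted in the article
BMW_EQUIVALENT = 370             # number of BMW cars quoted in the article
TESLA_EQUIVALENT = 320           # number of Tesla vehicles quoted in the article
ASIA_MULTIPLIER = 3              # "would have tripled" in less efficient data centers

# Implied average water use per manufactured car, in liters.
liters_per_bmw = TRAINING_WATER_LITERS / BMW_EQUIVALENT
liters_per_tesla = TRAINING_WATER_LITERS / TESLA_EQUIVALENT

# Implied water use had the same training run taken place in Asia.
asia_training_liters = TRAINING_WATER_LITERS * ASIA_MULTIPLIER

print(f"Implied water per BMW: about {liters_per_bmw:,.0f} liters")
print(f"Implied water per Tesla: about {liters_per_tesla:,.0f} liters")
print(f"Implied training water in Asia: {asia_training_liters:,} liters")
```

Taken at face value, the comparison implies roughly 1,900 to 2,200 liters of water per car and about 2.1 million liters for the same training run in Asia.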

Ren and his co-authors, UCR graduate students Pengfei Li and Jianyi Yang, and Mohammad A. Islam of the University of Texas at Arlington, argue that big tech companies should take responsibility and lead by example in reducing their water use.

Fortunately, AI training can be scheduled flexibly. Unlike web searches or YouTube streams, which must be processed immediately, AI training can be done at almost any time. A simple and effective way to avoid wasteful water use, Ren said, is to train AI models during cooler hours of the day, when less water is lost to evaporation.
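The scheduling idea can be illustrated with a minimal sketch: given an hourly temperature forecast, pick the contiguous block of hours with the lowest total temperature for a training job. This is not the method from the paper. It assumes that outdoor temperature is a reasonable stand-in for evaporative water loss, and the function name and forecast values are invented for illustration.

```python
def coolest_window(hourly_temps_c, duration_hours):
    """Return the start index of the contiguous window with the lowest total temperature."""
    n = len(hourly_temps_c)
    if not 0 < duration_hours <= n:
        raise ValueError("duration_hours must be between 1 and the forecast length")

    # Sliding-window sum: add the hour entering the window, drop the hour leaving it.
    window_sum = sum(hourly_temps_c[:duration_hours])
    best_start, best_sum = 0, window_sum
    for start in range(1, n - duration_hours + 1):
        window_sum += hourly_temps_c[start + duration_hours - 1] - hourly_temps_c[start - 1]
        if window_sum < best_sum:
            best_start, best_sum = start, window_sum
    return best_start


# Hypothetical 24-hour forecast in degrees Celsius (made-up values; index = hour of day).
forecast = [18, 17, 16, 15, 15, 16, 18, 21, 24, 27, 30, 32,
            33, 34, 33, 31, 29, 27, 25, 23, 22, 21, 20, 19]

start_hour = coolest_window(forecast, duration_hours=4)
print(f"Start the 4-hour training block at hour {start_hour}")
```

With this made-up forecast, the coolest four-hour block begins at hour 2, in the early morning. The sketch does not wrap around midnight and ignores electricity prices and carbon intensity, which a real scheduler would also need to weigh.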

“AI training is like a very large lawn that requires a lot of water for cooling,” says Ren. “We don’t want to water our lawns at noon, so let’s not water our AI at noon either.”

Training during cooler hours can conflict with carbon-efficient scheduling, which favors following the sun to use clean solar energy. “You can’t shift cool weather to noon, but you can store solar energy, use it later, and still be ‘green,’” says Ren.

“In the midst of a growing freshwater scarcity crisis, worsening and prolonged droughts, and rapidly aging public water infrastructure, it is a truly critical time to uncover and address the secret water footprint of AI models,” states the paper, which is subtitled “Uncovering and Addressing the Secret Water Footprint of AI Models.”