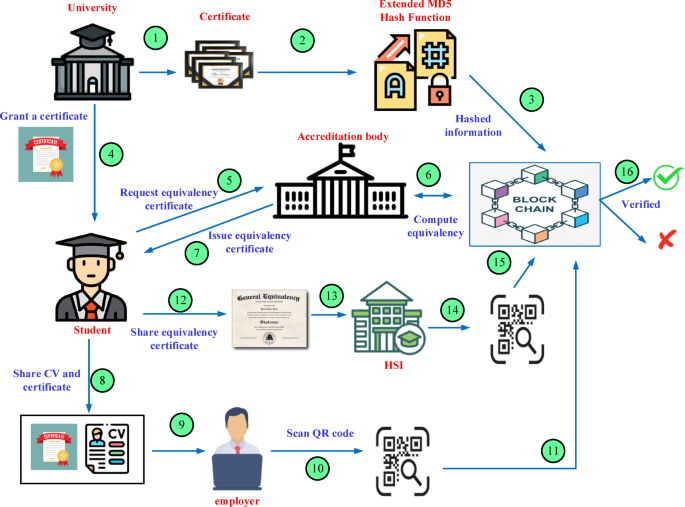

This study presents a comprehensive blockchain-based approach for fast and simple academic credential verification and equivalency issuance. As shown in Fig. 1, the proposed ecosystem for credential and equivalency verification comprises several stakeholders, including employers, educational institutions, students, accreditation organizations, and HSIs. The accrediting authority is responsible for the initial establishment of the network, maintaining the blockchain, and updating the network as users join or leave over time. An accrediting body is an organization or authority in charge of assessing and certifying the quality of initiatives or organizations. The universities issue transactions containing the credentials’ validation data to students, and these transactions circulate in the blockchain network. The Certification Authority collects these transactions at scheduled intervals, and the resulting blocks are appended to the blockchain. In addition, the accreditation body uses TCRN to issue the equivalency standard based on the transaction and other student details.

The university provides each graduate with a hard copy of their academic degree and a transcript. The university administration generates a transaction containing a digital signature of the student’s credentials. This transaction is e-signed by the university administrator and then propagated on the Credential and Equivalency Verification network (Cerberus++). Here, the accreditation body verifies the signatures on incoming transactions, checks the transactions for accuracy, gathers the transactions into a block, and appends the block to the blockchain. This stage corresponds to equivalence certification and credential approval. A QR code for checking the credential’s authenticity is placed on the front of the hard copy of the academic degree that the university issues to students. Employers can scan the code on the certificate and verify whether the degree is authentic through a web portal or a mobile application. The Certificate of Equivalence also contains a QR code for verification by a Higher Education Institution (HSI).

Issuance of the credential and equivalency certificate

The institution generates degree certificates for the graduating students at the end of each academic session. The credential and equivalency are granted based on two groups of information: the first is information about the degree credentials (denoted as Degree Details (DD)), and the second is more specific information about the student’s ID and transcript (denoted as ID/Transcript Details (ITD)). The student’s name, the program name, the year of graduation, and the university name are typically included in the DD. The ITD includes the following data components: the student’s identity document details, course codes, titles, learning hours, grades achieved, GPA, and CGPA. These specifics are required to assess the foreign qualification and determine whether or not it meets the academic standards of the local educational system.

Equivalency estimation using TCRN

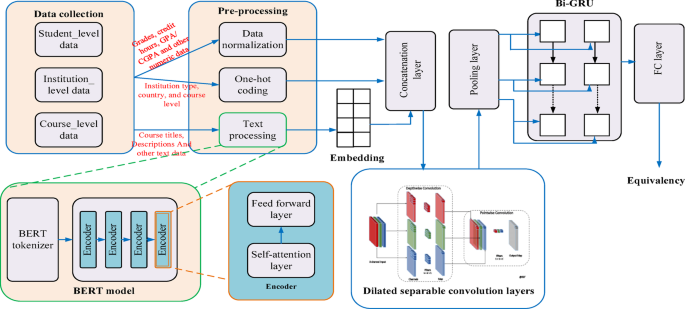

Deep learning models can manage complicated relations among academic records, courses, and grades for equivalency estimation, especially when nonlinear patterns exist in the data. Although GPT-based architectures and other large language models (LLMs) exhibit sophisticated natural language generation and processing capabilities, there are a number of obstacles to their widespread use. These include high computing costs, the necessity for big annotated datasets, a lack of transparency in decision-making, and substantial infrastructure needs that many educational institutions or assessment organizations may not be able to meet. Conversely, less complex methods such as rule-based systems, TF-IDF, or traditional machine learning models (like decision trees or SVMs) are unable to capture more profound semantic relationships between qualifications, like curriculum content, learning outcomes, or cross-national grading patterns. A balance between complexity and performance is offered by deep learning models, especially those that use word embeddings, CNNs, or GRU-based encoders with manageable size. They are more interpretable and resource-efficient than LLMs, and they can learn contextual linkages and semantic representations from datasets of intermediate size. Deep learning was therefore chosen as the best compromise method to achieve precise, scalable, and economical academic equivalency estimation.

In this work, a novel TCRN is proposed for equivalency estimation. TCRN was chosen because it can handle complex, nonlinear interactions in academic data. TCRN uses BERT embeddings to capture global semantic context for the fusion of textual and numerical data. Depth-wise Separable Convolutions (DSC) extract course-specific features, while Bi-GRU layers retain long-term dependencies for assessing a student’s academic path. The simultaneous focus on local and global analysis makes TCRN more accurate than standard systems for equivalence estimation.

Figure 2 illustrates the main procedures and methods for machine learning-based equivalence scoring. The student’s data is collected and pre-processed using normalization and NLP models. Afterward, contextual features are extracted from the data, and embedding is produced using a pre-trained BERT model. The contextual embedded vectors are fed into a neural network that includes Bi-GRU and depth-wise separable convolution (DSC). The suggested model extracts localized and globalized semantic characteristics from embedded data using DSC, which differs from traditional convolution. The final estimate of the equivalence score is made using Bi-GRU.

Equivalency estimation using TCRN.

Data collection

Careful planning and data collection are needed to create a dataset for equivalency score estimation using machine learning. Relevant elements such as student academic records, course details, institution data, and grading systems should be gathered. That is, both student-specific and institution/course-specific data should be included in the dataset. This work considers the following details for training the proposed TCRN.

Student-level data (transcripts): This contains the student’s ID, grades, and courses taken. It also includes course code, title, and credit hours.

Course-level data: Most colleges and universities publish course descriptions, including syllabi, credit hours, and grading schemes. We accessed the following data from University websites: Course Code, course title, course description (syllabus), credit hours, and course category, whether the course is core, elective, or specialized. We also accessed and used the level of study, either undergraduate or graduate.

Institution-level data: Name of the Institution, Type of Institution (College, University, or Public), System of Grading (e.g., 10-point or 4-point scale), and Accreditation (the institution’s accreditation or rating).

The proposed model’s target variable is the equivalence score between two institutions or educational systems. This score can be a numerical score or a categorical label (e.g., equivalent, partially equivalent, not equivalent). The target variable in this work is the categorical label.

Pre-processing

After the data is collected, it must be cleaned and prepared for the machine learning models. This comprises:

Data normalization: Grades, credit hours, and GPA/CGPA are normalized to a standard scale. This can be performed using a linear transformation. The grade equivalency preparation method standardizes multilingual and institutionally varied datasets.

Text processing: Natural Language Processing (NLP) techniques, such as tokenization and Bidirectional Encoder Representations from Transformers (BERT), are used to standardize and extract important information from course titles and descriptions. Grades were converted to a 4.0 scale, courses were aligned by NLP-based semantic similarity, and credit hours were standardized. Multilingual data was handled with language-specific tokenization and mBERT. Institutional metadata, including institution type, accreditation, and grading system, was also utilized.

Feature encoding: One-hot encoding is used to encode categorical data, including course level, country, and institution type. Outliers were eliminated using interquartile range analysis, while missing values were handled with statistical techniques. This ensures consistency, dependability, and fairness in establishing equivalencies across educational systems. Thus, by incorporating these fine-grained preprocessing steps, the system accounts for institutional variation and explicit language differences to yield better equivalency estimates.
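The three pre-processing steps above can be illustrated with a minimal Python sketch. The function names, the 0 to 10 source scale, the 1.5 × IQR fence, and the sample values are assumptions made for illustration; the paper does not specify them.

```python
from statistics import quantiles


def rescale_grade(grade, scale_min, scale_max, target_max=4.0):
    """Linearly map a grade from [scale_min, scale_max] onto [0, target_max]."""
    return (grade - scale_min) / (scale_max - scale_min) * target_max


def one_hot(value, categories):
    """Return a one-hot vector for `value` over an ordered category list."""
    return [1 if value == c else 0 for c in categories]


def iqr_filter(values, k=1.5):
    """Keep only values inside [Q1 - k*IQR, Q3 + k*IQR] (k = 1.5 is an assumption)."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]


# Hypothetical inputs: a GPA on a 10-point scale and an institution type.
gpa_on_4_scale = rescale_grade(8.5, 0.0, 10.0)
institution_vec = one_hot("University", ["College", "University", "Public"])
clean_gpas = iqr_filter([3.1, 3.4, 3.2, 3.6, 3.3, 9.9])
print(gpa_on_4_scale, institution_vec, clean_gpas)
```

A linear rescale preserves the ordering of grades but not necessarily their meaning across grading cultures, which is one reason the model also relies on the institutional metadata.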

BERT

This work uses a pre-trained BERT model to create embeddings and extract contextual features from text data. The input of BERT is a token sequence \((d_{0},d_{1},\dots,d_{n})\), and it produces the contextualized vector representation \(V=(v_{0},v_{1},\dots,v_{n})\). The pre-trained BERT has four encoder layers. Each encoder block has two sub-layers: multi-head attention (MHA) and a feed-forward network. The MHA consists of multiple parallel heads, each of which performs self-attention. Self-attention determines the relationship between each word in a given phrase using the following expression:

$$x=\mathrm{softmax}\left(\frac{\bar{Q}\bar{K}^{T}}{\sqrt{d_{m}}}\right)\bar{V}$$

(1)

Here, \(\bar{Q}\), \(\bar{K}\), and \(\bar{V}\) denote the query, key, and value vectors, respectively, and \(d_{m}\) represents the dimension of \(\bar{K}\). Multi-head attention is described by the following expression:

$$\mathrm{MHA}\left(\bar{Q},\bar{K},\bar{V}\right)=\mathrm{concat}\left(x_{0},x_{1},\dots,x_{v}\right)$$

(2)

The feed-forward sub-layer consists of two linear transformations with a ReLU activation in between. The mathematical expression for the feed-forward layer is as follows:

$$FF_{k}=\max\left(0,\,d\,\omega_{1}+\beta_{1}\right)\omega_{2}+\beta_{2}$$

(3)

The last encoder receives the outputs of the preceding encoders and creates the contextualized embedding for the incoming text data.
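Equations (1) to (3) can be traced with a small pure-Python sketch. It follows the equations as written, so the per-head linear projections and the output projection found in full BERT implementations are omitted; the helper names and toy matrices are assumptions for illustration.

```python
import math


def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def self_attention(q, k, v):
    """Eq. (1): softmax(Q K^T / sqrt(d_m)) V, with d_m the key dimension."""
    d_m = len(k[0])
    scores = matmul(q, transpose(k))
    weights = [softmax([s / math.sqrt(d_m) for s in row]) for row in scores]
    return matmul(weights, v)


def multi_head_attention(heads):
    """Eq. (2): concatenate the per-head outputs along the feature axis."""
    outputs = [self_attention(q, k, v) for q, k, v in heads]
    return [sum((out[t] for out in outputs), []) for t in range(len(outputs[0]))]


def feed_forward(d, w1, b1, w2, b2):
    """Eq. (3): max(0, d w1 + b1) w2 + b2, applied to every token vector."""
    hidden = [[max(0.0, h + b) for h, b in zip(row, b1)] for row in matmul(d, w1)]
    return [[o + b for o, b in zip(row, b2)] for row in matmul(hidden, w2)]


# Toy example: two tokens, two heads sharing the same inputs.
q = [[1.0, 0.0], [0.0, 1.0]]
v = [[1.0, 2.0], [1.0, 2.0]]
attended = self_attention(q, q, v)
concatenated = multi_head_attention([(q, q, v), (q, q, v)])
print(attended, concatenated)
```

Because each row of attention weights sums to one, identical value rows pass through unchanged, which is a convenient sanity check.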

Depth-wise separable convolution layers

After the input text is converted into word vectors by the BERT model, the embedding vector is concatenated with other features, including student-level and institutional data, and fed to the DSC layers to extract semantic features. DSC is used specifically to reduce the number of parameters and the complexity of conventional convolutional neural networks (CNNs). More precisely, DSC splits the usual convolutional layer into two layers: a depth-wise convolution that filters each input channel separately, and a pointwise convolution that uses multiple 1 × 1 convolution kernels to linearly combine the depth-wise outputs into new features. Pointwise convolution modifies the stride to provide the down-sampling effect. In this work, each DSC layer is followed by batch normalization (BN) and a ReLU nonlinearity. The feature map of the depth-wise convolution can be stated as follows:

$$F_{i,j,k}^{DSC}=\sum_{u,v}K_{u,v,k}\cdot Z_{i+u-1,\,j+v-1,\,k}$$

(4)

Here, \(Z\) stands for the input feature tensor, and the output feature tensor is represented by \(F_{i,j,k}^{DSC}\). The convolution kernels are represented by \(K\). The indices \(u\) and \(v\) give the position of an element within the convolution kernel, \(k\) indexes the input feature-map channel, and \(i\) and \(j\) give the spatial position of an element in the input and output feature tensors.
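As an illustration of Eq. (4), the sketch below implements a depth-wise convolution followed by a 1 × 1 pointwise convolution on nested lists. Valid padding, stride 1, the absence of BN and ReLU, and all function names are simplifying assumptions rather than the paper's configuration.

```python
def depthwise_conv(z, kernels):
    """Eq. (4): channel k of the input is filtered only by kernel slice K[:, :, k].

    z is an H x W x C nested list, kernels is a kh x kw x C nested list.
    Indices are 0-based here, so z[i + u][j + v] plays the role of Z_{i+u-1, j+v-1}.
    """
    h, w, c = len(z), len(z[0]), len(z[0][0])
    kh, kw = len(kernels), len(kernels[0])
    out = []
    for i in range(h - kh + 1):
        row = []
        for j in range(w - kw + 1):
            row.append([
                sum(kernels[u][v][k] * z[i + u][j + v][k]
                    for u in range(kh) for v in range(kw))
                for k in range(c)
            ])
        out.append(row)
    return out


def pointwise_conv(f, weights):
    """1 x 1 convolution: mix the C input channels into len(weights) output channels."""
    return [[[sum(wk * x for wk, x in zip(w_out, pixel)) for w_out in weights]
             for pixel in row] for row in f]


def parameter_counts(kh, kw, c_in, c_out):
    """Weights in a standard convolution versus a depth-wise separable one (no biases)."""
    return kh * kw * c_in * c_out, kh * kw * c_in + c_in * c_out


ones_input = [[[1.0, 1.0] for _ in range(3)] for _ in range(3)]   # 3 x 3 x 2
ones_kernel = [[[1.0, 1.0] for _ in range(2)] for _ in range(2)]  # 2 x 2 x 2
depthwise_out = depthwise_conv(ones_input, ones_kernel)
mixed = pointwise_conv(depthwise_out, [[1.0, 1.0]])
print(depthwise_out, mixed, parameter_counts(3, 3, 64, 128))
```

The parameter count comparison shows where the savings come from: the spatial filtering cost no longer scales with the number of output channels.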

Pooling layers

The output of the DSC layer is given as input to the max-pooling block (MPB). This layer uses a \(2\times 2\) filter. As the filter traverses the feature map, the highest value within each filter patch is selected. This block provides an aggregated feature map containing the most prominent and significant elements.
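A minimal sketch of the 2 × 2 max-pooling step is given below. A stride of 2 (non-overlapping patches) is assumed, since the text does not state the stride.

```python
def max_pool_2x2(feature_map):
    """Slide a 2 x 2 window with stride 2 and keep the largest value in each patch."""
    h, w = len(feature_map), len(feature_map[0])
    return [
        [max(feature_map[i][j], feature_map[i][j + 1],
             feature_map[i + 1][j], feature_map[i + 1][j + 1])
         for j in range(0, w - 1, 2)]
        for i in range(0, h - 1, 2)
    ]


pooled = max_pool_2x2([
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 9, 8],
    [2, 2, 7, 6],
])
print(pooled)  # [[4, 5], [2, 9]]
```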

Bi-GRU layers

The output of the MPB is sent into the Bi-GRU layer, which processes the generated features sequentially in both the forward and backward directions. The forward propagation formula for a GRU neural network is as follows:

$$\hat{h}_{t}=\tanh\left(\omega^{h}F_{t}^{DSC}+\bar{\omega}^{h}\left(h'_{t-1}\right)*r_{t}\right)$$

(5)

$$h'_{t}=\left(1-z_{t}\right)*\hat{h}_{t}+z_{t}*h'_{t-1}$$

(6)

Here, \(*\) denotes element-wise multiplication, \(\omega^{h}\) and \(\bar{\omega}^{h}\) are the weight matrices of the GRU, \(h'_{t}\) is the hidden state, \(\hat{h}_{t}\) is the candidate hidden state, and \(r_{t}\) and \(z_{t}\) are the reset and update gates, respectively. Two unidirectional GRUs are combined to form the BiGRU model. The BiGRU’s output can be characterized as

$$H=\overrightarrow{h'}_{t}\oplus\overleftarrow{h'}_{t}$$

(7)

Here, \(H\) is the output of the BiGRU, \(\overrightarrow{h'}_{t}\) and \(\overleftarrow{h'}_{t}\) are the hidden states of the forward and backward unidirectional GRUs, and ⊕ denotes the addition unit.
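The recurrence in Eqs. (5) to (7) can be sketched as follows. The gate equations for \(r_t\) and \(z_t\) are not given in the text, so the standard sigmoid gates are assumed; biases are omitted, the parameter names (`Wz`, `Uz`, and so on) are hypothetical, and ⊕ is implemented as element-wise addition, following the description above.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def gru_step(x, h_prev, p):
    """One GRU step; p maps the names Wz, Uz, Wr, Ur, Wh, Uh to weight matrices."""
    # Assumed standard gates (not spelled out in the source text).
    z = [sigmoid(a + b) for a, b in zip(matvec(p["Wz"], x), matvec(p["Uz"], h_prev))]
    r = [sigmoid(a + b) for a, b in zip(matvec(p["Wr"], x), matvec(p["Ur"], h_prev))]
    # Eq. (5): candidate state, with the recurrent term gated by r_t.
    rec = [u * g for u, g in zip(matvec(p["Uh"], h_prev), r)]
    h_hat = [math.tanh(a + b) for a, b in zip(matvec(p["Wh"], x), rec)]
    # Eq. (6): blend the candidate and the previous state using z_t.
    return [(1 - zi) * hh + zi * hp for zi, hh, hp in zip(z, h_hat, h_prev)]


def run_gru(seq, p, hidden_size):
    h, states = [0.0] * hidden_size, []
    for x in seq:
        h = gru_step(x, h, p)
        states.append(h)
    return states


def bi_gru(seq, p_fwd, p_bwd, hidden_size):
    fwd = run_gru(seq, p_fwd, hidden_size)
    bwd = run_gru(seq[::-1], p_bwd, hidden_size)[::-1]
    # Eq. (7): combine both directions at every time step.
    return [[a + b for a, b in zip(f, g)] for f, g in zip(fwd, bwd)]


def constant_params(value, in_size, hidden_size):
    w = [[value] * in_size for _ in range(hidden_size)]
    u = [[value] * hidden_size for _ in range(hidden_size)]
    return {"Wz": w, "Uz": u, "Wr": w, "Ur": u, "Wh": w, "Uh": u}


sequence = [[0.5, -0.2], [0.1, 0.4], [0.3, 0.3]]
outputs = bi_gru(sequence, constant_params(0.1, 2, 3), constant_params(0.2, 2, 3), 3)
print(outputs)
```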

FC layer

Following feature extraction from the DSC, the resulting feature vector is passed to the softmax function to determine the probability distribution over the categories. Mathematically, it is defined as:

$$\rho_{\sigma}\left(o_{a}\right)=\frac{e^{o_{a}}}{\sum_{i=1}^{N}e^{o_{i}}}$$

(8)

Here, \(\rho_{\sigma}\) denotes the probability distribution and \(o_{a}\) is the raw output (logit) for class \(a\). The total number of classes (fully equivalent, partially equivalent, and not equivalent) is \(N\). As shown below, the loss of the proposed network is calculated by comparing the actual and predicted values:

$$Loss=-\sum_{a=1}^{N}Actual\left(o_{a}\right)\times\log\rho_{\sigma}\left(o_{a}\right)$$

(9)

Here, minimizing the loss function reduces the gap between the actual and predicted equivalency values.
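Equations (8) and (9) amount to a softmax over the class logits followed by a cross-entropy loss against a one-hot label. The sketch below uses hypothetical logits and the three class names mentioned in the data collection step.

```python
import math


def softmax(logits):
    """Eq. (8): exponentiate and normalize (the max is subtracted for stability)."""
    m = max(logits)
    exps = [math.exp(o - m) for o in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(actual_one_hot, logits):
    """Eq. (9): negative sum of actual label times log predicted probability."""
    probs = softmax(logits)
    return -sum(a * math.log(p) for a, p in zip(actual_one_hot, probs))


classes = ["equivalent", "partially equivalent", "not equivalent"]
logits = [2.0, 1.0, 0.1]                  # hypothetical network outputs
probs = softmax(logits)
predicted = classes[probs.index(max(probs))]
loss = cross_entropy([1, 0, 0], logits)   # true class: "equivalent"
print(predicted, probs, loss)
```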

After the equivalency standard is determined, the accreditation body creates the equivalency certificate. Then, an enhanced MD5 hash function is used to compute the fingerprint for each item in the DD, ITD, and equivalence certificate separately. Subsequently, the hashed data is concatenated to produce a unique identifier (leaf node). Following that, a Merkle Mountain Range (MMR) is created from the fingerprints. Blockchain technology uses the MMR data structure for scalable and efficient transaction verification.

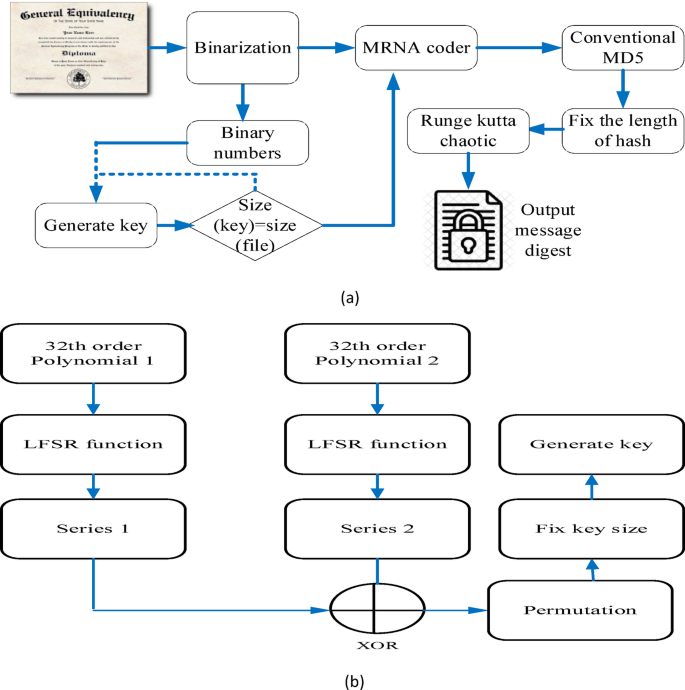

Extended MD5 hash function

This article generates fingerprints from the DD, ITD, and equivalence certificates using an enhanced MD5 hash method. Recent research supports the continued use of MD5 in specific fields, despite its well-documented cryptographic flaws. MD5 remains useful in cases where low resource cost and quick computation matter more than collision resistance. It has been applied to image recognition and watermarking as a component of hybrid systems designed for tamper detection and subtle watermarking. For example, MD5 is used as a lightweight hash to create watermark data in a study of image authentication and recovery [38]. Owing to its speed and ease of use, it suits embedding scenarios where system performance is critical and the application environment is not security sensitive. Likewise, MD5 is still preferred in the field of data deduplication because of its processing efficiency. The fingerprint-based data deduplication scheme proposed in [39] highlights that MD5 is still often used because it rapidly creates fingerprints for data chunks, and it acknowledges the origins of MD5 as a fast cryptographic hash function that uses less storage. Due to its small hash size (128 bits) and reduced computational cost, MD5 is particularly well suited for systems like the one proposed, where processing efficiency is paramount and millions of fingerprint calculations may be performed.

Cerberus, a blockchain-based accreditation and degree verification system, also suggested using popular hash functions, MD5 or SHA-2, to generate fingerprints. Although conventional MD5 is known to be susceptible to collision attacks, the proposed approach strengthens the MD5 technique by adding binary and mRNA-based encoding [40] as a preprocessing step prior to MD5 hashing. These changes make the input more complex and random, which obscures the relationship between the input and the final hash. This makes it difficult for attackers to deduce or reverse the original data from the hash output, which is an essential feature for secure fingerprinting. Furthermore, the input transformation process becomes extremely sensitive and unpredictable when a chaotic system based on the Runge-Kutta method is incorporated. These changes preserve the initial benefits of MD5, mainly its computational efficiency and lower resource requirements, with the aim of raising the overall security of the MD5-based scheme to a level comparable to that of SHA-family algorithms.

The proposed real-time credential verification system uses the extended MD5 hash for security, efficiency, and scalability. The weaknesses of MD5 can be mitigated for the purpose of academic data integrity. Extended MD5 is cheaper, faster, and more energy-efficient than SHA-256 for large datasets. Its seamless integration with blockchain infrastructure, especially the MMR, ensures tamper resistance and high performance. While SHA-256 is more secure, extended MD5 protects academic credentials while prioritizing speed and scalability. The overall flow diagram of the proposed system is displayed in Fig. 3(a). Initially, binary data is obtained from the input texts. Next, the key is constructed from a base finite field of a given degree, using two distinct cyclic polynomials to generate a new Galois field binary component (\(2^{k}\)). The LFSR technique is repeated 64 times on both polynomials (\(P_{1},P_{2}\)). The first key is then obtained by applying the XOR function between \(P_{1}\) and \(P_{2}\). Subsequently, the main key is created by following the steps of the initial permutation in DES. The main key is repeated until its size matches the message block. Figure 3(b) depicts the basic framework of the key generation process.

Hash operation. (a) Extended MD5 hash. (b) Key generation.
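The key generation flow in Fig. 3(b) can be approximated by the following sketch. The 16-bit register width, the tap positions, the seeds, and the choice to repeat the 64-bit key to fill a 512-bit block are all assumptions for illustration; the paper does not give the actual polynomials. Only the DES initial permutation table is standard.

```python
# Hypothetical feedback taps for the two polynomials P1 and P2 (not from the paper).
TAPS_P1 = (16, 14, 13, 11)
TAPS_P2 = (16, 15, 13, 4)

# DES initial permutation table (1-based positions), generated row by row.
DES_IP = [start - 8 * c for start in (58, 60, 62, 64, 57, 59, 61, 63) for c in range(8)]


def lfsr_bits(seed, taps, width, count):
    """Clock a Fibonacci LFSR `count` times and collect the output bits."""
    state = seed & ((1 << width) - 1)
    out = []
    for _ in range(count):
        out.append(state & 1)
        feedback = 0
        for t in taps:
            feedback ^= (state >> (width - t)) & 1
        state = (state >> 1) | (feedback << (width - 1))
    return out


def generate_key(seed1, seed2, block_bits):
    """64 LFSR steps per polynomial, XOR, DES-style permutation, then repetition."""
    p1 = lfsr_bits(seed1, TAPS_P1, 16, 64)
    p2 = lfsr_bits(seed2, TAPS_P2, 16, 64)
    first_key = [a ^ b for a, b in zip(p1, p2)]
    main_key = [first_key[pos - 1] for pos in DES_IP]
    repeats = -(-block_bits // 64)  # ceiling division
    return (main_key * repeats)[:block_bits]


key = generate_key(0xACE1, 0xBEEF, 512)
print(len(key), key[:16])
```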

The message file is split into multiple blocks after the keys are generated. The proposed prototype employs RNA (MultiPRNA) encoding to perform the encoding at the bit level. The MultiPRNA coding is constructed by combining the key produced in the earlier phase with the binarized text. The key from the preceding phase is used to encode each block with ribonucleic acid (RNA) coding, and the RNA chain is then converted into binary codes. The output of the MultiPRNA encoding is passed as input to the MD5 algorithm. The primary expression used for updating the buffer variables during every round in MD5 is:

$$Q=Q+\mathrm{left\_rotate}\left(P+F\left(Q,R,S\right)+Z\left[k\right]+G\left[i\right],\,s\right)$$

(10)

Here, \(P,Q,R,S\) denote the buffer values, \(F\left(Q,R,S\right)\) represents the auxiliary function, \(Z\left[k\right]\) represents a 32-bit word from the current 512-bit message block, \(G\left[i\right]\) denotes a known constant, and \(s\) is the number of bit positions for the left rotation. The fourth-order Runge-Kutta method then takes the output of the MD5 procedure as input for solving the differential equation and producing the final hash value.
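To make Eq. (10) concrete, the sketch below implements the standard MD5 compression loop (as specified in RFC 1321) in plain Python and cross-checks it against `hashlib`. It covers only conventional MD5; the MultiPRNA encoding and the Runge-Kutta post-processing of the extended scheme are not reproduced. The variable names `p, q, r, s_buf` mirror the buffer symbols \(P, Q, R, S\), with `SHIFTS[i]` playing the role of \(s\).

```python
import hashlib
import math
import struct

SHIFTS = [7, 12, 17, 22] * 4 + [5, 9, 14, 20] * 4 + [4, 11, 16, 23] * 4 + [6, 10, 15, 21] * 4
G = [int(abs(math.sin(i + 1)) * 2**32) & 0xFFFFFFFF for i in range(64)]  # known constants


def left_rotate(x, s):
    x &= 0xFFFFFFFF
    return ((x << s) | (x >> (32 - s))) & 0xFFFFFFFF


def md5_digest(message: bytes) -> str:
    # Padding: a single 1 bit, zeros up to 56 mod 64 bytes, then the 64-bit length.
    padded = message + b"\x80"
    padded += b"\x00" * ((56 - len(padded) % 64) % 64)
    padded += struct.pack("<Q", (8 * len(message)) & 0xFFFFFFFFFFFFFFFF)
    state = [0x67452301, 0xEFCDAB89, 0x98BADCFE, 0x10325476]
    for offset in range(0, len(padded), 64):
        z = struct.unpack("<16I", padded[offset:offset + 64])  # 32-bit words Z[k]
        p, q, r, s_buf = state
        for i in range(64):
            if i < 16:
                f, k = (q & r) | (~q & s_buf), i
            elif i < 32:
                f, k = (s_buf & q) | (~s_buf & r), (5 * i + 1) % 16
            elif i < 48:
                f, k = q ^ r ^ s_buf, (3 * i + 5) % 16
            else:
                f, k = r ^ (q | ~s_buf), (7 * i) % 16
            f &= 0xFFFFFFFF
            # Eq. (10): Q = Q + left_rotate(P + F(Q, R, S) + Z[k] + G[i], s)
            new_q = (q + left_rotate(p + f + z[k] + G[i], SHIFTS[i])) & 0xFFFFFFFF
            p, s_buf, r, q = s_buf, r, q, new_q
        state = [(a + b) & 0xFFFFFFFF for a, b in zip(state, (p, q, r, s_buf))]
    return struct.pack("<4I", *state).hex()


sample = b"Degree Details: hypothetical record"
print(md5_digest(sample) == hashlib.md5(sample).hexdigest())
```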

Digital signature generation process

In the proposed Cerberus++ system, digital signatures are essential for guaranteeing the legitimacy and non-repudiation of academic qualifications. Here, an authorized administrator of the university creates digital signatures at the point of issuing the credential. Every transaction that contains Degree Details (DD), ID/Transcript Details (ITD), and other pertinent academic data is digitally signed using the university’s private key before being sent to the Cerberus++ network. After receiving each transaction, the accrediting body uses the university’s matching public key to validate these digital signatures. The following procedures are involved in creating a digital signature:

Credential information (degree details, transcript details, etc.) is first run through the enhanced MD5 cryptographic hash function. As a result, the original content is uniquely represented by a fixed-length hash value, also known as a message digest. The digital signature is created by signing this hash with the university administrator’s private key, typically using the Elliptic Curve Digital Signature Algorithm (ECDSA). The digital signature is appended to the credential transaction together with the hash and original content. The Cerberus++ Credential and Equivalency Verification network receives the entire signed transaction, which includes the university’s public key, the digital signature, and the original credential data.

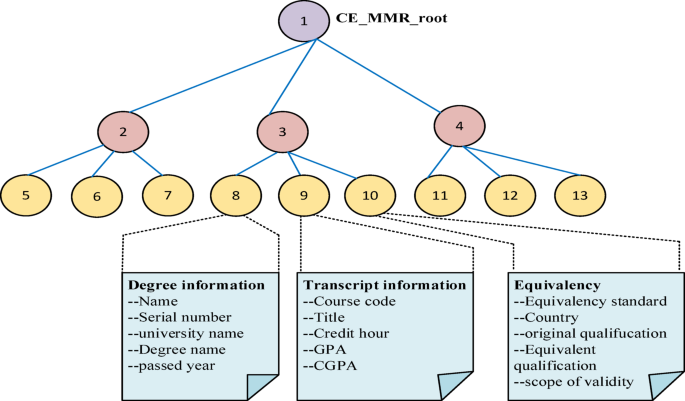

Merkle mountain range (MMR)

The fingerprints obtained from the DD, ITD, and equivalency certificate are entered into the MMR. Blockchain systems frequently use the MMR, a cryptographic data structure, for efficient data verification. The MMR is a higher-level arrangement of Merkle trees: it can be understood as a series of Merkle trees, with the root node of every tree serving as a vertex (peak) of the MMR. The proposed prototype forms the first Merkle tree by hashing and organizing the DD information. Here, a leaf node represents each unique data point, and a root hash is produced by recursively combining the hashes. Every entry in the ITD is similarly hashed and organized to create the second Merkle tree. The same is repeated for the equivalency certificate. The Merkle trees are combined by adding a common parent node above their root nodes to create a bigger tree. Suppose the MMR contains \(m\) components and its peak roots are denoted \(R_{p1},R_{p2},\dots,R_{pk}\). The MMR root is calculated as:

$$MMR_{root}=Hash\left(R_{p1}\,\|\,R_{p2}\,\|\,\dots\,\|\,R_{pk}\right)$$

(11)
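The construction of Eq. (11) can be sketched as three binary Merkle trees whose roots are concatenated and hashed. SHA-256 from `hashlib` stands in for the extended MD5 hash, a branching factor of 2 and duplication of the last node on odd levels are assumed, and the record fields are hypothetical sample data.

```python
import hashlib


def h(data: bytes) -> bytes:
    # Stand-in hash; the paper's extended MD5 is not reproduced here.
    return hashlib.sha256(data).digest()


def merkle_root(items):
    """Hash each item into a leaf, then combine pairs recursively up to one root."""
    level = [h(item.encode()) for item in items]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # assumption: duplicate the last node on odd levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def mmr_root(peaks):
    """Eq. (11): hash of the concatenated peak roots R_p1 || R_p2 || ... || R_pk."""
    return h(b"".join(peaks))


# Hypothetical sample records for one student.
dd = ["Name: A. Student", "Serial: 0001", "University: Example U", "Year: 2024"]
itd = ["Course: CS101", "Title: Programming", "Credit hours: 3", "GPA: 3.4", "CGPA: 3.5"]
ec = ["Standard: Full", "Country: X", "Original: BSc", "Equivalent: BSc", "Scope: National"]

ce_mmr_root = mmr_root([merkle_root(dd), merkle_root(itd), merkle_root(ec)])
print(ce_mmr_root.hex())
```

Changing any single field changes its leaf hash, the corresponding peak, and therefore the final root, which is what makes the root usable as a tamper-evident commitment.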

The composition of the proposed MMR tree is shown in Fig. 4. The root is CE_MMR, and the leaf nodes hold the following elements: degree information (name, serial number, university name, and year of passing), transcript information (course code, title, credit hours, GPA, and CGPA), and equivalency information (equivalency standard, country, original qualification, equivalent qualification, and scope of validity).

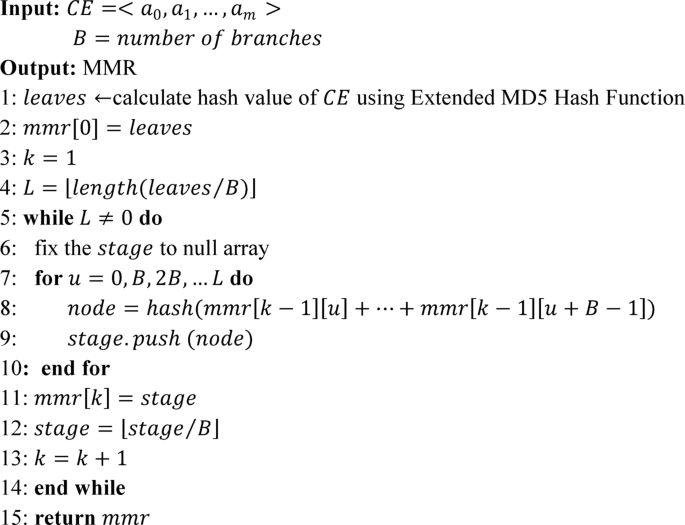

Once every node is inserted, the prototype determines whether two trees are mergeable. If so, it inserts a parent node to complete the merge. The root values of the Merkle trees are combined by hashing to create the MMR’s root value. This Merkle root is denoted the credential and equivalency MMR root (CE_MMR_root). All data elements in the primary collection, including the qualification, identification, transcript data, and equivalency for each student in the batch, can be authenticated using this CE_MMR_root. The creation of the MMR is explained in Algorithm 3, where the MMR is represented as a jagged two-dimensional array. The \(v\)-th node (in left-to-right order) of the \(u\)-th layer (from bottom to top) of the MMR is represented by the symbol \(mmr\left[u\right]\left[v\right]\). To create the MMR leaf nodes, the input data \(CE=\left\{DD,\,ITD,\,EC\right\}\) is hashed individually. Then, beginning with the leaf nodes, the hashes are computed and merged according to the branching factor B given as an input parameter to create the MMR. Accrediting the student credentials and equivalency with the accreditation authority involves inserting the transaction and equivalency certificates onto the blockchain.

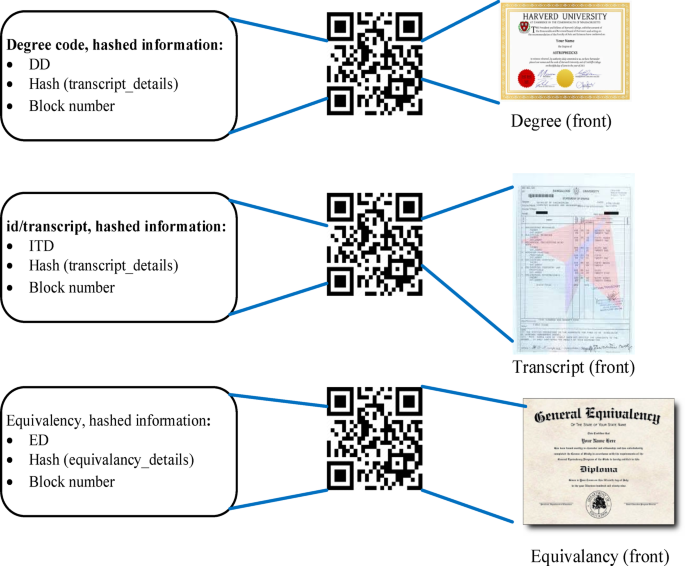

Distribution of the physical certificates

The hard copy certificates of the credentials and equivalency enable users to verify the information by scanning the QR codes printed on the certificates and using the CE_MMR_root on the blockchain. The content of the degree certificate is validated using the first QR code, the DD_code. The second QR code, denoted ITD_code, is used to verify the student’s identity and transcript information. The third QR code, denoted EC_code, is used to verify the student’s degree equivalency. Figure 5 depicts the issuance of physical certificates.

Issuance of physical certificates.

Verification of the credential and equivalency certificates

The procedures for verifying the identity/transcript data, equivalency certificates, and degree contents are identical. The content of the degree can be checked by using a verification application to scan the DD_code, which can also be included in resumes or printed on physical degree documents. The employer’s app first computes DD from the raw text of the degree data in the DD_code. After that, the DD and ITD are hashed and concatenated. To reconstruct the Merkle tree and produce CE_MMR_root, the result is iteratively merged with the relevant sibling nodes and hashed. The application then uses the block code and transaction label in degree_code to query the Cerberus++ blockchain and determine whether the computed CE_MMR_root matches the CE_MMR_root recorded there. If the two match, the degree details are confirmed as authentic. Transcript data and equivalency certificates are verified in the same manner.

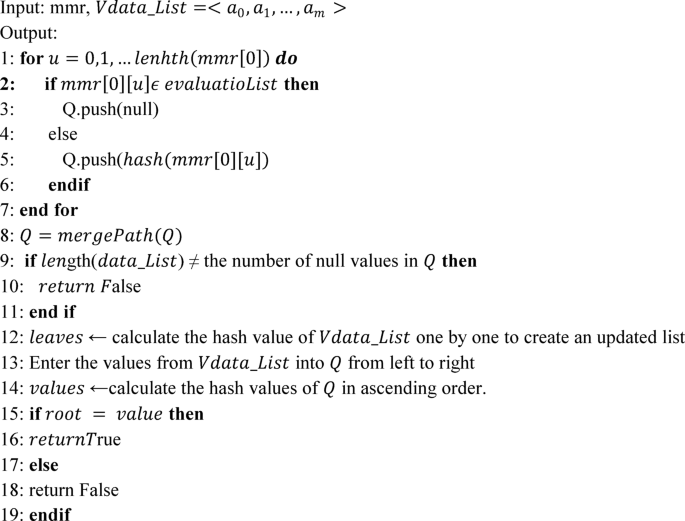

A proof path in the MMR makes it possible to verify that a particular data item, such as a degree record or transaction, is included in the wider dataset of the MMR without downloading or hashing the whole range. Rather than going through the full structure, we can create and provide a proof path that can be cross-checked against the root hash of the MMR. The algorithm shows the process of generating proof paths and verifying them. Here, a proof path is constructed for a list of data items (Vdata_List). This algorithm aims to generate a set of hashes that can be used to prove the existence of specific data entries from Vdata_List in the MMR.

Equivalency verification can benefit from adapting the algorithm used to generate auxiliary proof paths in the MMR. This is especially true when verifying degree certifications, course credits, or transcript information against an established system or authority. In this case, the data elements in \(Vdata\_List\) would include the following: course records, degree information, grade point average (GPA), and cumulative GPA (CGPA). These details are verified against the existing MMR-stored academic database. The proof path demonstrates that the degree or course specifics are genuine and unaltered in the system. The method determines whether any entry in the \(evaluatioList\) matches the current MMR node (\(mmr\left[0\right]\left[u\right]\)) in order to provide validated degree or course data. If the item is in the list, null is added to the proof path (Q.push(null)), which indicates that the degree or course data is validated directly. If the data item is not available in the \(evaluatioList\), the node’s hash is created and pushed to the proof path, guaranteeing that the proof path can still be validated by rebuilding the root hash. After all pertinent nodes have been processed, the final verification chain is formed by merging the proof path.
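The leaf-level logic described above can be sketched as follows: entries the verifier already holds are marked with `None` (the `null` in the text), and every other leaf is shipped as a hash so that the root can be rebuilt. This is a simplified illustration over a single binary Merkle tree with SHA-256 as a stand-in hash. The function names are hypothetical, and the verifier's items are assumed to be supplied in leaf order.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()  # stand-in for the extended MD5 hash


def root_from_leaves(leaf_hashes):
    level = list(leaf_hashes)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # assumption: duplicate the last node on odd levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def generate_proof(all_items, vdata_list):
    """Push None for items in vdata_list, and the leaf hash for everything else."""
    return [None if item in vdata_list else h(item.encode()) for item in all_items]


def verify_proof(proof, vdata_list, trusted_root):
    """Fill each None slot with the hash of the verifier's own data and rebuild the root."""
    own_items = iter(vdata_list)
    leaves = []
    for entry in proof:
        if entry is None:
            item = next(own_items, None)
            if item is None:
                return False  # proof expects more items than the verifier supplied
            leaves.append(h(item.encode()))
        else:
            leaves.append(entry)
    return root_from_leaves(leaves) == trusted_root


records = ["Degree: BSc", "Course: CS101", "GPA: 3.4", "CGPA: 3.5"]  # hypothetical data
trusted = root_from_leaves([h(r.encode()) for r in records])
to_check = ["Course: CS101", "GPA: 3.4"]
proof = generate_proof(records, to_check)
print(verify_proof(proof, to_check, trusted))
print(verify_proof(proof, ["Course: CS101", "GPA: 4.0"], trusted))
```

A production proof would normally send interior subtree hashes instead of every unrelated leaf hash, which keeps the proof size logarithmic in the number of leaves.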

This proof path consists of all the hashes needed to confirm that the degree or course data is in the database, and it is used to check the educational data against the trusted MMR-based academic database. In general, users must validate the entire data collection to confirm the accuracy of the stored data, which adds a substantial processing burden. Real-time requirements and constrained network computing resources are common in practical applications. A sampling-based verification technique can reduce the computational burden while maintaining data integrity. The verifier does not need to validate each data item separately to confirm the legitimacy of all the data committed to the MMR. Rather, a random sample of data items is picked each time. Fibonacci, arithmetic, and exponential sampling are a few examples of sampling techniques.
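One possible reading of the three sampling schemes is that each selects leaf positions whose spacing follows an arithmetic, exponential, or Fibonacci progression. The sketch below illustrates that interpretation only; the step size, base, and starting positions are assumptions, and a random offset could be added to vary the sample between rounds.

```python
def arithmetic_indices(n, start=0, step=3):
    """Positions start, start + step, start + 2*step, ... below n."""
    return list(range(start, n, step))


def exponential_indices(n, base=2):
    """Positions 1, base, base^2, ... (converted to 0-based) below n."""
    out, i = [], 1
    while i - 1 < n:
        out.append(i - 1)
        i *= base
    return out


def fibonacci_indices(n):
    """Positions 1, 2, 3, 5, 8, ... (converted to 0-based) below n."""
    out, a, b = [], 1, 2
    while a - 1 < n:
        out.append(a - 1)
        a, b = b, a + b
    return out


leaf_count = 20  # hypothetical number of data items committed to the MMR
print(arithmetic_indices(leaf_count))
print(exponential_indices(leaf_count))
print(fibonacci_indices(leaf_count))
```

Exponential and Fibonacci spacing check far fewer items than arithmetic spacing as the dataset grows, trading coverage for lower verification cost.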