In 2017, Savannah Tice attended the NeurIPS machine learning conference in Long Beach, California, hoping to learn techniques she could use in her doctoral research on electron identification. Instead, she returned to Yale with a changed view of the world.

At NeurIPS, she listened to a talk by artificial intelligence researcher Kate Crawford, who discussed bias in machine learning algorithms. Crawford mentioned new research showing that facial recognition technology, which uses machine learning, had picked up gender and racial biases from its datasets: women of color were 32% more likely to be misclassified by the technology than white men.

The study, published as a master's thesis by Joy Adwoa Buolamwini, became a landmark in the world of machine learning, revealing how seemingly objective algorithms can make errors based on incomplete datasets. And it was a turning point for Tice, who had come to machine learning through physics.

“I didn't even know that before,” says Tice, now an associate research scientist at Columbia University's Data Science Institute. “These are technology issues and we didn't know something like this was happening.”

After earning her Ph.D., Tice focused her research on the ethical implications of artificial intelligence in science and society. Such studies often focus on direct effects on people, which can seem entirely separate from, for example, algorithms designed to identify the signature of the Higgs boson in particle collisions against a pile of noise.

However, these issues are also intertwined with the study of physics. Algorithmic biases can affect physics results, especially when machine learning techniques are used inappropriately.

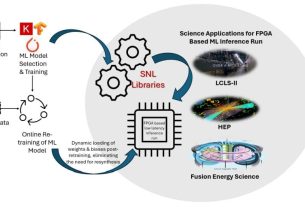

And research conducted for physics is unlikely to stay within physics. By advancing machine learning technology in science, physicists are also contributing to its improvement in other fields. “When you're in a fairly scientific context and you're thinking, 'Oh, we're building these models to do better physics research,' that's a far cry from social impact,” Tice says. “But really it's all part of the same ecosystem.”

Trust the model

In traditional computer models, a human tells a program each parameter it needs to know in order to make a decision. For example, the information that protons are lighter than neutrons helps the program distinguish between the two types of particles.

Machine learning algorithms, on the other hand, are programmed to learn their own parameters from the data they are given. An algorithm can come up with millions of parameters, each with its own “phase space,” the set of all possible values of that parameter.

Algorithms do not treat all phase spaces the same way. They weight them differently depending on how useful each is for the task the algorithm is trying to accomplish. Since this weighting is not determined directly by humans, it is easy to imagine that making decisions algorithmically could be a way to remove human bias. However, humans still add input to the system in the form of the datasets that algorithms are trained on.

In her paper, Buolamwini analyzed an algorithm that created its facial recognition parameters from a dataset consisting primarily of photos of white, mostly male people. Because the algorithm had seen a wide variety of examples of white men, it was able to come up with a suitable rubric for telling them apart. It had fewer examples of people of other ethnicities and genders, so it did a poorer job of differentiating between them.
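A disparity of this kind is usually reported by splitting a model's predictions by group and comparing error rates. The following is a minimal sketch of that bookkeeping, not the method used in the study: the function names, the group labels "A" and "B", and the toy labels are all invented for illustration.

```python
from collections import defaultdict


def error_rate_by_group(y_true, y_pred, groups):
    """Return {group: fraction of misclassified examples in that group}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        if truth != pred:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}


def relative_error(rates, group, reference):
    """Ratio of one group's error rate to a reference group's error rate."""
    if rates[reference] == 0:
        raise ValueError("reference group has no errors; ratio is undefined")
    return rates[group] / rates[reference]


# Toy data (hypothetical): group "A" is well represented, group "B" is not.
y_true = [1, 1, 0, 0, 1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0, 0, 0]
groups = ["A"] * 8 + ["B"] * 4

rates = error_rate_by_group(y_true, y_pred, groups)
ratio = relative_error(rates, "B", "A")
print(rates, ratio)
```

In this toy example the overall accuracy (9 of 12 correct) hides the gap between the groups, which is why the per-group breakdown is worth computing.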

Facial recognition technology can be used in a variety of ways. It can verify someone's identity, for example; many people use it every day to unlock their smartphones. Buolamwini cites other examples in her paper, including “developing more empathetic human-machine interactions, monitoring health conditions, and finding missing people and dangerous criminals.”

When facial recognition technology is used in these situations and does not work equally well for everyone, the negative effects can range from the frustration of being denied access to something useful, to the risk of being misdiagnosed in a medical setting, to the fear of being mistakenly identified and arrested. “Characterizing how a model performs across phase space is a scientific and ethical issue,” Tice says.
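One simple way to characterize performance across a range of inputs is to bin examples by some input variable and report accuracy, along with the number of examples, in each bin. The sketch below assumes a single continuous feature and a list of per-example correctness flags; the function name, the feature values and the bin edges are hypothetical.

```python
def accuracy_by_bin(feature, correct, edges):
    """Return a (count, accuracy) pair for each bin [edges[i], edges[i+1]).

    Accuracy is None for empty bins. Values outside the edges are ignored.
    """
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    hits = [0] * n_bins
    for x, ok in zip(feature, correct):
        for i in range(n_bins):
            if edges[i] <= x < edges[i + 1]:
                counts[i] += 1
                hits[i] += int(ok)
                break
    return [(c, h / c if c else None) for c, h in zip(counts, hits)]


# Toy data (hypothetical): an input variable in arbitrary units and a flag
# saying whether the model classified each example correctly.
feature = [0.5, 1.5, 1.8, 2.2, 2.9, 3.5]
correct = [True, True, False, True, True, False]
edges = [0, 1, 2, 3, 4]

report = accuracy_by_bin(feature, correct, edges)
for (lo, hi), (count, acc) in zip(zip(edges, edges[1:]), report):
    print(f"[{lo}, {hi}): n={count}, accuracy={acc}")
```

Reporting the count next to the accuracy matters: a bin with very few examples says little about how the model behaves there, which is itself a finding.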

Cosmologist Brian Nord has been thinking about this for years. He started using machine learning in his work in 2016, when he and his colleagues realized that machine learning models could classify astronomical objects observed through telescopes. Nord was particularly interested in algorithms that could decipher the strangeness of light bending around celestial objects, a phenomenon known as gravitational lensing. Because such models excel at classifying items based on existing data, they can identify stars and galaxies in images much more accurately than humans can.

But Nord, a scientist in Fermilab's AI Project Office and Cosmic Physics Center, says other uses of machine learning in physics are far less reliable. While traditional programs have a limited number of parameters that physicists can manually tune to get the correct result, machine learning algorithms use millions of parameters that often do not correspond to real physical properties, making it impossible for physicists to correct them. “Based on the idea of statistics in physics, there is no surefire way to interpret errors resulting from AI methods,” says Nord. “It's something that doesn't exist yet.”

If physicists are not aware of these issues, they may use their models for purposes beyond their capabilities and compromise their results.

Nord is working to advance machine learning capabilities that support every step of the scientific process, from identifying testable hypotheses and improving telescope design to simulating data. He envisions a not-too-distant future in which the physics community can conceive, design, and execute large-scale projects in far less time than the decades such experiments take today.

However, Nord is also acutely aware of the potential pitfalls in moving machine learning technology forward. The image recognition algorithms that allow cosmologists to distinguish between galaxy clusters and black holes are the same techniques that can be used to identify faces in a crowd, Nord points out.

“If we're using these tools to do science, and we want to fundamentally improve them to do science, there's a very good chance that we can improve the tools in other places where it's applied, too,” says Nord. “I'm basically building technology to monitor myself.”

Responsibility and opportunity

Physics is behind one of the most famous scientific and ethical dilemmas: the creation of nuclear weapons. Since the days of the Manhattan Project, the government research program to build the first atomic bomb, scientists have debated the extent to which involvement in the science behind these weapons equates to responsibility for their use.

Joseph Rotblat, a physicist who withdrew from the Manhattan Project, appealed directly to the ethical sensibilities of scientists in his 1995 Nobel Peace Prize acceptance speech. “At a time when science plays such a powerful role in social life, and when the fate of humanity as a whole may depend on the results of scientific research, all scientists have a duty to fully recognize their role and act accordingly,” Rotblat said.

“Doing fundamental work and advancing the frontiers of knowledge is often done without much thought to the impact of our work on society,” he said.

Tice says she sees the same pattern repeating itself among physicists working on artificial intelligence today. There are few moments in the scientific process when physicists can stop and consider their work in a larger context.

As physicists increasingly learn about machine learning alongside physics, Tice says, they should also be exposed to ethical frameworks. That can happen through conferences, workshops and online training materials.

This summer, Kazuhiro Terao, staff scientist at SLAC National Accelerator Laboratory, hosted the 51st SLAC Summer Institute with the theme “Artificial Intelligence in Fundamental Physics.” He invited speakers on topics such as computer vision, anomaly detection, and symmetry. He also invited Tice to speak about ethics.

“For us, it's important to go beyond just the hype about AI and learn what it can do and what biases it has,” Terao says.

Research on the ethics of artificial intelligence can teach physicists ways of thinking that are also useful in physics, Terao said. For example, learning more about bias in machine learning systems can encourage healthy scientific skepticism about what such systems are actually capable of doing.

Ethics research also provides an opportunity for physicists to improve the use of machine learning across society. Physicists can use their technical expertise to educate the public and policy makers about the technology and its uses and impacts, Nord said.

And physicists have a unique opportunity to improve the science of machine learning itself, Tice says. This is because, unlike facial recognition data, physical data is highly controlled and the amount of data is enormous. Physicists know what kinds of biases exist in experiments and know how to quantify them. That makes physics as a field a perfect “sandbox” to learn to build models that avoid bias, Tice says.

But that only happens if physicists incorporate ethics into their thinking. “We need to think about these questions,” Tice says. “We can't escape the conversation.”