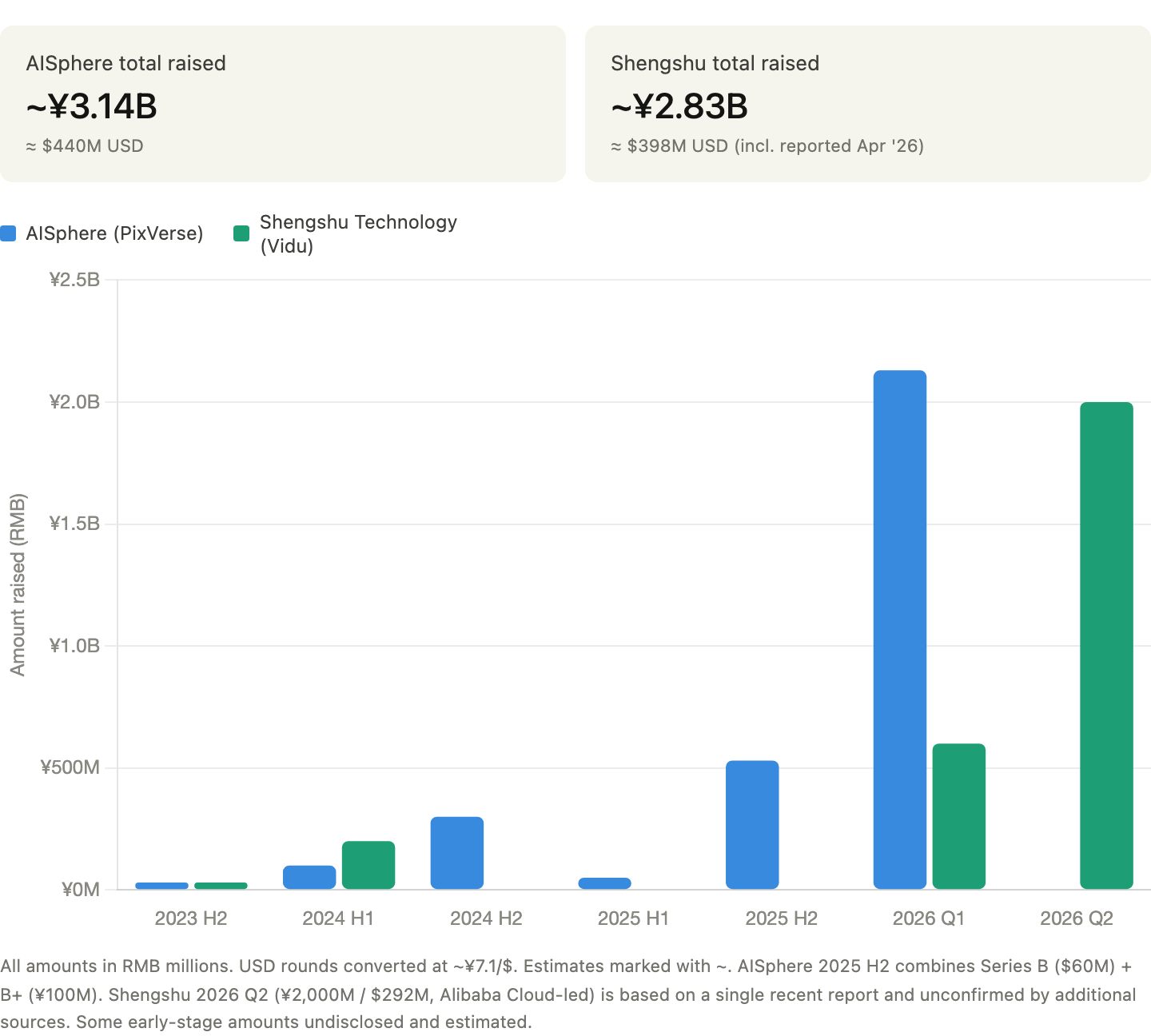

Within four weeks this spring, two Chinese AI startups each raised roughly $300 million to build video generation technology.

AISphere, the developer of PixVerse, completed a $300 million Series C in March led by CDH Investments. Days later, ShengShu Technology, the Beijing-based startup behind the Vidu video generator, secured $290 million in a Series B round led by Alibaba Cloud.

Both companies are betting that video generation is not the destination. It is the gateway to world modeling, which many believe is the next paradigm for AI. And both are intertwined, in different ways, with the same company, one that is at once their backer and their competitor: Alibaba.

AISphere and its PixVerse platform are the more consumer-oriented of the two. Founded in April 2023 by Wang Changhu, a former executive at Microsoft Research Asia and ByteDance, AISphere launched PixVerse to users worldwide in January 2024. The platform lets creators generate videos from text prompts and images, and it caught fire through viral templates, including the “Venom Transform” effect, racking up more than 1 billion views in late 2024.

As of its Series C, PixVerse had more than 100 million users in 177 countries, 16 million monthly active users, and more than $40 million in annual recurring revenue, according to the company.

The company calls PixVerse “Canva for video generation.” Canva wins by making design so simple that non-designers no longer need to hire a designer. PixVerse is making the same bet on video. The company claims its latest C1 model delivers movie-grade quality and can convert storyboards directly to video, and PixVerse V5.6 ranks among the top 10 video generators on the Artificial Analysis leaderboard.

Although the output leans more toward animation than photorealism, the motion quality and scene consistency are truly impressive. Some of their best generations offer something that’s hard to quantify: a creative, artistic quality that catches you off guard.

Its more ambitious model is R1, a real-time interactive world model launched in January 2026. According to the company, users can enter commands during video playback, such as changing the lighting, replacing backgrounds, or redirecting characters, with a response delay of about 2 seconds and output at 1080p.

After R1’s launch, the company’s co-founder said that most inbound interest from businesses was coming from the gaming industry. That is reflected in the investor lineup of AISphere’s Series C, which includes film and TV content company Ruyi Holdings and gaming company 37 Interactive Entertainment.

In contrast, Vidu plays a more technical and enterprise-focused game. ShengShu was founded in March 2023 by Tsinghua University professor Zhu Jun, who serves as the principal researcher. The company launched Vidu globally before OpenAI made the now-shuttered Sora widely available. The latest model, Vidu Q3 Pro, supports up to 16 seconds of synchronized audio and video generation with multi-shot compositing and camera control. The company reported that both user numbers and revenue grew more than tenfold in 2025, but did not provide specific figures.

While AISphere’s R1 targets the intersection of video and gaming, ShengShu’s goals lean toward embodied intelligence. The company has developed Motus, an embodied AI model designed to help robots perform actions. The $290 million will fund a general-purpose world model that bridges generated video with real-world use cases such as industrial automation and robotics. Founder Zhu Jun explained that the goal is to connect perception and action and to build an AI that can consistently model and predict real-world behavior.

In my view, AISphere resembles MiniMax in its consumer-first, product-driven growth strategy, while ShengShu more closely mirrors Zhipu in its academic origins and enterprise focus.

AISphere and ShengShu are both betting on world models, but applying them to different purposes.

The easiest way to understand a world model is to contrast it with how an LLM works. An LLM predicts the next token. A world model predicts what will happen next in the world: how objects will move, how physics will behave, and how causal relationships will play out over time. Today’s video generators fall short of that. This is why, in current AI-generated clips, a collar disappears when a dog runs behind a couch, or a loveseat turns into a couch mid-shot. The model does not have a stable internal representation of the scene. It makes a statistically plausible guess for each frame.
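To make the disappearing-collar example concrete, here is a toy Python sketch. It is not how PixVerse, Vidu, or any real video model works. It assumes a made-up six-step scene in which a couch hides the dog for three steps, and every name in it (`HIDDEN_STEPS`, `render`, `frame_level_rollout`, `state_level_rollout`) is hypothetical, invented only for illustration. The only point is the difference between deriving each frame from the previous frame’s pixels and deriving it from a persistent scene state.

```python
# Toy illustration only: a six-step clip in which a couch hides the dog.
HIDDEN_STEPS = {2, 3, 4}  # assumed steps where the couch blocks the dog


def render(has_collar, step):
    """Return what the camera shows at this step."""
    visible = step not in HIDDEN_STEPS
    return {"dog_visible": visible, "collar_visible": visible and has_collar}


def frame_level_rollout(steps):
    """Each new frame is guessed from the previous frame's pixels alone."""
    frames = [render(True, 0)]  # the clip starts with a collared dog in view
    for step in range(1, steps):
        # The only evidence for the collar is whether it was visible last frame.
        collar_guess = frames[-1]["collar_visible"]
        frames.append(render(collar_guess, step))
    return frames


def state_level_rollout(steps):
    """Each frame is rendered from a scene state that persists when hidden."""
    state = {"has_collar": True}  # internal representation, not pixels
    return [render(state["has_collar"], step) for step in range(steps)]


if __name__ == "__main__":
    print("frame-level:", frame_level_rollout(6)[-1])
    print("state-level:", state_level_rollout(6)[-1])
```

In this toy, the frame-level rollout brings the dog back from behind the couch without its collar, because the collar was not visible in the frames it copied from. The state-level rollout keeps the collar, because the collar lives in the scene state rather than in the last frame.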

The world-model thesis, that video is a training ground for AI that understands physical reality, has attracted heavyweight believers. Yann LeCun left Meta to pursue it. Google DeepMind’s Genie 3 simulates 3D worlds in real time. Nvidia’s Cosmos platform was trained on 20 million hours of real-world data to support physical AI development. Companies that have already spent years building video generation infrastructure are naturally positioned to make this leap.

This is where the story gets interesting. Alibaba Cloud was the lead investor in ShengShu’s $290 million round. Alibaba also led AISphere’s $60 million Series B in September 2025. This means Alibaba is a major backer of two of China’s best-funded video AI startups.

But the e-commerce and cloud giant is also building and deploying its own video model internally. Last week, the company’s newly formed AI arm Alibaba Token Hub (ATH) released a video model called HappyHorse-1.0. The model debuted anonymously on the Artificial Analysis benchmark and rose to the top of both text-to-video and image-to-video rankings, sparking a wave of speculation about its origins before Alibaba confirmed ownership.

The competition here is essentially Alibaba vs. ByteDance. ByteDance’s Seedance 2.0 had been the dominant model on video AI leaderboards, and HappyHorse was the first model to challenge and displace it. In my early testing, HappyHorse’s photorealistic visual output is a clear step forward, but Seedance 2.0 has better audio-visual consistency and multi-shot camera control. More awkward for the Alibaba-backed video startups: HappyHorse outperformed both Vidu and PixVerse on the benchmark.

This mirrors the LLM ecosystem in China. There, Alibaba’s open-source Qwen family has long competed with ByteDance’s Seed series for developer mindshare, even as Alibaba backs major LLM startups such as Zhipu AI, MiniMax, and Moonshot AI. In both cases, Alibaba’s strategy combines in-house model development with external investment in the most promising labs. It’s a dual-track approach that keeps the company relevant at every layer of the stack, regardless of which specific model wins.

Alibaba Cloud’s revenue grew 36% last quarter, driven by AI workloads, according to the company’s fiscal Q3 2026 earnings call in March. Video generation is one of the most compute-intensive workloads. Every inference request and every API call from a PixVerse or Vidu user represents GPU time, which translates directly into revenue for Alibaba when those companies run on Alibaba Cloud.

The global AI video landscape shows the same pattern. Runway raised $315 million in February 2026 at a valuation of $5.3 billion. AISphere raised $300 million in March at a valuation of more than $1 billion. ShengShu raised $290 million in April at an undisclosed valuation. That is three companies across the US and China closing three rounds within eight weeks, all within $25 million of one another.

In LLMs, the gap between Chinese and U.S. companies is real, shaped by the first-mover advantage that OpenAI and Anthropic have built through years of basic research, by U.S. export restrictions on advanced chips, and by differences in how accustomed users are to paying for subscription-based services. Video generation is another story.

Chinese labs entered the race at the same time as, and in some cases earlier than, their Western counterparts. Vidu launched worldwide before Sora was widely available. Kuaishou’s Kling AI has consistently performed competitively in benchmarks, generating $150 million in full-year 2025 revenue and exceeding $300 million in ARR by January 2026.

Part of what makes this possible is structural. China has the world’s most sophisticated short-video ecosystem, with hundreds of millions of daily active users who have been consuming, producing, and sharing short videos for nearly a decade. That provides both a huge pool of training data and a consumer base ready to embrace AI video tools. The regulatory environment also helps. Compared with the United States and Europe, China’s relaxed approach to intellectual property and copyright leaves more room to train on existing content and generate derivative works without the legal friction that has slowed some Western labs.

The field is also becoming increasingly specialized. A year or two ago, frontier LLM companies on both sides of the Pacific, such as OpenAI, Zhipu AI, and MiniMax, were hedging their bets by developing video generation models in parallel with their core LLMs. Since then, most have retreated to focus on what they do best. The companies that stayed with video have become considerably more aggressive.

What remains to be seen is whether the world model will become the integration layer that both camps have been aiming for, or whether the video and physical AI tracks will diverge further, creating different winners for each application.

Either way, Chinese video AI companies have earned their place at the table.