We already live in a world where virtual assistants can converse with people seamlessly (even seductively), but Apple's virtual assistant, Siri, struggles with some basic functions.

For example, when I asked Siri when the Olympics were taking place this year, she quickly responded with the correct date for the Summer Olympics. But when I followed up with “Add it to my calendar,” the virtual assistant replied, “What should we name it?” The answer would be obvious to a human, but Apple's virtual assistant was confused. When I answered, “The Olympics,” Siri responded, “When should we schedule it for?”

Siri's lack of contextual awareness has hampered her ability to carry on a conversation the way a human would, but that may change on June 10, the first day of Apple's annual Worldwide Developers Conference. The iPhone maker is expected to unveil its next mobile operating system, likely to be called iOS 18, which will reportedly bring big changes to Siri.

Apple's virtual assistant made waves when it debuted with the iPhone 4S in 2011. For the first time, people could talk to their phones and get human-like responses. Some Android smartphones offered basic voice search and voice control before Siri, but they were widely considered command-based and unintuitive.

Siri represented a breakthrough in voice-based interaction and laid the foundation for subsequent voice assistants such as Amazon's Alexa, Google Assistant, and even OpenAI's ChatGPT and Google's Gemini chatbot.

Out goes Siri, in comes the multimodal assistant

Siri wowed people with its voice-based experience in 2011, but some believe its capabilities have since fallen behind those of rival products. Alexa and Google Assistant are better at understanding and answering questions, and both have made inroads into the smart home in different ways than Siri has. Those rivals have faced similar criticism, but Siri in particular doesn't seem to be living up to its potential.

In 2024, Siri also faces a dramatically different competitive landscape, one intensified by generative AI. In recent weeks, OpenAI, Google, and Microsoft have unveiled a new wave of futuristic virtual assistants with multimodal capabilities that pose a competitive threat to Siri. New York University professor Scott Galloway said in a recent podcast that these updated chatbots will be “Alexa and Siri killers.”

Scarlett Johansson and Joaquin Phoenix attended the premiere of “Her” at a film festival in 2013. Fast forward to 2024, and Johansson is accusing OpenAI of copying her voice for a chatbot without her permission.

Earlier this month, OpenAI unveiled its latest AI model, GPT-4o, and the announcement highlighted just how far virtual assistants have come. In a demo in San Francisco, OpenAI showed that GPT-4o can hold two-way conversations in a human-like way. It can change its tone of voice, make sarcastic remarks, whisper, and even flirt. The technology was quickly compared to Scarlett Johansson's character in the 2013 Hollywood drama “Her,” about a lonely writer who falls in love with a virtual assistant voiced by Johansson. After the GPT-4o demo, the American actor accused OpenAI of creating a voice for its virtual assistant that sounded eerily similar to hers without her permission. OpenAI said the voice was not intended to resemble Johansson's.

The controversy appears to have overshadowed some of GPT-4o's features, such as its native multimodality: the ability of an AI model to understand and respond to inputs that include not only text but also images, spoken language, and even video. In practice, GPT-4o can chat with users about photos they upload, explain what's happening in a video clip, and discuss news articles.

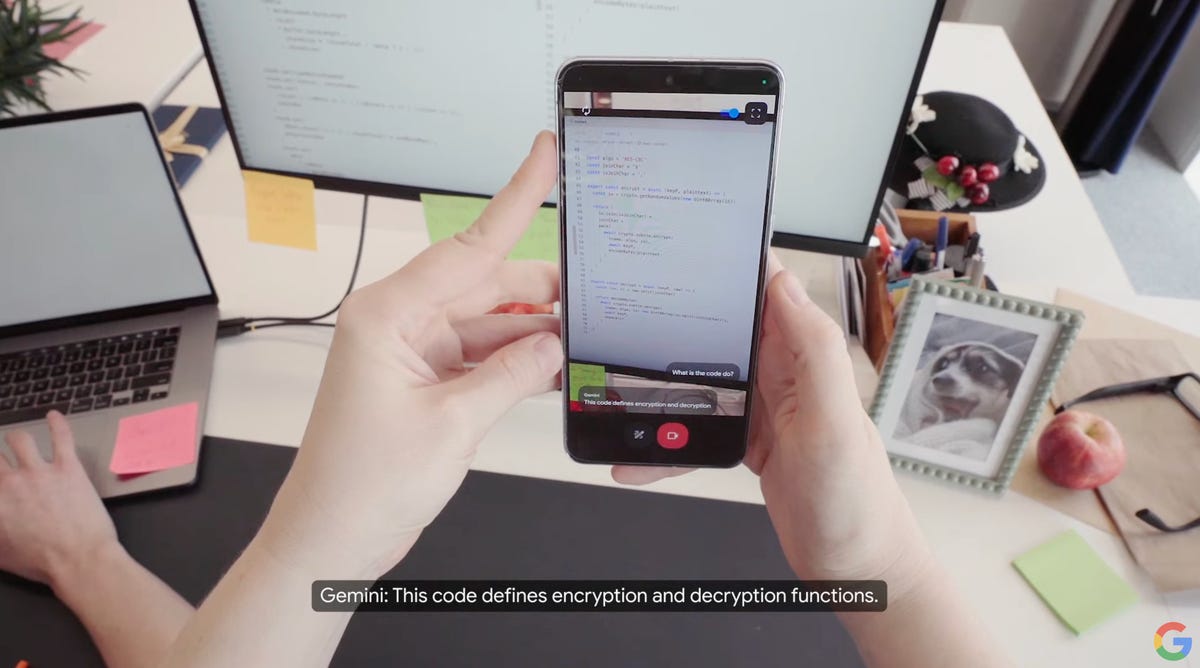

The day after OpenAI's preview, Google showed off its own multimodal demo, unveiling Project Astra, a prototype it calls “the future of AI assistants.” The demo video detailed how a user could use their smartphone camera to show Google's virtual assistant their surroundings and discuss objects in that environment. For example, a person interacting with Astra in what appears to be Google's London offices asked Google's virtual assistant to identify an object in the room that was making a sound. In response, Astra pointed out a speaker placed on a desk.

Google demonstrated Astra on a phone and in a pair of camera glasses.

Google's Astra prototype can not only understand its surroundings but also remember details. When the narrator asks where they left their glasses, Astra recalls where it last saw them and responds, “On the corner of the desk, next to the red apple.”

The race to build flashy virtual assistants doesn't end with OpenAI and Google. Elon Musk's AI company xAI is working to give its Grok chatbot multimodal capabilities, according to public developer documents, and Amazon said in May it was working on giving Alexa, its decade-old virtual assistant, a generative AI upgrade.

Will Siri become multimodal?

Multimodal conversational chatbots currently represent the state of the art in AI assistants, and could offer a glimpse into the future of how we interact with our phones and other devices.

Apple still doesn't have a digital assistant with multimodal capabilities, which puts it behind the curve. But the iPhone maker has published research on the subject. In October, it described Ferret, a multimodal AI model that can understand what's happening on your phone's screen and perform different tasks based on what it sees. In the paper, researchers explore Ferret's ability to identify and report what you're looking at, help you navigate between apps, and more. The research points to a future that could completely change the way we use iPhones and other devices.

Apple's research explores a multimodal AI model called Ferret that could help users navigate apps, handling basic tasks as well as more advanced ones, such as providing detailed descriptions of what's on the screen.

Where Apple could excel is privacy. The iPhone maker has long made privacy a core value in designing its products and services, and it plans to pitch the new version of Siri as a more privacy-conscious alternative to its competitors, according to The New York Times. Apple plans to achieve this by processing Siri requests on-device and turning to the cloud for more complex tasks, which The Wall Street Journal reports will be handled in data centers equipped with Apple chips.

As for chatbots, Bloomberg reports that Apple may have inked a deal with OpenAI to bring ChatGPT to iPhones, which suggests that Siri may not compete directly with ChatGPT or Gemini. According to The New York Times, Apple will focus on making Siri better at tasks it can already perform, rather than on tasks like writing poetry.

As part of a demo at WWDC 2012, Scott Forstall, Apple's senior vice president of iOS software, asked Siri to look up a baseball player's batting average.

How will Siri change? All eyes on Apple's WWDC

Apple has traditionally been deliberate about launches, preferring a wait-and-see approach to emerging technologies. This strategy has often worked, but not always. For example, the iPad wasn't the first tablet, but it's the best tablet for many people, including CNET editors. Meanwhile, Apple's smart speaker, the HomePod, arrived on the market several years after the Amazon Echo and Google Home but never caught up with its rivals' market share. A more recent example on the hardware side is the foldable smartphone, where Apple remains the only major holdout. All its major rivals, including Google, Samsung, Honor, Huawei, and even lesser-known companies like Phantom, are ahead of Apple.

Avi Greengart, principal analyst at Techsponential, said that in the past, Apple has taken the approach of updating Siri on a regular schedule.

“Apple has always been more programmatic with Siri than Amazon, Google or Samsung,” Greengart said. “Apple seems to be adding batches of information to Siri, such as sports one year and entertainment the next.”

When it comes to Siri, Apple is widely expected to play catch-up rather than break new ground this year. Still, Siri is likely to be a major focus of Apple's next operating system, iOS 18, which is rumored to include new AI features. According to Bloomberg, Apple is expected to show off further AI integration into existing apps and features, including Notes, emoji, photo editing, Messages, and Mail.

Siri can answer health-related questions on Apple Watch Series 9 and Ultra 2.

Siri itself is expected to evolve into a more intelligent digital helper this year. In an October edition of Bloomberg's Power On newsletter, Mark Gurman reported that Apple is training the voice assistant with large language models to improve its ability to answer questions more accurately and with more sophistication.

With the integration of large language models, the technology behind ChatGPT, Siri is poised to become a more context-aware and powerful virtual assistant, able to understand more complex and nuanced questions and provide accurate responses. According to The New York Times, this year's iPhone 16 lineup will have more memory to support new Siri features.

“We'd love to see Apple use generative AI to give Siri the ability to act as a more thoughtful assistant that understands what you're trying to ask and uses its database system for data-driven answers,” Techsponential's Greengart told CNET.

Siri could also be better at completing multi-step tasks: A September report from The Information detailed how Siri could respond to a simple voice command to perform a more complex task, like turning a series of photos into a GIF and sending it to one of your contacts. This would be a big step forward in Siri's capabilities.

“Because Apple is also defining how iPhone apps work, there's the ability for Siri to work across multiple apps, with developer permission, and potentially new capabilities for a smarter Siri to safely perform tasks on your behalf,” Greengart said.

Editor's note: CNET has used its AI engine to create dozens of articles, which are labeled accordingly. The note you're reading is attached to articles that deal substantively with the topic of AI but are written entirely by our expert editors and writers. For more information, see our AI policy.