What is overfitting in machine learning?

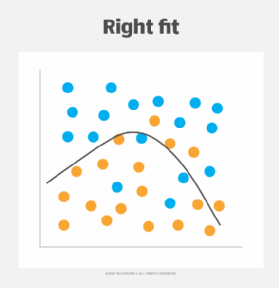

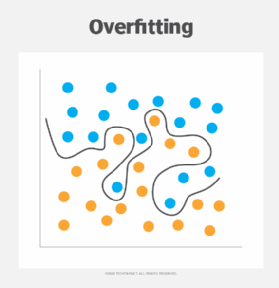

Overfitting in machine learning occurs when a model fits the training data too closely, capturing both relevant patterns and unimportant noise, which results in inaccurate predictions on new data. Simpler models are less susceptible to noise and extraneous patterns, and are more likely to generalize effectively to unseen data.

For example, imagine a company uses machine learning to select a few candidates for interview from a large set of resumes based solely on resume content. The model can consider relevant factors such as education, experience, and skills. However, an overfit model may also be overly picky about font choice and reject well-qualified applicants who use Helvetica instead of Times New Roman.

Why does overfitting occur?

Most factors contributing to overfitting lie in the model, the data, or the training method. When a machine learning model is too complex, it tends to memorize the training data rather than learn the relevant underlying patterns.

If your training data contains too much noise or your training data set is too small, your model won't have enough good data to distinguish between signal and noise. Even with optimized data and models, a model that is trained for too long will start learning noise, and the longer it is trained, the worse it will perform on new data. Another potential pitfall is repeatedly testing the model on the same validation data, which leads to implicit overfitting to that validation set.

Overfitting and underfitting

Underfitting is the opposite of overfitting, where the machine learning model doesn't fit the training data well enough to learn the patterns in the data. Using a model that is too simple for a complex problem can lead to underfitting.

In the example above where a company uses machine learning to evaluate resumes, an underfit model is too simplistic to capture the relationship between resume content and job requirements. For example, an underfit model might select every resume that contains certain keywords, such as Java and JavaScript, even if the position requires only JavaScript skills. The model focuses too heavily on the word Java alone, even though Java and JavaScript require completely different skills. As a result, it fails to detect good candidates in both the training data and new data.

How to detect overfitted models

One sign of an overfitted model is when it performs well on training data, but performs poorly on new data. However, there are other ways to test model performance more effectively.

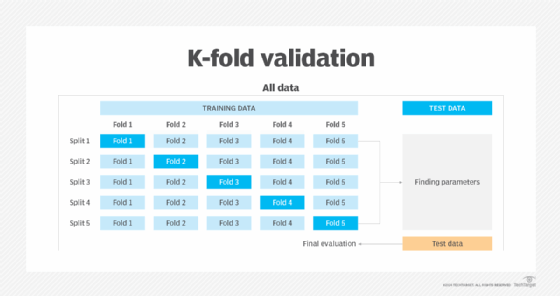

K-fold cross-validation is an essential tool when evaluating model performance. The training data is randomly divided into K subsets of equal size, called folds. One fold is reserved for validation and the model is trained on the remaining folds. The model is then evaluated on the reserved fold and its performance metrics are computed. This process is run K times, with a different fold serving as the validation fold in each iteration. The performance metrics are then averaged to obtain a single overall performance measurement for the model.
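The K-fold procedure can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the helper names (`k_fold_splits`, `fit_line`, `mse`, `cross_validate`), the straight-line model, and the synthetic data are all assumptions made for the example.

```python
import random
from statistics import mean

def k_fold_splits(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs for k shuffled folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for n, fold in enumerate(folds) if n != i for j in fold]
        yield train_idx, val_idx

def fit_line(xs, ys):
    """Ordinary least squares for y = a * x + b on one feature."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, y_bar - a * x_bar

def mse(model, xs, ys):
    a, b = model
    return mean((a * x + b - y) ** 2 for x, y in zip(xs, ys))

def cross_validate(xs, ys, k=5):
    """Train on k-1 folds, score on the held-out fold, and average the scores."""
    scores = []
    for train_idx, val_idx in k_fold_splits(len(xs), k):
        model = fit_line([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        scores.append(mse(model, [xs[i] for i in val_idx], [ys[i] for i in val_idx]))
    return mean(scores), scores

# Synthetic data (assumed for illustration): a noisy straight line.
rng = random.Random(42)
xs = [i / 10 for i in range(50)]
ys = [2 * x + 1 + rng.gauss(0, 0.1) for x in xs]

avg_mse, fold_mses = cross_validate(xs, ys, k=5)
print("per-fold MSE:", [round(s, 4) for s in fold_mses])
print("average MSE:", round(avg_mse, 4))
```

Every sample is used for validation exactly once, so the averaged score depends less on one lucky or unlucky split than a single train/validation split would.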

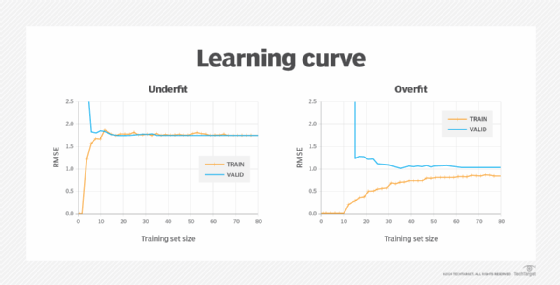

Learning curves offer another check. Two curves are generated for one analysis: one on the training data set to assess how the model is learning, and another on the validation set to assess how well the model generalizes to new data. Each curve plots a performance metric, such as error or accuracy, against the number of training data points.

As the dataset grows, patterns in the performance metrics begin to emerge. When the training and validation errors plateau, adding more data no longer changes the fit significantly. For an underfit model, the two curves converge close together at a high error value. For an overfit model, the training error is low, but a gap remains between the validation and training results, indicating that the model underperforms on the validation data.
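To make the two curve shapes concrete, the sketch below computes training and validation error at several training-set sizes for two deliberately extreme models. Everything here is an assumption chosen for illustration: the synthetic sine-wave data, a constant predictor standing in for an underfit model, and a 1-nearest-neighbor memorizer standing in for an overfit one.

```python
import math
import random
from statistics import mean

def make_data(n, rng):
    """Noisy samples of sin(x) on [0, 6] (synthetic, for illustration only)."""
    xs = [rng.uniform(0, 6) for _ in range(n)]
    ys = [math.sin(x) + rng.gauss(0, 0.3) for x in xs]
    return xs, ys

def fit_constant(xs, ys):
    """Underfit stand-in: always predict the mean training target."""
    c = mean(ys)
    return lambda x: c

def fit_nearest(xs, ys):
    """Overfit stand-in: memorize the data and return the nearest point's target."""
    pts = list(zip(xs, ys))
    return lambda x: min(pts, key=lambda p: abs(p[0] - x))[1]

def mse(predict, xs, ys):
    return mean((predict(x) - y) ** 2 for x, y in zip(xs, ys))

def learning_curve(fit, train, val, sizes):
    """Return (n, training error, validation error) for each training size n."""
    rows = []
    for n in sizes:
        xs, ys = train[0][:n], train[1][:n]
        model = fit(xs, ys)
        rows.append((n, mse(model, xs, ys), mse(model, *val)))
    return rows

rng = random.Random(0)
train, val = make_data(200, rng), make_data(200, rng)
sizes = [10, 25, 50, 100, 200]

for name, fit in [("constant (underfit)", fit_constant),
                  ("1-nearest neighbor (overfit)", fit_nearest)]:
    print(name)
    for n, train_err, val_err in learning_curve(fit, train, val, sizes):
        print(f"  n={n:3d}  train MSE={train_err:.3f}  validation MSE={val_err:.3f}")
```

The constant model's two errors end up close together but high, while the memorizing model reports zero training error with a clearly higher validation error, which is the gap described above.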

Organizations need to improve their models and data to prevent machine learning from overfitting.

Improve the model

Here we introduce some ways to refine and optimize your models to reduce the risk of overfitting in machine learning.

Simpler models

At the beginning of a project, it is important to understand the problem and choose the appropriate machine learning algorithm. Although cost evaluation and performance optimization are important, beginners should start with the simplest algorithms to avoid complexity and improve generalization. Simple algorithms such as K-means clustering and K-Nearest Neighbors allow for more direct interpretation and debugging.

Feature selection

In machine learning, a feature is an individual measurable property or characteristic of data that is used as input to train a model. Feature selection identifies the features that are most useful for training the model, thus reducing the dimensionality of the model.
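One simple way to do this is a filter method that ranks features by how strongly they correlate with the target. The sketch below is only one of many possible selection strategies. The feature names, the toy values, and the helpers `pearson` and `select_features` are hypothetical, loosely modeled on the resume example.

```python
from statistics import mean

def pearson(a, b):
    """Pearson correlation between two equal-length numeric lists."""
    a_bar, b_bar = mean(a), mean(b)
    cov = sum((x - a_bar) * (y - b_bar) for x, y in zip(a, b))
    var_a = sum((x - a_bar) ** 2 for x in a)
    var_b = sum((y - b_bar) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # a constant column carries no linear signal
    return cov / (var_a * var_b) ** 0.5

def select_features(columns, target, top_k):
    """Keep the top_k feature names ranked by absolute correlation with the target."""
    scores = {name: abs(pearson(values, target)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)[:top_k]

# Hypothetical resume features and a hypothetical interview score.
columns = {
    "years_experience": [1, 2, 3, 4, 5, 7],
    "skills_matched":   [2, 1, 4, 3, 6, 5],
    "uses_helvetica":   [1, 0, 0, 1, 0, 1],
}
interview_score = [1, 2, 3, 4, 5, 6]

selected = select_features(columns, interview_score, top_k=2)
print(selected)
```

Correlation only captures linear, one-feature-at-a-time relationships, so a filter like this is a starting point rather than a complete selection procedure.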

Regularization

As the model becomes more complex, the risk of overfitting increases. Regularization imposes constraints on that model during training to avoid complexity.

During the training process, the weights (coefficients) of a machine learning model are adjusted to minimize a loss function that represents the difference between the model's predicted output and the actual target value. The loss function can be expressed as:

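$$\min_{\vec{w}} L(\vec{w})$$

Regularization adds a new term, $\alpha \lVert \vec{w} \rVert$, to the loss function, and training then finds the set of weights that minimizes the combined objective:

$$\min_{\vec{w}} \; L(\vec{w}) + \alpha \lVert \vec{w} \rVert$$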

There are different ways to do this depending on the type of model.
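As a minimal sketch of the penalized objective, the code below compares two weight vectors that fit a tiny data set equally well. The choice of mean squared error for the loss and the Euclidean (L2) norm for the penalty are assumptions, since the text leaves both unspecified. The function names are hypothetical.

```python
import math

def mse_loss(weights, rows, targets):
    """Mean squared error of a linear model with no intercept."""
    errors = [sum(w * x for w, x in zip(weights, row)) - y
              for row, y in zip(rows, targets)]
    return sum(e * e for e in errors) / len(errors)

def l2_norm(weights):
    return math.sqrt(sum(w * w for w in weights))

def regularized_loss(weights, rows, targets, alpha):
    """Loss plus alpha times the weight norm (assumed L2 here)."""
    return mse_loss(weights, rows, targets) + alpha * l2_norm(weights)

# Two identical feature columns, so many weight vectors fit perfectly.
rows = [(1.0, 1.0), (2.0, 2.0)]
targets = [2.0, 4.0]
small, large = (1.0, 1.0), (5.0, -3.0)

for weights in (small, large):
    print(weights,
          "loss:", mse_loss(weights, rows, targets),
          "regularized:", round(regularized_loss(weights, rows, targets, alpha=0.1), 4))
```

Both weight vectors have zero data loss, but the penalty term makes the smaller weights the preferred solution.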

Ridge regression

Ridge regression is a linear regression technique that adds a sum of squared weights to the loss function during training, with the goal of preventing overfitting by keeping the coefficients as small as possible without zeroing them out.

LASSO regression

In least absolute shrinkage and selection operator (LASSO) regression, the sum of the absolute values of the model weights is added to the loss function. This automatically performs feature selection by driving the weights of the least important features to zero.
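The difference between the two penalties is easiest to see with a single weight, where both problems have closed-form solutions. The sketch below assumes a one-feature model y = w·x with no intercept and a sum-of-squared-errors loss. The function names and toy data are hypothetical.

```python
def ridge_weight(xs, ys, alpha):
    """Minimizer of sum((y - w*x)^2) + alpha * w^2."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + alpha)

def lasso_weight(xs, ys, alpha):
    """Minimizer of sum((y - w*x)^2) + alpha * |w| (soft thresholding)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    shrunk = max(abs(sxy) - alpha / 2, 0.0)
    return (shrunk if sxy >= 0 else -shrunk) / sxx

# A feature with only a weak relationship to the target.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [0.1, -0.1, 0.2, 0.0]

for alpha in (0.0, 0.5, 2.0):
    print(f"alpha={alpha}: ridge w={ridge_weight(xs, ys, alpha):.5f}, "
          f"lasso w={lasso_weight(xs, ys, alpha):.5f}")
```

As alpha grows, the ridge weight shrinks but stays nonzero, while the LASSO weight reaches exactly zero once the penalty outweighs the feature's contribution, which is the automatic feature selection described above.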

Elastic net regression

Elastic net regression adds a regularization term that combines the ridge and LASSO penalties. A hyperparameter γ controls the balance between ridge regression (γ = 1) and LASSO regression (γ = 0), and so determines how much automatic feature selection is done on the model.
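A sketch of such a combined penalty follows. The exact weighting used here, a convex mix of the two terms, is an assumption; the text only fixes the two endpoints, γ = 1 for ridge and γ = 0 for LASSO. The function name is hypothetical.

```python
def elastic_net_penalty(weights, alpha, gamma):
    """alpha * (gamma * sum(w^2) + (1 - gamma) * sum(|w|)), with 0 <= gamma <= 1."""
    if not 0.0 <= gamma <= 1.0:
        raise ValueError("gamma must be between 0 and 1")
    ridge_term = sum(w * w for w in weights)   # squared-weight (ridge) part
    lasso_term = sum(abs(w) for w in weights)  # absolute-value (LASSO) part
    return alpha * (gamma * ridge_term + (1.0 - gamma) * lasso_term)

weights = [0.5, -2.0, 0.0]
for gamma in (1.0, 0.5, 0.0):
    print(f"gamma={gamma}: penalty={elastic_net_penalty(weights, alpha=1.0, gamma=gamma)}")
```

Libraries do not all follow this convention. In scikit-learn's `ElasticNet`, for example, `l1_ratio=1` corresponds to a pure L1 (LASSO) penalty, the reverse of the γ used here, so check which direction the mixing parameter runs before copying values across.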

Early stopping

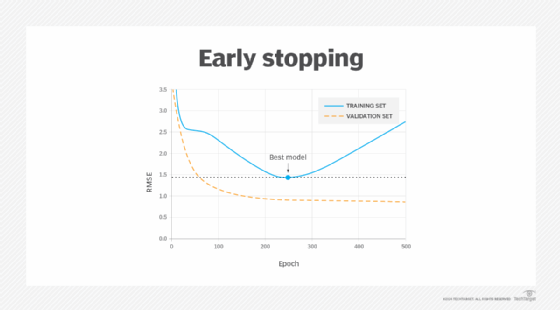

This method works with iterative learning algorithms such as gradient descent. As training proceeds and the model passes over the training data for more iterations, the prediction error on both the training and validation sets decreases. If training continues for too long, overfitting begins and the error rate on the validation set starts to increase. Early stopping is a form of regularization that stops training when the error rate on the validation data reaches its minimum or when a plateau is detected.
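The loop below is a minimal sketch of early stopping around plain gradient descent. The one-weight model, the contrived data (a training set whose slope is biased away from the validation set's), the `patience` parameter, and all function names are assumptions made for illustration.

```python
def mse(w, xs, ys):
    """Mean squared error of the one-weight model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradient(w, xs, ys):
    """Derivative of the MSE with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def train_with_early_stopping(train, val, lr=0.01, max_epochs=200, patience=3):
    w = 0.0
    best_w, best_val, best_epoch = w, mse(w, *val), 0
    for epoch in range(1, max_epochs + 1):
        w -= lr * gradient(w, *train)          # one gradient descent step
        val_err = mse(w, *val)
        if val_err < best_val:                 # validation error still improving
            best_w, best_val, best_epoch = w, val_err, epoch
        elif epoch - best_epoch >= patience:   # no improvement for `patience` epochs
            break
    return best_w, best_val, best_epoch, epoch

# Contrived data: the training set suggests slope 2.0, the validation set 1.5.
train = ([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
val = ([1.0, 2.0, 3.0, 4.0], [1.5, 3.0, 4.5, 6.0])

best_w, best_val, best_epoch, stopped_at = train_with_early_stopping(train, val)
print(f"best epoch={best_epoch}, stopped at epoch={stopped_at}, "
      f"best w={best_w:.3f}, best validation MSE={best_val:.4f}")
print(f"validation MSE if trained to convergence (w=2.0): {mse(2.0, *val):.4f}")
```

The weights returned are the ones from the best validation epoch, not the last one, so the few extra steps taken while waiting out `patience` are discarded.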

Dropout

Dropout is a regularization technique used in deep neural networks. Each neuron has a probability, known as the dropout rate, of being ignored or “dropped out” at each step of the training process. During training, each neuron adapts to the occasional absence of its neighbors and is forced to rely more on its own inputs. This reduces the network's sensitivity to small input variations, making it more robust and minimizing the risk that it misinterprets noise as meaningful data. Adjusting the dropout rate lets you address overfitting by increasing the rate, or address underfitting by decreasing it.
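A dropout layer can be sketched as a random mask over a list of activations. The version below uses "inverted" dropout, which rescales the surviving activations during training so their expected value is unchanged. That rescaling is an assumption not stated in the text, and the function name is hypothetical.

```python
import random

def dropout(activations, rate, rng, training=True):
    """Zero each activation with probability `rate` and rescale the survivors."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(activations)       # dropout is disabled at prediction time
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)
layer_output = [0.2, 1.5, 0.7, 0.9, 1.1, 0.4]

print("training:  ", dropout(layer_output, rate=0.5, rng=rng))
print("prediction:", dropout(layer_output, rate=0.5, rng=rng, training=False))
```

A different random mask is drawn on every call, so no neuron can count on any particular neighbor being present.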

Ensemble methods

Ensemble methods aggregate predictions from multiple models toward the end of a machine learning project. This reduces both bias and variance, resulting in more comprehensive predictions. An example of an ensemble method is random forest, which builds multiple decision trees during training. Each tree is trained on a random subset of data and features. During prediction, random forests aggregate the predictions of individual trees to produce a final prediction, often achieving high accuracy and robustness to overfitting.
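The sketch below shows the aggregation idea with bagging: many weak models, each trained on a random resample of the data, vote on the final prediction. It is a simplified stand-in, not a random forest. It uses one-threshold "stumps" instead of full decision trees and has no feature subsampling because the toy data has a single feature. All names and data are hypothetical.

```python
import random

def fit_stump(points):
    """Pick the threshold t with the fewest training errors for 'predict 1 if x > t'."""
    best_t, best_errors = None, None
    for t, _ in points:
        errors = sum((x > t) != bool(label) for x, label in points)
        if best_errors is None or errors < best_errors:
            best_t, best_errors = t, errors
    return best_t

def fit_bagged_stumps(points, n_models, rng):
    """Train each stump on a bootstrap sample (drawn with replacement)."""
    return [fit_stump([rng.choice(points) for _ in points]) for _ in range(n_models)]

def predict_majority(thresholds, x):
    """Aggregate the individual stump predictions by majority vote."""
    votes = sum(x > t for t in thresholds)
    return int(votes * 2 > len(thresholds))

# Synthetic data: the true label is 1 when x > 5, with 10% of labels flipped.
rng = random.Random(1)
points = []
for _ in range(100):
    x = rng.uniform(0, 10)
    label = int(x > 5)
    if rng.random() < 0.1:
        label = 1 - label
    points.append((x, label))

thresholds = fit_bagged_stumps(points, n_models=25, rng=rng)
test_xs = [i / 10 for i in range(101)]
accuracy = sum(predict_majority(thresholds, x) == int(x > 5) for x in test_xs) / len(test_xs)
print(f"ensemble accuracy on clean test points: {accuracy:.2f}")
```

Using an odd number of models avoids tied votes in this two-class setting.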

Improve your data

Because data is as important as models, organizations can do the following to improve their data:

Training with more data

Large training data sets provide a more comprehensive representation of the underlying problem and allow models to learn true patterns and dependencies rather than memorizing specific instances.

Data augmentation

Data augmentation reduces overfitting by copying a training data instance and modifying it slightly, so that the change is learnable by the model but not noticeable to a human. The model gets more opportunities to learn the desired patterns while becoming more tolerant of different environments. Data augmentation is particularly useful for balancing datasets, because it adds more examples of underrepresented data, improving the model's ability to generalize across diverse scenarios and avoid bias in the training data.
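As a minimal sketch of this, the code below augments tiny grayscale "images" stored as nested lists with pixel values between 0 and 1. The two transformations (a horizontal flip and small random pixel noise), the data layout, and the function names are assumptions for illustration. Which transformations preserve the label depends on the task; a flip would not be safe for recognizing text, for example.

```python
import random

def horizontal_flip(image):
    """Mirror each row of the image."""
    return [row[::-1] for row in image]

def add_noise(image, scale, rng):
    """Shift every pixel by at most `scale`, clipping the result to [0, 1]."""
    return [[min(1.0, max(0.0, p + rng.uniform(-scale, scale))) for p in row]
            for row in image]

def augment(dataset, rng, noise_scale=0.02):
    """Return the original examples plus a flipped and a noisy copy of each."""
    augmented = []
    for image, label in dataset:
        augmented.append((image, label))
        augmented.append((horizontal_flip(image), label))
        augmented.append((add_noise(image, noise_scale, rng), label))
    return augmented

rng = random.Random(0)
dataset = [([[0.0, 0.5], [1.0, 0.25]], "cat")]

bigger = augment(dataset, rng)
print(len(dataset), "->", len(bigger), "examples")
for image, label in bigger:
    print(label, image)
```

Each copy keeps the original label, so the model sees the same concept under slightly different conditions.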