Claim: A video shows a PTI supporter saying he would give up his wife and daughters for Imran Khan.

Fact: This viral clip was generated by AI and does not depict a real event.

On January 21, a Facebook user shared a video with the following caption:

یوتھیاں بیٹیاں تو کیا میں اپنی بیوی بھی دیدوگا بس مرشد خوش ہونا چاہئے 🖐️😁😂🖐️

#ٹٹ شعور کی یہ وہ آخری حد ہے جس کے بعد شعور بندے سے خود پوچھتا ہے بتا “”

[Translation: Anchor: If Imran Khan were to ask you for both your daughters, would you give them to him?

Youthiya supporter: Not just my daughters, I would even give my wife, as long as my leader is happy. 🖐️😁😂🖐️

Note: This is the final limit of consciousness, after which consciousness itself asks a person: Tell me, shameless one, what exactly is your consent—and how shameless are you willing to become?]

Fact or fiction?

Soch Fact Check found several visual discrepancies that cast doubt on the authenticity of this viral clip, suggesting it was likely generated using an AI text-to-video model. For example, the Urdu script appearing on reporters’ microphones and children’s clothing appears to be gibberish. AI-generated content frequently struggles to render text correctly, especially on clothing, badges, and signs.

The logo that appears on the microphone also appears on the caps of PTI supporters. Such repetition is common in AI-generated visuals, as generative models often duplicate salient visual elements across different objects as they try to maintain consistency within a scene.

Visual inconsistencies in viral clips: static crowds, gibberish text, repetitive logos

Importantly, while the flag is moving, the people in the background remain static and do not move. This is a notable indicator of AI-generated visuals, where background elements often lack natural or independent movement.

We also noted that the video in one of the viral posts contains the TikTok watermark “@eleventwelve212”. A search for this handle on TikTok led us to the account “meme news”. The account’s profile reads, “Here’s some humorous stuff for you to scroll through and enjoy.”

The viral clip was shared on this account on January 15. It had the caption “itny sary log kaha say gay” [Where did so many people come from] and carried the label “Contains AI-generated media”. This confirms that the post was created using AI and does not depict actual events. Importantly, this post was the only one to appear without the claim, and it was shared online a week before the remaining posts that were fact-checked.

We then checked other posts on the account and found several that resemble AI-generated videos. Many of these videos depict PTI protests, often showing reporters asking supporters inappropriate or misleading questions.

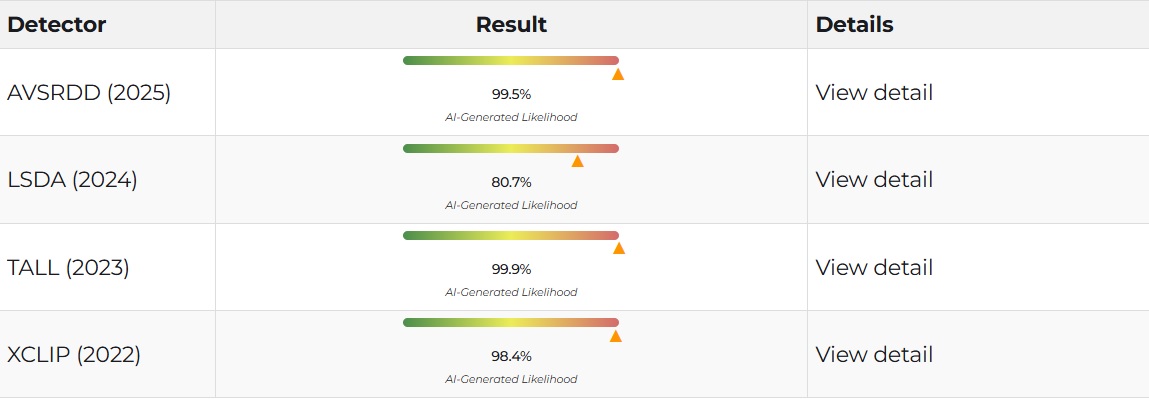

Soch Fact Check also tested the video on DeepFake-O-Meter, which analysed it using multiple AI-based detection models. The results are below.

DeepFake-O-Meter results

We first used the AVSRDD (2025) model. This is an audio-visual deepfake detection method based on audio-visual speech recognition (AVSR) that leverages audio correlation. The model uses dual-branch encoders for audio and video to support independent detection of each modality. It assessed the probability that the video is fake as 99.5%.

The video was then analyzed using LSDA (2024), a deepfake detection model designed to assess whether a video or image is fully or partially synthesized using AI. The model evaluates visual and temporal cues such as facial movement, lip synchronization, and texture consistency to estimate the likelihood of synthetic manipulation. It rated the probability that this clip is fake at 80.7%.

We also used the TALL (2023) model, which focuses on checking online videos. Online videos are often compressed or altered in ways that hide small details. TALL helps reveal manipulations that may not be obvious to the eye by testing whether the video remains consistent after details are removed. It estimated the probability that the video is fake at 99.9%.

Finally, we used the XCLIP (2022) model, which estimated the probability that the video is fake at 98.4%. This model uses cross-frame attention to analyze how frames relate to each other over time. This makes it possible to spot inconsistencies in facial movements, expressions, and temporal flow, which are common signs of deepfakes.

Virality

The viral clip was shared here, here, here, here, and here on Facebook. Archived here, here, here, here, and here.

It was shared on X here (archive).

It was shared on Instagram here (archive).

Conclusion: The viral clip in which a PTI supporter says he would give up his wife and daughters for Imran Khan was generated by AI.

–

Cover photo background image:

To appeal against this fact-check, please send an email to appeal@sochfactcheck.com