What was claimed

New footage shows recent Israeli attacks on Iran.

Our verdict

Three of these clips are not real and were likely created using artificial intelligence. One clip appears to be authentic footage of a strike in June this year.

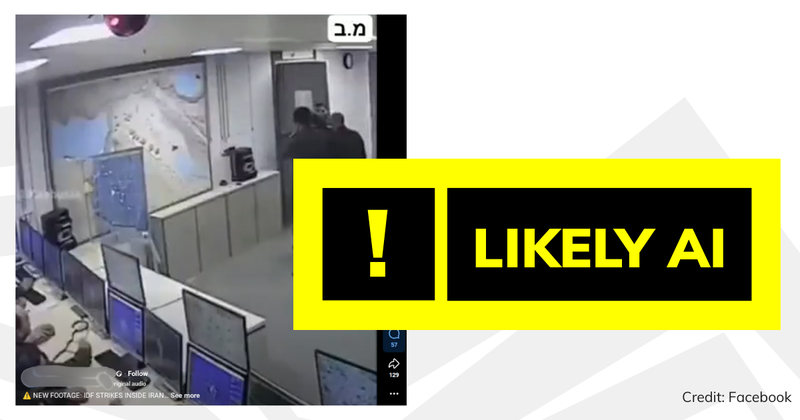

A viral video compilation purporting to show “new footage” of Israeli missiles attacking Iranian bases in June this year includes three clips that were likely created using artificial intelligence (AI).

The video has been shared thousands of times on X and Facebook and is made up of four separate clips, each showing an explosion.

Experts said three of these clips were likely created using AI, while one was probably genuine footage.

Many of the clips shared on social media are very grainy and blurry, making it difficult to spot signs of AI. However, the earliest version Full Fact could find, posted to Instagram on December 1, is at a much higher resolution, which makes it easier to see the mistakes and glitches common in AI-generated videos.

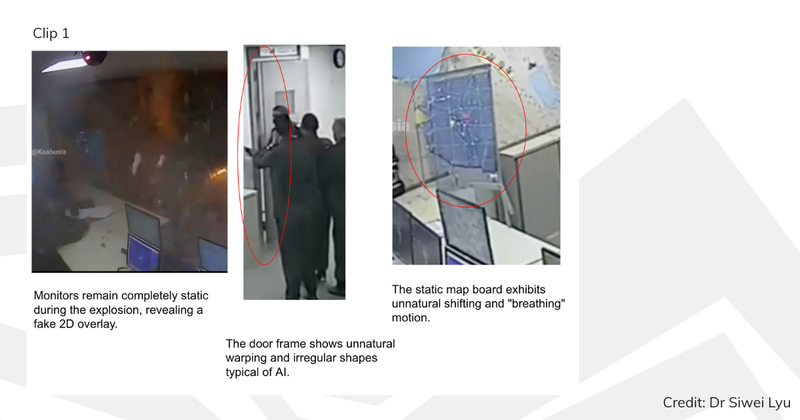

The first clip shows several monitors on the left side of the screen and a group of people gathered near a door on the right side. A large explosion occurs within seconds.

We consulted two experts: Dr. Siwei Lyu, a digital media forensics expert at the State University of New York at Buffalo, and Professor Hany Farid, a specialist in digital forensics, misinformation and image analysis at the University of California, Berkeley, who is also chief scientific officer at GetReal Security, a cybersecurity company focused on preventing malicious threats enabled by generative AI. Both said they found evidence of AI generation in the clip.

Dr. Lyu pointed to the distorted, irregular shape of the door frame and the unnatural shifting, “breathing” motion of the map board, which should be static, as clues that the clip was created using AI. He also noted that the monitor remained completely still during the explosion and appeared to show a fake 2D overlay.

Professor Farid also pointed to physical inconsistencies in the perspective geometry of the room’s interior.

In the second clip, a man in military uniform stands in the centre of the room, with what appears to be an Iranian flag to his left. Again, an explosion occurs within seconds.

Professor Farid said that, based on visual inspection, he also believed this clip was generated by AI. Dr. Lyu agreed. He noted that the digital clock “displays fluctuating and inconsistent symbols” instead of “stable, legitimate time data,” that the standing man “displays unnatural body contortions and sudden hand movements just before the explosion,” and that the radar scan line is “distorted and curved, violating the linear geometry of the actual display.”

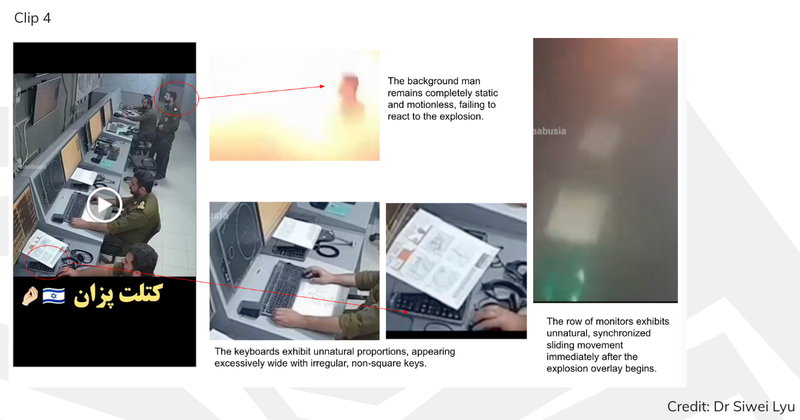

Both experts said there was also evidence of AI generation in the fourth clip, which shows men looking at a monitor before another explosion. Professor Farid again pointed to the perspective geometry, while Dr. Lyu noted that the keyboard had “unnatural proportions” and appeared “overly wide.” One of the men also fails to react to the explosion and remains completely still, and a distorted pair of headphones on the desk appears to have only one earpiece.

The third clip is probably real

However, both experts agreed that the third clip was probably real. It shows a man in uniform running across the room before the explosion. Professor Farid said: “We find no evidence of AI generation in the third clip,” while Dr. Lyu said the third clip “does not exhibit the typical unnatural motion characteristics of AI-generated footage.”

This clip was reportedly broadcast by Iranian state television, along with other CCTV footage of the Israeli attack in June this year. Unlike the clips that appear to have been generated by AI, it shows the surveillance camera feed going black after the explosion.

During the Israel-Iran conflict in June, we saw more than a dozen AI-generated or miscaptioned videos and images circulating widely on social media.

Misleading information can spread quickly during breaking news, especially during periods of crisis or conflict.

Before sharing content you see online, it’s important to consider whether it comes from a reliable and verifiable source.

If you’re trying to work out whether a video clip is AI-generated, it’s worth bearing in mind that some social media posts share a much grainier and blurrier version of the footage than the original, which makes the signs of AI harder to spot. So it’s always worth running keyframes from the footage through reverse image search tools such as TinEye or Google Lens to look for sharper versions.

We’ve created a toolkit of practical tips on how to identify misleading information. We have also published a detailed guide on how to spot misleading videos online and how to tell whether content has been generated by AI.