Arizona State University has unveiled a platform called Atomic that creates AI-generated modules based on lectures from ASU faculty by cutting long videos into very short clips and generating text and sections based on those clips.

The teachers and researchers I spoke to, whose lectures are published in Atomic, were upset that their lectures were being used in this way (sometimes as very short clips taken out of context), and some said they felt blindsided or angered by the publication. Most said they were not informed by the school and learned about it through word of mouth. Tests that I and others ran on Atomic turned up academically weak and even inaccurate content. Not only did ASU fail to tell its academic community that their lectures would be segmented and cannibalized by the AI platform, the resulting modules are also poor.

Do you know anything else about ASU Atomic in particular, or how AI is being implemented at your school? We’d love to hear from you. Send messages securely with Signal (sam.404) using your non-work device. Otherwise, please email sam@404media.co.

AI in schools has been a highly controversial experiment, with examples including the “private school using AI” Alpha School and AI agents that offer students a student life in which they don’t have to learn. In this case, the AI tool in question was created directly by the university using the labor of its faculty, without consulting them.

The Atomic FAQ page states, “We are testing an early version of ASU Atomic to learn what works and what doesn’t to further improve the learner experience before the full release.” It adds: “Once you start your subscription, you can generate an unlimited number of learning modules that are customized to fit your learning goals and schedule.”

The FAQ states that ASU alumni and those who have “previously expressed an interest in an ASU learning initiative or participated in research that contributed to the formation of ASU Atomic” were invited to test the beta. But on Monday morning, I used my personal email address to sign up for a 12-day free trial of the Atomic platform. Affiliation with ASU was not required. I first heard about this platform after seeing a post on Bluesky by ASU American literature professor Chris Hanlon.

“When I saw it, I was really surprised to see that the modular material produced by Atomic included my face and the faces of people I knew and people I didn’t know,” Hanlon said. The clip of him was a one-minute snippet from a 12-minute video he made as part of a lecture in which he mentioned literary critic Cleanth Brooks, whose name the AI transcribed as “client” Brooks. “I don’t think anyone could understand what was in that video without more context,” Hanlon said. He said he reached out to colleagues whose lecture videos were also included in that module and they were all equally shocked and alarmed. “I mean, it happens to all of us all of the time in some form or another, but let your institution do it. Let the university you work for use your images, lectures, materials without your permission and chop them up in a way that may not reflect the kind of teacher you really are…let alone give it to real students in the real world.”

The video appears to have been scraped from Canvas, ASU’s learning management system, where lecture materials and class discussions are provided to students. Canvas is owned by Instructure and is one of the most popular learning management systems in the country, used by many universities. “ASU Atomic currently draws from ASU Online’s complete library of course content across subjects including business, finance, technology, leadership, history, and more. Once ASU teaches, our AI learning partner Atom can build hyper-personalized learning modules around it,” the Atomic FAQ page states.

By Monday afternoon, after I reached out to ASU Atomic’s email address for comment, sign-ups for Atomic were closed. However, people with existing logins can still create new modules.

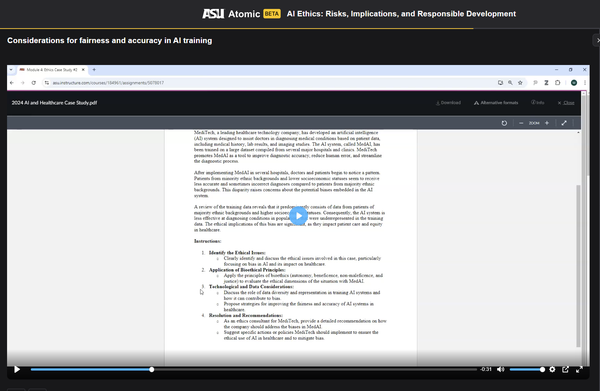

In my own testing, a chatbot ran me through a series of prompts to determine what my custom module would look like. I told it that I was interested in learning about the ethics of artificial intelligence at the beginner-intermediate level, with the goal of learning as quickly as possible.

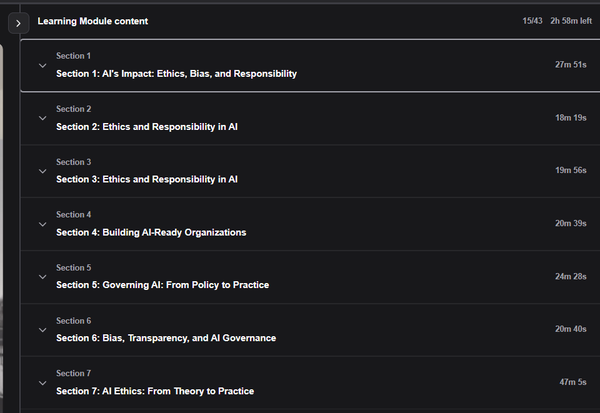

Atomic produced a seven-section learning module, including sections with nearly interchangeable titles (“Ethics and Responsibility in AI” and “AI Ethics: From Theory to Practice”). The first clip in the first section is a two-minute video excerpt from a lecture by Euvin Naidoo, Distinguished Professor of Accounting, Risk, and Agility Practice at the Thunderbird School of Management. In it, Naidoo talks about “X-riskers,” whom he defines as “a community that believes that the advancement, movement, and acceleration of AI should be alarmed.” Atomic’s AI transcribes this as “X-Riscus” and carries that error throughout the module, referencing “X-Riscus” multiple times in the section and final quiz.

The next section jumps directly into the middle of a lecture where the professor is talking about his research on AI in healthcare, with no context explaining why the clip is there.

In a later section, Sarah Florini, professor of film studies and associate director of the ASU Lincoln Center for Applied Ethics, appears in a one-minute clip to briefly define artificial intelligence and machine learning. But the clip comes from a completely unrelated class and is taken out of context, so it is irrelevant to the module.

“This is a video from one of the courses in the online Film and Media Studies master’s degree program. That class is FMS 598 Digital Media Studies. This is not a course about AI at all,” Florini told me. “This is an introduction to the key concepts used to study digital media in the field of media studies.” She recorded the video in 2020, before generative AI was widely used. “Those slides and those remarks were just to get students to think about AI as a subcategory of machine learning before I started talking about machine learning in depth. There is simply no way I could talk about AI today or in a class focused on machine learning and AI technology,” she said. “This is a great example of how problematic it is to take pieces of people who teach this way and take them out of context.”

Florini said she didn’t know about the Atomic platform until Friday. “I was not notified in any way, nor, to my knowledge, were any faculty members notified, and there was no option to participate or not participate in this project,” she said.

Another ASU scholar I contacted, whose lecture was included in a module that Atomic produced for me (and who requested anonymity to discuss this topic), said they learned of Atomic’s existence only through my email. They searched their inbox for any mention of it from the administration or anyone else, in case they had missed an announcement, but found nothing. The excerpts of their lectures presented by Atomic were very short and attempted to elucidate a very complex topic.

“I don’t like the idea of my lectures being presented as if they were just saying something, taken out of the context of the whole course and the context of the readings for that module,” they told me. “I feel like someone who doesn’t know much about me. They’re naive about these positions and think because an ‘expert’ said it, it must be true…or they’ll think that’s patently ridiculous and this ‘expert’ must be stupid. But I might have been showing a foil!” The clips are so short that in some cases you can’t discern the context at all.

The instructor told me that their concern about their work being chopped up and used in this way was less about ownership of the material and more that someone might leave these modules with a half-formed or incorrect conclusion about the topic at hand. “All the complexity of this topic is flattened out as if it were really simple,” they said of an excerpt Atomic produced from the talk. When students are assigned this topic, they receive dozens of pages of peer-reviewed academic papers. Atomic offers none of that. The module Atomic generated in my tests did not provide any source links, any outside information for further research, or any specific citations showing where its information came from. It did not even identify who was featured in the videos, unless a Zoom name label or other name card happened to be displayed within the video.

“I really want to know, how did this particular thing happen? How did this actually end up on the asu.edu website?” Hanlon said. “It’s very awkward. It’s a far cry from what I think of as a typical educational experience at ASU. Who decided that this represents us?”

ASU Atomic, the ASU President’s Office, and ASU’s media relations office did not immediately respond to my requests for comment, but I will update this story if I hear back.