AI hardware didn’t start as AI hardware. The story of how GPUs became AI processors spans three decades, multiple architectural revolutions, and a cast of companies that include Nvidia, AMD, Intel, Apple, and Huawei. It starts with fixed-function graphics pipelines and ends—for now—with dedicated NPUs on every major consumer chip platform. Understanding that progression matters because the same architectural decisions that shaped AI hardware in 2017 are shaping the next generation of inference silicon today. This is not just computing history; it’s the foundation of the current AI industry.

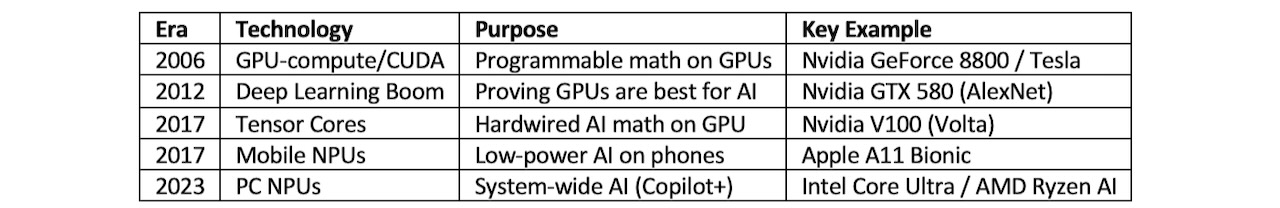

The GPU’s transformation from a graphics-only engine into the primary AI processing platform happened through three distinct technological shifts: the general-purpose GPU era, the introduction of dedicated AI hardware in the form of Tensor Cores, and the recent integration of NPU-like functionality alongside GPU and CPU compute on the same die. Each transition involved specific architectural decisions, specific product launches, and specific competitive dynamics that determined who led and who followed.

The GPGPU Era (2006–2012)

GPUs effectively became AI processors in 2006 with the launch of the Nvidia Tesla architecture and the CUDA platform. Before CUDA, developers who wanted to use GPU parallel compute for non-graphics workloads had to express their problems as graphics operations using OpenGL—a cumbersome mismatch that limited GPU adoption outside rendering. CUDA changed that by allowing researchers and engineers to write C-based code that ran directly on GPU hardware, exposing the underlying parallel compute infrastructure without requiring a graphics API as the intermediary.

The first GPU to support CUDA was the Nvidia GeForce 8800 GTX, launched in November 2006 on the G80 architecture. The G80 contained 128 shader processors unified into a single programmable array, replacing the separate vertex and pixel shader pipelines of earlier architectures. That unification was the hardware precondition for GPU compute (aka, GPGPU): a single pool of programmable processors that could execute arbitrary compute workloads rather than shader-specific graphics operations.
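The programming model that CUDA exposed on this unified array can be sketched in plain Python: one small function (a kernel) runs once per thread, and each thread uses its block and thread indices to pick the element it owns. This is an emulation for illustration only. The names `saxpy_kernel` and `launch` are hypothetical, and the loops run sequentially where a GPU would run the threads in parallel.

```python
def saxpy_kernel(block_idx, block_dim, thread_idx, a, x, y, out):
    """One logical GPU thread: computes a single element of out = a*x + y."""
    i = block_idx * block_dim + thread_idx  # global index, as in CUDA
    if i < len(x):                          # guard: the last block may overhang
        out[i] = a * x[i] + y[i]


def launch(kernel, n, block_dim, *args):
    """Sequential stand-in for a kernel launch over enough blocks to cover n."""
    grid_dim = (n + block_dim - 1) // block_dim  # ceiling division
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [10.0, 20.0, 30.0, 40.0, 50.0]
out = [0.0] * len(x)
launch(saxpy_kernel, len(x), 4, 2.0, x, y, out)
print(out)  # [12.0, 24.0, 36.0, 48.0, 60.0]
```

Because no thread depends on another thread's result, the same kernel scales from 5 elements to millions simply by launching more blocks, which is the property that made a single pool of programmable processors useful beyond graphics.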

Nvidia simultaneously introduced the Tesla line as a compute-only product stripped of display outputs and optimized for data center deployment. Tesla C870, launched in 2007, provided researchers with a GPU they could install in workstations and servers without a monitor, designed entirely for numerical computation. The Tesla line established the pattern that data center AI GPU products follow today: a chip with the same compute architecture as the consumer graphics product but configured for throughput and reliability rather than display output.

The broader AI community took time to recognize what CUDA had made possible. Between 2006 and 2012, a small but growing set of researchers used CUDA to accelerate neural network training, finding that the parallel structure of GPUs mapped well onto the matrix operations at the core of deep learning. But it was a 2012 competition that forced the entire machine learning field to pay attention.

Figure 1. The year of the cat.

The ImageNet Large Scale Visual Recognition Challenge in 2012 attracted teams from academia and industry competing to classify images from a dataset of over 1 million photographs. The winning entry, AlexNet, came from researchers at the University of Toronto who trained a deep convolutional neural network using two Nvidia GTX 580 GPUs. AlexNet’s top-5 error rate of 15.3% beat the second-place entry by more than 10 percentage points, a gap so large it could not be attributed to incremental tuning. The architecture itself, enabled by GPU training, had changed what was achievable. AlexNet demonstrated that the parallel nature of GPUs fit the computational structure of deep learning better than any other hardware platform then available.

The Intel Xeon Phi question

Before examining Tensor Cores, the role of Intel’s Xeon Phi in the AI processor timeline deserves consideration, because it represents both an important architectural experiment and a cautionary lesson about the difference between repositioning existing hardware and designing hardware for a new purpose.

Xeon Phi emerged from the ashes of Larrabee, Intel’s canceled consumer GPU program. Larrabee was developed in the late 2000s as a many-core x86 processor intended to run graphics workloads through software-defined pipelines rather than fixed-function graphics hardware. Intel canceled Larrabee as a consumer product before launch, but the underlying many-core x86 architecture became the foundation of the Xeon Phi coprocessor line, first shipping as Knights Corner in 2012.

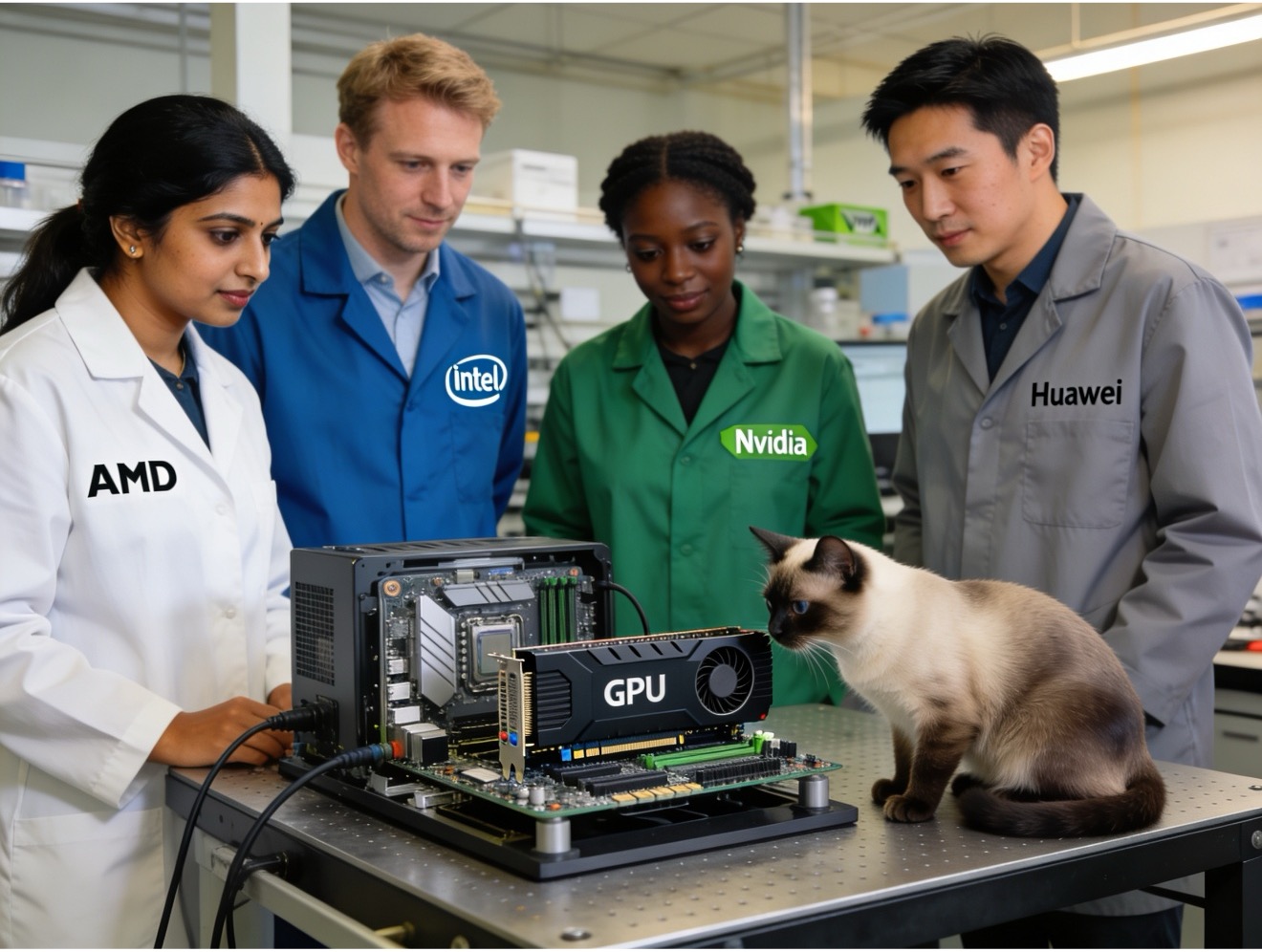

Table 1. Intel’s early AI entrance

Knights Corner packed up to 61 x86 cores on a single die connected via a ring bus, with each core running four hardware threads and executing 512-bit vector instructions through its Vector Processing Units (VPUs). The architecture targeted floating-point throughput in high-performance computing—weather simulation, molecular dynamics, finite element analysis—rather than the matrix operations that define AI training.

Knights Landing, released in 2016, added the AVX-512 instruction set and, uniquely, could boot as a stand-alone CPU rather than requiring a host processor, giving it flexibility that earlier Xeon Phi products lacked. But AVX-512 on a CPU architecture, while powerful for vectorized computation, is architecturally different from the systolic array or tensor-oriented compute that dedicated AI hardware uses.

Knights Mill, released in 2017, represented Intel’s most explicit pivot of Xeon Phi toward AI. It added support for variable precision floating-point operations through the QFMA (Quad Fused Multiply-Add) instruction, delivering up to 4× better deep learning throughput than Knights Landing for reduced-precision workloads. Intel marketed Knights Mill directly at the deep learning training market, positioning it against Nvidia’s Tesla P100 and AMD’s emerging Instinct line.

Whether Xeon Phi qualifies as an AI processor depends on how strictly one defines the term. As a deep learning competitor, Knights Mill earned the classification: Intel sold it explicitly for AI training, and it delivered meaningful throughput for that use case. As a native AI architecture, it does not qualify: Xeon Phi was an x86 many-core processor that added lower-precision support as an extension of its existing vector compute model. It was not designed from the ground up for matrix acceleration, the way Google’s TPU and Nvidia’s later Tensor Core architecture were.

The deeper problem with Xeon Phi was timing and ecosystem. By 2017, Nvidia had established CUDA as the de facto programming model for AI research. Every major deep learning framework—TensorFlow, PyTorch, MXNet—targeted CUDA first and other platforms as afterthoughts. Xeon Phi required developers to port their code to Intel’s MKL-DNN library, a friction cost that research teams operating under time pressure had little incentive to absorb. Xeon Phi exited the market in 2020 without ever displacing Nvidia in the AI training workload it had been repositioned to capture.

The broader lesson from Xeon Phi is that technical capability alone does not determine market position in AI hardware. Ecosystem, software compatibility, developer familiarity, and the compounding advantage of being the platform where training frameworks and model architectures are first validated all matter as much as FLOPS per watt.

Dedicated AI hardware: Tensor Cores (2017)

The second major transition in GPU AI capability came in 2017 with the Nvidia Volta architecture and the Tesla V100. Volta introduced Tensor Cores—specialized hardware blocks designed specifically to accelerate the matrix multiply-accumulate operations that define neural network computation.

Figure 2. Nvidia’s Volta.

Before Tensor Cores, GPU AI throughput came entirely from CUDA cores performing standard floating-point operations. CUDA cores handle general scalar and vector math efficiently, and their massive parallelism makes them useful for AI, but they are not optimized for the specific pattern of matrix math that deep learning uses. A Tensor Core performs a 4×4 FP16 matrix multiply and accumulate (MAC) in a single operation, producing an FP32 result. This is not a general scalar operation executed many times in parallel—it is a single hardware operation that computes the equivalent of 64 floating-point multiply-add operations in one cycle.
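The operation described above can be emulated in a few lines of Python to make the numerics concrete. This is a sketch of the arithmetic only, not of the hardware: the function names are hypothetical, the loops run step by step where a Tensor Core issues the whole tile as one operation, and rounding through the `struct` module's half- and single-precision formats is an approximation of what the silicon does.

```python
import struct


def to_fp16(v):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", v))[0]


def to_fp32(v):
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", v))[0]


def mma_4x4(a, b, c):
    """D = A*B + C with FP16 inputs and FP32 accumulation; returns (D, fma_count)."""
    n = 4
    fma_count = 0
    d = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            acc = to_fp32(c[i][j])
            for k in range(n):
                # FP16 operands; the running sum is kept at FP32 precision
                acc = to_fp32(acc + to_fp16(a[i][k]) * to_fp16(b[k][j]))
                fma_count += 1
            d[i][j] = acc
    return d, fma_count


identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
b = [[float(4 * i + j + 1) for j in range(4)] for i in range(4)]
c = [[0.5] * 4 for _ in range(4)]

d, fma_count = mma_4x4(identity, b, c)
print(d[0])       # [1.5, 2.5, 3.5, 4.5]
print(fma_count)  # 64
```

The count of 64 falls out of the tile shape: 16 output elements, each needing 4 multiply-adds.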

The V100 contained 640 Tensor Cores, and Nvidia claimed training throughput of 125 TFLOPS in FP16 Tensor Core math versus 15.7 TFLOPS for FP32 general-purpose compute. The gap between those two figures captures precisely what Tensor Cores added: The same chip that delivers 15.7 TFLOPS of general compute delivers roughly 8× more throughput for the specific matrix operation that AI training requires. The hardware was not just faster; it was architecturally shaped for the workload.
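The headline figure can be roughly reconstructed from the numbers already given. The sketch below multiplies the Tensor Core count by the 64 multiply-adds each performs per clock; the boost clock is an assumption that does not appear in the text (1.53 GHz is the commonly quoted figure for the SXM2 version of the V100), so treat the result as a plausibility check rather than a specification.

```python
TENSOR_CORES = 640            # from the text
FMAS_PER_CORE_PER_CLOCK = 64  # one 4x4x4 multiply-accumulate, from the text
FLOPS_PER_FMA = 2             # one multiply plus one add
BOOST_CLOCK_HZ = 1.53e9       # ASSUMPTION: V100 SXM2 boost clock, not in the text

peak_tensor_tflops = (
    TENSOR_CORES * FMAS_PER_CORE_PER_CLOCK * FLOPS_PER_FMA * BOOST_CLOCK_HZ / 1e12
)
ratio = 125 / 15.7  # Tensor Core figure vs. the general-purpose figure quoted above

print(f"{peak_tensor_tflops:.1f} TFLOPS")  # 125.3 TFLOPS
print(f"{ratio:.1f}x")                     # 8.0x
```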

Volta shipped initially in the Tesla V100 for data center use. The Titan V, released in December 2017, brought Volta Tensor Cores to the consumer market for the first time, though at $3,000, it targeted researchers and professionals rather than mainstream buyers. Consumer Tensor Cores reached the gaming market with the Turing architecture in September 2018, branded as RTX.

Turing used second-generation Tensor Cores that added INT8 and INT4 precision support alongside the FP16 of the first generation. Lower-precision arithmetic matters for inference: Once a model is trained, running it for prediction does not require the numerical precision of training, and INT8 or INT4 operations consume less memory bandwidth and less power than FP16 or FP32. Turing’s Tensor Cores enabled the RTX line to accelerate both AI training—at reduced throughput compared to data center Volta—and AI inference at practical efficiency levels.
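Why INT8 is usually good enough for inference can be seen in a small quantization sketch. The scheme below, symmetric per-tensor quantization with a single scale factor, is one common approach chosen here for illustration; it is not a description of what any particular framework or Nvidia library does, and the weight values are made up.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats onto integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the INT8 codes."""
    return [qi * scale for qi in q]


weights = [0.42, -1.27, 0.05, 0.9, -0.33]  # illustrative values only
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

print(q)                      # [42, -127, 5, 90, -33]
print(max_err <= scale / 2 + 1e-12)  # True: error is bounded by half a step
```

Each weight now occupies one byte instead of two (FP16) or four (FP32), which is where the memory bandwidth and power savings come from.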

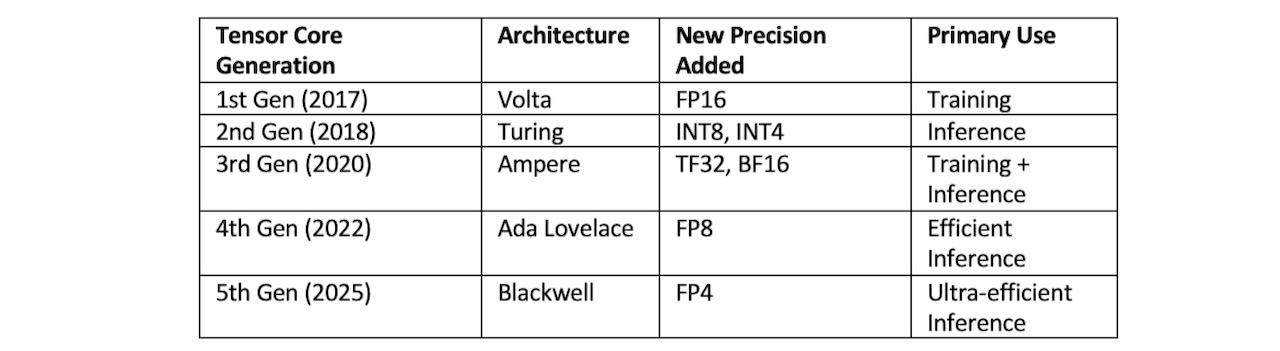

Third-generation Tensor Cores arrived with Ampere in 2020, adding TF32 and BF16 precision and doubling throughput for each precision level compared to Turing. Fourth-generation Tensor Cores in Ada Lovelace (2022) added FP8 support and further throughput increases. The fifth-generation Tensor Cores in Blackwell (2025) added FP4 support and introduced a new AI Management Processor for scheduling mixed AI and graphics workloads.
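The appeal of BF16 is easiest to see in its bit layout: it keeps FP32's sign bit and 8 exponent bits and only shortens the mantissa to 7 bits, so it trades precision for range. The sketch below converts by simple truncation of the FP32 encoding, which is an illustrative simplification (hardware typically rounds), and the helper names are hypothetical.

```python
import struct


def fp32_bits(v):
    """Return the 32-bit integer encoding of v as an IEEE 754 single."""
    return struct.unpack("<I", struct.pack("<f", v))[0]


def to_bf16(v):
    """Keep the top 16 bits of the FP32 encoding (sign, 8 exponent, 7 mantissa)."""
    bits = fp32_bits(v) & 0xFFFF0000  # truncation; real hardware usually rounds
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def fits_fp16(v):
    """True if v is within FP16's finite range (struct raises on overflow)."""
    try:
        struct.pack("<e", v)
        return True
    except OverflowError:
        return False


big = 3.0e38  # near the top of the FP32 range
print(fits_fp16(65504.0))  # True: the largest finite FP16 value
print(fits_fp16(big))      # False: FP16 overflows
print(abs(to_bf16(big) - big) / big < 2 ** -7)  # True: BF16 keeps it, coarsely
```

Large gradients and activations that would overflow FP16 survive in BF16, at the cost of fewer significant digits.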

The evolution of Tensor Core precision support across generations tells the story of what the AI industry needed at each moment:

Table 2. The evolution of Tensor Core.

The pattern is not coincidental. FP16 addressed the training bottleneck. INT8 addressed inference efficiency. BF16 became the training standard as model scale increased. FP8 and FP4 address the memory and bandwidth constraints that appear when running very large models at inference time. Each generation of Tensor Core precision reflects what the most demanding AI workload of that moment required.

AMD’s path to dedicated AI GPU compute

AMD’s entry into dedicated AI GPU compute followed a different trajectory from Nvidia’s, in part because AMD’s GPU business operated under different constraints—competitive pressure from Nvidia in gaming, market position against both Nvidia and Intel in data center compute, and resource allocation challenges that come with being a smaller company competing across multiple product categories simultaneously.

AMD introduced the Radeon Instinct line in December 2016 with the MI6, MI8, and MI25 GPUs. These were rebranded versions of existing graphics architectures—Polaris for the MI6, Fiji for the MI8, and Vega for the MI25—positioned for AI inference workloads in data center environments. The MI25 delivered 24.6 TFLOPS of FP16 throughput, competitive with the contemporary Nvidia Tesla P100. But these were graphics architectures with their rendering hardware still intact, repackaged for compute—the same architectural approach Nvidia had used with Tesla products before Tensor Cores.

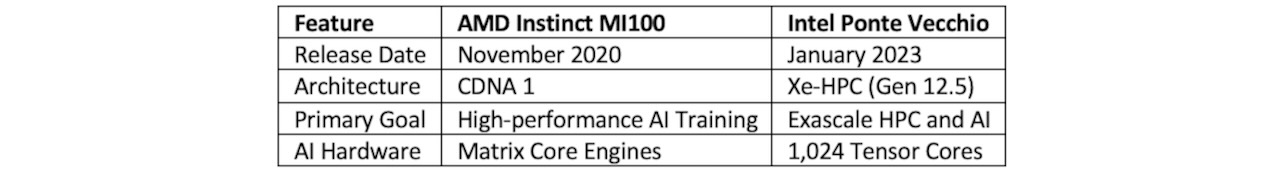

The more significant AMD transition came in November 2020 with the Instinct MI100, built on the first CDNA architecture. CDNA represented AMD’s commitment to splitting its GPU roadmap: RDNA for gaming graphics, CDNA for compute and AI. The MI100 removed all graphics rendering hardware—display engines, geometry processing units, rasterization pipelines—to maximize die area available for Matrix Core Engines, AMD’s equivalent of Tensor Cores. The MI100 delivered 184.6 TFLOPS of FP16 throughput, and it supported the FP64 precision needed for scientific computing alongside AI workloads.

Table 3. Comparison of AI GPU processors

CDNA 2, arriving with the MI200 series in late 2021, introduced multi-chip packages: The MI250X connected two CDNA 2 dies through AMD’s Infinity Fabric interconnect, creating a single accelerator package with 220 compute units and 128 GB of HBM2e memory, although software sees the two dies as separate GPU devices. This allowed AMD to deliver data center-class compute capacity without requiring a single massive monolithic die.

The MI300 series, launched in late 2023, introduced the most architecturally distinctive product in AMD’s AI GPU history: the MI300A, an APU that integrated CPU cores (Zen 4), GPU compute dies (CDNA 3), and HBM3 memory on a single package with unified memory access. No discrete boundary between CPU and GPU memory meant no PCIe transfer overhead for workloads that mix CPU and GPU computation—a meaningful advantage for workloads where the CPU handles orchestration and the GPU handles matrix math. The MI300X, oriented purely toward AI training and inference without the CPU component, shipped with 192 GB of HBM3—the largest memory capacity of any GPU at its launch and a direct response to the memory requirements of large language models.
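A back-of-the-envelope calculation shows why memory capacity matters so much for large language models. The sketch below counts only the bytes needed to hold the weights; the 70-billion-parameter model size is a hypothetical chosen for illustration, and real deployments also need room for activations, the KV cache, and any scale factors the format carries.

```python
BITS_PER_WEIGHT = {"FP32": 32, "FP16": 16, "BF16": 16, "FP8": 8, "FP4": 4}
HBM_CAPACITY_GB = 192  # MI300X capacity, from the text


def weight_footprint_gb(num_params, fmt):
    """Gigabytes (10^9 bytes) needed to hold the weights alone in the given format."""
    return num_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


num_params = 70e9  # HYPOTHETICAL model size chosen for illustration
for fmt in BITS_PER_WEIGHT:
    gb = weight_footprint_gb(num_params, fmt)
    fits = gb <= HBM_CAPACITY_GB
    print(f"{fmt:>4}: {gb:6.1f} GB  fits in {HBM_CAPACITY_GB} GB: {fits}")
```

Under these assumptions the FP32 weights (280 GB) do not fit on one device, while the FP16 weights (140 GB) do, which is the kind of threshold that capacity increases are meant to cross.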

AMD’s CDNA 4 generation, the MI350 series shipping in 2025, adds FP6 and FP4 precision support and continues the HBM capacity trajectory. The MI350 competes directly with Nvidia’s Blackwell products in the LLM training and inference market, which has become the primary revenue driver for the entire data center GPU segment.

Intel’s AI GPU path: Ponte Vecchio and Gaudi

Intel’s path to AI GPU capability involved two parallel tracks that reflected different strategic bets about how to win in data center AI: an internal GPU development program that produced the architecturally ambitious Ponte Vecchio, and an acquisition—Habana Labs in December 2019—that brought the Gaudi AI processor into Intel’s portfolio.

Habana Labs had been developing AI training and inference chips since 2016. Its Gaudi processor, launched before the Intel acquisition, used a distributed SRAM architecture and Ethernet-native scale-out rather than NVLink-style proprietary interconnects, positioning it as a more open and cost-effective alternative to Nvidia’s training stack. After the acquisition, Intel continued Gaudi development as its primary AI training accelerator, while internal teams worked on Ponte Vecchio for HPC and AI workloads.

Ponte Vecchio, formally the Intel Data Center GPU Max, represented Intel’s most ambitious GPU engineering effort. It used a chiplet design connecting five different types of compute tiles—Xe-HPC compute tiles, Xe-Link tiles for inter-chip connectivity, Rambo cache tiles, base tiles, and HBM stacks—assembled using Intel Foveros 3D packaging and EMIB interconnect technology. The full configuration contained over 100 billion transistors across 47 tiles. It shipped in January 2023 in the GPU Max 1100 and GPU Max 1550 configurations, targeting the Argonne National Laboratory Aurora supercomputer and similar HPC deployments.

Ponte Vecchio included 1,024 XMX matrix engines, Intel’s counterpart to Tensor Cores, across its compute tiles and supported FP64, FP32, FP16, BF16, and INT8 precision, covering both scientific computing requirements and AI training workloads. The Aurora supercomputer, one of the first exascale systems in the US, deployed Intel’s Xeon CPU Max processors and GPU Max accelerators. But Ponte Vecchio arrived in a market where Nvidia’s H100 had already established itself as the dominant AI training platform, and its HPC orientation left it less competitive for the pure AI training workloads that were driving data center GPU revenue growth.

Intel’s 2025–2026 AI GPU strategy centers on Jaguar Shores, the next major Xe architecture product targeting late 2026 or early 2027 availability. Jaguar Shores is expected to use HBM4 memory and represents Intel’s attempt to compete more directly with Nvidia Blackwell and AMD CDNA 4 in the LLM training and inference market rather than the HPC market for which Ponte Vecchio was optimized.

The NPU integration: Mobile first, then PC

The third architectural transition, integrating dedicated NPU blocks alongside GPU and CPU compute on the same die, began not in the data center but in mobile devices, driven by constraints that data center hardware never faces: battery life measured in hours rather than power draw measured in kilowatts, thermal envelopes of a few watts rather than hundreds of watts, and always-on workloads like face recognition and photography enhancement that would drain a battery in minutes if run on a GPU.

Apple introduced the Neural Engine with the A11 Bionic in September 2017, the same year Nvidia launched Volta Tensor Cores. Apple’s Neural Engine was a dedicated block of silicon designed specifically for the matrix operations used in neural network inference, physically separate from the GPU cores and CPU cores on the same die. The A11’s Neural Engine contained two cores capable of 600 billion operations per second, enabling Face ID facial recognition and real-time photography enhancement without the power cost of GPU compute.

The A11 Neural Engine ran these workloads at a fraction of the power of the GPU, which matters on a smartphone where battery life is a primary user experience metric. A GPU running inference for Face ID would drain a battery noticeably. The Neural Engine handled the same inference workload at power levels measured in milliwatts rather than watts.

Huawei introduced the Kirin 970 in the same year, featuring what Huawei called an NPU block co-developed with Cambricon Technologies. The Kirin 970 NPU targeted photography AI enhancement—the same category of on-device inference that Apple’s Neural Engine addressed. Both announcements in 2017 established a template that every subsequent mobile SoC followed: dedicated low-power inference compute alongside the GPU and CPU.

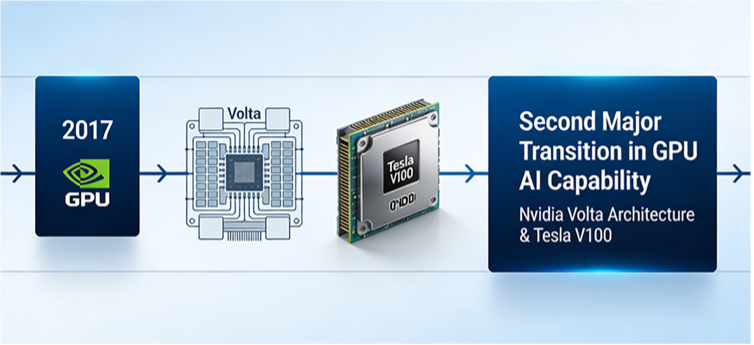

Table 4. Years of GPU-compute development.

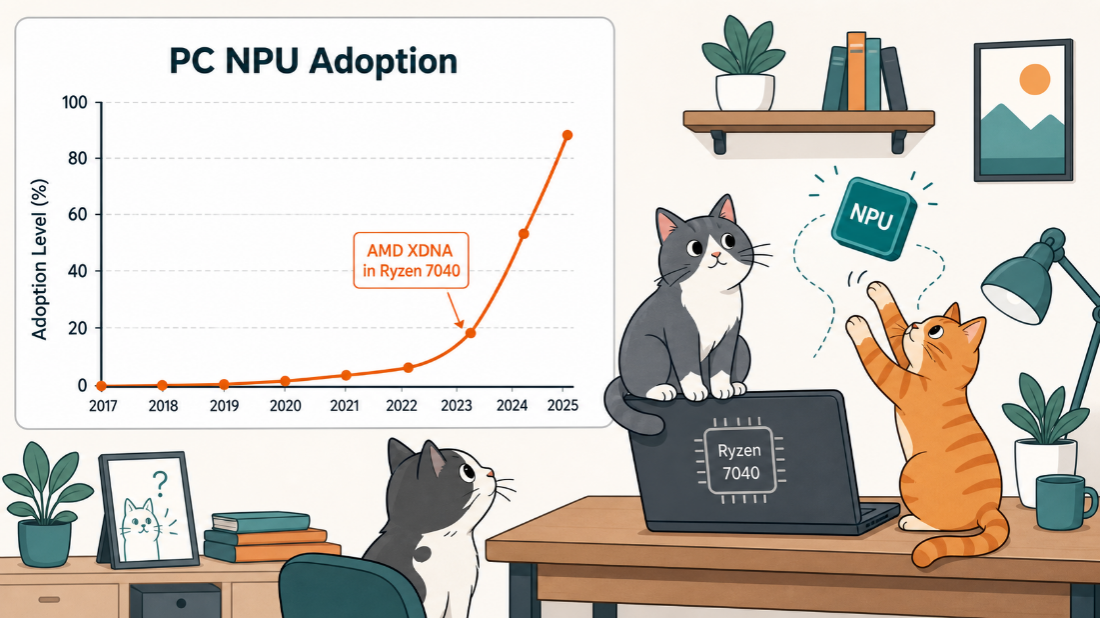

The PC market took six years after the introduction of mobile NPU blocks to standardize on dedicated NPU blocks in mainstream processors. AMD was first to market in the PC space with the XDNA NPU architecture, integrated into the Ryzen 7040 (Phoenix) series beginning in early 2023. AMD’s XDNA NPU uses a very long instruction word (VLIW) architecture with SIMD processing tiles, delivering 10–16 TOPS of inference throughput in a low-power envelope appropriate for laptop battery operation.

Intel integrated its first dedicated NPU into the Core Ultra (Meteor Lake) processor family in December 2023. Meteor Lake’s NPU 3 architecture delivered 11 TOPS, similar to AMD’s initial XDNA offering. Both predate the Microsoft Copilot+ PC specification, which requires a minimum of 40 TOPS from the NPU itself, a threshold that neither Meteor Lake nor the first XDNA-based Ryzen processors reached. Vendors also quoted higher combined platform TOPS figures that add CPU and GPU compute, but those do not count toward the NPU requirement.

Intel’s Lunar Lake (Core Ultra 200V), released in September 2024, raised the NPU contribution to 48 TOPS from the dedicated NPU block alone—exceeding the 40 TOPS Copilot+ threshold from the NPU independently, without counting GPU or CPU AI compute contributions. AMD’s Ryzen AI 300 (Strix Point), built on the XDNA2 architecture, delivered 50 TOPS from its NPU block in mid-2024.

The PC NPU creates a different model than the data center GPU. The GPU in a laptop or desktop handles high-throughput AI tasks: image generation, video processing, and local LLM inference for larger models. The NPU handles always-on, low-latency inference tasks: live transcription, real-time translation, background removal in video calls, and photo enhancement. The NPU runs these tasks continuously without waking the GPU or drawing significant power, extending battery life and reducing thermal load.

The architectural difference between GPU AI compute and NPU inference reflects a fundamental divide in AI workload requirements. GPUs excel at training and high-throughput inference because their massively parallel architecture closely matches the structure of large-batch matrix operations. NPUs optimize for low-power, low-latency single-inference tasks where the overhead of GPU start-up and the power cost of GPU operation outweigh any throughput advantage. Neither architecture replaces the other—they address different points on the AI compute efficiency curve.

Figure 3. NPUs make AI work in training and inference.

The GPU’s transformation from a fixed-function graphics rasterizer to the dominant AI training platform to one component in a heterogeneous AI compute ecosystem took less than two decades. What began as parallel floating-point units for rendering triangles became the infrastructure on which the entire modern AI industry runs. The architectural path from GeForce 8800 and CUDA in 2006, through Volta Tensor Cores in 2017, through mobile NPUs in the same year, through PC NPUs in 2023, shows a hardware ecosystem responding to workload requirements as they emerged—not executing a predetermined plan, but adapting continuously to what researchers and developers needed from the underlying silicon.

Getting smaller

AI GPUs gained specialized, high-performance hardware support for lower-precision formats, from 16-bit down to 4-bit, starting in 2017 and accelerating significantly with the Blackwell architecture in 2024. The industry has shifted from relying solely on 32-bit floating point (FP32) to smaller formats that improve inference speed and reduce memory usage, with 4-bit quantization now acting as a standard for large-scale model inference.

Timeline of low-precision capability development

16-bit (FP16/BF16):

• 2017 (Volta Architecture): Nvidia introduced Tensor Cores with Volta V100, bringing native acceleration for FP16.

• 2020 (Ampere Architecture): Introduced support for BF16 (Brain Floating Point 16) in the A100.

8-bit (INT8):

• 2018 (Turing Architecture): Turing Tensor Cores added INT8 support, extending Tensor Cores from training to efficient inference.

• 2020 (Ampere Architecture): A100 Tensor Cores offered significant INT8 TOPS (Tera Operations Per Second) speed.

4-bit (INT4/FP4):

• 2018–2020 (Turing and Ampere Architectures): Turing Tensor Cores first added INT4 support, and Nvidia Ampere raised its throughput for inference.

• 2024–2025 (Blackwell/Blackwell Ultra): Nvidia introduced NVFP4 (4-bit floating point), purpose-built for 4-bit pretraining and inference, providing up to 3.5× memory reduction compared to FP16.

• 2025 (AMD): AMD RDNA 4 introduces 4-bit integer WMMA (Wave Matrix Multiply Accumulate) operations.

Key architectural milestones

• Volta (2017): First-generation Tensor Cores in the V100 accelerated 4×4×4 matrix multiply-accumulate operations using FP16 inputs.

• Ampere (2020): 3rd Gen Tensor Cores introduced TF32 and BF16 and carried forward INT8 and INT4 support.

• Hopper (2022): H100 introduced native FP8 support and the Transformer Engine.

• Blackwell (2024): 5th Gen Tensor Cores added support for 4-bit matrix operations (FP4), offering double the throughput of 8-bit operations.

Impact on AI

As of 2026, 4-bit quantization (NVFP4) is used to drastically increase inference throughput, enabling massive models to run on lower-power devices and reducing memory usage by 3.5×, while achieving nearly FP8-equivalent accuracy for large-scale inference.
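A reduction of roughly 3.5× rather than a clean 4× is what one would expect from a block-scaled 4-bit format, because the scale factors take up space too. The sketch below assumes a layout in which every 16 values share one 8-bit scale factor; that layout is an assumption about NVFP4 that the text does not state, and a small per-tensor scale is ignored.

```python
ELEMENT_BITS = 4  # FP4 payload per value
BLOCK_SIZE = 16   # ASSUMPTION: number of values sharing one scale factor
SCALE_BITS = 8    # ASSUMPTION: one 8-bit (FP8) scale per block


def effective_bits_per_value(element_bits, block_size, scale_bits):
    """Average storage per value once the shared scale is amortized over the block."""
    return element_bits + scale_bits / block_size


nvfp4_bits = effective_bits_per_value(ELEMENT_BITS, BLOCK_SIZE, SCALE_BITS)
reduction_vs_fp16 = 16 / nvfp4_bits

print(nvfp4_bits)                   # 4.5
print(round(reduction_vs_fp16, 2))  # 3.56
```

Under these assumptions the effective cost is 4.5 bits per value, which lines up with the roughly 3.5× saving over FP16 quoted above.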

What do we think?

The GPU’s evolution into an AI processor was not a design decision—it was a discovery. Researchers found that parallel floating-point hardware designed for graphics mapped almost perfectly to the matrix operations deep learning requires, and the hardware industry followed suit. Every subsequent architectural addition—Tensor Cores, Matrix Core Engines, NPU blocks—responded to a specific performance or efficiency gap that the existing hardware could not close. That pattern continues: The question driving the next generation of AI hardware is not how to make GPUs faster, but how to make inference at massive scale economically viable. The architecture that answers that question will define the next decade of AI silicon.

The GPU’s 2017 introduction of Tensor Cores marked a genuine inflection point—not because it made GPUs faster, but because it made GPUs architecturally distinct from general-purpose processors for the first time. Before Tensor Cores, a GPU did AI the same way it did graphics: through general parallel compute. After Tensor Cores, AI hardware had a dedicated class of silicon with no analog in traditional computing. The current inflection point builds on that foundation: NPUs in every consumer device, dedicated inference silicon in the data center, and the beginning of a disaggregated AI compute model where training, inference, and always-on AI run on different hardware optimized for each workload. That disaggregation, now visible in every major platform from Apple Silicon to AMD Strix Halo to Nvidia Blackwell, is the inflection point the industry is currently navigating.

You can read Part I of this series here, and Part II here.
