Researchers from the University of Macau, the Chinese Academy of Sciences, the University of Dublin, and Nanyang Technological University have set out to build better tensor processing units (TPUs) by focusing on tensor manipulation, aiming to better meet the demands of machine learning and artificial intelligence (ML and AI) acceleration.

“Recent advances in AI SoC [System-on-Chip] design have focused on accelerating tensor computation, while the equally important task of tensor manipulation, centered on high-volume data movement with minimal computation, remains underexplored,” the researchers explain. “This work addresses that gap by introducing the tensor manipulation unit (TMU) […]”

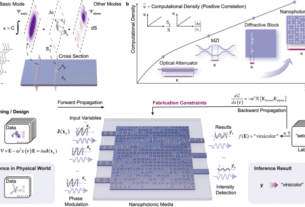

The researchers have designed a tensor manipulation unit (TMU), which they say can dramatically improve latency for AI workloads. (📷: Zhou et al.)

The explosion of interest in generative artificial intelligence has dramatically increased the demand for computing power, both to train models and to run them. Rather than throwing more tensor processing power at the problem, however, the researchers are trying to accelerate the manipulation of tensors, the multilinear transformations that underpin artificial intelligence algorithms.
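To make the distinction between tensor computation and tensor manipulation concrete, here is a minimal Python sketch of one manipulation-style operation, a transpose over a flat row-major buffer. It is only an illustration of a data-movement-heavy operator, not the TMU's actual design; the function name `transpose_flat` and the sample data are assumptions made for this example.

```python
def transpose_flat(src, rows, cols):
    """Transpose a row-major rows x cols matrix stored in a flat list.

    Hypothetical helper for illustration: no arithmetic is performed on
    the element values, only source and destination addresses are computed.
    """
    dst = [None] * (rows * cols)
    for r in range(rows):
        for c in range(cols):
            # Element at (r, c) in the source moves to (c, r) in the result.
            dst[c * rows + r] = src[r * cols + c]
    return dst


matrix = [1, 2, 3,
          4, 5, 6]  # 2 x 3, row-major
transposed = transpose_flat(matrix, 2, 3)  # 3 x 2, row-major
```

Every element is read once and written once, so the cost is dominated by memory traffic rather than by calculation.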

To prove the concept, the team used SMIC's established 40nm process node to build a tensor manipulation unit, or TMU, and integrate it into a system targeting machine learning and artificial intelligence workloads. Despite occupying just 0.019mm² of die area, the TMU, which supports 10 operators, achieved a 1,413.43x reduction in operator-level latency compared to Arm's general-purpose Cortex-A72 processor and an 8.54x reduction compared to Nvidia's Jetson TX2 AI system-on-chip, using an execution model inspired by reduced instruction set computing (RISC). When the TMU is integrated with the team's in-house TPU, the overall gain is reported as a 34.6% reduction in end-to-end inference latency.
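The reported figures mix two ways of expressing a latency gain: an "x" factor and a percentage reduction. The short Python sketch below converts between them, assuming an "N x reduction" means baseline latency divided by new latency; that reading, and the helper names, are assumptions made for this example rather than definitions from the paper.

```python
def remaining_fraction(speedup):
    """Fraction of the baseline latency left after an N-times reduction."""
    return 1.0 / speedup


def speedup_from_reduction(reduction):
    """Equivalent speedup factor for a fractional latency reduction (0..1)."""
    return 1.0 / (1.0 - reduction)


# Operator-level figures reported in the article.
vs_cortex_a72 = remaining_fraction(1413.43)  # well under 0.1% of baseline latency
vs_jetson_tx2 = remaining_fraction(8.54)     # about 11.7% of baseline latency

# End-to-end figure reported in the article.
end_to_end = speedup_from_reduction(0.346)   # about 1.53x overall
```

Under that assumption, the 34.6% end-to-end reduction corresponds to roughly a 1.53x speedup, far smaller than the operator-level factors, which is what one would expect if tensor manipulation makes up only part of the total inference time.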

A preprint of the team's work is available on Cornell's arXiv server under open-access terms.