In today’s hospitals and clinics, dermatologists may use artificial intelligence models to classify skin lesions and assess whether they are at risk of developing into cancer or whether they are benign. However, if the model is biased toward certain skin tones, it may not be able to identify high-risk patients.

Bias is one of the best-known and most persistent challenges in AI research. Although bias is often discussed in relation to training data, model architecture can also encode and amplify biases, which can degrade model performance in real-world settings. In high-stakes medical scenarios, the very real consequences of poor performance make bias a fundamental safety issue.

A new paper from researchers at MIT, Worcester Polytechnic Institute, and Google, accepted at the 2026 International Conference on Learning Representations, proposes a new debiasing approach called “weighted rotation debiasing” (or WRING) that can be applied to vision-language models (VLMs) like OpenCLIP, an open-source implementation of OpenAI’s CLIP.

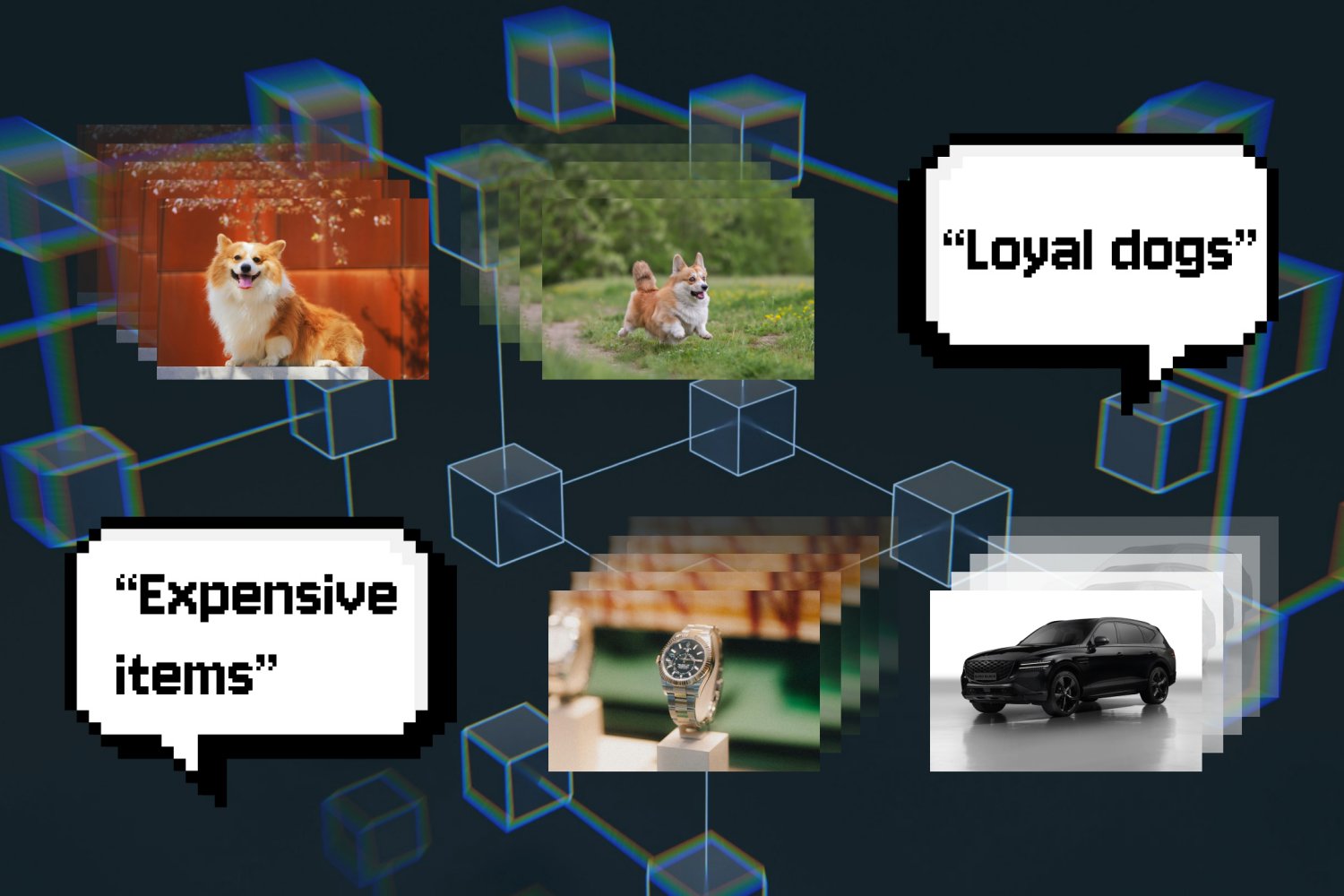

A VLM is a multimodal model that can simultaneously understand and interpret different data modalities such as video, images, and text. Debiasing approaches for VLMs exist, and the most commonly used is known as “projection debiasing,” but it leads to what is known as the “whack-a-mole dilemma,” an empirical observation that was formally introduced into AI research in 2023.

Projection debiasing is a post-processing approach that removes undesired biased information from a model’s embeddings by “projecting out” a subspace of the representation space, thereby removing the bias that subspace encodes. However, this approach also has drawbacks.

“When you do that, you inadvertently crush everything around you,” says the study’s lead author, Walter Gerych, who conducted the study as a postdoc at MIT last year. “When you do this, all the other relationships that the model learns change.”
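The side effect described in the quote above can be seen in a toy example. The following is a minimal sketch, not code from the paper: it assumes a single hypothetical bias direction `v` and two hand-picked three-dimensional embeddings `a` and `b`, whereas real VLM embeddings have hundreds of dimensions and the bias subspace would have to be estimated from data.

```python
import numpy as np

def project_out(embeddings, bias_direction):
    """Remove the component of each row of `embeddings` along `bias_direction`."""
    v = bias_direction / np.linalg.norm(bias_direction)
    return embeddings - np.outer(embeddings @ v, v)

def cosine(x, y):
    """Cosine similarity between two vectors."""
    return float(x @ y / (np.linalg.norm(x) * np.linalg.norm(y)))

# Hypothetical values chosen for illustration only.
v = np.array([1.0, 0.0, 0.0])   # assumed direction that encodes the bias
a = np.array([1.0, 1.0, 0.0])   # toy embedding of one concept
b = np.array([1.0, 0.0, 1.0])   # toy embedding of another concept

cos_before = cosine(a, b)
a_deb, b_deb = project_out(np.stack([a, b]), v)
cos_after = cosine(a_deb, b_deb)

print("component along v after projection:", a_deb @ v, b_deb @ v)
print("cosine(a, b) before projection:", cos_before)
print("cosine(a, b) after projection: ", cos_after)
```

In this toy setup the projection removes the bias component as intended, but the cosine similarity between `a` and `b` falls from about 0.5 to 0, even though neither embedding was the target of the debiasing. That shift in an unrelated relationship is the kind of collateral change the quote refers to.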

Gerych is now an assistant professor of computer science at Worcester Polytechnic Institute. His co-authors on the paper are MIT graduate students Cassandra Parent and Quinn Perrian; Rafiya Javed of Google; and Justin Solomon and Marzyeh Ghassemi, associate professors of electrical engineering at MIT who are affiliated with the Abdul Latif Jameel Clinic for Machine Learning in Health and the Laboratory for Information and Decision Systems.

Projection debiasing stops the model from acting on the biases encoded in the projected-out subspace, but it can end up amplifying or creating other biases, which is the whack-a-mole dilemma. According to Ghassemi, unintentional amplification of model bias is “both a technical and practical challenge. For example, when removing bias in VLMs that acquire images of clinical staff, removing racial bias may have the unintended consequence of amplifying gender bias.”

WRING works by rotating certain coordinates in the model’s high-dimensional space (the coordinates that appear to be causing the bias) to a different angle, which makes the model unable to distinguish between different groups within a given concept. The rotation changes the representation only in that particular subspace while leaving other relationships in the model unchanged. Like projection debiasing, WRING is a post-processing approach, meaning it can be applied “on the fly” to a pre-trained VLM.
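The article does not give the details of the WRING algorithm, so the snippet below is not an implementation of it. It is only a rough sketch of why a rotation can be gentler than a projection: a rotation confined to one plane preserves each embedding's length and leaves every component outside that plane untouched. The directions `u` and `w`, the angle, and the embedding `x` are all hypothetical values chosen for illustration.

```python
import numpy as np

def rotate_in_plane(x, u, w, theta):
    """Rotate x by angle theta within the plane spanned by orthonormal u and w.

    Components of x outside that plane are left unchanged.
    """
    xu, xw = x @ u, x @ w
    # Standard 2-D rotation applied to the in-plane coordinates
    new_u = np.cos(theta) * xu - np.sin(theta) * xw
    new_w = np.sin(theta) * xu + np.cos(theta) * xw
    return x + (new_u - xu) * u + (new_w - xw) * w

# Hypothetical values for illustration only.
u = np.array([1.0, 0.0, 0.0, 0.0])   # assumed bias-carrying direction
w = np.array([0.0, 1.0, 0.0, 0.0])   # assumed second direction defining the plane
x = np.array([1.0, 0.0, 2.0, 3.0])   # toy embedding

rotated = rotate_in_plane(x, u, w, np.pi / 2)

print("original:", x)
print("rotated: ", np.round(rotated, 6))
print("norm before and after:", np.linalg.norm(x), np.linalg.norm(rotated))
```

Here the component along `u` is moved onto `w`, while the last two coordinates and the overall norm stay the same. A projection applied to the same vector would instead discard the `u` component and shrink the embedding.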

“People are already spending a lot of resources and a lot of money training these huge models, and they don’t really want to change anything during training because then they have to start from scratch,” Gerych explains. “[WRING is] very efficient. No further training of the model is required and it is minimally invasive.”

The researchers found that WRING significantly reduced bias for the target concept without increasing bias in other areas. For now, though, the approach is limited to Contrastive Language-Image Pre-training (CLIP) models, a type of VLM that connects images to language for retrieval and classification.

“Extending this to a ChatGPT-style generative language model is a logical next step for us,” Gerych says.

This research was supported in part by a National Science Foundation CAREER Award, an AI2050 Award Early Career Fellowship, a Sloan Research Fellow Award, a Gordon and Betty Moore Foundation Award, and an MIT-Google Computing Innovation Award.