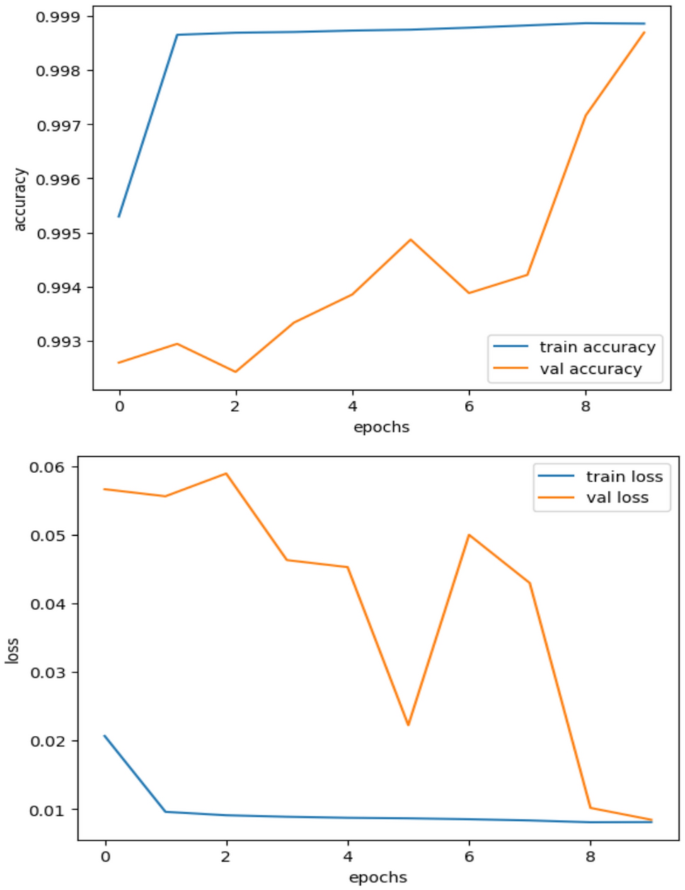

To evaluate our model's overall performance and generalization capabilities, we assess its performance on the training set, on held-out test data, and on additional datasets. The training and validation performance curves are shown in Fig. 15.

Training and validation accuracy and loss performance curves.

Our model appears to generalize effectively to unseen inputs, as indicated by the close agreement between the training and validation accuracy curves. This alignment suggests that the model has learned the underlying patterns in the dataset rather than overfitting to the training set. The consistency of training and validation accuracy also reflects favorably on the model's robustness: it implies that the model can generalize its learning to new scenarios and that noise and outliers in the training data do not significantly affect its performance.

The absence of any visible gap between the training and validation loss curves is one of the strongest indications that the model is not overfitting. It suggests that, rather than merely memorizing the training data, our model has learned to generalize to examples it has not yet encountered, striking a balance between complexity and generalization. Together, the training and validation accuracy and loss curves provide valuable insight into the behavior and generalizability of our Deep Factorization Machine model for SCADA intrusion detection. The nearly identical training and validation accuracies, along with the closely matching training and validation loss trajectories, indicate that our model performs very well without overfitting the training set.
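Whether two curves "show no gap" is usually judged visually, but the gap can also be quantified. The sketch below computes the mean absolute difference between training and validation loss over the last few epochs. The window size and the loss values are hypothetical (they are not the curves in Fig. 15), and any threshold for declaring a gap negligible would be a further assumption.

```python
from typing import Sequence

def generalization_gap(train: Sequence[float], val: Sequence[float], last: int = 5) -> float:
    """Mean |train - val| over the final `last` epochs of two equally long curves."""
    if len(train) != len(val) or len(train) == 0:
        raise ValueError("curves must be non-empty and of equal length")
    n = min(last, len(train))
    return sum(abs(t - v) for t, v in zip(train[-n:], val[-n:])) / n

# Hypothetical per-epoch losses (illustrative values, not the curves in Fig. 15).
train_loss = [0.40, 0.20, 0.10, 0.06, 0.05, 0.04]
val_loss = [0.42, 0.22, 0.11, 0.07, 0.05, 0.05]
gap = generalization_gap(train_loss, val_loss, last=3)
print(f"mean train/val loss gap over the last 3 epochs: {gap:.4f}")
```

With a Keras `History` object, the two lists would typically come from `history.history['loss']` and `history.history['val_loss']`.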

Model evaluation

The six evaluation metrics employed in this study are briefly described below.

i. Accuracy: The accuracy is the percentage of test samples identified correctly out of all test samples.

$$\text{Accuracy} = \frac{\text{TP} + \text{TN}}{\text{TP} + \text{TN} + \text{FP} + \text{FN}}$$

(6)

ii. Precision: Precision is the percentage of samples predicted as positive (attack) that are actually positive.

$$\text{Precision} = \frac{\text{TP}}{\text{TP} + \text{FP}}$$

(7)

iii. Recall: Also known as the detection rate (DR), true positive rate (TPR), or sensitivity, recall is the proportion of all attack samples in a test batch that are correctly identified.

$$\text{Recall} = \frac{\text{TP}}{\text{TP} + \text{FN}}$$

(8)

iv. F1 score: The F1 score is the harmonic mean of precision and recall.

$$\text{F1} = \frac{2 \cdot \text{Recall} \cdot \text{Precision}}{\text{Recall} + \text{Precision}}$$

(9)

v. Confusion matrix: A confusion matrix is a table commonly used to summarize the performance of a classification model. It gives an overview of a model's predictions on a particular dataset by comparing the predicted labels with the true labels. It consists of four main parts:

- TP (true positive): The model correctly predicted the positive class.

- TN (true negative): The model correctly predicted the negative class.

- FP (false positive): The model predicted the positive class for a sample that is actually negative (a Type I error).

- FN (false negative): The model predicted the negative class for a sample that is actually positive (a Type II error).

-

vi.

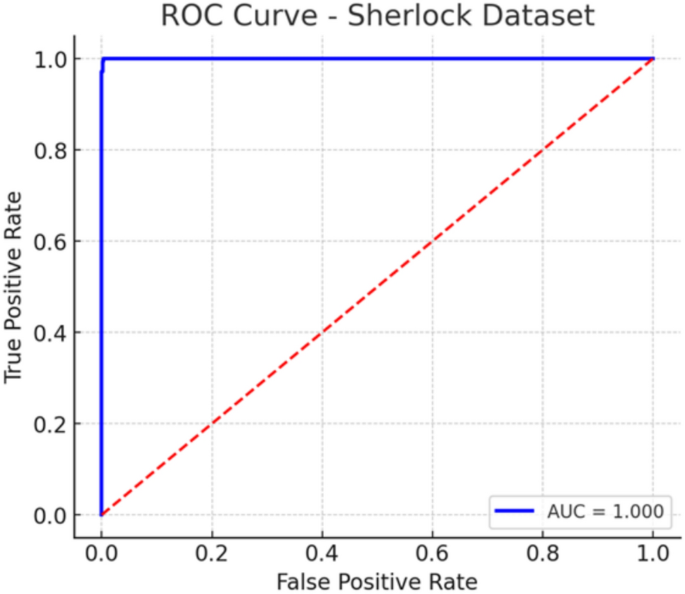

ROC curve: The discrimination threshold of a binary classification model can be adjusted to demonstrate how diagnostic the model is, as shown by the ROC curve, a graphical representation. It is created by plotting the true positive rate (TPR) against the false positive rate (FPR) at various threshold values.

-

TPR: Also known as sensitivity or recall, TPR expresses the proportion of true positive cases the model correctly identifies.

-

FPR: The fraction of actual negative cases the model incorrectly identifies as positive is measured by the False Positive Rate or FPR.

The ROC curve visually represents the trade-off between TPR and FPR across various threshold values. A perfect classifier would have a ROC curve with a high sensitivity and low false positive rate that passes through the top-left corner of the plot (TPR = 1, FPR = 0).
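The metrics in Eqs. (6)–(9) and the ROC construction described above can be computed directly from labels and model scores. The following is a minimal NumPy sketch, assuming binary labels with 1 = attack as the positive class and a model that outputs one score per sample. The function names are our own; in practice, library routines such as scikit-learn's `confusion_matrix`, `roc_curve`, and `roc_auc_score` would typically be used.

```python
import numpy as np

def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels, with 1 = attack as the positive class."""
    t = np.asarray(y_true).astype(bool)
    p = np.asarray(y_pred).astype(bool)
    return (int(np.sum(t & p)), int(np.sum(~t & ~p)),
            int(np.sum(~t & p)), int(np.sum(t & ~p)))

def metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, F1 as in Eqs. (6)-(9); empty denominators give 0.0."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * recall * precision / (recall + precision) if recall + precision else 0.0
    return accuracy, precision, recall, f1

def roc_curve_points(y_true, scores):
    """(FPR, TPR) at every distinct threshold, predicting attack when score >= threshold.
    Assumes both classes are present in y_true."""
    t = np.asarray(y_true).astype(bool)
    s = np.asarray(scores, dtype=float)
    thresholds = np.concatenate(([np.inf], np.unique(s)[::-1]))  # strictest threshold first
    pos, neg = t.sum(), (~t).sum()
    tpr = np.array([np.sum(t & (s >= th)) / pos for th in thresholds])
    fpr = np.array([np.sum(~t & (s >= th)) / neg for th in thresholds])
    return fpr, tpr

def auc_trapezoid(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule (FPR must be non-decreasing)."""
    return float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2.0))

# Toy example (hypothetical labels and scores, not the study's data).
y_true = [0, 0, 1, 1, 0, 1]
scores = [0.10, 0.40, 0.35, 0.80, 0.20, 0.90]
y_pred = [int(s >= 0.5) for s in scores]
tp, tn, fp, fn = confusion_counts(y_true, y_pred)
print((tp, tn, fp, fn))                   # (2, 3, 0, 1)
print(metrics(tp, tn, fp, fn))
fpr, tpr = roc_curve_points(y_true, scores)
print(round(auc_trapezoid(fpr, tpr), 4))  # 0.8889
```

Sweeping every distinct score as a threshold traces the full ROC curve from (0, 0) to (1, 1); the trapezoidal area then equals the fraction of attack/normal pairs that the scores rank correctly.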

The model hyperparameters for training the DeepFM model are presented in Table 6.

The model's performance on unseen test data is reported in Table 7.

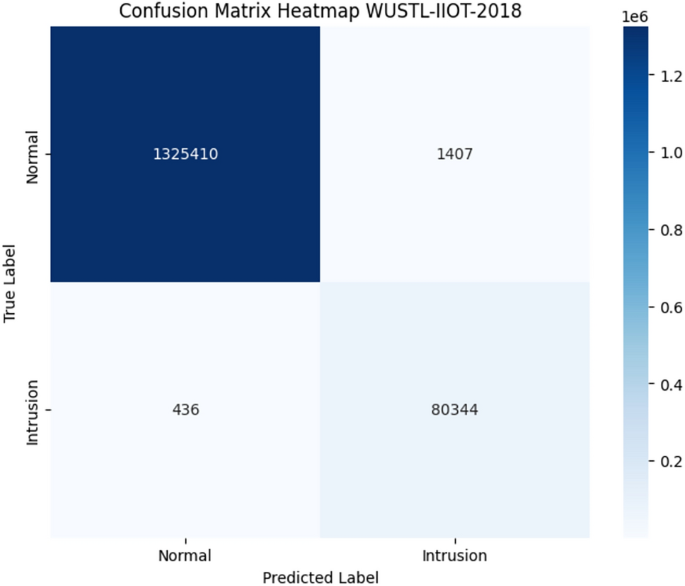

The model appears to perform well on all evaluation criteria on previously unseen test data, which suggests its potential for generalization. As illustrated in Figs. 16 and 17, we further assess the model's performance using the confusion matrix and the ROC curve.

WUSTL-IIOT-2018 confusion matrix on test data.

WUSTL-IIOT-2018 ROC curve on test data.

According to the confusion matrix, nearly all normal and attack samples are correctly classified. This is notable because the dataset is highly imbalanced, which shows that the model makes accurate predictions despite the imbalance. The large size of the dataset likely also contributes to the model's high accuracy. In the normal class, only 1,400 samples are misclassified, whereas about 1.3 million are correctly classified. Similarly, only 436 attack instances are misclassified. Despite the pronounced imbalance between the two classes, the model's performance remains acceptable. The model's performance was also evaluated using the ROC curve, as shown in Fig. 17.
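To put these counts in perspective, the normal-class error rate implied by the figures quoted above (treating 1.3 million as approximate) is

$$\frac{1400}{1400 + 1.3 \times 10^{6}} \approx 1.08 \times 10^{-3} \approx 0.11\%$$

The corresponding attack-class error rate cannot be computed from the text alone, because the number of correctly classified attack samples is not stated here. It can, however, be read from the confusion matrix in Fig. 16.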

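The reported AUC of 1.0 indicates that the model separates the normal and attack classes almost perfectly across decision thresholds. Given the small number of errors in the confusion matrix, this value reflects near-perfect ranking rather than a complete absence of misclassification. We attribute this high performance to the complementary FM and DNN components of the model. These results suggest that the model could serve as a state-of-the-art tool for the cybersecurity of industrial instruments within industrial SCADA frameworks. To further test the model's generalization and applicability, we next evaluate it on other datasets.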
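An AUC of 1.0 can coexist with misclassifications at a fixed decision threshold, and a toy example makes this concrete. This sketch uses the rank (Mann-Whitney) interpretation of AUC: the probability that a randomly chosen attack sample scores higher than a randomly chosen normal sample. The scores are made up, and the quadratic pairwise loop is suitable only for small illustrations, not for the 1.3 million test samples discussed here.

```python
from itertools import product

def rank_auc(y_true, scores):
    """AUC as P(score of a random attack > score of a random normal sample); ties count 1/2."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p, n in product(pos, neg))
    return wins / (len(pos) * len(neg))

def errors_at(y_true, scores, threshold):
    """Misclassifications when predicting attack (1) for score >= threshold."""
    return sum(int(s >= threshold) != y for s, y in zip(scores, y_true))

# Hypothetical scores: every attack outranks every normal sample...
y_true = [0, 0, 0, 1, 1, 1]
scores = [0.10, 0.55, 0.60, 0.65, 0.80, 0.95]
print(rank_auc(y_true, scores))         # 1.0
# ...yet two normal samples still exceed a 0.5 decision threshold.
print(errors_at(y_true, scores, 0.5))   # 2
print(errors_at(y_true, scores, 0.62))  # 0
```

Because the ranking is perfect, some threshold (0.62 in this toy case) yields zero errors; the errors at 0.5 come from the choice of operating point, not from the ranking itself.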

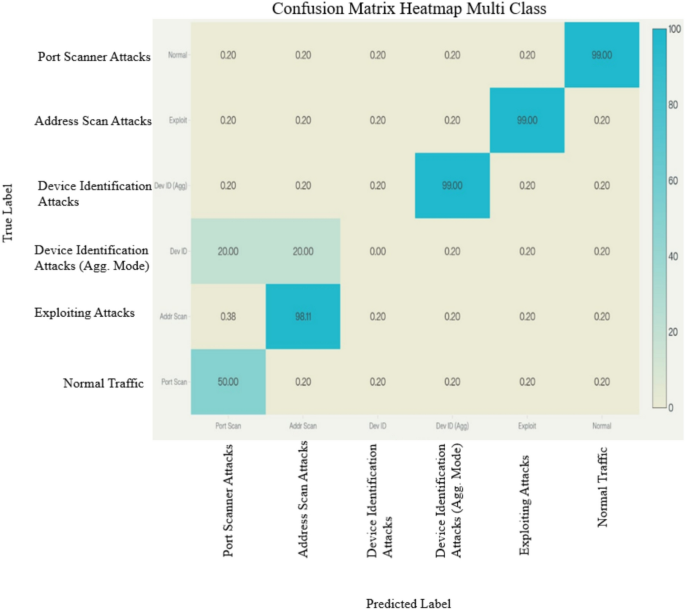

In addition to the binary setting, we evaluate the model on multiclass data, as shown in Figs. 18 and 19; here, too, the performance is satisfactory. As mentioned in the data description section, some classes account for as little as 0.001% of the dataset. Nevertheless, the model classifies these classes correctly, indicating that it does not underfit them despite the imbalance. Owing to the overall size of the dataset, each class still contains enough samples for the model to learn it.

Confusion matrix on WUSTL-IIOT-2018 multi-class.

WUSTL-IIOT-2021 multi-class ROC curve.

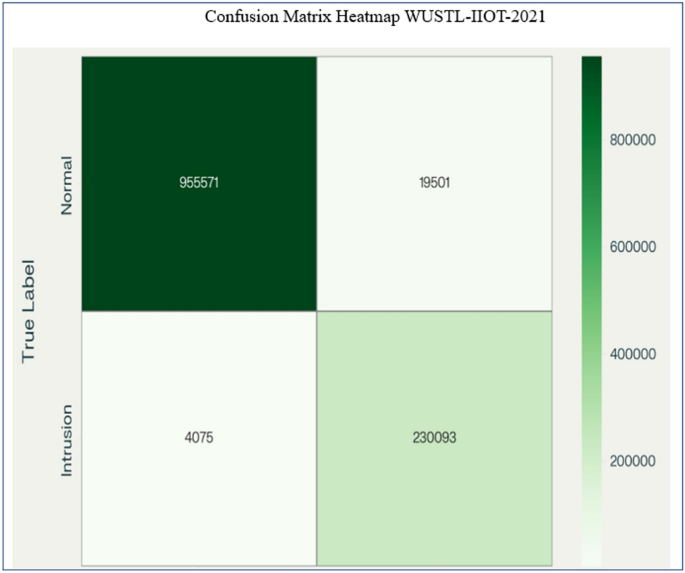

Model evaluation on WUSTL-IIOT-2021 dataset

We examined the IIoT network data in the WUSTL-IIoT-2021 dataset to determine whether it is suitable for a cybersecurity study. The data were collected from an IIoT testbed designed to reflect real-life industrial systems faithfully while allowing them to be attacked realistically. Collecting the 2.7 GB of data took 53 h. The dataset was cleaned and pre-processed by removing extreme outliers, corrupted values (i.e., invalid records), and missing values; the resulting reduced version, which is used here and publicly shared, is just over 400 MB.

As Tables 8 and 9 show, this dataset has many more features: around 41, compared with 6 in the prior dataset. We tested the model on this data without changing its architecture or preprocessing. Table 10 reports its performance.

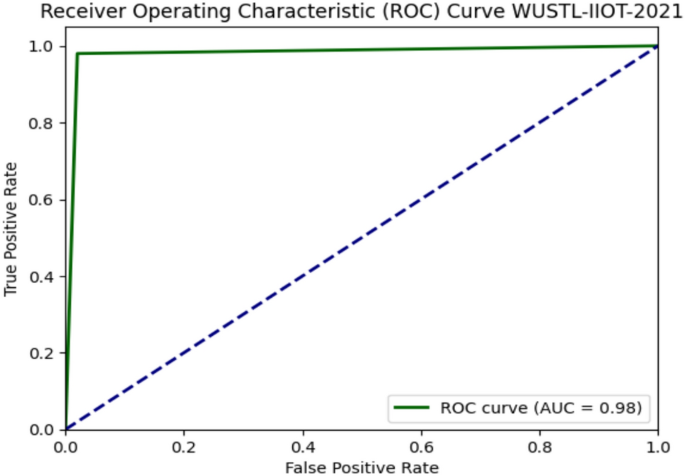

The evaluation metrics also indicate remarkably high performance, with an accuracy of almost 98% even without fine-tuning the hyperparameters or model architecture, demonstrating the model's capacity for generalization and robustness. Although this dataset contains more features, only Sport, Mean, Ploss, SRCLoss, and Dport were identified as the most significant. The highest correlation coefficient (for Sport) was 0.14, whereas the most highly correlated feature in the prior dataset reached 0.30, which may explain the difference in the model's performance between the two datasets. Figures 20 and 21 show the model's confusion matrix and ROC curve.
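The feature ranking above rests on each feature's correlation with the label. A minimal sketch of such a ranking follows, assuming Pearson correlation between each numeric feature and a binary label (0 = normal, 1 = attack). The study does not state which correlation measure it used, and the feature names and data below are synthetic.

```python
import numpy as np

def rank_features_by_correlation(X, y, names):
    """Sort features by |Pearson r| with the label; constant columns are assigned r = 0."""
    X = np.asarray(X, dtype=float)
    yc = np.asarray(y, dtype=float) - np.mean(y)
    ranking = []
    for j, name in enumerate(names):
        xc = X[:, j] - X[:, j].mean()
        denom = np.sqrt(np.sum(xc ** 2) * np.sum(yc ** 2))
        r = float(np.sum(xc * yc) / denom) if denom > 0 else 0.0
        ranking.append((name, r))
    return sorted(ranking, key=lambda item: abs(item[1]), reverse=True)

# Synthetic data: one informative column, one noise column, one constant column.
rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=500)
X = np.column_stack([
    y + 0.1 * rng.normal(size=500),  # strongly label-dependent
    rng.normal(size=500),            # unrelated noise
    np.full(500, 5.0),               # constant
])
ranking = rank_features_by_correlation(X, y, ["f_signal", "f_noise", "f_constant"])
for name, r in ranking:
    print(f"{name}: r = {r:+.3f}")
```

Pearson correlation only captures linear association with the label. A weak maximum correlation, such as the 0.14 reported above, does not rule out nonlinear structure, which the DNN component may still exploit.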

WUSTL-IIOT-2021 confusion matrix heatmap on test samples.

WUSTL-IIOT-2021 AUC-ROC curve on test samples.

The confusion matrix and ROC curve further confirm the model's performance. To further validate the model's generalization and resilience relative to other models, we test it on another dataset.

HAI (HIL-based augmented ICS) security dataset

The HAI dataset was derived from a real industrial control system (ICS) testbed augmented with a Hardware-in-the-Loop (HIL) simulator that emulates steam-turbine power generation and pumped-storage hydropower generation. Using this purely industrial dataset, we evaluate the model's performance without adjusting its hyperparameters or architecture. The model's results on this dataset are shown below.

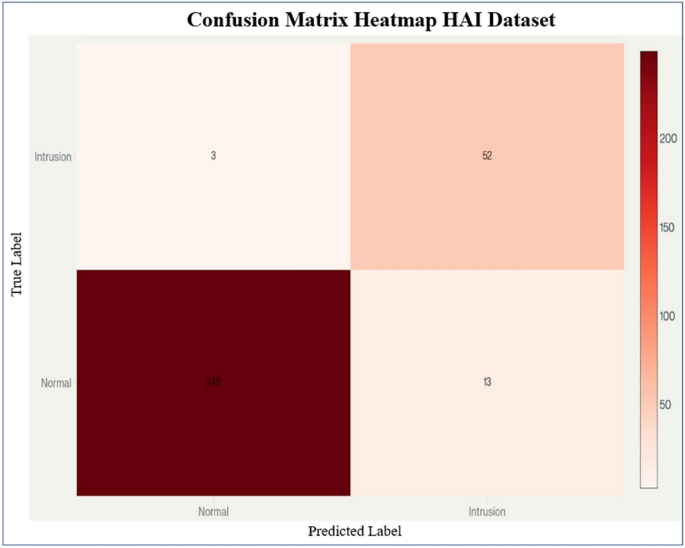

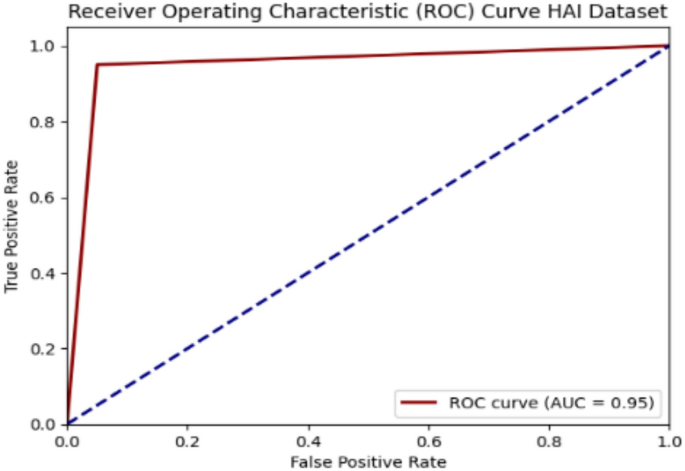

Table 10 also indicates that the model performs very well on this dataset, with an accuracy of about 95%. Although this dataset differs significantly from the prior ICS intrusion datasets, the model performed exceptionally well overall, indicating its capacity for generalization and robustness, and this performance was achieved without any change to the hyperparameters or architecture. Figures 22 and 23 show the confusion matrix and ROC curves for this dataset.

HAI (HIL-based augmented ICS) security dataset confusion matrix test data.

HAI (HIL-based augmented ICS) security dataset ROC curve.

The confusion matrix and ROC curves also indicate the model's strong performance. The model achieved a satisfactory accuracy of around 95%, notably with the model architecture entirely unchanged. Owing to the FM and DNN components, both low-order and high-order feature interactions are captured, resulting in an effective model with good classification performance. These results also suggest that the model can be applied in real-world scenarios to detect anomalies in industrial SCADA environments.

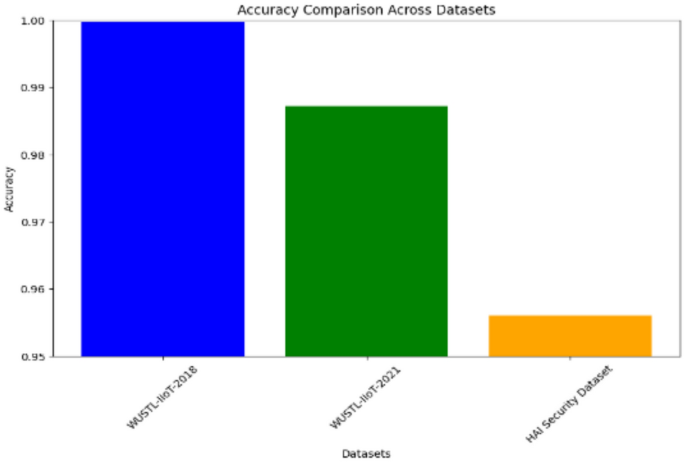

As the bar chart in Fig. 24 shows, the model's accuracy is very high on every dataset. It performs best on WUSTL-IIoT-2018, the dataset for which it was optimized. On WUSTL-IIoT-2021 the accuracy is 98%, indicating the model's potential for generalization, whereas on the HAI security dataset it is 95%.

Evaluation datasets performance for DeepFM model.

Furthermore, we compare the model's performance with that of state-of-the-art machine learning models that are widely used for intrusion detection, though not specifically in SCADA environments. The results are as follows.

Table 11 shows that although the state-of-the-art models perform very well, their performance is noticeably lower than that of the proposed model, supporting its novelty in this intrusion detection domain. By combining a deep learning model with a factorization mechanism, our model outperforms many state-of-the-art models.

The algorithm outlines the critical steps of data preprocessing, model initialization, model training, and model testing. Its main points are summarized as follows:

Step 1 (DeepFM-based intrusion detection workflow): Load dataset: Load the dataset in CSV or database format, using WUSTL-IIoT-2018 as the example in this case. Preprocessing: Standardize the features and split the data into training and test sets.
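Step 1 leaves the split and scaling details open. A minimal NumPy sketch follows, assuming a random 80/20 split (our choice; the study does not state the ratio) and z-score standardization whose mean and standard deviation are estimated on the training split only, so that no test-set statistics leak into training. Loading the CSV (e.g., with `pandas.read_csv`) is omitted, and the toy array stands in for real features.

```python
import numpy as np

def split_and_standardize(X, y, test_size=0.2, seed=42):
    """Random train/test split, then z-score scaling fitted on the training rows only."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    idx = np.random.default_rng(seed).permutation(len(X))
    n_test = int(round(len(X) * test_size))
    test_idx, train_idx = idx[:n_test], idx[n_test:]
    mean = X[train_idx].mean(axis=0)
    std = X[train_idx].std(axis=0)
    std[std == 0] = 1.0  # leave constant features unscaled instead of dividing by zero
    X_train = (X[train_idx] - mean) / std
    X_test = (X[test_idx] - mean) / std  # reuse training statistics: no test leakage
    return X_train, X_test, y[train_idx], y[test_idx]

# Toy stand-in for a loaded feature table (10 rows, 2 numeric features).
X = np.arange(20, dtype=float).reshape(10, 2)
y = np.array([0, 1] * 5)
X_tr, X_te, y_tr, y_te = split_and_standardize(X, y)
print(X_tr.shape, X_te.shape)  # (8, 2) (2, 2)
```

For heavily imbalanced data such as WUSTL-IIoT-2018, a stratified split would keep the rare classes represented in both splits; whether the study used one is not stated.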

Step 2: Model definition: Define DeepFM as the combination of an FM component, which captures low-order feature interactions, and a DNN component, which learns high-order features. Compilation: Compile the model with the Adam optimizer, cross-entropy loss, and accuracy as the evaluation metric.
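Step 2 does not fix implementation details, so the following is a minimal NumPy sketch of a DeepFM forward pass, not the authors' implementation. It assumes that each standardized numeric feature i has an embedding vector v_i scaled by the feature value (x_i · v_i) and that these embeddings are shared by the FM and DNN parts, as in the original DeepFM design. The initialization and function names are illustrative, and the layer sizes are borrowed from the 256-128-64, k = 10 configuration mentioned in Step 6. Training with Adam would normally be delegated to a framework such as TensorFlow/Keras or PyTorch and is not shown; only the cross-entropy loss is written out.

```python
import numpy as np

def init_deepfm(n_features, k=10, hidden=(256, 128, 64), seed=0):
    """Randomly initialise a minimal DeepFM (hypothetical initialisation scheme)."""
    rng = np.random.default_rng(seed)
    params = {
        "w0": 0.0,                                    # global bias
        "w": rng.normal(0.0, 0.01, n_features),       # first-order weights
        "V": rng.normal(0.0, 0.01, (n_features, k)),  # embeddings shared by FM and DNN
        "layers": [],
    }
    sizes = [n_features * k, *hidden, 1]             # DNN input = concatenated embeddings
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        W = rng.normal(0.0, np.sqrt(2.0 / fan_in), (fan_in, fan_out))  # He-style init
        params["layers"].append((W, np.zeros(fan_out)))
    return params

def deepfm_forward(params, X, use_fm=True, use_dnn=True):
    """Return P(attack) for X of shape (batch, n_features); flags allow FM-only / DNN-only."""
    X = np.asarray(X, dtype=float)
    E = X[:, :, None] * params["V"][None, :, :]      # (batch, n, k): row i is x_i * v_i
    logit = np.zeros(X.shape[0])
    if use_fm:
        first_order = params["w0"] + X @ params["w"]
        pairwise = 0.5 * np.sum(E.sum(axis=1) ** 2 - (E ** 2).sum(axis=1), axis=1)
        logit += first_order + pairwise
    if use_dnn:
        h = E.reshape(X.shape[0], -1)
        for i, (W, b) in enumerate(params["layers"]):
            h = h @ W + b
            if i < len(params["layers"]) - 1:
                h = np.maximum(h, 0.0)                # ReLU on hidden layers only
        logit += h[:, 0]
    return 1.0 / (1.0 + np.exp(-logit))              # sigmoid

def binary_cross_entropy(y_true, p, eps=1e-7):
    """Mean binary cross-entropy (probabilities clipped for numerical stability)."""
    p = np.clip(p, eps, 1.0 - eps)
    y_true = np.asarray(y_true, dtype=float)
    return float(-np.mean(y_true * np.log(p) + (1.0 - y_true) * np.log(1.0 - p)))

params = init_deepfm(n_features=6)
X = np.random.default_rng(1).normal(size=(4, 6))
p = deepfm_forward(params, X)
p_fm = deepfm_forward(params, X, use_dnn=False)  # FM-only variant
p_dnn = deepfm_forward(params, X, use_fm=False)  # DNN-only variant
bce = binary_cross_entropy([0, 1, 0, 1], p)
```

The `use_fm`/`use_dnn` flags loosely mirror the FM-only and DNN-only variants used later in the ablation study. The final prediction is the sigmoid of the sum of the two components' logits.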

Step 3: Training loop (per epoch): Train the model on the training batches, then compute the accuracy, confusion matrix, and ROC curve on the test data. Generate performance visualizations when performance milestones are met (e.g., accuracy > 95%).

Table 12 compares the complexity of intrusion detection models.

In addition, we include a complexity analysis of the proposed model. FM component: Using the standard reformulation of the pairwise interaction term, all pairwise feature interactions are computed with a complexity of

$$O(n \cdot k)$$

(10)

where $n$ is the number of features and $k$ is the embedding size. This avoids the $O(n^2)$ cost of explicitly enumerating every feature pair.
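The linear cost of the FM component comes from the well-known factorization machine identity $\sum_{i<j} \langle v_i, v_j \rangle x_i x_j = \frac{1}{2} \sum_{f=1}^{k} \big[ (\sum_i v_{i,f} x_i)^2 - \sum_i v_{i,f}^2 x_i^2 \big]$. The sketch below checks numerically that the naive pairwise sum and the reformulated sum agree; the sizes (n = 6, k = 10) and the random values are illustrative only.

```python
import numpy as np

def fm_pairwise_naive(x, V):
    """sum_{i<j} <v_i, v_j> x_i x_j by enumerating every feature pair: O(n^2 k)."""
    total = 0.0
    for i in range(len(x)):
        for j in range(i + 1, len(x)):
            total += float(V[i] @ V[j]) * x[i] * x[j]
    return total

def fm_pairwise_fast(x, V):
    """Same value via 0.5 * sum_f [(sum_i v_if x_i)^2 - sum_i (v_if x_i)^2]: O(n k)."""
    xv = V * x[:, None]  # row i is x_i * v_i, shape (n, k)
    return float(0.5 * np.sum(xv.sum(axis=0) ** 2 - (xv ** 2).sum(axis=0)))

rng = np.random.default_rng(1)
x = rng.normal(size=6)        # n = 6 features (illustrative)
V = rng.normal(size=(6, 10))  # k = 10 embedding size
print(np.isclose(fm_pairwise_naive(x, V), fm_pairwise_fast(x, V)))  # True
```

The identity follows from expanding $(\sum_i a_i)^2 = \sum_i a_i^2 + 2\sum_{i<j} a_i a_j$ for each embedding dimension $f$.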

Step 4: DNN component: The cost of forward propagation is

$$O\left(\sum_{i=1}^{m-1} L_i \cdot L_{i+1}\right)$$

(11)

where $L_i$ is the number of neurons in layer $i$ and $m$ is the total number of layers. The overall complexity is therefore:

$$O\left(n \cdot k + \sum_{i=1}^{m-1} L_i \cdot L_{i+1}\right)$$

(12)
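As a worked example of Eq. (12), consider $n = 6$ input features (the feature count stated for WUSTL-IIoT-2018), an embedding size $k = 10$, and the 256-128-64 hidden layers of the configuration evaluated in Step 6. Assume, as in the usual DeepFM design, that the DNN input is the concatenation of the $n$ embeddings ($L_1 = n \cdot k = 60$) and that the network ends in a single output unit ($L_5 = 1$, so $m = 5$). Then:

$$n \cdot k + \sum_{i=1}^{4} L_i L_{i+1} = 60 + (60 \cdot 256 + 256 \cdot 128 + 128 \cdot 64 + 64 \cdot 1) = 60 + 56{,}384 = 56{,}444$$

Under these assumptions, the DNN term dominates the per-sample operation count, so the cost is governed mainly by the hidden-layer widths. These figures are illustrative and need not match Table 13.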

Step 5: Performance validation: This balance allows DeepFM to learn both low-order interactions (FM) and high-order feature abstractions (DNN) without becoming computationally prohibitive for near-real-time SCADA/ICS intrusion detection.

To further validate the proposed DeepFM framework and address concerns about the validity of its performance, we conducted additional experiments, including ablation studies, cross-dataset validation, and baseline comparisons. These analyses provide stronger evidence of the model's strength, generalizability, and improvement over existing approaches.

Step 6: Model efficiency estimation: To provide concrete figures, we estimated the number of parameters and floating-point operations (FLOPs) of our best-performing model (DeepFM with three hidden layers of 256, 128, and 64 units and k = 10). Table 13 compares the computational complexity of DeepFM with that of the baselines.

The FM-only model has low complexity but sacrifices accuracy.

The DNN-only model is significantly larger than the FM-only model, with substantially more FLOPs and slower inference.

The DeepFM hybrid adds only about 12 percent overhead relative to the DNN-only model, yet provides accuracy gains of 2–4 percent.

Notably, inference takes approximately 1 ms per sample, which makes the model suitable for low-latency SCADA deployments, where latencies of a few milliseconds are acceptable.

In theory, the complexity is linear in the number of features and in the number of connections between adjacent hidden layers, enabling the method to scale to large datasets.

Empirical measurements indicate that DeepFM balances accuracy and efficiency effectively: it trains in a reasonable amount of time and infers quickly enough to support real-time intrusion detection.

The FM module adds minimal cost relative to the DNN-only model while delivering substantial gains in recall and F1-score.
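Parameter and FLOP counts like those summarized in Table 13 can be estimated analytically. The helper below is a sketch under an assumed layout: a global bias, n first-order weights, an n × k embedding table shared by the FM and DNN parts, a DNN whose input is the n·k concatenated embeddings, and a single output unit. FLOPs are approximated as twice the operation count of Eq. (12). The example uses n = 6 purely for illustration; the resulting figures need not match Table 13, which depends on the actual input encoding.

```python
def deepfm_param_count(n_features, k, hidden):
    """Trainable parameters under the assumed layout described above."""
    fm = 1 + n_features + n_features * k  # global bias + first-order weights + embeddings
    sizes = [n_features * k, *hidden, 1]  # DNN input = concatenated embeddings
    dnn = sum(a * b + b for a, b in zip(sizes[:-1], sizes[1:]))  # weights + biases per layer
    return fm + dnn

def deepfm_flops(n_features, k, hidden):
    """Approximate forward FLOPs per sample: 2x the operation count of Eq. (12)."""
    sizes = [n_features * k, *hidden, 1]
    dnn_ops = sum(a * b for a, b in zip(sizes[:-1], sizes[1:]))
    return 2 * (n_features * k + dnn_ops)

print(deepfm_param_count(6, 10, (256, 128, 64)))  # 56900
print(deepfm_flops(6, 10, (256, 128, 64)))        # 112888
```

Counting one multiply-add as two FLOPs is a common convention; other conventions would halve the FLOP figure without changing the relative comparison between models.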

Model evaluation on Sherlock dataset

To better justify our methodology and extend its application to more industrial cases, we supplemented our experimental analysis with an additional, medium-sized dataset that is more relevant to the domain. After examining current benchmark tasks, we selected the Sherlock dataset, which focuses on power grid intrusion detection, because it is recent, realistic, and well regarded in the ICS sector.

Augmentation of the Sherlock dataset (power grid intrusion detection)

i. Introduction to Sherlock.

The Sherlock dataset, introduced in 2025, is particularly well suited for process-aware intrusion detection in power grid networks, a key research area in SCADA/ICS. It was generated with the Wattson co-simulator and contains realistic attacks (such as state-variable and measurement manipulation) on a modern power grid system. With its modern attack profiles and process simulation, Sherlock provides a strong basis for assessing the generalization and robustness of intrusion detection models on cases that differ from typical network traffic. Table 14 shows DeepFM's performance on the Sherlock dataset.

The F1-score of 0.955 on the Sherlock dataset indicates that the model can identify subtle, process-level anomalies while maintaining stable performance in dynamic, multi-sensor ICS power grid environments. Figure 25 shows the multi-class ROC curve for the Sherlock dataset.

Sherlock dataset multi-class ROC Curve.

Across the ROC curves for the WUSTL-IIoT-2018, WUSTL-IIoT-2021, HAI, and Sherlock datasets, the model achieves excellent performance on nearly all datasets, indicating its generalization ability and its potential for practical, real-world intrusion detection.

ii. Cross-dataset evaluation

Table 15 compares the model's performance side by side on the WUSTL-IIoT-2018, WUSTL-IIoT-2021, HAI, and Sherlock datasets, illustrating its versatility.

- Diverse applicability: The results on Sherlock suggest that DeepFM can be extended to other ICS areas, such as IoT-driven SCADA simulation and power grid modeling.

- Stability in various industrial scenarios: The results on the HAI Security Dataset emphasize DeepFM's flexibility in addressing various ICS-related security issues, including those in power-generation processes such as the steam-turbine and pumped-storage hydropower systems emulated in HAI.

- Performance variation insight: Overall performance is slightly lower on Sherlock than on the WUSTL-IIoT datasets, which we attribute to the complexity and real-world detail inherent in process-level simulation data.

- Equitable detection ability: The model's recall (0.959) demonstrates a strong ability to detect process anomalies, and its precision (0.952) indicates a low false positive rate. This balance is crucial when dealing with critical ICS, such as power systems.

- No change in architecture: The architecture was not modified for Sherlock, yet performance remained strong. This confirms DeepFM's ability to adapt to new datasets without redesign, which is of significant practical value.
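As a consistency check, the precision and recall reported above reproduce the Sherlock F1-score through Eq. (9):

$$\text{F1} = \frac{2 \cdot 0.959 \cdot 0.952}{0.959 + 0.952} = \frac{1.825936}{1.911} \approx 0.955$$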

The external evaluation on the Sherlock dataset, in addition to the previously evaluated WUSTL-IIoT datasets, supports the high performance of our DeepFM model and its generalizability across different SCADA and ICS environments. Our multi-dataset benchmark demonstrates our contribution: it shows generality, domain independence, and intrusion detection performance that remains stable under various conditions.

iii. Ablation study.

We examine the contributions of each component within DeepFM via an ablation study. Specifically, we performed the following experiments: (i) utilizing only the FM module, (ii) utilizing only the DNN module, and (iii) employing the integrated DeepFM model comprising both modules. This experiment highlights the significant advantages that the combined architecture offers over the individual sub-modules.

Table 16 shows the results of the ablation study on the WUSTL-IIoT-2018 and WUSTL-IIoT-2021 datasets using the FM-only, DNN-only, and DeepFM models. The FM-only model achieves high results on both datasets, capturing low-order feature interactions well and attaining accuracies of 0.942 on WUSTL-IIoT-2021 and 0.963 on WUSTL-IIoT-2018. However, it does not model high-order structure, and its lower recall values indicate that complex, nonlinear dependencies are difficult for it to represent. The DNN-only variant, in turn, performs better, with higher accuracy/F1-scores (0.968/0.966 on 2021; 0.971/0.963 on 2018), which suggests that it captures high-order relationships; however, it does not explicitly model the simpler, low-order associations.

The hybrid DeepFM consistently outperforms the separate modules. On WUSTL-IIoT-2021, it achieves an accuracy of 0.987 and an F1-score of 0.994, demonstrating both linear and nonlinear modeling capabilities. Similarly, on WUSTL-IIoT-2018, DeepFM achieves the best accuracy (0.991) and balanced scores across all metrics. Across all results, DeepFM outperforms the standalone modules, with differences of about 2 to 3 percent, indicating that the complementary strengths of the FM and DNN modules enable it to generalize better. These results confirm that combining linear and nonlinear interactions is critical to achieving optimal performance in IIoT intrusion detection.

iv. Cross-dataset validation

To test DeepFM's ability to generalize, we ran additional experiments on three datasets: WUSTL-IIoT-2021, HAI Security, and Sherlock. These datasets differ significantly in feature distributions, class imbalance ratios, and patterns. If the model performs well on all of them, it is unlikely to have overfit to any single dataset. Table 17 shows the cross-dataset performance of DeepFM.

The results show that DeepFM performs strongly, with accuracy consistently in the range of 95.6% to 99.1%. Notably, the F1-score on WUSTL-IIoT-2021 is the highest (0.994), suggesting an excellent precision-recall balance despite the dataset's inherent heterogeneity. Performance is sustained even on the more challenging HAI dataset, which features subtler sensor anomalies, with an F1-score of 0.954. On the Sherlock dataset, which targets cybersecurity anomalies in power grids, DeepFM achieves a precision of 0.954 with balanced measures, indicating that it can make reliable decisions in complex, realistic situations. These results indicate that DeepFM transfers effectively across industrial settings, demonstrating its applicability beyond a single benchmark.

-

v.

Comparison with baseline studies

To demonstrate the novelty more convincingly, we compared the proposed DeepFM framework with widely used machine learning models, including Logistic Regression, XGBoost, and Random Forest, as shown in Table 18. We also included results reported in recent peer-reviewed articles.

The comparison shows that DeepFM, with an accuracy of 99.9%, outperforms all baselines, including Random Forest, which achieves 98.9%. Whereas Logistic Regression learns only first-order (linear) effects and XGBoost learns interactions implicitly through tree ensembles, DeepFM learns low-order and high-order feature interactions simultaneously within a unified framework. This architectural advantage leads to higher performance, demonstrating the novelty and contribution of the proposed method relative to existing methods.

Together, the ablation study, cross-dataset validation, and baseline comparisons give an in-depth view of DeepFM's performance advantages. The ablation study confirms that combining FM and DNN is necessary. The cross-dataset validation supports strong generalization and robustness even under distribution shift. Finally, the comparative analysis shows that DeepFM increases accuracy by a small margin while providing a consistent model that can be applied to other IIoT/SCADA datasets. These findings support the soundness of the proposed model and its advantage over current techniques.

Model performance under SCADA: high response and complex settings

With proper optimization, the DeepFM model can be integrated into SCADA systems, which require high availability and rapid response times. Although DeepFM requires considerable resources, its linear time complexity and effective handling of sparse features allow it to balance speed against computational cost. The model can be trained offline and deployed for fast, real-time inference, reducing computation during critical SCADA processes. GPU or TPU acceleration can further shorten response times, helping to meet the system's latency requirements. Techniques such as model compression, pruning, and parallel processing can reduce resource requirements while maintaining accuracy, easing integration without compromising SCADA performance. Distributed computing can spread the model's computational burden, and failover procedures can ensure continuity in the event of model failure. Resource isolation strategies, such as containerization, can protect essential SCADA operations by preventing the model from consuming excessive resources. Despite its complexity, we argue that DeepFM's improved detection accuracy over simpler models justifies its use in SCADA contexts: its high detection precision, combined with optimizations that minimize its impact on system performance, can enhance SCADA security while maintaining operational continuity.

Comparative analysis

The comparative analysis of studies on the WUSTL-IIoT dataset in Table 19 highlights recent advances in intrusion and anomaly detection methods. The PSO + Bat Algorithm combined with Random Forest achieved an accuracy of 95.68%⁷. More recent KNN-based models have achieved a higher accuracy of 96.67%¹¹. Alternative approaches, such as ensemble and hybrid algorithms, have also been explored. For instance, Random Forest combined with Isolation Forest and the Pearson Correlation Coefficient (PCC) achieved a moderate accuracy of 93.57%²¹, which illustrates the variability of traditional machine learning strategies on this dataset. Deep learning models such as Convolutional Neural Networks (CNN) and Feedforward Neural Networks (FNN) achieved accuracies of 93.08% and 93.26%, respectively²³, showing that neural models can capture complex patterns, although they do not yet reach 94%.

Subsequent deep learning work, including Outlier-Aware Deep Autoencoders, achieved a higher accuracy of up to 96.1%, indicating the promise of deep anomaly detectors specialized for the task²⁴. Likewise, Gradient Boosting and Support Vector Machine (SVM) attained accuracies of 96.5% and 95.85%, respectively³⁰, whereas the Subspace Discriminant Algorithm reached only 93.1%²⁸. These findings suggest that traditional machine learning and deep learning models offer competitive yet somewhat divergent performance on the WUSTL-IIoT dataset, with none exceeding 97% accuracy.

In contrast, our DeepFM-based model outperforms all of these models, achieving an accuracy of up to 99.99% on the WUSTL-IIoT-2018 dataset. This reflects DeepFM's ability to model both low- and high-order interactions between features, which is crucial for detecting subtle anomalies and intrusions in IIoT environments. The near-perfect accuracy suggests that DeepFM improves generalization and performance relative to earlier models, making it a leading choice for addressing the challenges of industrial IoT security. This substantial performance gap highlights the promise of deep factorization machines for complex cybersecurity tasks.

Table 20 compares our proposed model, DeepFM, with state-of-the-art methods. The results show that our model consistently outperforms the other models (99.99% vs. 94–98% on WUSTL-IIoT-2018) and also demonstrates strong generalization across datasets, reaching 95% on HAI and 98% on WUSTL-IIoT-2021 without architectural changes. This highlights the benefit of a single architecture that captures both low-order feature interactions (through FM) and high-order feature dependencies (through DNN).

This extended comparison shows not only that our model achieves better accuracy but also that it generalizes well across multiple datasets, which, to our knowledge, has not previously been reported in the literature. Including these recent works reinforces the value of our work and highlights the novelty of using DeepFM for SCADA intrusion detection systems (IDS).

Random Forest (RF) and K-Nearest Neighbors (KNN) are classical machine learning methods that have been applied to SCADA datasets with relatively high accuracy (95 to 97 percent). However, these models are limited in their ability to capture high-order feature interactions and require extensive feature engineering. Convolutional Neural Networks (CNNs) and Feedforward Neural Networks (FNNs), by contrast, can perform hierarchical feature extraction, but they do not perform well on highly imbalanced datasets and tend to perform worse on categories with few examples.

Furthermore, sophisticated hybrid systems, such as Outlier-Aware Deep Autoencoders and PSO-Bat Algorithm-based Random Forests, have attempted to enhance anomaly detection in industrial networks. Despite their advanced preprocessing or ensemble techniques, these methods often yield subpar performance when applied to different SCADA datasets or require substantial computational resources. By comparison, DeepFM combines Factorization Machines, which capture low-order interactions, with Deep Neural Networks, which capture high-order interactions, and has shown strong and consistent accuracy (up to 99.99%) across diverse datasets without extensive feature engineering.

To better characterize the current literature and its gaps, we expanded our review by categorizing prior works according to their features, dataset type, model complexity, and scalability, as presented in Table 21 above. This table not only reveals the unique benefits of our proposed model but also positions it within the broader research landscape, showing that it addresses both the complexity and the generalizability problems observed in many past studies.

The results shown in Table 20 indicate that although many conventional and hybrid models perform well in SCADA intrusion detection, they often struggle with high-dimensional data, sparse categorical features, and generalizing across different datasets. For instance, while Random Forest and KNN models can achieve high accuracy on specific datasets, they may fail in scenarios with class imbalance or complex feature interactions. Similarly, deep learning models like CNNs or autoencoders can provide advanced feature extraction, but they also face limitations in scalability and handling sparse tabular data.

In contrast, the proposed DeepFM framework generally performs better by leveraging the advantages of factorization machines and deep neural networks. It captures both low- and high-order feature interactions without requiring extensive feature engineering. This dual approach not only improves classification but also increases generalization across multiple SCADA datasets, as evidenced by testing on the WUSTL-IIoT-2018, WUSTL-IIoT-2021, and HAI datasets. Overall, the extended analysis supports the argument that the proposed methodology represents a significant advancement in accuracy, robustness, and adaptability, addressing gaps in the current state of the art.