Quantum machine learning holds great promise for creating powerful and efficient algorithms, but progress is hampered by a key limitation: the difficulty of extracting information from quantum systems. Guilin Zhang, Wulan Guo, and Ziqi Tan of George Washington University, along with Hongyang He and Hailong Jiang of Youngstown State University, are tackling this challenge with a new model architecture that sidesteps this measurement bottleneck. Their work introduces a lightweight system that combines measured quantum features with the original raw data before classification, circumventing the limitation without adding significant computational cost. The reported results show an accuracy improvement of up to 55% over the quantum baseline, along with stronger privacy, and point to a viable path toward practical quantum machine learning in resource-limited environments such as edge computing.

Although quantum machine learning promises compact and expressive representations, it suffers from a performance limitation known as the measurement bottleneck, which also amplifies privacy risks. The researchers propose a lightweight hybrid architecture that combines quantum features and raw inputs before classification, circumventing this bottleneck without increasing the complexity of the quantum system. This approach passes more information from the quantum system to the classical processing stage, easing the constraint imposed by a small number of readout values. In experiments, the new model outperforms both a pure quantum model and a previous hybrid model in both centralized and federated learning settings, achieving up to a 55% accuracy improvement over the quantum baseline.

Avoid measurement bottlenecks with residual hybrid architecture

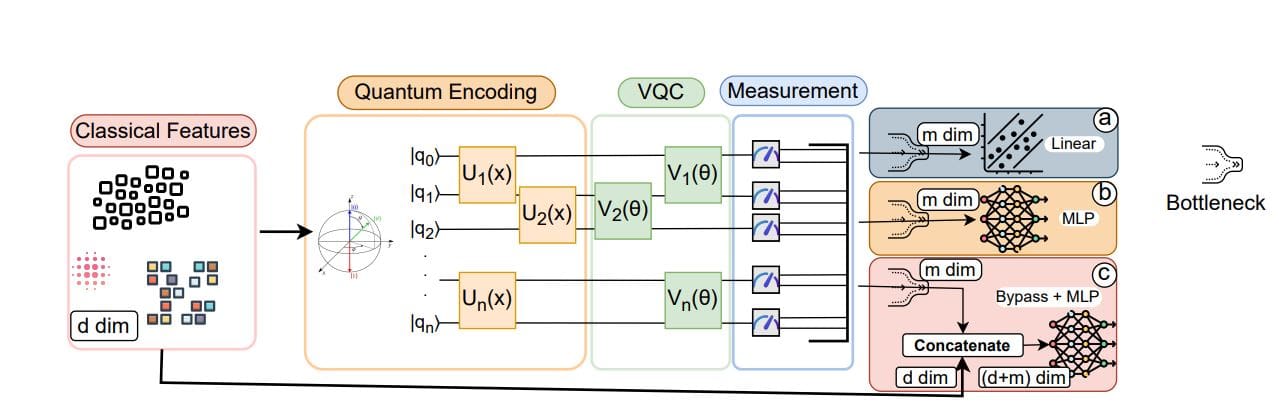

The research team designed a new hybrid quantum-classical model to overcome the measurement bottleneck inherent in quantum machine learning. This bottleneck arises from compressing high-dimensional classical inputs into a small number of quantum observables, which limits accuracy and amplifies privacy risks. To address this, the team developed an architecture that combines the raw classical input with the measured quantum features before classification. This approach bypasses the bottleneck by exposing both the original input and the quantum-enhanced features to the classifier, without changing the underlying quantum circuitry.

The study compared this new model with both a pure quantum model and a standard hybrid model, keeping the number of parameters consistent for a fair evaluation. Classical inputs are first encoded into quantum states and transformed by parameterized quantum circuits, whose measurements yield the quantum features. The team's method then concatenates the original classical input with these quantum features to form a combined representation. A projection layer reduces the dimensionality of this combined representation before classification, ensuring a consistent classifier input size across all models.
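The data flow described above can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the authors' implementation: the function names, the `tanh` projection, and the weight shapes are hypothetical, and the quantum circuit is replaced by a closed-form stand-in (one RY angle encoding plus one trainable RY rotation per qubit, with no entanglement), for which the Pauli-Z expectation is exactly `cos(x_i + theta_i)`.

```python
import numpy as np

def quantum_features(x, theta):
    """Stand-in for the measured quantum features (an assumption, not the paper's circuit).

    Each of the first n_qubits inputs is angle-encoded with RY(x_i) on its own
    qubit, followed by a trainable RY(theta_i), with no entangling gates.
    For this product circuit the Pauli-Z expectation is cos(x_i + theta_i).
    """
    n_qubits = theta.shape[0]
    return np.cos(x[:, :n_qubits] + theta)

def residual_hybrid_forward(x, theta, w_proj, w_cls):
    """Forward pass: bypass the readout by concatenating raw input and quantum features."""
    q = quantum_features(x, theta)                # (batch, n_qubits)
    combined = np.concatenate([x, q], axis=1)     # (batch, d + n_qubits)
    hidden = np.tanh(combined @ w_proj)           # projection layer (hypothetical tanh)
    return hidden @ w_cls                         # class logits
```

A pure quantum model in this sketch would feed only `q` to the classifier, so everything the classifier knows about a 13-feature input would have to pass through `n_qubits` numbers in [-1, 1]; the concatenation is what removes that constraint.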

The team evaluated the new architecture on four benchmark datasets: Wine, Breast Cancer, a Fashion-MNIST subset, and a Forest CoverType subset. They used membership inference attacks to measure the likelihood of information leakage, evaluating both accuracy and privacy robustness under a model-release threat. Attack performance is quantified using the area under the curve (AUC), with values around 0.5 indicating that members and non-members are indistinguishable, which means strong privacy protection. The results show that the hybrid model achieves near-classical accuracy with 10-20% fewer parameters than the baseline model, and that privacy robustness is significantly improved. Ablation studies confirmed that exposing both the raw input and the quantum features to the classifier is important for overcoming the measurement bottleneck and maximizing performance.
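To make the AUC criterion concrete, the following sketch computes the attack AUC from per-example attack scores. It is an illustration under assumptions: the article does not say which attack or score the authors used, so `membership_auc` and its inputs (for example, the model's confidence on each example) are hypothetical. The AUC is computed as the probability that a randomly chosen training member scores higher than a randomly chosen non-member, with ties counted as one half.

```python
import numpy as np

def membership_auc(member_scores, nonmember_scores):
    """AUC of a score-based membership inference attack.

    Higher scores are assumed to mean "more likely a training member".
    Returns P(member score > non-member score) + 0.5 * P(tie).
    """
    m = np.asarray(member_scores, dtype=float)[:, None]     # column vector
    n = np.asarray(nonmember_scores, dtype=float)[None, :]  # row vector
    wins = (m > n).sum()    # member ranked above non-member
    ties = (m == n).sum()   # attacker cannot tell them apart
    return (wins + 0.5 * ties) / (m.size * n.size)
```

An attacker who perfectly separates members from non-members gets 1.0, while identical score distributions give 0.5, the chance level that the text describes as strong privacy protection.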

Hybrid quantum learning improves accuracy and privacy

This work presents a novel hybrid architecture for machine learning that addresses the measurement bottleneck in quantum models and enhances privacy. Experiments demonstrate significant improvements in accuracy and privacy robustness in both centralized and federated learning settings. The team achieved up to a 55% accuracy improvement over the baseline model while maintaining low computational cost and enhanced privacy. Evaluation across the four datasets (Wine, Breast Cancer, Fashion-MNIST, and Forest CoverType) reveals that pure quantum models consistently underperform because of readout limitations.

In contrast, the hybrid models achieve higher accuracy with fewer parameters than traditional baselines, and deeper variants improve performance further. These results confirm that the bypass strategy alleviates the readout bottleneck without requiring an increase in quantum circuit depth. Privacy evaluation using membership inference attacks shows an inherent privacy benefit of the hybrid architecture: while the classical model exhibits a high degree of privacy leakage, the hybrid model achieves significantly stronger privacy robustness.

This privacy improvement is achieved without explicit noise injection, unlike differential privacy techniques, which can significantly reduce accuracy. In a federated learning scenario with five clients, the hybrid model reaches over 90% accuracy on the Breast Cancer dataset, comparable to traditional federated learning, while reducing communication overhead by approximately 15%, transmitting 1.7 MB over 50 rounds. Convergence takes 22 rounds, demonstrating a practical trade-off between performance and communication efficiency. Ablation studies further isolate the effect of the bypass connection and confirm that it improves both accuracy and privacy.
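The link between model size and communication cost in a federated setting can be illustrated with a short sketch. It assumes FedAvg-style weighted averaging and 4-byte parameters sent in both directions each round; the article does not confirm the aggregation rule or the encoding, both function names are hypothetical, and the sketch does not attempt to reproduce the reported 1.7 MB figure.

```python
import numpy as np

def fed_avg(client_params, client_sizes):
    """Average client parameter vectors, weighted by local dataset size (FedAvg-style)."""
    params = np.stack(client_params)                 # (n_clients, n_params)
    weights = np.asarray(client_sizes, dtype=float)
    weights = weights / weights.sum()                # normalize to sum to 1
    return weights @ params                          # (n_params,)

def communication_bytes(n_params, n_clients, rounds, bytes_per_param=4):
    """Total traffic, assuming each round the server sends the model to every
    client and every client sends a full update back (hence the factor 2)."""
    return 2 * n_params * bytes_per_param * n_clients * rounds
```

Under these assumptions the traffic scales linearly with the parameter count, so a model with fewer parameters transmits proportionally less over the same number of rounds.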

Residual connectivity unlocks quantum machine learning

The work introduces a novel hybrid quantum-classical architecture designed to overcome the measurement bottleneck in quantum machine learning. By combining the original input data with quantum-transformed features before classification, the team was able to avoid this bottleneck without increasing the complexity of the quantum circuit. Experiments demonstrated up to a 55% accuracy improvement over existing methods in both centralized and federated learning environments. Importantly, these improvements were achieved while maintaining a compact model size and improving protection against membership inference attacks.

The research team confirmed the value of this bypass connection at the quantum-classical interface through detailed ablation studies. The approach provides a practical path to integrating quantum machine learning into resource-constrained settings such as edge computing, and it is readily compatible with existing quantum-classical hybrid systems because it leaves the underlying quantum circuitry unchanged. While the researchers acknowledge that more complex architectures exist, they emphasize that the benefits of such architectures are often only incremental, which strengthens the case for the basic model in real-world deployments. Future work will focus on applying this technique to larger models, testing it on real quantum hardware, and exploring its potential in broader security contexts, including adversarial robustness and differential privacy.