Image by rawpixel.com on Freepik

Machine learning models are only useful when used in production to solve business problems. However, business problems and machine learning models are constantly evolving. Therefore, machine learning must be maintained to keep up with business KPIs. This is where the concept of MLOps came from.

MLOps (Machine Learning Operations) is a collection of techniques and tools for machine learning in production. Machine learning automation, version control, delivery, and monitoring are what MLOps handles. This article focuses on monitoring and how to use Python packages to configure monitoring model performance in production. Let’s get into it.

When we talk about monitoring in MLOps, we can mean many things as one of the principles of MLOps is monitoring. for example:

– Monitor changes in data distribution over time

– Monitor features used in development and production

– Monitor model decay

– Monitor model performance

– Monitor system age

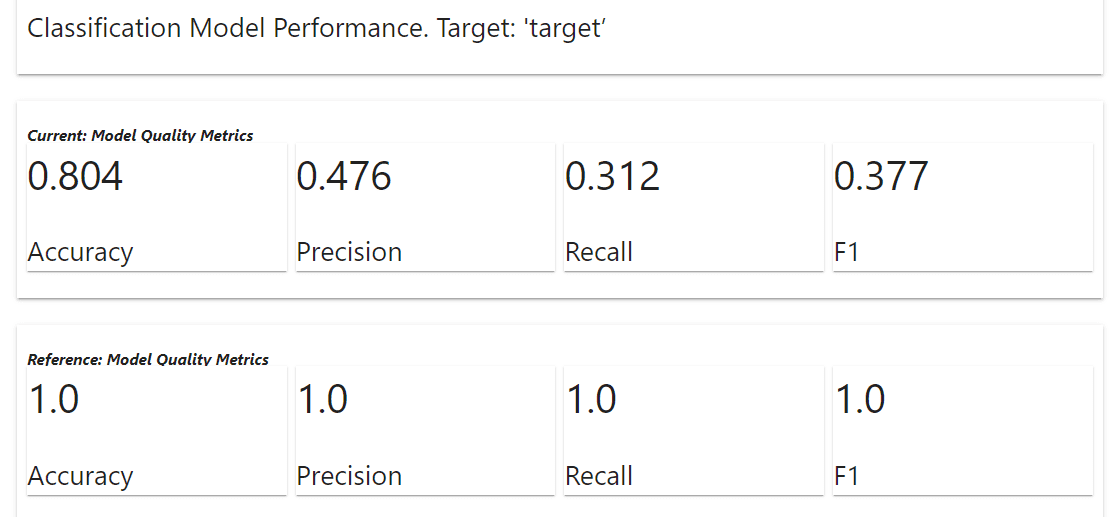

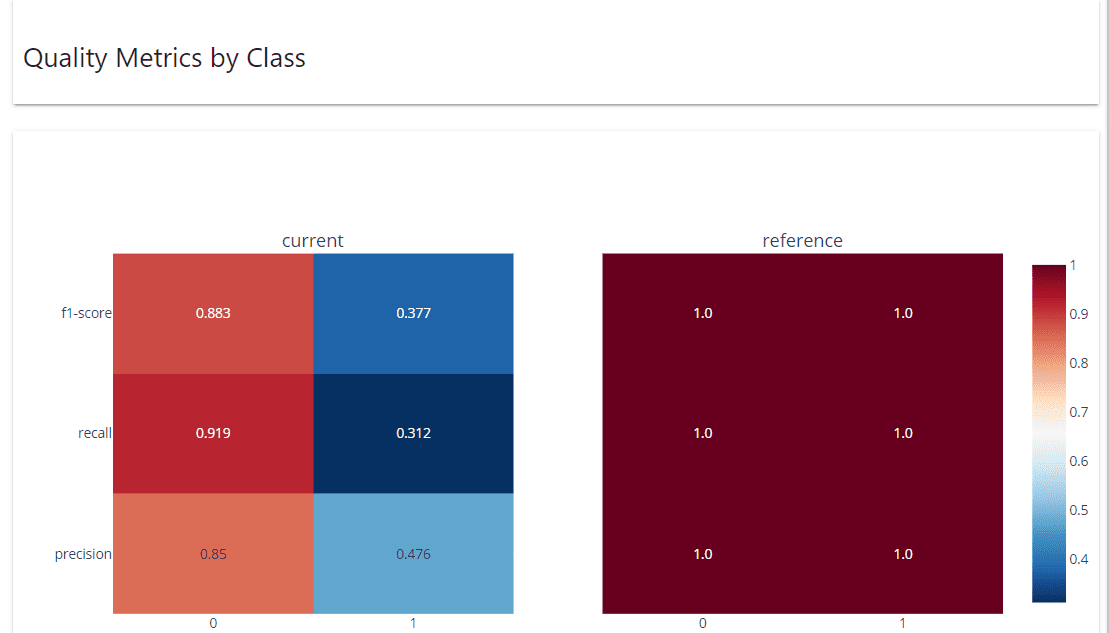

There are many more things to monitor with MLOps, but this article will focus on monitoring model performance. In our case, model performance refers to the model’s ability to make reliable predictions from unseen data, measured by specific metrics such as accuracy, precision, and recall.

Why should you monitor model performance? Maintaining confidence in model predictions to solve business problems. Before going into production, we often calculate the model’s performance and its impact on KPIs. For example, if you want your model to continue to meet your business needs, your baseline is 70% accuracy, but anything less than that is unacceptable. So monitoring the performance ensures that the model always meets the business requirements.

Learn how model supervision works using Python. Let’s start by installing packages. There are many choices for model monitoring, but for this example we will use an open source package for monitoring called Obvious.

First you obviously need to install the package using the following code:

After installing the package, download the insurance claims data sample data from Kaggle. It also erases the data before using it further.

import pandas as pd

df = pd.read_csv("insurance_claims.csv")

# Sort the data based on the Incident Data

df = df.sort_values(by="incident_date").reset_index(drop=True)

# Variable Selection

df = df[

[

"incident_date",

"months_as_customer",

"age",

"policy_deductable",

"policy_annual_premium",

"umbrella_limit",

"insured_sex",

"insured_relationship",

"capital-gains",

"capital-loss",

"incident_type",

"collision_type",

"total_claim_amount",

"injury_claim",

"property_claim",

"vehicle_claim",

"incident_severity",

"fraud_reported",

]

]

# Data Cleaning and One-Hot Encoding

df = pd.get_dummies(

df,

columns=[

"insured_sex",

"insured_relationship",

"incident_type",

"collision_type",

"incident_severity",

],

drop_first=True,

)

df["fraud_reported"] = df["fraud_reported"].apply(lambda x: 1 if x == "Y" else 0)

df = df.rename(columns={"incident_date": "timestamp", "fraud_reported": "target"})

for i in df.select_dtypes("number").columns:

df[i] = df[i].apply(float)

data = df[df["timestamp"] < "2015-02-20"].copy()

val = df[df["timestamp"] >= "2015-02-20"].copy()The above code selects some columns for model training purpose and converts them to numeric representation to split the data for reference (data) and current data (val).

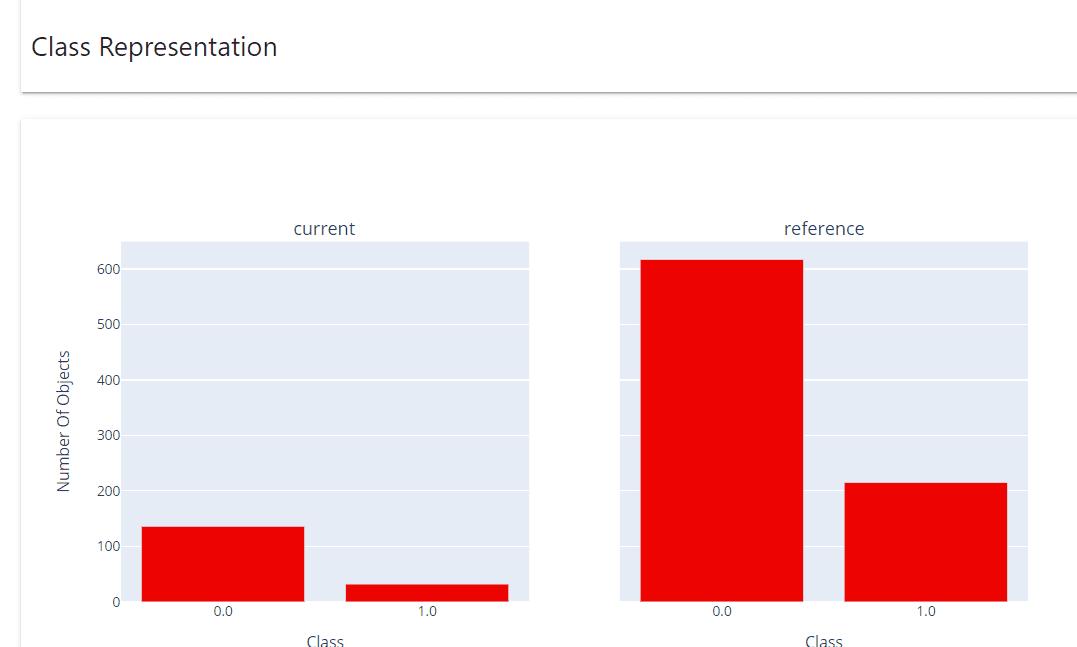

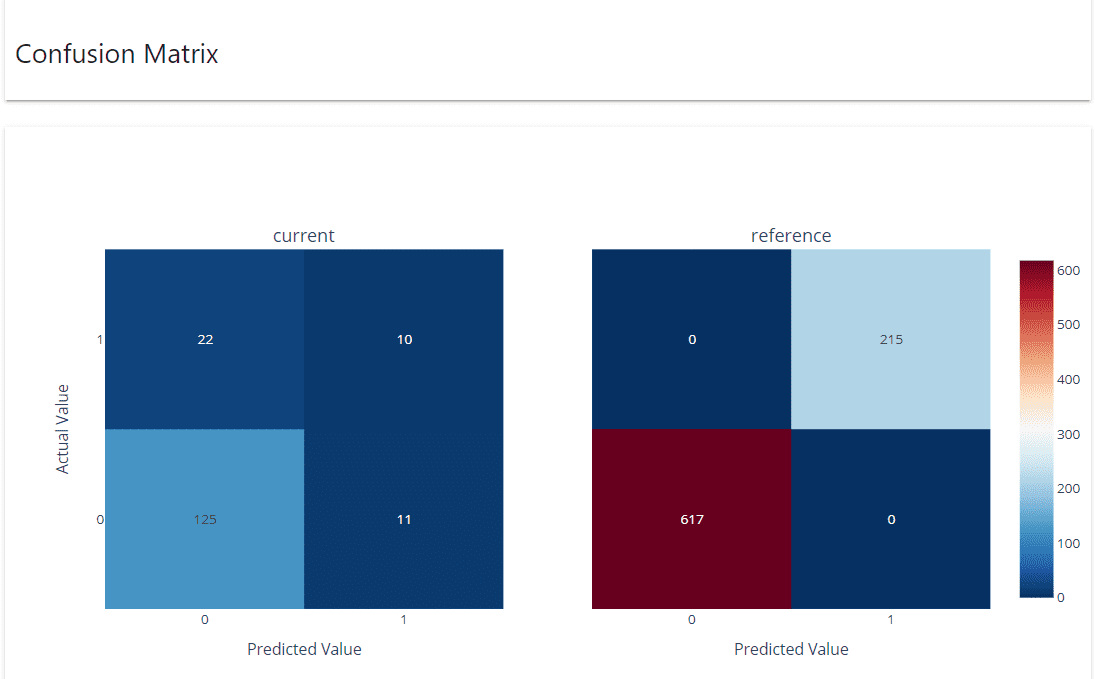

MLOps pipelines require reference or baseline data to monitor model performance. Usually data that has been separated from training data (such as test data). It also requires current data or data not visible to the model (incoming data).

Let’s obviously use it to monitor data and model performance. Data drift affects model performance and can be monitored.

from evidently.report import Report

from evidently.metric_preset import DataDriftPreset

data_drift_report = Report(metrics=[

DataDriftPreset(),

])

data_drift_report.run(current_data=val, reference_data=data, column_mapping=None)

data_drift_report.show(mode="inline")

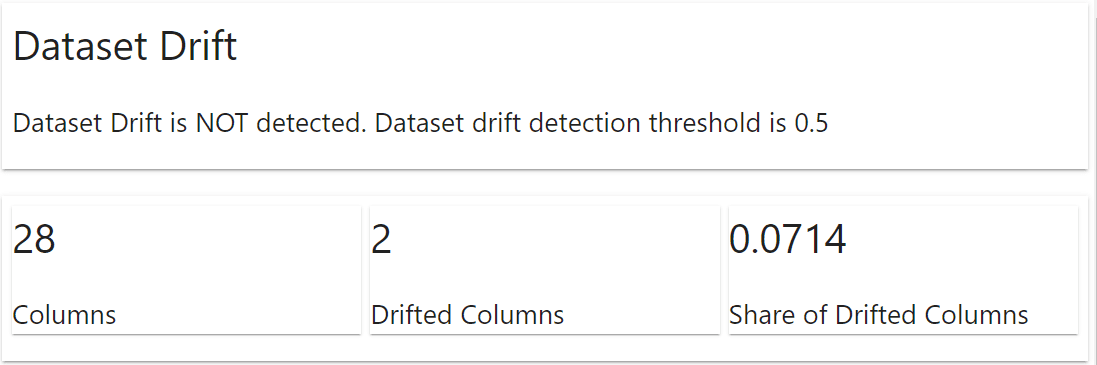

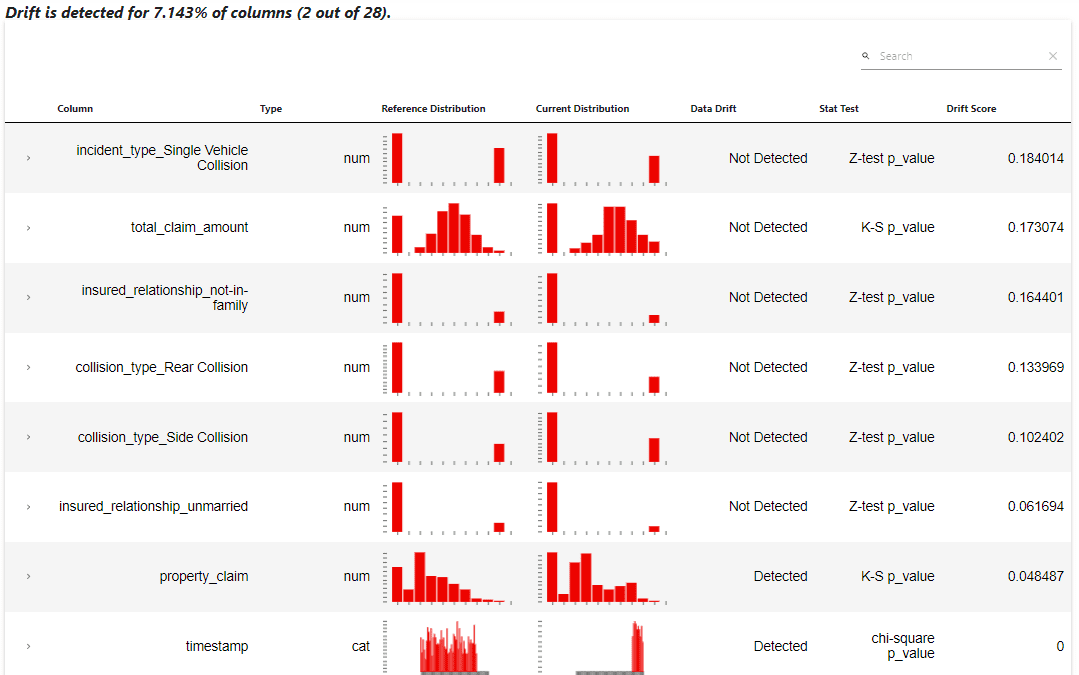

Apparently the package automatically displays a report about what happened to the dataset. This information includes dataset drift and column drift. In the example above, the dataset is not drifting, but two columns are drifting.

This report shows that the columns “property_claim” and “timestamp” indeed detected drift. This information should be used in the MLOps pipeline to retrain the model or explore the data further.

If you prefer, you can also retrieve the above data report in a log dictionary object.

data_drift_report.as_dict()Now let’s train a classifier model from our data and monitor the performance of the model using Obvious.

from sklearn.ensemble import RandomForestClassifier

rf = RandomForestClassifier()

rf.fit(data.drop(['target', 'timestamp'], axis = 1), data['target'])Obviously, we need both the target and prediction columns in the reference and current datasets. Add model predictions to your dataset and use them to monitor performance.

data['prediction'] = rf.predict(data.drop(['target', 'timestamp'], axis = 1))

val['prediction'] = rf.predict(val.drop(['target', 'timestamp'], axis = 1))As a caveat, it is recommended to have reference data, not training data from real cases, to monitor model performance. Let’s set up performance monitoring for our model using the following code: